ResAdapt: Adaptive Resolution for Efficient Multimodal Reasoning

Multimodal Large Language Models (MLLMs) achieve stronger visual understanding by scaling input fidelity, yet the resulting visual token growth makes jointly sustaining high spatial resolution and long temporal context prohibitive. We argue that the …

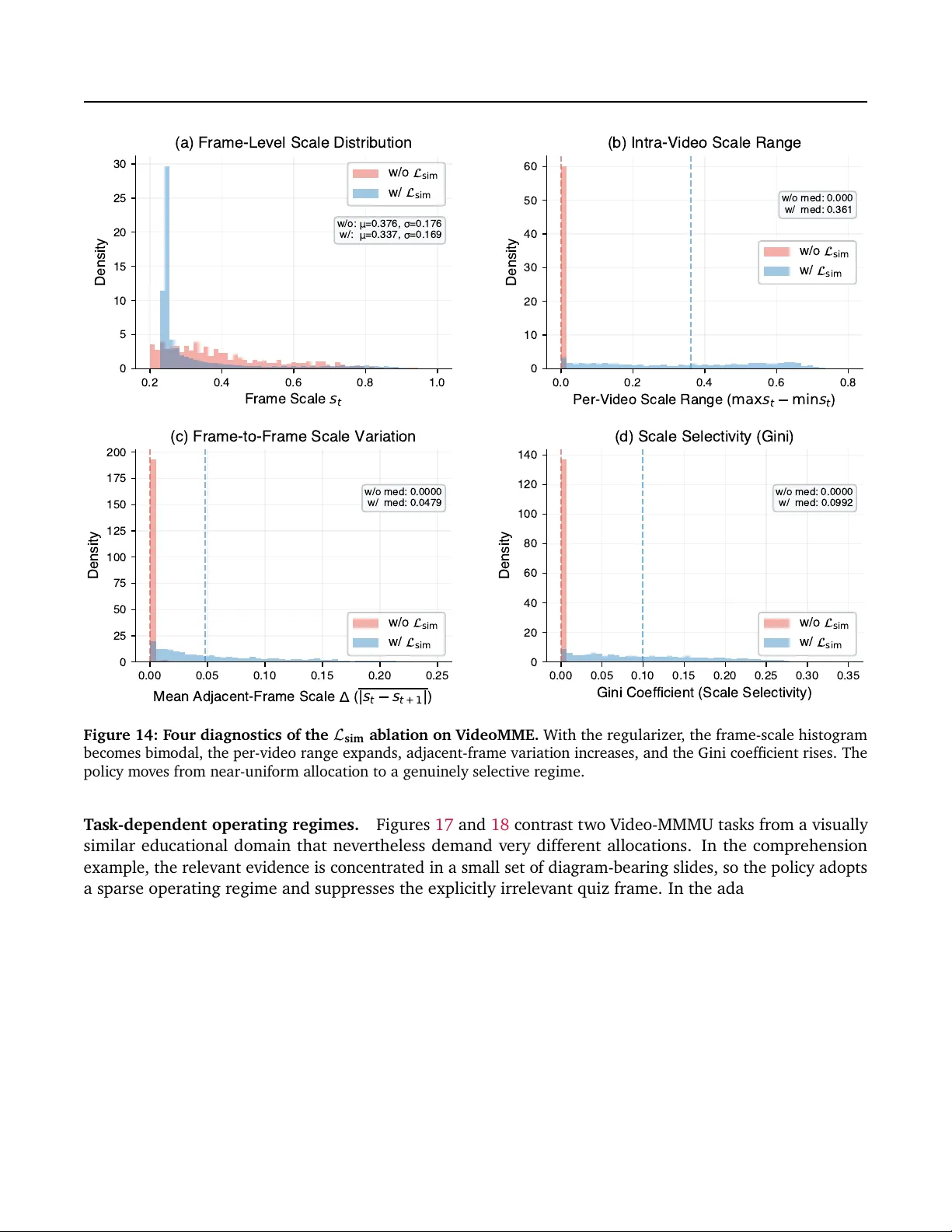

Authors: Huanxuan Liao, Zhongtao Jiang, Yupu Hao