Universal Approximation Constraints of Narrow ResNets: The Tunnel Effect

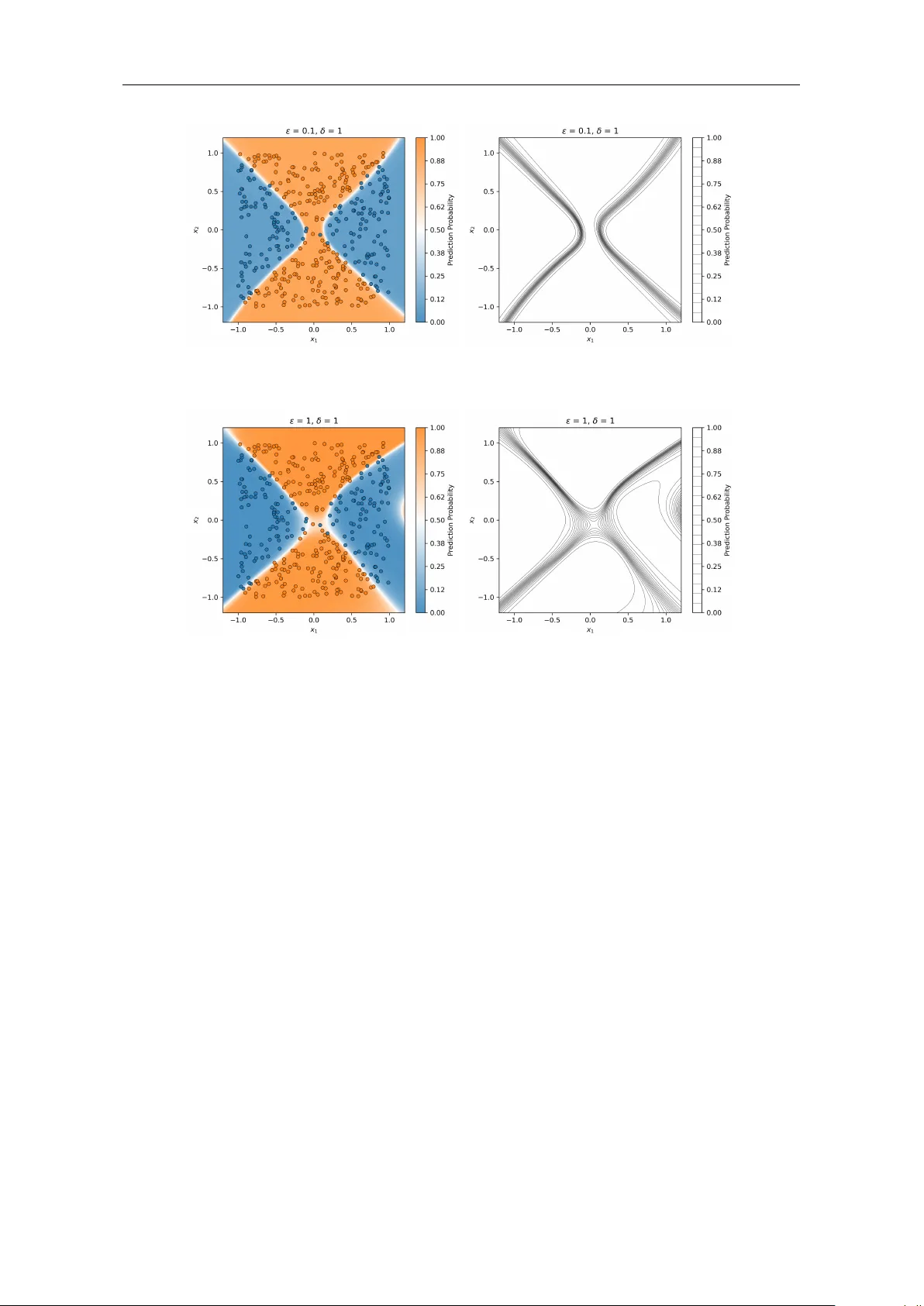

We analyze the universal approximation constraints of narrow Residual Neural Networks (ResNets) both theoretically and numerically. For deep neural networks without input space augmentation, a central constraint is the inability to represent critical…

Authors: Christian Kuehn, Sara-Viola Kuntz, Tobias Wöhrer
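The abstract's phrase "without input space augmentation" means that the hidden states of the network never leave the input dimension. The following is a minimal sketch of such a narrow ResNet forward pass, not the authors' model. The residual block form `x <- x + h * tanh(W x + b)`, the step size `h`, the `tanh` activation, and the function name `narrow_resnet_forward` are illustrative assumptions. The sketch only shows how every block maps R^n to R^n.

```python
import numpy as np

def narrow_resnet_forward(x, weights, biases, h=1.0):
    """Forward pass of a hypothetical narrow ResNet (illustrative assumption).

    Each residual block maps R^n -> R^n via x <- x + h * tanh(W x + b),
    so every hidden state keeps the input dimension n: there is no
    input space augmentation (no padding with extra dimensions).
    """
    for W, b in zip(weights, biases):
        # W is (n, n) and b is (n,), so the block preserves the width n
        x = x + h * np.tanh(W @ x + b)
    return x

# Usage: a depth-5 network on R^2 with random (untrained) parameters
rng = np.random.default_rng(0)
n, depth = 2, 5
weights = [rng.normal(size=(n, n)) for _ in range(depth)]
biases = [rng.normal(size=n) for _ in range(depth)]
x0 = np.array([0.5, -1.0])
y = narrow_resnet_forward(x0, weights, biases)
print(y.shape)  # (2,)
```

Widening the network (augmentation) would instead embed `x0` into a higher-dimensional space, for example by zero-padding, before applying the residual blocks. The sketch deliberately omits this.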