Learning from imperfect quantum data via unsupervised domain adaptation with classical shadows

Learning from quantum data using classical machine learning models has emerged as a promising paradigm toward realizing quantum advantages. Despite extensive analyses of the performance of such models, clean and fully labeled quantum data from the target domain a…

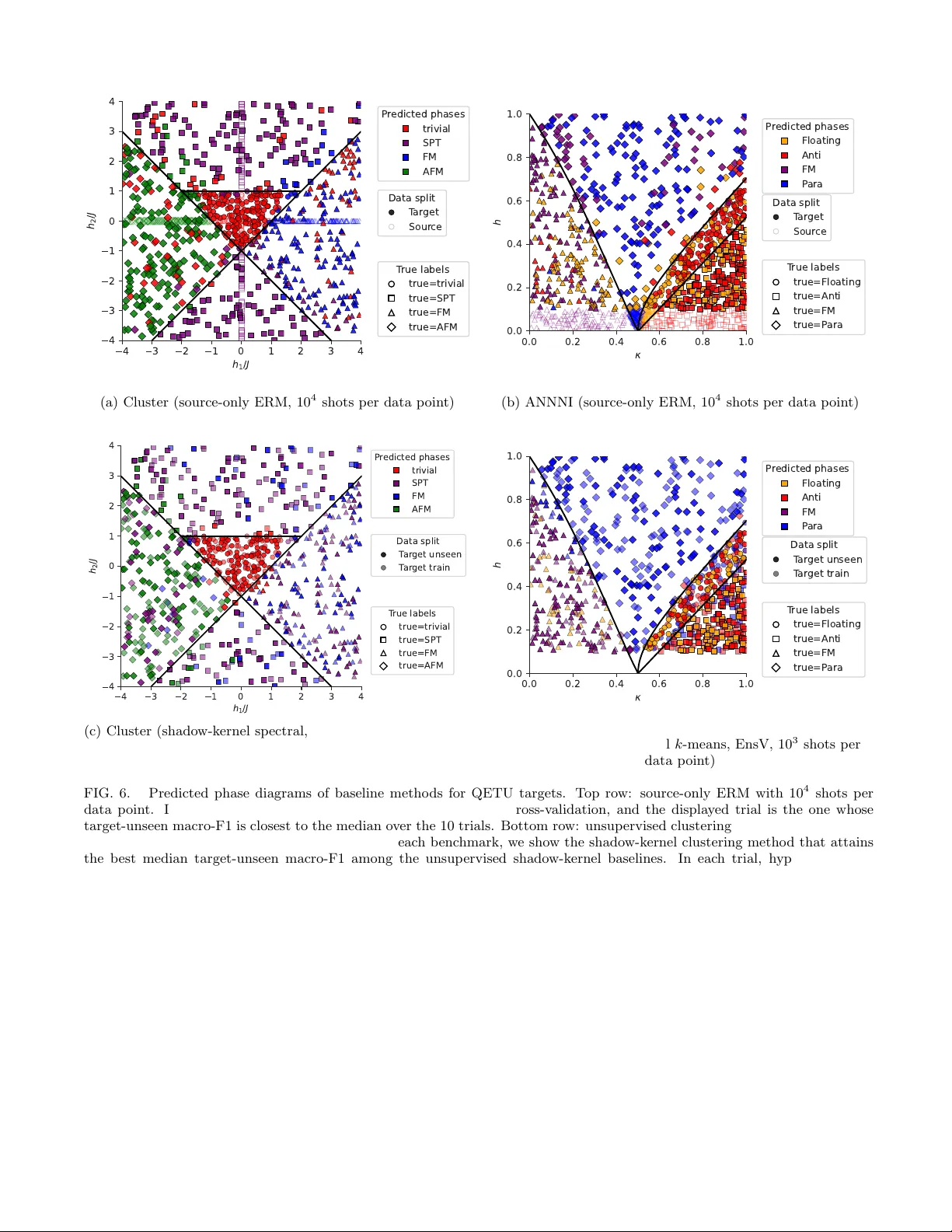

Authors: Kosuke Ito, Akira Tanji, Hiroshi Yano