DELTA: A DAG-aware Efficient OCS Logical Topology Optimization Framework for AIDCs

The rapid scaling of large language models (LLMs) exacerbates communication bottlenecks in AI data centers (AIDCs). To relieve these bottlenecks, optical circuit switches (OCS) are increasingly adopted for their superior bandwidth capacity and energy efficiency.…

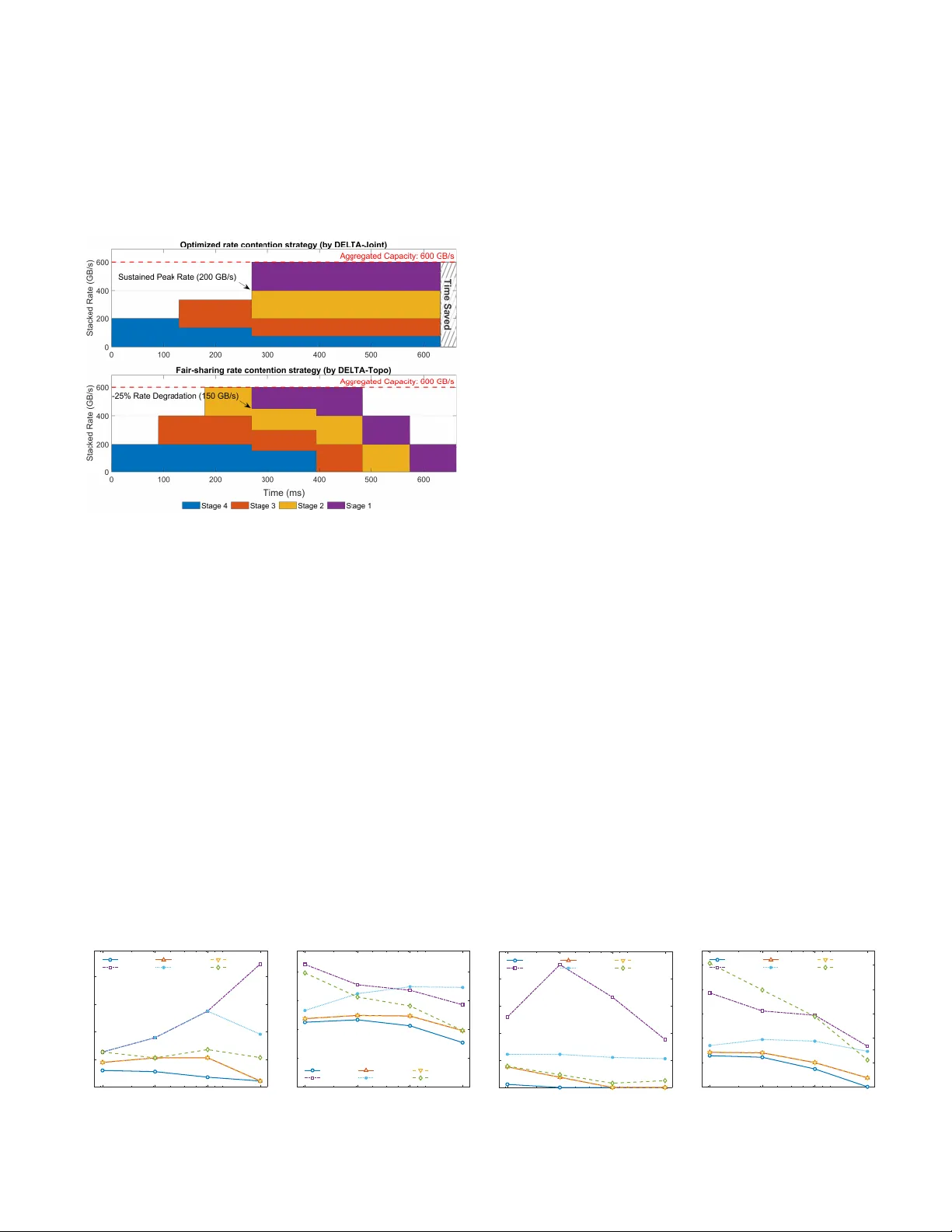

Authors: Niangen Ye, Jingya Liu, Weiqiang Sun