Meta-Harness: End-to-End Optimization of Model Harnesses

The performance of large language model (LLM) systems depends not only on model weights, but also on their harness: the code that determines what information to store, retrieve, and present to the model. Yet harnesses are still designed largely by ha…

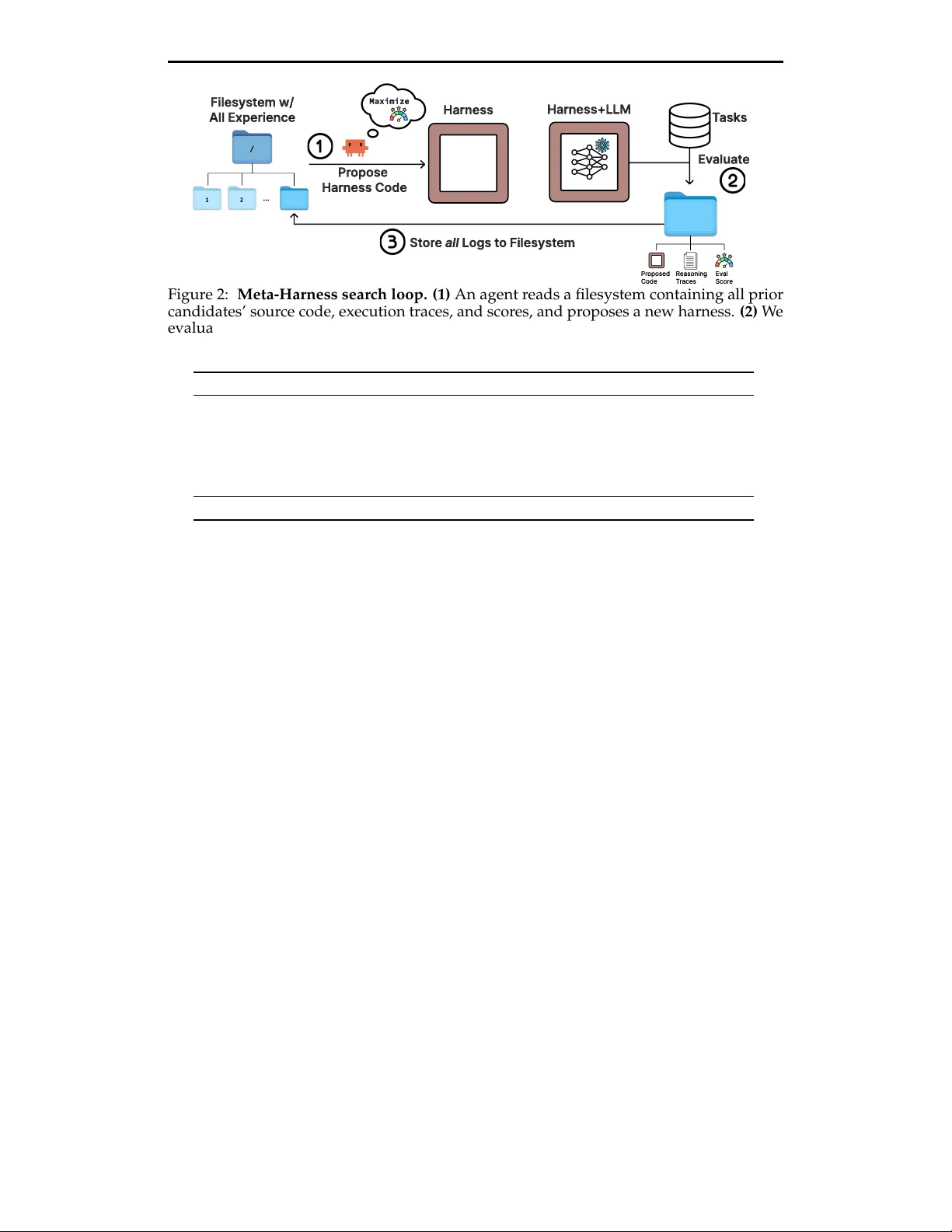

Authors: Yoonho Lee, Roshen Nair, Qizheng Zhang