Tightening CVaR Approximations via Scenario-Wise Scaling for Chance-Constrained Programming

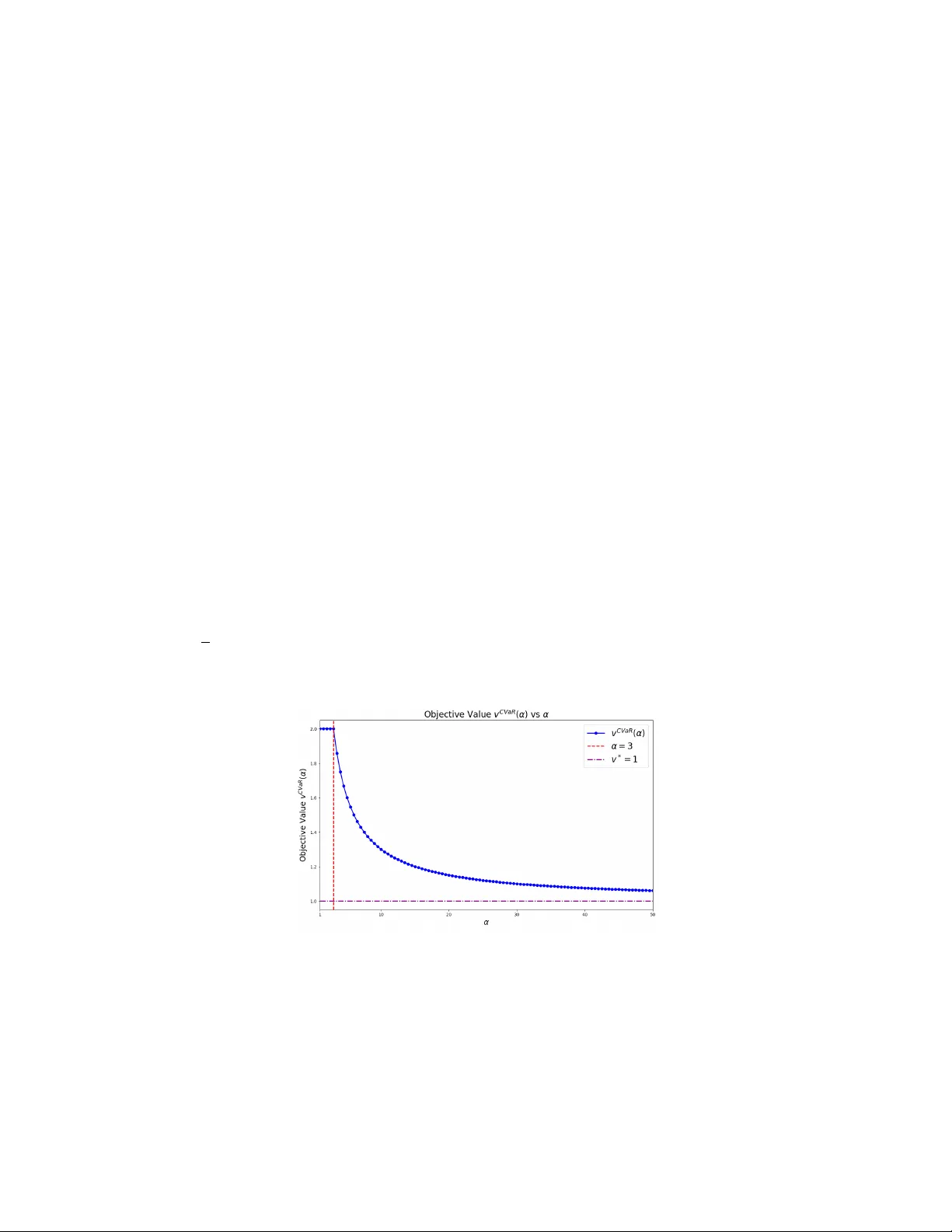

Chance-constrained programs (CCPs) provide a powerful modeling framework for decision-making under uncertainty, but their nonconvex feasible regions make them computationally challenging. A widely used convex inner approximation replaces chance const…
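As a minimal illustration of the standard CVaR inner approximation this abstract builds on, the sketch below evaluates an empirical CVaR with the Rockafellar-Uryasev formula and compares it with the empirical violation frequency. It is not the authors' scenario-wise scaling method. The names `empirical_cvar` and `violation_frequency`, the sample losses, and the risk level `eps = 0.2` are all hypothetical choices made for the example. A constraint is written as loss <= 0, so requiring the CVaR at level 1 - eps to be nonpositive is sufficient, but not necessary, for the chance constraint to hold.

```python
import numpy as np


def empirical_cvar(losses, eps):
    """Empirical CVaR at level 1 - eps, assuming equally likely samples and 0 < eps < 1.

    Uses the Rockafellar-Uryasev formula
        CVaR = min_t  t + (1 / eps) * mean(max(loss - t, 0)).
    The objective is convex and piecewise linear in t with breakpoints at the
    sample values, so it suffices to evaluate it at each sample.
    """
    z = np.asarray(losses, dtype=float)
    # Row i holds max(z_j - t_i, 0) for the candidate threshold t_i = z_i.
    excess = np.maximum(z[None, :] - z[:, None], 0.0)
    values = z + excess.mean(axis=1) / eps
    return float(values.min())


def violation_frequency(losses):
    """Fraction of samples whose loss is strictly positive (constraint violated)."""
    return float(np.mean(np.asarray(losses, dtype=float) > 0.0))


# Hypothetical sample losses g(x, xi_i) for one fixed decision x.
eps = 0.2
losses_a = [-4.0, -3.0, -2.0, -1.0, 1.0]
losses_b = [-4.0, -3.0, -2.0, -1.0, -0.5]

cvar_a = empirical_cvar(losses_a, eps)
cvar_b = empirical_cvar(losses_b, eps)
freq_a = violation_frequency(losses_a)
freq_b = violation_frequency(losses_b)
```

For `losses_a`, the violation frequency equals `eps`, so the empirical chance constraint holds, yet the empirical CVaR is positive and the CVaR constraint rejects the decision. This is the conservatism of the inner approximation. For `losses_b`, the CVaR is nonpositive and no sample violates the constraint.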

Authors: Rui Chen, Nan Jiang