Sommelier: Scalable Open Multi-turn Audio Pre-processing for Full-duplex Speech Language Models

As AI shifts from text-based LLMs to Speech Language Models (SLMs), demand is growing for full-duplex systems capable of real-time, natural human-computer interaction. However, the development of such models is constrained by …

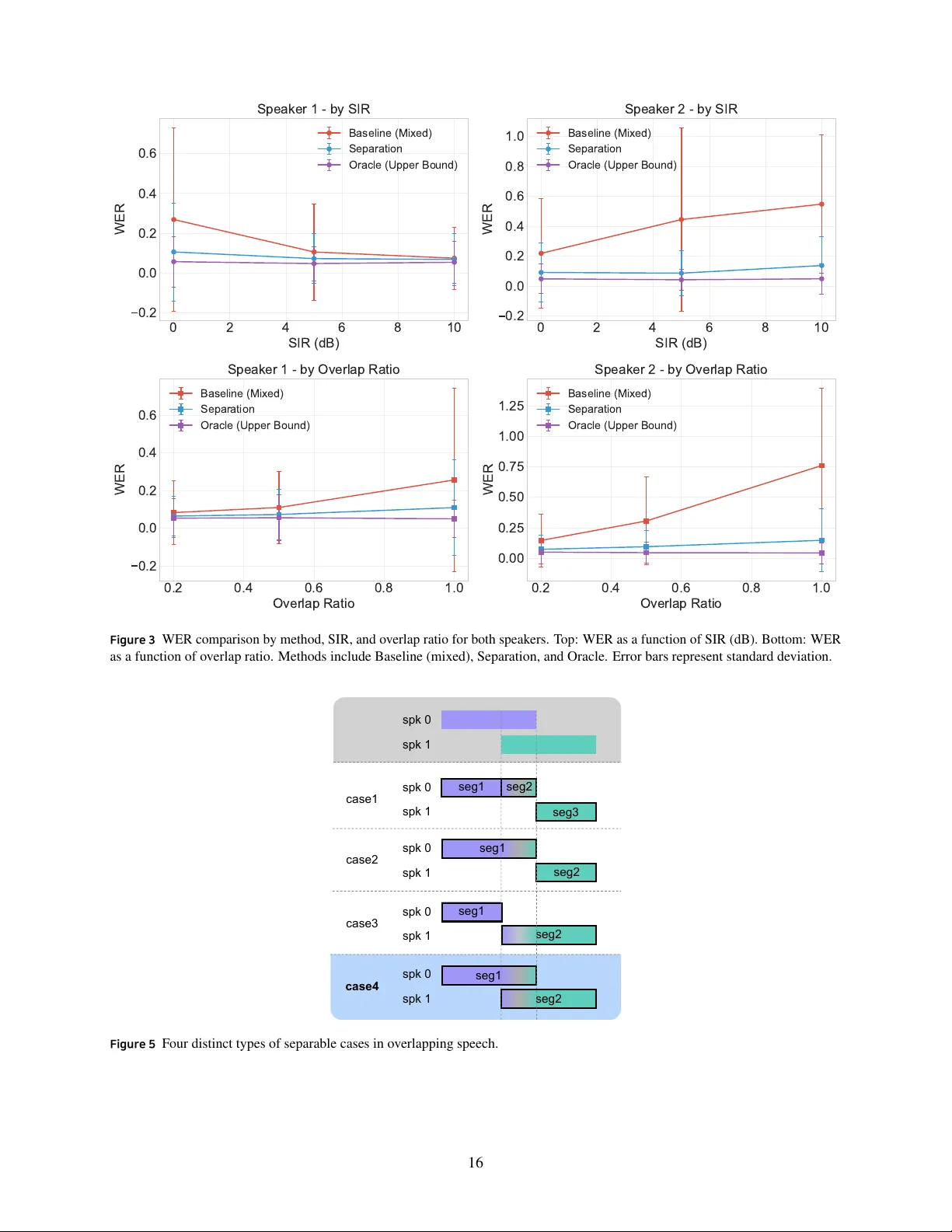

Authors: Kyudan Jung, Jihwan Kim, Soyoon Kim