SkillRouter: Skill Routing for LLM Agents at Scale

Reusable skills let LLM agents package task-specific procedures, tool affordances, and execution guidance into modular building blocks. As skill ecosystems grow to tens of thousands of entries, exposing every skill at inference time becomes infeasibl…

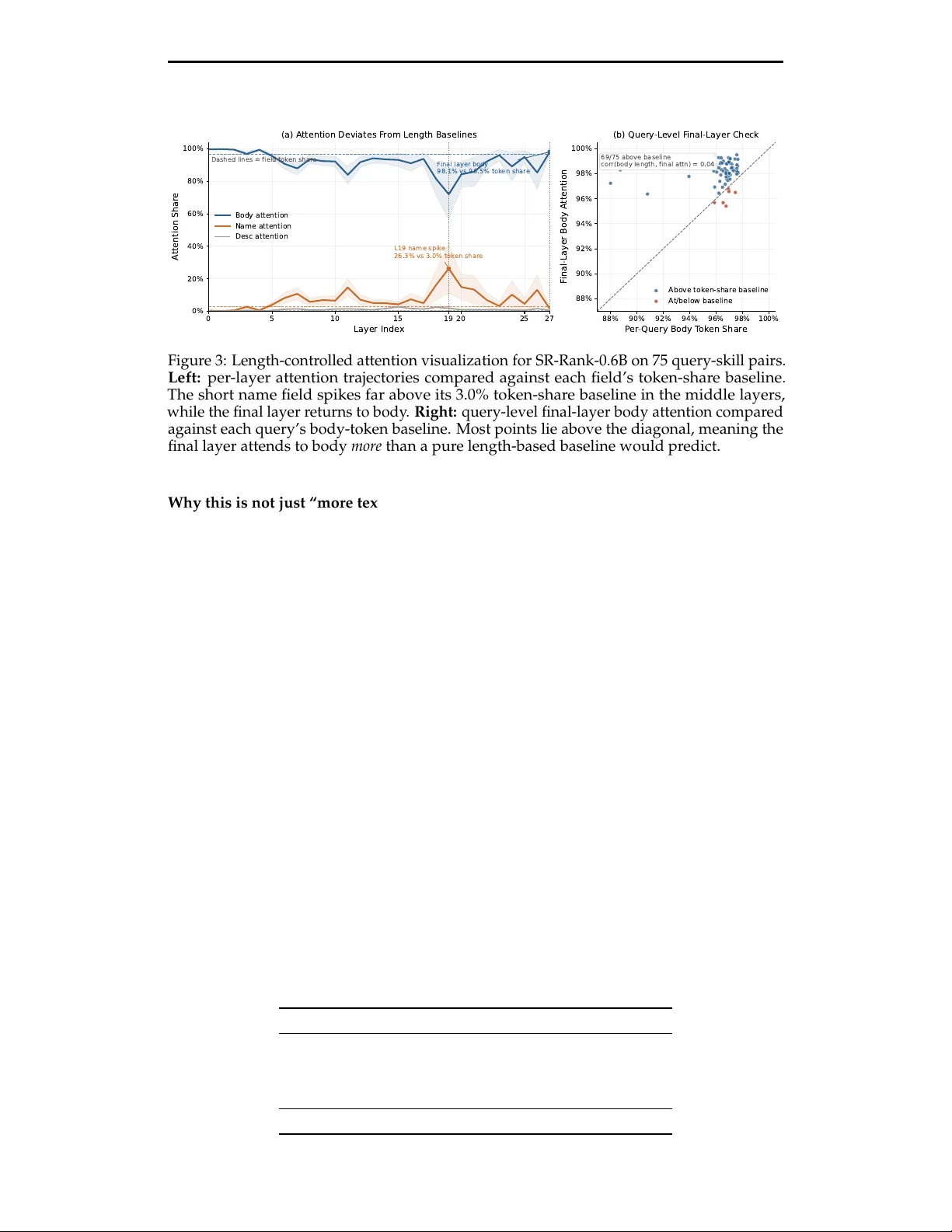

Authors: YanZhao Zheng, ZhenTao Zhang, Chao Ma