Scaling Attention via Feature Sparsity

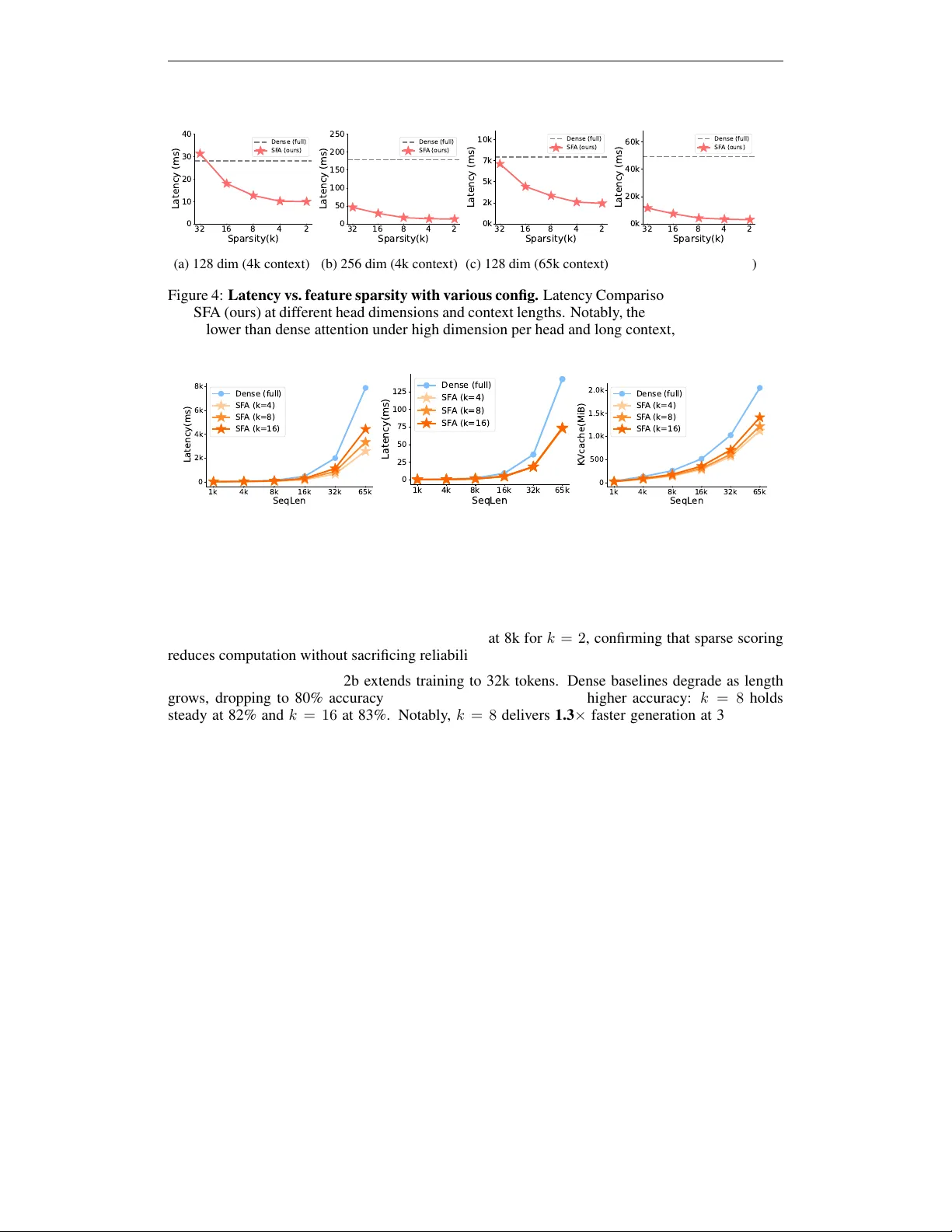

Scaling Transformers to ultra-long contexts is bottlenecked by the $O(n^2 d)$ cost of self-attention. Existing methods reduce this cost along the sequence axis through local windows, kernel approximations, or token-level sparsity, but these approache…

Authors: Yan Xie, Tiansheng Wen, Tangda Huang
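
To make the $O(n^2 d)$ figure quoted in the abstract concrete, here is a minimal NumPy sketch of ordinary dense self-attention for a single head, with no batching or masking. It illustrates the baseline cost only and is not the authors' method. The function names `dense_attention` and `dense_attention_macs` are hypothetical and chosen for this example.

```python
import numpy as np

def dense_attention(q, k, v):
    """Plain softmax attention for one head; q, k, v have shape (n, d)."""
    n, d = q.shape
    # Score matrix is (n, n); forming it takes n * n * d multiply-adds.
    scores = q @ k.T / np.sqrt(d)
    # Subtract the row-wise max before exponentiating for numerical stability.
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    # Weighted sum of values is (n, d); another n * n * d multiply-adds.
    return weights @ v

def dense_attention_macs(n, d):
    """Multiply-add count of the two matrix products above (softmax excluded)."""
    return 2 * n * n * d

# Doubling the sequence length quadruples the matrix-product cost.
ratio = dense_attention_macs(2048, 64) / dense_attention_macs(1024, 64)
print(ratio)
```

Under these assumptions the count grows quadratically in the sequence length `n` and only linearly in the feature dimension `d`, which is why long contexts dominate the cost.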