RG-TTA: Regime-Guided Meta-Control for Test-Time Adaptation in Streaming Time Series

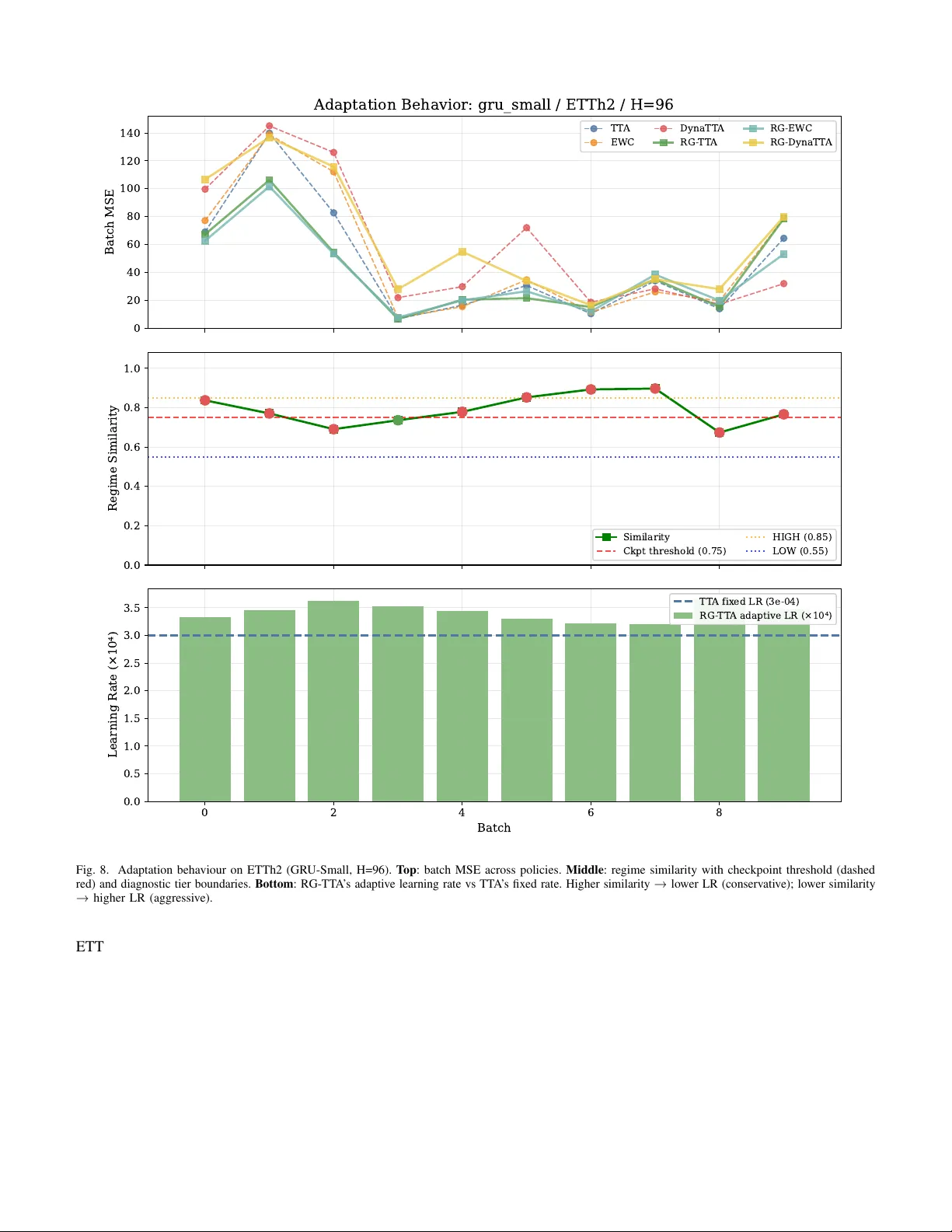

Test-time adaptation (TTA) enables neural forecasters to adapt to distribution shifts in streaming time series, but existing methods apply the same adaptation intensity regardless of the nature of the shift. We propose Regime-Guided Test-Time Adaptat…

Authors: Indar Kumar, Akanksha Tiwari, Sai Krishna Jasti