UNIFERENCE: A Discrete Event Simulation Framework for Developing Distributed AI Models

Developing and evaluating distributed inference algorithms remains difficult due to the lack of standardized tools for modeling heterogeneous devices and networks. Existing studies often rely on ad-hoc testbeds or proprietary infrastructure, making r…

Authors: Doğaç Eldenk, Stephen Xia

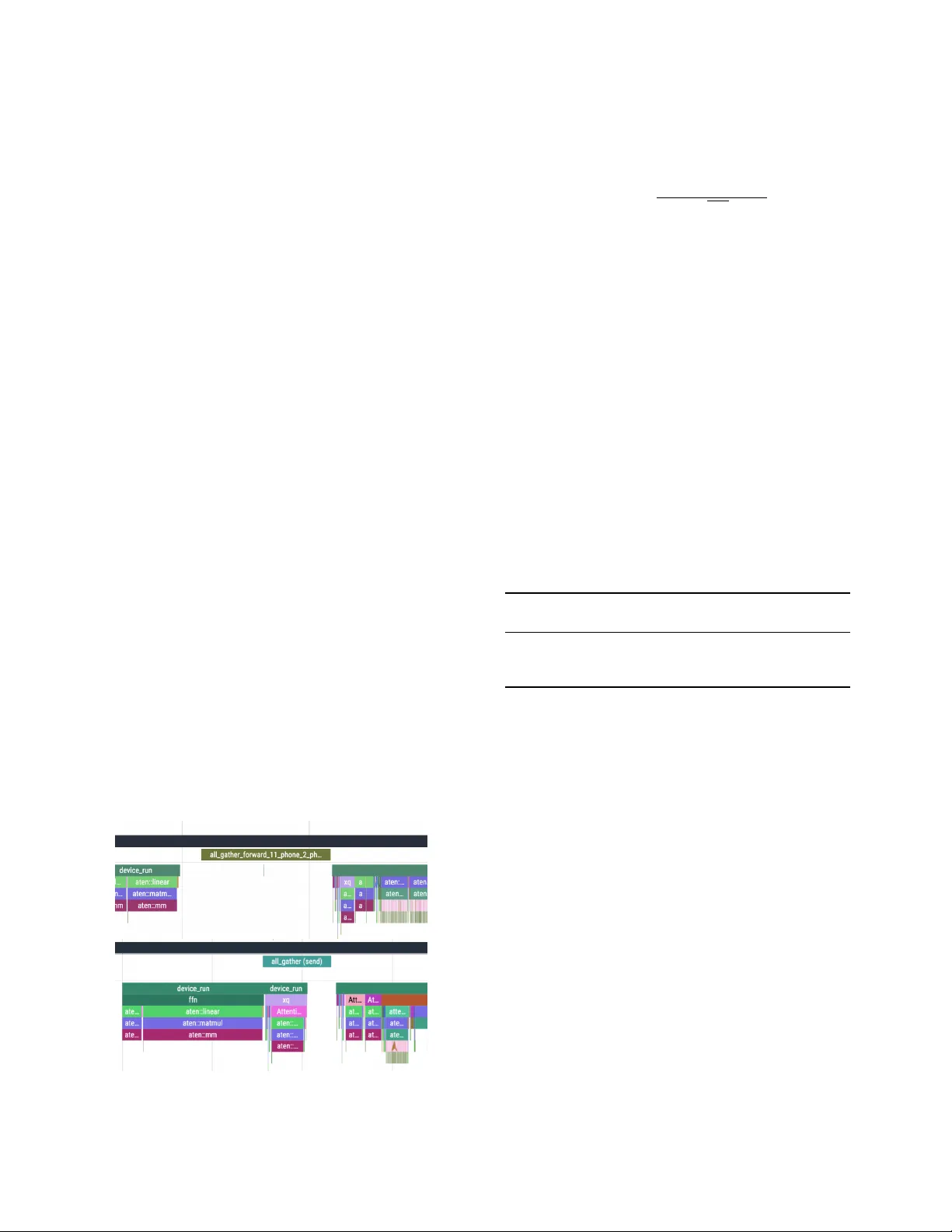

UNIFERENCE: A Discrete Ev ent Simulation Frame w ork for De v eloping Distrib uted AI Models Do ˘ gac ¸ Eldenk Department of Computer Science Northwestern University Evanston, United States dogac@u.northwestern.edu Stephen Xia Department of Computer Engineering Northwestern University Evanston, United States stephen.xia@northwestern.edu Abstract —Developing and evaluating distributed inference al- gorithms remains difficult due to the lack of standardized tools for modeling heterogeneous devices and networks. Existing stud- ies often rely on ad-hoc testbeds or proprietary infrastructure, making results hard to repr oduce and limiting exploration of hypothetical hardwar e or network configurations. W e present Uniference , a discrete-e vent simulation (DES) framework de- signed for dev eloping, benchmarking, and deploying distributed AI models within a unified en vironment. Uniference models device and network behavior through lightweight logical pro- cesses that synchronize only on communication primitives, elim- inating rollbacks while preserving the causal order . It integrates seamlessly with PyT orch Distributed, enabling the same codebase to transition from simulation to real deployment. Our evaluation demonstrates that Uniference profiles runtime with up to 98.6% accuracy compared to real physical deployments across diverse backends and hardwar e setups. By bridging simulation and deployment, Uniference pro vides an accessible, repro- ducible platform for studying distributed inference algorithms and exploring future system designs, from high-performance clusters to edge-scale devices. The framework is open-sourced at https://github.com/Dogacel/Unifer ence. Index T erms —Distributed Inference, Large Language Models, Edge Computing, Model Parallelism, Discrete-Event Simulation I . I N T R O D U C T I O N Recent progress in machine learning has shown that model accuracy continues to improv e with scale: larger datasets, parameters, and compute resources consistently yield better performance. Howe ver , this scaling law has quickly outpaced hardware capabilities. Modern large language models contain tens of billions of parameters, too large to fit onto a single GPU ev en in high-end data centers. At the same time, many real- world deplo yments operate under the opposite constraint. The y rely on heterogeneous and resource-limited hardw are [1], rang- ing from older GPUs to embedded and mobile devices. The resulting landscape spans edge to cloud, where computation is fragmented across devices with vastly different compute, memory , and network characteristics. Ef ficiently serving these models across different environments has therefore become a key systems challenge [2]–[7]. While distributed training has received extensi ve attention [8], distributed inference presents a distinct and relativ ely under-e xplored problem. Training is an offline, throughput- oriented process that can tolerate high latency and relies on asynchronous, uniform hardware. On the other hand, infer- ence is online and latency-critical: it must respond in real time to user queries or sensor input [9]. Moreov er , inference workloads often run on different hardware, including high- performance or consumer-grade GPUs, laptops, smartphones, IoT devices, and robots based on connectivity , pri vac y and cost constraints. In these en vironments, distributing inference across nearby de vices or combining edge and cloud resources greatly improves accuracy and efficienc y by utilizing idle or more powerful compute resources [10]. Numerous techniques have been proposed to partition mod- els and distribute inference. T ensor (TP), pipeline (PP), and expert-parallel (EP) techniques are some of them [11]. Dev el- opment of such algorithms are complex, and their ev aluation remains fragmented and hard to reproduce. Most studies test algorithms on proprietary or limited hardware, using ad-hoc datasets and network setups based on their access to resources [12]–[15]. Replicating such experiments is often infeasible. Realistic testing requires access to many types of devices, careful control of bandwidth and latency , and extensiv e en- vironment configuration. These barriers make development and experimentation expensi ve thus reducing transparency in distributed inference research. Even when the hardware is av ailable, the lack of standardized benchmarks prev ents fair comparison, and exploring hypothetical conditions (e.g., future 6G networks) is impossible without physical access. As a re- sult, researchers lack a unified, controllable, and reproducible way to study how distributed inference algorithms behave across div erse devices and network conditions. Sev eral discrete-ev ent simulation (DES) framew orks exist that allow users to perform fine-grained network-lev el mod- eling and simulation, such as OMNeT++ and ns-3. Howe ver , most neural network development and research are conducted in Python, while these tools are primarily implemented in C++, making integration challenging. Even existing Python-based tools such as SimPy lack native support for neural network inference, requiring dev elopers to build custom abstractions and workarounds to adapt existing architectures. In this paper , we propose Uniference , short for unified inference—a discrete-event simulation (DES) framework for dev eloping distrib uted AI models that synchronizes only on network primitiv es (e.g., send, recv , all-reduce). This de- sign eliminates rollback overhead and enables accurate per- Framework Use Case Network Sim Integration Complexity Custom Algorithms DistSim Analyze training time and device activities in dis- tributed DNN training Profiled N/A N/A SimAI Simulator for AI large-scale training for analyzing performance data Advanced High Not Supported EdgeAISim Simulating and modelling AI models in edge com- puting environments Simple Medium Not Supported V idur LLM inference simulator for deployment conf. Profiled Medium Partial Support HERMES Event-dri ven, multi-stage inference pipeline simula- tion and optimization Simple Low Partial Support simPy Discrete-ev ent simulation (ns.py) Medium Supported OMNET++ Discrete-ev ent and network simulation Advanced High (C++) Supported sim4DistrDL DES for distributed training and network sim Advanced Medium Limited Support Uniference DES for distrib uted algorithm development, benchmarking and deployment Profiled Low Supported T ABLE I C O MPA R IS O N O F E XI S T I NG TO O L S . formance modeling across devices and networks through a unified simulation and deployment API. Uniference is implemented in Python and supports deployment via Py- T orch Distributed, allowing seamless integration with existing models. It treats distributed inference as a first-class citizen, providing abstractions and tools that make benchmarking and profiling more accessible without the need to manually con- figure complex device arrays or fine-tune network parameters. Moreov er , Uniference ensures that communication is free of race conditions and deadlocks, providing deterministic and reproducible execution across distributed en vironments. Our contributions are as follows: 1) W e propose Uniference , a DES framework for de- veloping distributed AI architectures. Uniference pro- vides tools that enable developers to simulate, emulate, deploy , and distribute their own de veloped models across various compute platforms and network conditions. 2) Le veraging Uniference , we implement and ev alu- ate the most popular transformer based parallelization schemes, TP , PP , TP-PP Hybrid and V oltage [16], and demonstrate that our framework can simulate within 98.6% accuracy , compared to real deployments on v ary- ing hardware platforms and network conditions. 3) Through a case study , we show how profiling tools in Uniference guided the discov ery , ev aluation, and deployment of Kilovolts , an optimization that overlaps computation and communication in the V oltage paral- lelization scheme, leading to an inference speedup of up to 16%. I I . B AC K G RO U N D A N D M OT I V A T I O N A. Distribution of AI Models As AI models grow larger , running them on multiple de vices has become the de facto standard on high-performance settings [17]. Recently released models can’t fit on a single GPU as they require more than hundreds of GBs of memory . Moreov er , many mobile, IoT , and edge scenarios uses hardware with e ven less compute. Therefore consumers are forced to choose from smaller models and sacrifice performance. There has been v ar- ious techniques such as Quantization [18] or Pruning [19] [20] that try to perform ef ficiently under limited compute. Howe ver they work under the assumption that on-device compute is fixed. W e belie ve it is possible to increase compute by utilizing nearby devices by distributing the model or workload across devices, which would democratize access to more powerful models. B. Simulation of Distributed Algorithms Most common tools for simulating distributed systems and machine learning workflo ws has been listed in T able I. First, simulations of client and server load include V idur [21], EdgeAISim [22], SimAI [23], DistSim [24] focus on balancing the load coming to the servers, tuning parameters such as batching policy , model parallelism strategy , hardware and model type. One short-coming of such simulators is that they are not running real models underneath but rather predict runtime and behavior based on pre-collected metrics, such as memory usage of different KV -cache sizes and models. Those models come in handy when researchers use them to optimize their compute clusters that serve inference requests to users, howe ver they only support a given preset of models and strategies supported by the simulator . Second, performance predictiv e models [25] usually work well in single-device deployments. They utilize kernel-le vel mappings and optimizations, execution graphs, hardware specs and memory layout to predict time, memory , and power of LLM runtime on varying platforms. Howe ver , predicting these metrics in a multi-de vice setting is dif ficult due to the difficulty in modeling communication. Third, pipeline optimizers such as DistSim or HERMES [2], focus on optimizing the training of AI models by modeling them as pipelines. They focus on batch sizes, GPU utilization, backward passes, filling pipeline bubbles [26], pre-fill step or RA G retriev als. Howe ver they do not provide an easy API for users to dev elop their custom algorithms. C. Discr ete Event Simulation Discrete ev ent simulation, common in network simulators, models the system as a set of states and state transformations based on internal clocks. Conservati ve approaches simulate the next time step only when all causalities are resolved and it is safe to do so. In optimistic simulation, individual systems can move their clock forward and roll-back if some interaction happens. But for neural networks, rollbacks hav e high computation cost. Currently , DES is used in deep learning frameworks for simulating and optimizing training workloads such as sim4DistrDL [27] or inference workloads such as HERMES. DES has shown to be useful for accurate training simulation without access to real resources. Discrete ev ent network simulators, such as OMNET++, can model computer communication costs with great precision, but do not provide an easy way to integrate existing AI models. This is for two reasons. First, most AI models are de veloped and prototyped in python, while network simulators are often written in C/C++. Second, many of these simulators require dev elopers to extend their interfaces, which is difficult for integrating existing models. D. Motivation Existing tools are proven to be useful in different settings such as single-device runtime prediction or training pipeline optimizations. Howe ver , they do not capture the complexity of modeling and ev aluating distributed algorithms. Also, existing DES lack an easy way to integrate with AI models. Looking back, existing work on distributed algorithms lack common benchmarks or straightforward ways to replicate their studies. Also static analysis falls short when predicting runtime of distributed algorithms, most notably due to two factors. Batching Efficiency: Analytical models assume linear pro- cessing. They fail to capture the GPU’ s sub-linear scaling (tiling effects), leading to massive overestimation of latency at high loads. V ariable Payloads: Analytical models typically assume fixed network payloads. They fail to capture varying transmission times caused by dynamic tensor shapes. There- fore a DES based simulator is needed for accurate prediction and high adaptability . Our goal is to provide a unified interface and benchmark for dev elopment, simulation, ev aluation and deployment of distributed AI models that is calibrated and tested against real world deployments. I I I . D E S I G N A. Simulation Engine Uniference runs a discrete-event simulation engine where devices and networks are modeled as logical processes with independently running local clocks. Each process syn- chronizes either at network ev ents or programmer-defined yield points. Uniference executes real code instead of simulation, it captures the underlying kernel-lev el operations and ex ecution graphs as close to the original execution. Synchronization at interaction: Interaction ev ents are de- fined as networked operations, such as sending a message, receiving a message, running all-gather , all-reduce or broadcast operations. Such interactions schedule a network operation at the engine lev el. Deadlock detection: The engine runs the simulation while preserving the causal order of e vents based on network dependencies as shown in Figure 1, which detects deadlocks. The causal order also ensures that the simulator Network World Device 1 Device 2 Attention MLP All Gather Attention MLP All Gather Transfer All Reduce All Reduce Transfer Device 1 Attention Device 2 Attention Network Device 2 MLP Device 1 MLP Network Simulation Time Real Time Fig. 1. Discrete Event Simulation Overvie w doesn’t need to rollback ev ents and can accurately simulate the interactions between devices. B. Simulation of Networked Operations The simulation engine models distributed communication operations at a granular lev el, tracking how bandwidth is dynamically allocated across devices as operations start and finish. Since distributed algorithms depend on network latency and bandwidth, users can either provide these parameters directly or use the built-in profiler to measure them from the actual network. W e evaluate the accuracy of both profiling and simulation in subsection IV -B. C. Simulation Modes and Deployment The simulation engine has two modes. 1) Host Emulation: The emulation runs on a host device. Each simulated device runs the AI model on a separate logical process (a light-weight thread). 2) Deployment: The application can be directly deployed to run or benchmark real-life performance on a specific hardware without any code changes. The unified API ensures there are no discrepancy between simulation runs and deployment, ensuring the algorithmic correctness. D. Pr ofiling Devices for Simulation Uniference enables profiling device runtime, kernel launches and network events real-time and exporting the simulated timeline using chrome-traces format. Uniference also inte grates directly with the PyT orch profiler to collect lo w- lev el traces. Heterogeneity: T o estimate the runtime of a de vice, users can either specify a slowdo wn factor or directly run the simu- lation on the target device type for more accurate results. The slowdo wn factor allows users to approximate the performance of hardware they do not have access to at scale. Furthermore, users can assign unique slo wdo wn factors to specific kernel s or code regions, enabling the emulation of heterogeneous systems with varying scaling characteristics I V . R E S U LT S A N D D I S C U S S I O N Uniference supports a bring your own ar chitectur e de- velopment model. For ev aluating our engine’ s accuracy , we hav e integrated a text-generation transformer model LLama3 and a multi-modal vision model CLIP-V iT [28]. A. Simulation Overhead In this section, we ev aluate the impact Uniference ’ s ov erhead on its ability to accurately profile inference time. The main source of overhead is thread switching, which occurs in all discrete ev ent simulators when the engine stops and T ABLE II U N I F E R E N C E ’ S S U PP O RT E D D I ST R I BU T E D C O M MU N I C A T I O N O P E RAT IO N S Operation Hops T otal Bandwidth Description broadcast N − 1 ( N − 1) × M One node sends data to all other nodes in the network all_gather N − 1 N × ( N − 1) × M Each node shares its data with all other nodes all_reduce 2( N − 1) 2( N − 1) × M Performs reduction operation (sum, max, etc.) across all nodes send 1 M Point-to-point transfer from one node to a specific destination recv 1 M Point-to-point receive from a specific source node Note: N = number of nodes, M = message size. All gather and reduce are ring-based algorithms and use send / recv underneath. resumes dif ferent processes. Next, we describe our setup to ev aluate the impacts of thread switching. Setup. Our experiments were run on Jetson Orin Nano and an HPC cluster , 80GB NVIDIA A100 SXM4 with 128GB RAM allocation and 16 vCPUs of Intel(R) Xeon(R) Gold 6338 CPU @ 2.00GHz with the model LLama 3.2-1B and 3B. For LLMs, the auto-regressiv e nature of text-generation creates complexities in predicting normalized run-times. First of all, as the generated text is auto-regressi ve, the generation task gets more computationally expensiv e ov er time. Secondly , if the initial prompt is too long, it causes a huge imbalance in computation over time, as kv-caching causes next token gener- ation to be orders of magnitudes faster than the initial pre-fill. Therefore experiments have been designed with different text size and max next token counts to ensure the simulator is tested under different conditions. In order to get stable results and ensure the model doesn’t stop generating tokens, the LLM is asked to count numbers without stopping. W e have altered the amount of numbers in the prompt. Results. Latin Hypercube Sampling of text-length between 0 and 1000, and thread switch (yield) probability between 0 and 1 was used to get maximum coverage for our auto- regressi ve performance benchmark. The same experiment was conducted with 1-device, 2-device and 4-device simulations. The effect of device count, number of yields and sequence length on the model runtime was compared using ordinary least squares (OLS) regression. Results in T able III for the autoregressi ve task show number of yields or simulated de- vices were statistically significant ( p < 0 . 01 ), howe ver doing permutation importance (PI) and partial R 2 analysis shows that their ov erhead is practically insignificant. The same experiment was repeated for the prefill task. This time, sequence length varied between 0 and 2000. The same LHS simulation and OLS regression was run again. This time, a quadratic relationship between time and prompt length was found. Parameters yield and device count also did not hav e practically significant effect on the runtime. T ABLE III M A NY T OK E N P R E DI C T I ON E X PE R I M EN T RE G R E SS I O N R E S ULT S coef std err PI R 2 Const 0.054 0.000 - 0.779 Max tokens 12.19 0.001 24.91 0.325 Y ield count 0.191 0.000 0.000 0.999 Device count 0.000 0.000 0.000 0.999 T ABLE IV M O DE L P ER F O RM A N C E C O MPA R IS O N ( R 2 A N D M A PE ) . Hardwar e Backend R 2 MAPE HPC NCCL 0.9704 0.1783 HPC Gloo 0.9999 0.0248 Jetson Orin Gloo 1.0000 0.0091 T ABLE V E S TI M A T I ON AC C U RA CY O F R E A L T I ME S P EN T O N N E TW O R K Hardwar e Backend R 2 MAPE HPC NCCL 0.78-0.90 0.2065-0.3626 HPC Gloo 0.9860 0.0536 B. Network Simulation Accuracy W e have deployed Uniference in a real setting to compare time spent on network to the simulation to ensure accuracy of runtime predictions. Three experiments were con- ducted on, 8-node HPC cluster (A100 GPU, InfiniBand), 8- node HPC cluster (A100 GPU, 1 Gbps Ethernet), 4× Jetson Orin Nano DevKits (1 Gbps Ethernet). Infiniband connection used the NCCL backend for syn- chronizing data between distrib uted processes, whereas others used Gloo. Each experiment has started on 2 devices and went up to 8 devices (4 for Jetson). On each hardware, two types of experiment was run. The first experiment is designed to accurately model the network parameters, latency and bandwidth. The second experiment was used for ev aluating our simulation results in a real inference setting. 1) All-gather of a tensor with sizes 1B to 200MB. 2) Running TP among varying text size and tokens. For the first experiment, we ran 35 different package sizes with 5 repeats each. The network’ s perceived latency and bandwidth was found by fitting a simple curve with two parameters using Ridge Re gr ession . The latency relationship is modeled as in T able II. T able IV shows that the network latency trends were re- produced with near-perfect accuracy ( R 2 ≈ 1 ), achieving an av erage error of ±2% under high-speed and ±17% under ultra- high speed networks. In the second experiment, we ex ecuted the TP algorithm, measured the total duration of data transfers and compared these empirical results against the engine’ s predicted per- formance. Because production HPC clusters utilize shared network and hardware resources, achieving perfectly con- sistent results is challenging due to contention and noise. Despite these en vironmental v ariables, our simulation accuracy remains high, as summarized in T able V. C. Advanced Scenarios T o show advantage of DES , we also ev aluated Uniference with PP and Poisson arriv al of requests that are continuously batched. W e selected the Poisson distribution as it is the standard proxy for realistic production traffic, where stochastic inter-arri v al times create burstiness that forces the system into dynamic batching sizes. Our results sho w < 10% av erage error on predicting av erage delay , whereas static analysis fails to scale. In contrast, the analytical baseline (M/D/1) suffered > 100% error . Furthermore, Uniference maintains high accuracy across non-stochastic workloads, yielding 2% error for PP and 5% error for a hybrid TP+PP parallelization scheme. These results show the engine can accurately simulate tricky synchronization and communication patterns that simple theoretical models miss. V . C A S E S T U DY : D E V E L O P I N G A C O M M U N I C A T I O N A W A R E P O S I T I O N - W I S E P A RT I T I O N I N G S C H E M E Uniference was b uilt to study improving efficienc y of various parallelization schemes. Therefore in order to ev alu- ate efficienc y of the simulator, we would like to share our optimization of the V oltag e algorithm [16], which we call Kilovolts , using computation communication ov erlap and ho w we validated it using our simulator . V oltage is a distributed inference system designed for edge devices that reduces bandwidth consumption by up to 4× compared to conv entional tensor parallelism. It achieves this reduction through a position-wise layer partitioning strategy . In each transformer block, the output of the previous layer x is distributed across all devices. Each device computes its own partition of the transformer layer and then performs an all-gather operation. The aggregated result corresponds to the output of current transformer block, which is subsequently passed as input to the next layer . Fig. 2. Uniference Trace T ool showing V oltage [16] (top) and the improved Kilovolts algorithm (bottom) with the communication overlap on xq . Case Study . Our simulator didn’t require any major changes on the neural networks unlike the other python simulators that depend on generators. Therefore LLama3 was directly forked and integrated into the simulator with a thin wrapper . The V oltage algorithm implements the attention as follows: A p ( x ) = softmax ( x p W Q )( xW K ) T √ F H ( xW V ) Using the Uniference trace tool (Figure 2), we saw that the computation of x p W Q runs after the all gather . Howe ver calculating x p W Q doesn’t need to wait for the all-gather , as x p is already av ailable from the previous layer without the all gather operation. All gather is only required for collecting remaining partitions to fully assemble x . The time gained from this overlap can be calculated as max (0 , T transf er − T xW q ) . Next, we study how transfer and calculation speed changes between dif ferent input shapes and network conditions using our simulator, which showed a potential speed-up between 1% to 10%. For comparison, we deployed V oltage and Kilov olts on four Jetson Orins and varied the network bandwidth and latency . W e observe speedups of up to 5% with CPU (T able VI) and up to 16.1% with GPU execution (T able VII), which matches closely with simulations. Deep-profiling rev ealed that fluctuations in measurements are caused by internal queueing and buf fering of asynchronous collective operations. T ABLE VI S P EE D U P O F Kilovolts O N O R I N C P U Input Len Bw . Lat. Time Speedup (Mbps) (ms) (s) (%) 8 100 10 1.729 [ − 8 . 07% , 2 . 13%] 32 1000 1 1.563 [ − 11 . 81% , 4 . 94%] 256 100 10 12.22 [1 . 24% , 3 . 48%] Deployment Experience. Our deployment experience across varying netw ork conditions highlights the need for a simulator, like Uniference , that allo ws dev elopers and researchers to easily ev aluate system performance under di verse network conditions. While varying network parameters, we found that the Linux kernel provided with the Jetson boards did not include the htb , tbf , or netem modules. As a result, de velopers were required to rebuild the kernel and reflash all four devices to enable traffic control. Using middlew are solutions such as toxiproxy was also infeasible, since the Gloo backend relies on ephemeral ports and does not allo w specifying fixed target ports for communication—an open issue in the Gloo repository that has remained unresolved for ov er seven years. W e also experimented with redirecting a port range to toxiproxy using N A T table rules, but this approach was incompatible with Gloo’ s multiplex ed connections. The only viable solution to control network bandwidth was to employ an OpenWR T router and use netem and tbf for traffic shaping. V I . L I M I TA T I O N S A N D F U T U R E W O R K The simulator was originally dev eloped to support our research on studying, replicating, and discov ering new dis- T ABLE VII S P EE D U P O F O R I N O N C U DA Input Len Bw . Lat. Time Speedup (Mbps) (ms) (s) (%) 269 100 1 1.253 [ − 3 . 11% , 3 . 15%] 269 100 10 1.710 [ − 1 . 24% , 4 . 91%] 269 1000 1 0.428 [ − 7 . 43% , 4 . 34%] 1002 100 1 3.890 [ − 5 . 60% , 4 . 99%] 1002 100 10 4.015 [ − 2 . 66% , 7 . 59%] 1002 1000 1 1.090 [ − 19 . 1% , 16 . 1%] tributed inference algorithms. Consequently , several engineer- ing decisions were made to suit our specific needs, such as focusing primarily on transformer-based models and im- plementing a custom network model rather than adopting a sophisticated simulator like ns-3. Nonetheless, our framework supports the integration of div erse model architectures and network backends within the DES en vironment. W e hope this flexibility will enable researchers to bring their own models and tools according to their experimental requirements. Currently , the tool runs on the host device to simulate distributed ex ecution. If the host device has limited memory , it may not be feasible to run the full model locally . In such cases, users can either share weights across simulated devices or ex- ecute the simulation on higher-end hardware while applying a slowdo wn factor to approximate the performance of the target device. Ho wev er a static slowdo wn factor might not capture performance characteristics of different devices. W e are also dev eloping a remote tar get mode, in which the DES engine can attach to e xternal de vices o ver the network. Because the engine makes no assumptions about the underlying hardware, this mode will enable real-time performance characterization on actual remote devices, eliminating the need to run lightweight threads on the host. V I I . C O N C L U S I O N W e presented Uniference , a discrete e vent simulator for de veloping distrib uted AI algorithms. While the cur- rent frame work provides a robust foundation, future research should focus on improving low-le vel runtime approximations across heterogeneous devices and overcoming host memory constraints. In addition, analyzing behavior under high-speed network configurations could lead to more accurate models for HPC environments. Ultimately , Uniference can serve as a reliable and scalable platform for developing, deploying and benchmarking distributed algorithms and for reproducing both prior and emerging studies, helping bridge the gap between theoretical and practical performance limits. R E F E R E N C E S [1] C. Hu and B. Li, “Distributed inference with deep learning models across heterogeneous edge de vices, ” in INFOCOM . IEEE, 2022, pp. 330–339. [2] A. R. Bambhaniya et al. , “Understanding and optimizing multi-stage AI inference pipelines, ” CoRR , vol. abs/2504.09775, 2025. [Online]. A vailable: https://doi.org/10.48550/arXiv .2504.09775 [3] K. Alizadeh, I. Mirzadeh, D. Belenko et al. , “LLM in a flash: Efficient large language model inference with limited memory , ” in ACL (1) . Association for Computational Linguistics, 2024, pp. 12 562–12 584. [Online]. A vailable: https://doi.org/10.18653/v1/2024.acl- long.678 [4] Y . Zheng, Y . Chen, B. Qian et al. , “ A review on edge large language models: Design, execution, and applications, ” ACM Comput. Surv . , vol. 57, no. 8, pp. 209:1–209:35, 2025. [Online]. A vailable: https://doi.org/10.1145/3719664 [5] S. S. Gill, M. Golec, J. Hu et al. , “Edge AI: A taxonomy , systematic revie w and future directions, ” Clust. Comput. , vol. 28, no. 16, 2025. [Online]. A vailable: https://doi.org/10.1007/s10586- 024- 04686- y [6] O. Friha et al. , “Llm-based edge intelligence: A comprehensiv e survey on architectures, applications, security and trustworthiness, ” IEEE Open J. Commun. Soc. , 2024. [Online]. A vailable: https: //doi.org/10.1109/OJCOMS.2024.3456549 [7] Z. Li, et al. , “PRIMA.CPP: speeding up 70b-scale LLM inference on low-resource everyday home clusters, ” CoRR , 2025. [Online]. A vailable: https://doi.org/10.48550/arXiv .2504.08791 [8] M. Shoeybi, M. Patw ary , R. Puri et al. , “Megatron-lm: T raining multi-billion parameter language models using model parallelism, ” 2019. [Online]. A vailable: http://arxiv .org/abs/1909.08053 [9] F . Cai et al. , “Edge-llm: A collaborative framework for large language model serving in edge computing, ” in ICWS , 2024. [Online]. A vailable: https://doi.org/10.1109/ICWS62655.2024.00099 [10] P . Li, E. Koyuncu, and H. Seferoglu, “ Adaptiv e and resilient model-distributed inference in edge computing systems, ” IEEE Open J. Commun. Soc. , 2023. [Online]. A vailable: https://doi.org/10.1109/ OJCOMS.2023.3280174 [11] Y . Hu, C. Imes, X. Zhao, S. Kundu et al. , “Pipeline parallelism for inference on heterogeneous edge computing, ” CoRR , 2021. [Online]. A vailable: https://arxiv .org/abs/2110.14895 [12] S. Y e, J. Du, L. Zeng et al. , “Galaxy: A resource-efficient collaborati ve edge AI system for in-situ transformer inference, ” in INFOCOM , 2024. [Online]. A v ailable: https://doi.org/10.1109/INFOCOM52122. 2024.10621342 [13] Z. Li, W . Feng et al. , “TPI-LLM: serving 70b-scale llms efficiently on low-resource mobile devices, ” IEEE T rans. Serv . Comput. , 2025. [Online]. A vailable: https://doi.org/10.1109/TSC.2025.3596892 [14] M. A. Qazi, A. Iosifidis, and Q. Zhang, “PRISM: distributed inference for foundation models at edge, ” CoRR , vol. abs/2507.12145, 2025. [Online]. A vailable: https://doi.org/10.48550/arXi v .2507.12145 [15] A. Parthasarathy and B. Krishnamachari, “DEFER: distributed edge inference for deep neural networks, ” in COMSNETS . IEEE, 2022. [16] C. Hu and B. Li, “When the edge meets transformers: Distributed inference with transformer models, ” in ICDCS . IEEE, 2024, pp. 82–92. [Online]. A vailable: https://doi.org/10.1109/ICDCS60910.2024.00017 [17] Y . Zhong et al. , “Distserve: Disaggregating prefill and decoding for goodput-optimized large language model serving, ” in OSDI , 2024. [18] J. Lin, J. T ang, H. T ang et al. , “A WQ: activ ation-aw are weight quantization for on-device LLM compression and acceleration, ” 2024. [Online]. A vailable: https://arxi v .org/abs/2306.00978 [19] J. Liu, T . Liu, Y . Sui, and S. Xia, “Elastiformer: Learned redundancy reduction in transformer via self-distillation, ” CoRR , 2024. [Online]. A vailable: https://doi.org/10.48550/arXiv .2411.15281 [20] X. Liu et al. , “Flowspec: Continuous pipelined speculative decoding for efficient distributed LLM inference, ” CoRR , 2025. [Online]. A vailable: https://doi.org/10.48550/arXi v .2507.02620 [21] A. Agrawal et al. , “VIDUR: A large-scale simulation framework for LLM inference, ” in MLSys , 2024. [22] A. R. Nandhakumar et al. , “EdgeAISim: A toolkit for simulation and modelling of AI models in edge computing en vironments, ” CoRR , 2023. [Online]. A vailable: https://doi.org/10.48550/arXiv .2310.05605 [23] X. W ang et al. , “Simai: Unifying architecture design and performance tuning for large-scale large language model training with scalability and precision, ” in NSDI . USENIX Association, 2025, pp. 541–558. [24] G. Lu et al. , “Distsim: A performance model of large-scale hybrid distributed DNN training, ” in CF . ACM, 2023, pp. 112–122. [Online]. A vailable: https://doi.org/10.1145/3587135.3592200 [25] L. L. Zhang et al. , “nn-meter: towards accurate latency prediction of deep-learning model inference on div erse edge devices, ” in MobiSys . A CM, 2021. [26] D. Arfeen, Z. Zhang, X. Fu, G. R. Ganger, and Y . W ang, “Pipefill: Using gpus during bubbles in pipeline-parallel LLM training, ” in MLSys , 2025. [27] X. Liu, Z. Xu, Y . Qin, and J. Tian, “ A discrete-e vent-based simulator for distributed deep learning, ” in ISCC . IEEE, 2022, pp. 1–7. [Online]. A vailable: https://doi.org/10.1109/ISCC55528.2022.9912919 [28] A. Radford et al. , “Learning transferable visual models from natural language supervision, ” in ICML , 2021.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment