Estimation in moderately misspecified models

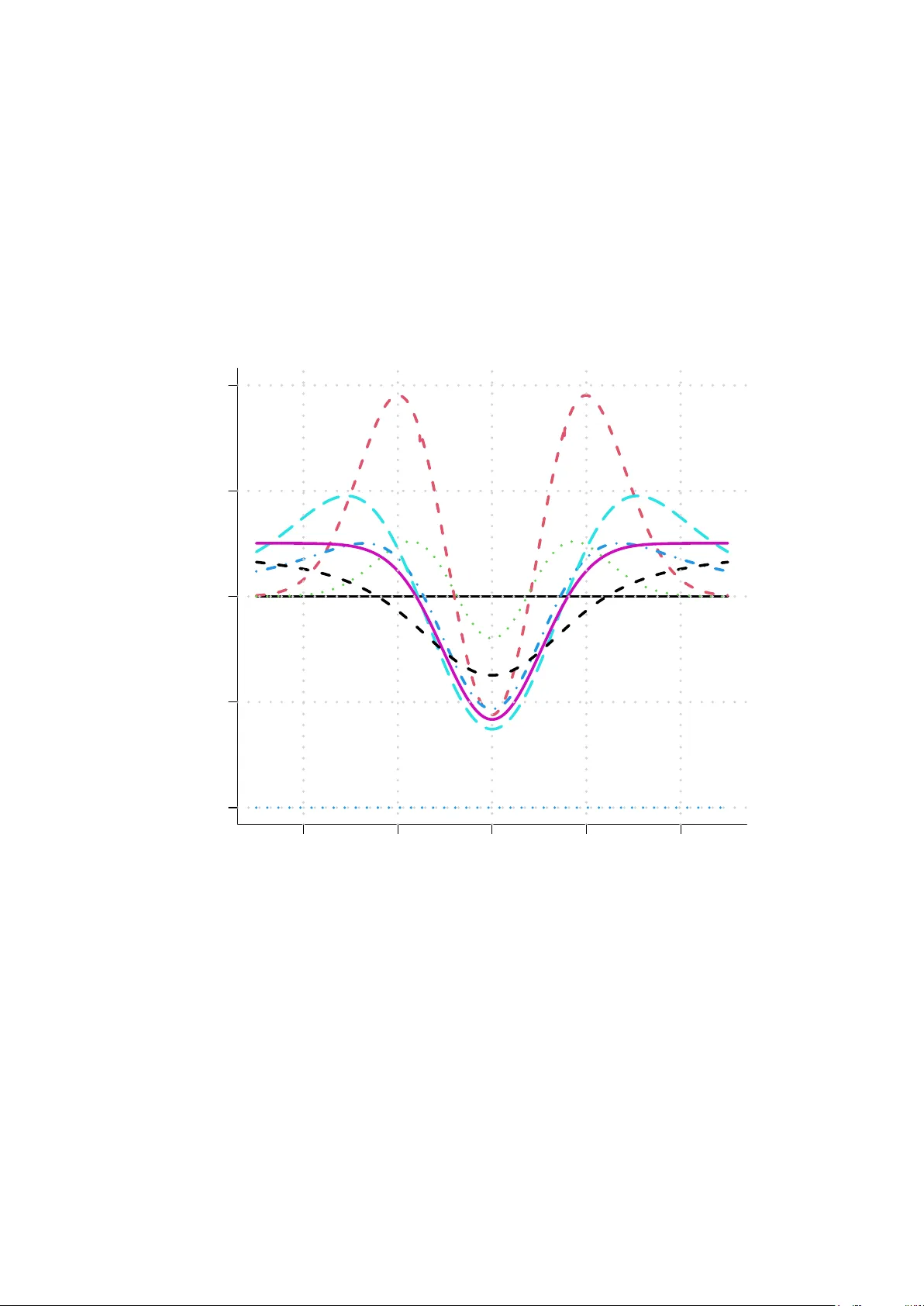

Suppose a parametric model is fitted to data, but the true model happens to be one with an additional parameter. When a parameter is to be estimated, one can use likelihood estimation in either the wider model or the narrow model. Including the ex…
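As a rough illustration of the two options, the sketch below assumes a toy setup that is not taken from the paper: the narrow model is N(mu, sigma0^2) with sigma0 fixed at 1, the wide model is N(mu, sigma^2) with sigma as the additional parameter, and the parameter of interest is the p-quantile mu + z_p * sigma. The function name `narrow_and_wide_quantile` and its arguments are hypothetical.

```python
import math
from statistics import NormalDist


def narrow_and_wide_quantile(data, p=0.9, sigma0=1.0):
    """Return (narrow, wide) maximum likelihood estimates of the p-quantile.

    Assumed toy setup (not from the paper):
      narrow model: N(mu, sigma0^2), with sigma0 known
      wide model:   N(mu, sigma^2), with sigma an extra free parameter
    """
    n = len(data)
    mean = sum(data) / n  # MLE of mu in both models
    z = NormalDist().inv_cdf(p)  # standard normal p-quantile

    # MLE of sigma in the wide model (divides by n, not n - 1).
    sigma_hat = math.sqrt(sum((x - mean) ** 2 for x in data) / n)

    narrow = mean + z * sigma0  # plugs in the fixed narrow-model value
    wide = mean + z * sigma_hat  # plugs in the estimated extra parameter
    return narrow, wide


if __name__ == "__main__":
    sample = [0.3, 1.9, -0.4, 1.1, 2.6, 0.8]  # made-up numbers for illustration
    narrow, wide = narrow_and_wide_quantile(sample)
    print(f"narrow-model estimate: {narrow:.3f}")
    print(f"wide-model estimate:   {wide:.3f}")
```

Both estimates share the same sample mean; they differ only in whether the extra parameter is held at its narrow-model value or estimated from the data.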

Authors: Nils Lid Hjort