On the Use of Bagging for Local Intrinsic Dimensionality Estimation

The theory of Local Intrinsic Dimensionality (LID) has become a valuable tool for characterizing local complexity within and across data manifolds, supporting a range of data mining and machine learning tasks. Accurate LID estimation requires samples…

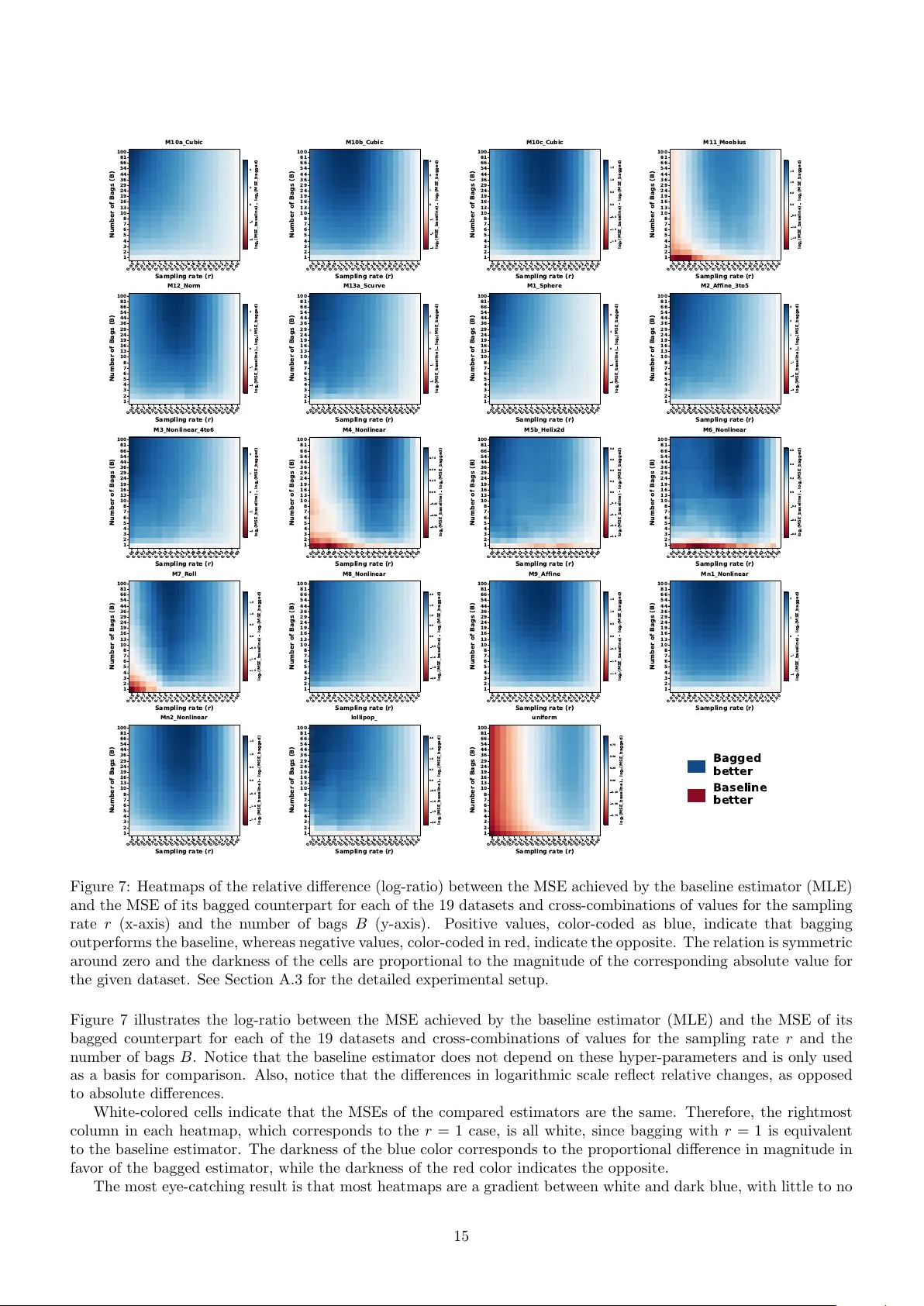

Authors: Kristóf Péter, Ricardo J. G. B. Campello, James Bailey