A Deep Dive into Scaling RL for Code Generation with Synthetic Data and Curricula

Reinforcement learning (RL) has emerged as a powerful paradigm for improving large language models beyond supervised fine-tuning, yet sustaining performance gains at scale remains an open challenge, as data diversity and structure, rather than volume…

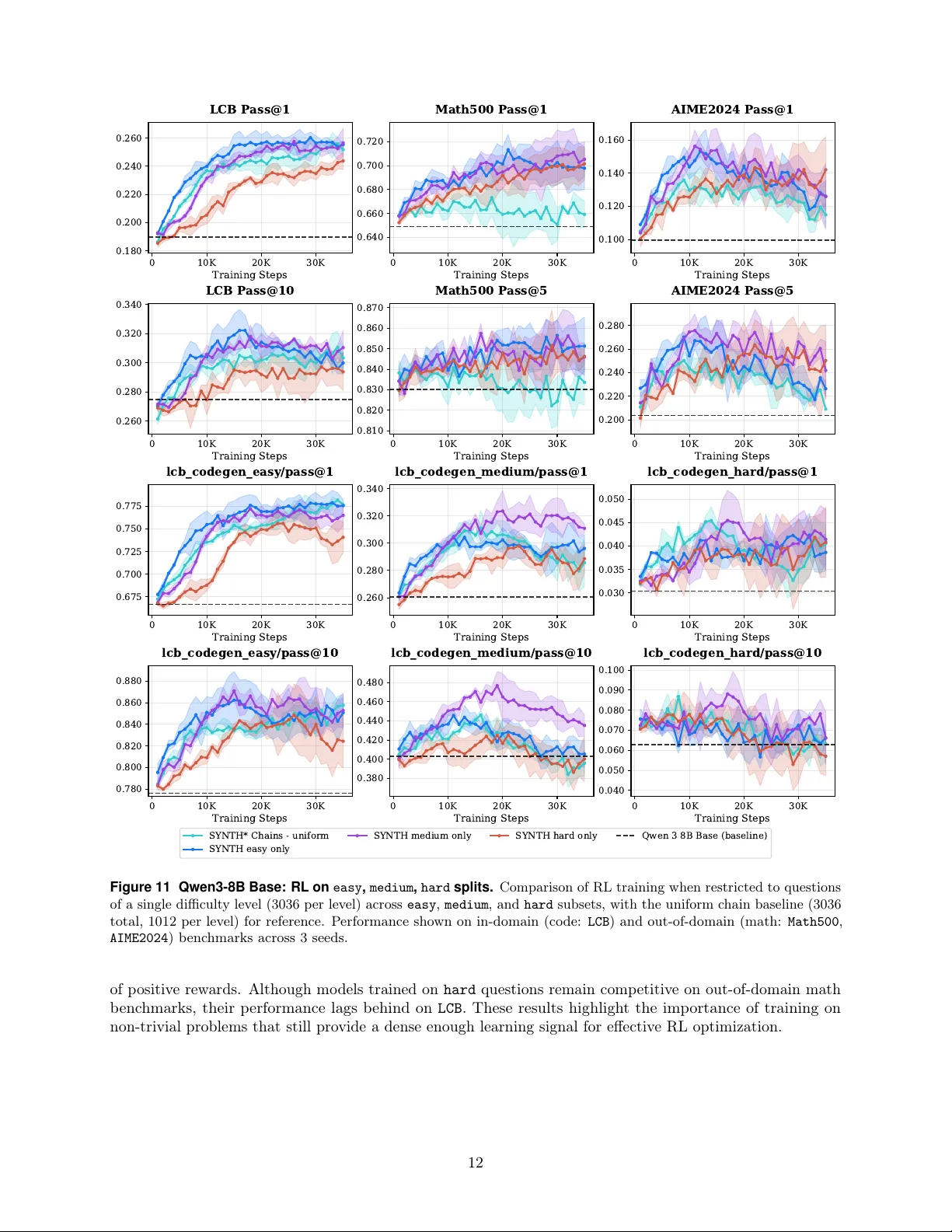

Authors: Cansu Sancaktar, David Zhang, Gabriel Synnaeve