SafeFlow: Real-Time Text-Driven Humanoid Whole-Body Control via Physics-Guided Rectified Flow and Selective Safety Gating

Recent advances in real-time interactive text-driven motion generation have enabled humanoids to perform diverse behaviors. However, kinematics-only generators often exhibit physical hallucinations, producing motion trajectories that are physically i…

Authors: Hanbyel Cho, Sang-Hun Kim, Jeonguk Kang

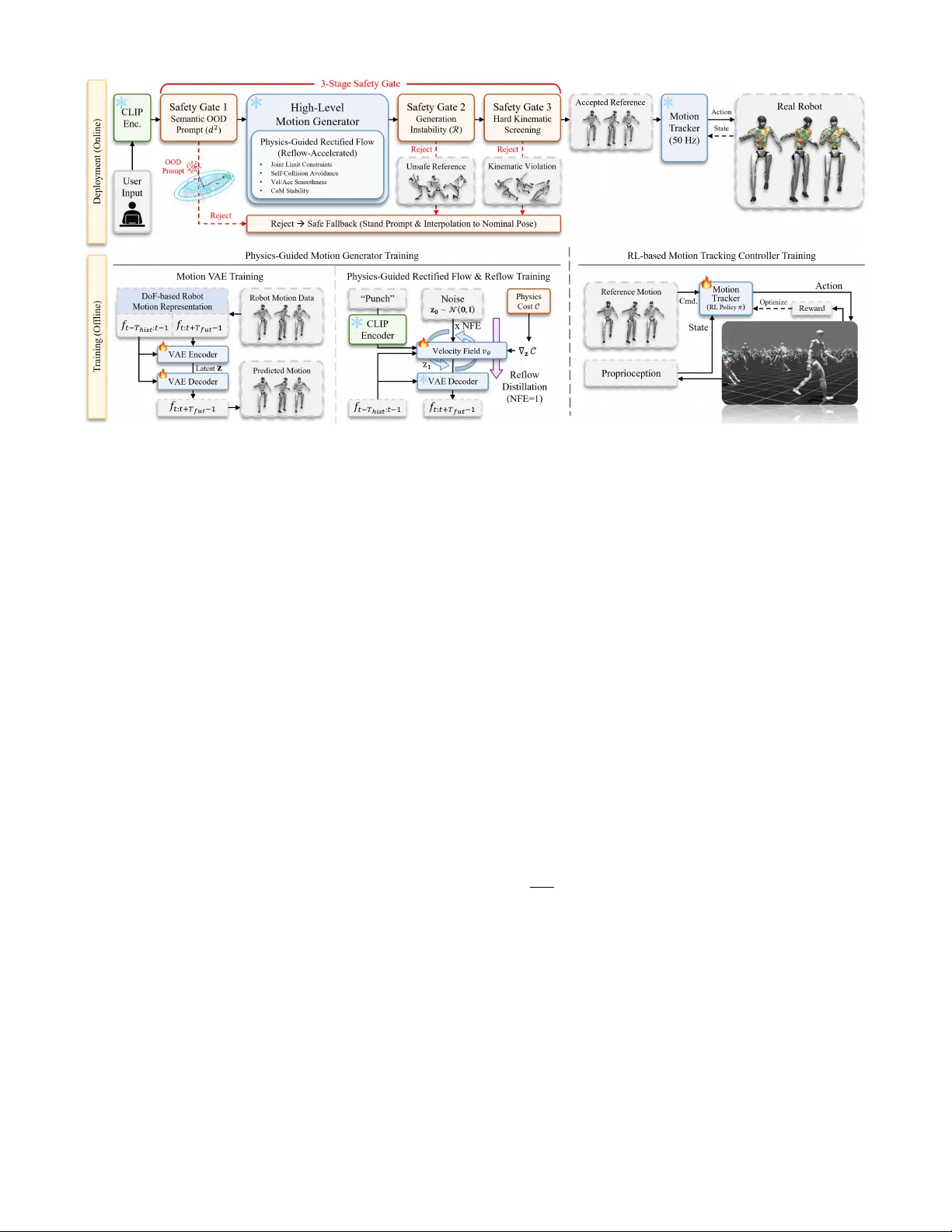

SafeFlow: Real-T ime T ext-Driv en Humanoid Whole-Body Control via Physics-Guided Rectified Flow and Selective Safety Gating Hanbyel Cho Sang-Hun Kim Jeonguk Kang Donghan K oo Future Robot AI Group, Samsung Electronics Abstract — Recent advances in real-time interactive text- driven motion generation hav e enabled humanoids to perf orm diverse behaviors. Howe ver , kinematics-only generators often exhibit physical hallucinations, producing motion trajectories that are physically infeasible to track with a downstream motion tracking controller or unsafe for real-w orld deployment. These failures often arise from the lack of explicit physics- aware objectives for real-robot execution and become more sever e under out-of-distrib ution (OOD) user inputs. Hence, we propose SafeFlow , a text-driven humanoid whole-body control framework that combines physics-guided motion generation with a 3-Stage Safety Gate driven by explicit risk indicators. SafeFlow adopts a two-level architectur e. At the high level, we generate motion trajectories using Physics-Guided Rectified Flow Matching in a V AE latent space to impro ve real-robot executability , and further accelerate sampling via Reflow to reduce the number of function evaluations (NFE) for real-time control. The 3-Stage Safety Gate enables selective execution by detecting semantic OOD prompts using a Mahalanobis score in text-embedding space, filtering unstable generations via a directional sensitivity discrepancy metric, and enfor cing final hard kinematic constraints such as joint and velocity limits befor e passing the generated trajectory to a low-le vel motion tracking controller . Extensive experiments on the Unitree G1 demonstrate that SafeFlow outperforms prior diffusion-based methods in success rate, physical compliance, and inference speed, while maintaining div erse expressiveness. I . I N T RO D U C T I O N Recent advances in text-driv en motion generation [ 1 – 5 ] hav e enabled humanoid robots to synthesize diverse and expressi v e behaviors from natural language. Beyond of fline text-to-motion [ 6 , 7 ], recent work has progressed to w ard real- time interactiv e control, where robots respond to streaming text commands. In particular , systems such as T extOp [ 8 ] demonstrate a new control paradigm in which natural lan- guage serves as a continuously revisable control signal rather than a one-shot task specification, suggesting a promising direction to ward intuitive, text-based humanoid control. Despite this progress, high-le vel motion generators often fail to produce motions that are physically executable and safe on real hardware. Kinematics-only generators [ 1 , 3 , 4 ] can exhibit physical hallucinations, yielding joint limit vi- olations, self-collisions, and unstable balance, which result in physically implausible full-body configurations. Although downstream motion tracking controllers can partially com- pensate, large physical violations degrade motion fidelity and can lead to unstable or unsafe beha viors. These issues become more sev ere under open-ended or out-of-distribution (OOD) user inputs, where generators may produce sev erely distorted motions unsuitable for direct execution (Fig. 1 ). Fig. 1. Failur e Cases of a Baseline T ext-Driven Reference Motion Gen- erator . While a kinematics-only baseline [ 8 ] produces physically feasible motions for simple prompts ( a ), it often generates infeasible references— including joint limit violations ( b ) and self-collisions ( c )—even under in- distribution commands. For out-of-distribution prompts, the generation pro- cess becomes unstable, leading to structural collapse and unsafe , implausible full-body configurations ( d ). These failure modes underscore the critical need for physics-guided generation and runtime safety gating. Addressing this challenge requires improving physical fea- sibility at the generation stage and introducing mechanisms to detect and reject unsafe behaviors prior to ex ecution. T o this end, we propose SafeFlow , a real-time text- driv en humanoid whole-body control framework that com- bines physics-guided motion generation with deployment- time selective execution to improve robustness under open- ended or OOD text inputs. At the core of SafeFlow is a physics-guided motion generator based on rectified flow matching in a V AE latent space. Unlike purely kinematic generation [ 1 – 5 ], our approach incorporates physics-aware objectiv es relev ant to real-robot execution, including joint feasibility , self-collision avoidance, stability , and motion smoothness, to steer sampling toward executable motion regions. While physics-guided sampling has been explored in character animation and offline motion generation [ 9 ], its use for improving real-robot executability in real-time text-dri v en control remains underexplored. T o enable real- time deployment, we further lev erage reflow [ 10 ] distillation so that the model internalizes the physics-aware guidance, drastically reducing the required number of function ev alua- tions while retaining physically executable behaviors. While these generation-lev el improv ements significantly enhance ex ecutability , they do not fully resolve deployment-time safety , particularly under ambiguous or adversarial prompts, motiv ating an additional selectiv e ex ecution mechanism. SafeFlow therefore incorporates a training-free 3-Stage Safety Gate driv en by explicit risk indicators that operates hierarchically across input semantics, generation reliability , and final kinematic feasibility . W e first detect semantic OOD prompts in text-embedding space, then filter structurally un- stable generations by measuring directional flow sensitivity , and finally enforce a last-line kinematic screen to strictly reject motions that violate hardware constraints, including joint and velocity limits, before execution. This hierarchical filtering enables the system to proactiv ely reject unsafe mo- tions rather than attempting to ex ecute all generated outputs. By integrating physics-aware generation with indicator- driv en selective ex ecution, SafeFlow adv ances real-time text- driv en humanoid control to ward safe and robust deployment under unconstrained text inputs. W e validate the proposed framew ork through extensi ve experiments on the Unitree G1 humanoid. Results show that SafeFlow improv es success rate, physical compliance, and inference speed compared to diffusion-based baselines while maintaining div erse expres- siv eness. Our main contributions are summarized as follows: • W e propose SafeFlow , a real-time text-dri ven humanoid whole-body control framework that couples physics- guided generation with deployment-time selectiv e ex- ecution for rob ustness under unconstrained prompts. • W e introduce physics-guided rectified flow matching in a V AE latent space and le verage r eflow distillation to achiev e real-time ex ecution while significantly improv- ing the physical feasibility and real-robot executability of generated motions. • W e propose a training-free 3-Stage Safety Gate to proactiv ely block unsafe beha viors under OOD prompts, utilizing explicit risk indicators: Mahalanobis semantic OOD filtering, directional sensitivity discrepancy metric for generation instability , and hard kinematic screening. I I . R E L AT E D W O R K A. Interactive Language-Driven Humanoid Contr ol Con ventional humanoid whole-body control methods have relied on either executing task-specific commands for lo- comotion and manipulation [ 11 – 15 ], or tracking predefined reference trajectories–often extracted from motion capture data [ 16 ] or videos [ 17 , 18 ]–with reinforcement-learning (RL)-based motion tracking controllers [ 19 – 24 ]. T eleoper- ation [ 25 – 28 ] can enable more flexible behaviors, but it requires human inv olvement, which limits both autonomy and scalability . Recent works have lev eraged large-scale motion datasets [ 16 ] and generativ e motion models [ 1 – 5 ] to translate natural-language instructions into robot motions; howe ver , most approaches focus on of fline generation. More recently , T extOp [ 8 ] demonstrates the feasibility of real-time, interactive control with an autoregressiv e diffusion model [ 29 ], responding to streaming text commands and allowing on-the-fly intent re vision. Howe ver , such systems optimize semantic alignment and often produce reference tra- jectories that violate actuation limits, induce self-collisions, or destabilize balance, especially under out-of-distribution (OOD) commands, yielding physically infeasible or unsafe references and placing heavy burden on downstream motion tracking controllers [ 19 – 21 ]. In the same streaming setting, SafeFlow couples physics-aw are guidance for executable, trackable references with a deployment-time safety gate that proactiv ely rejects unsafe OOD prompts. B. Physics-A ware Humanoid Motion Generation T ext-conditioned motion generators [ 1 – 5 ] often produce kinematically plausible yet physically in valid motions (e.g., foot sliding, ground penetration). T o improve realism, simulator-in-the-loop diffusion methods like PhysDif f [ 9 ] incorporate physics during sampling but introduce substan- tial latency . Meanwhile, physics-based RL approaches like PhysHOI [ 30 ] are tailored to virtual characters rather than hardware-constrained humanoid robots. In robotics, Robot- MDM [ 6 ] and Humanoid-R0 [ 7 ] bridge the kinematic- ex ecution gap but are inherently limited to offline gener- ation. Specifically , RobotMDM synthesizes full reference sequences from discrete prompts, while Humanoid-R0 re- lies on computationally heavy autoregressi ve generation. Both require motions to be pre-computed, making real-time streaming control infeasible. In contrast, SafeFlow proposes physics-guided rectified flo w matching with reflow distilla- tion for f ast and stable online generation. It further introduces a hierarchical safety gating mechanism to proacti vely filter unsafe motions under distribution shifts, reducing the burden on do wnstream motion tracking controllers. C. Deployment-T ime Safety Gating and OOD Robustness Real-time, interactive text-dri ven control exposes robots to open-ended and OOD prompts, which can induce unsafe reference trajectories at deployment time. Most existing interactiv e frame works lack an explicit runtime mechanism to reject such unsafe references; for instance, T extOp [ 8 ] and LangWBC [ 31 ] treat the generator largely as a black box and rely on do wnstream motion tracking controllers to cope with the resulting references [ 19 – 21 ]. SafeFlow addresses this gap with a 3-Stage Safety Gate that hierarchically screens semantic OOD inputs, generation instability via a directional sensitivity discrepancy metric, and hard kinematic limits, ensuring that only ex ecutable and safe reference trajectories are passed to the motion tracking controller . I I I . M E T H O D A. Overview of SafeFlow W e present SafeFlow , a two-le vel framew ork for real- time, interactive text-driv en humanoid control that improves physical executability and deployment-time safety (Fig. 2 ). SafeFlow targets two failure modes in interactiv e text con- trol: kinematics-only generators often produce physically infeasible references, and open-ended or OOD prompts can induce unsafe generations at deployment. T o address both, SafeFlow combines physics-awar e motion generation with deployment-time selective execution using explicit risk indicators. W e describe physics-guided motion generation Fig. 2. Overview of SafeFlow . T op (Deployment, Online): A 3-Stage Safety Gate hierarchically filters OOD semantics, generation instability , and kinematic violations. A reflow-accelerated high-level motion generator provides physically feasible reference motions. If accepted, these are executed by the downstream motion tracking controller; otherwise, a safe fallback is triggered. Bottom (T raining, Offline): The motion generator is trained via V AE latent learning and physics-guided flow matching with reflow distillation (NFE=1). The motion tracking controller is trained in simulation via RL. (Sec. III-B ), a training-free 3-Stage Safety Gate (Sec. III- C ), and RL-based motion tracking controller (Sec. III-D ). Streaming T ext Control. Follo wing T extOp [ 8 ] in the real-time streaming r efer ence gener ation–low-level tracking formulation, SafeFlow augments the loop with deployment- time safety gating for selective ex ecution (Fig. 2 ). At each time step t , the system receives the current text command l t together with the pre vious robot proprioceptiv e state x robot t − 1 . W e first apply the Stage-1 Safety Gate to l t ; if accepted , the physics-guided high-level motion generator G produces a horizon- T fut ( = 8 ) future reference motion sequence con- ditioned on the past T hist ( = 2 ) history reference motions, x ref t : t + T fut − 1 = G x ref t − T hist : t − 1 , l t . (1) The generated reference is then screened by the Stage- 2/3 Safety Gates before execution. The low-le vel tracking controller π runs at the control rate and conv erts the accepted kinematic reference into ex ecutable joint commands, a τ = π x robot τ − 1 , a τ − 1 , x ref τ : τ + T ref − 1 , (2) where x ref τ : τ + T ref − 1 denotes the corresponding segment from the latest accepted reference. If a prompt or generated segment is rejected , the system executes a safe fallback motion and continues with updated streaming commands. B. Physics-Guided Rectified Flow Motion Generation Real-time interactiv e control requires generating physi- cally ex ecutable reference motions with low latency . W e for- mulate the high-level motion generator G using rectified flow matching for stable kinematic modeling. Howe ver , purely kinematic generation often violates physical constraints crit- ical for robot execution. T o ensure physical feasibility , Safe- Flow introduces physics-guided sampling to steer generation tow ard ex ecutable motion regions. Finally , to support lo w- latency streaming, we apply reflow distillation [ 10 ], enabling highly ef ficient sampling via straight flo w trajectories. Latent-Space Motion Representation. W e represent refer- ence motion x ref t using the same DoF-based local incremen- tal per-frame feature f t ∈ R d feat as in T extOp [ 8 ]. W e train a V AE to learn a compact motion latent space. The encoder infers a motion latent from both past and future reference motion as z ∼ Enc( · | f t − T hist : t + T fut − 1 ) , while the decoder reconstructs the future reference using only the past reference and the motion latent as f t : t + T fut − 1 = Dec ( f t − T hist : t − 1 , z ) . T ext-Conditioned Rectified Flow Matching. W e model the text-conditional distribution of future motion latent using rectified flow matching [ 10 ]. W e embed the streaming text command using a CLIP text encoder [ 32 ] as e t = CLIP ( l t ) and condition on the motion history f t − T hist : t − 1 . W e learn a velocity field v θ ( z , u | f t − T hist : t − 1 , e t ) that defines an Ordinary Differential Equation (ODE) transporting a noise distribution to the data distribution: d z u du = v θ ( z u , u | f t − T hist : t − 1 , e t ) , u ∈ [0 , 1] . (3) During training, we sample a ground truth motion latent z 1 = Enc( f t − T hist : t + T fut − 1 ) and a noise latent z 0 ∼ N ( 0 , I ) . W e define a linear interpolation path z u = u z 1 +(1 − u ) z 0 , which implies a constant tar get velocity z 1 − z 0 . The model is trained to minimize the rectified flow matching objecti ve: L RFM ( θ ) = E ∥ v θ ( z u , u | f t − T hist : t − 1 , e t ) − ( z 1 − z 0 ) ∥ 2 2 . (4) At inference, we sample z 0 ∼ N ( 0 , I ) and integrate the ODE from u = 0 to u = 1 using an explicit solver ( e.g ., Euler) with N steps ( i.e ., NFE= N ) to obtain the generated motion latent z 1 , then decode f t : t + T fut − 1 = Dec( f t − T hist : t − 1 , z 1 ) . Physics-Guided Sampling. While text conditioning ensures semantic fidelity , it does not guarantee physical feasibility on a real robot. T o resolve this, SafeFlow employs physics- guided sampling to steer the motion latent trajectory toward ex ecutable motion manifolds. This allo ws us to impose phys- ical constraints purely at inference time without retraining the base model. Let f t : t + T fut − 1 = Dec( f t − T hist : t − 1 , z u ) be the decoded feature sequence at ODE integration time u , and let x ref t : t + T fut − 1 denote the corresponding kinematic reference trajectory obtained from f . W e define a dif ferentiable physics cost C to quantify exe- cutability violations. While gradient-based guidance has been explored in character animation and offline motion [ 1 , 9 , 33 ], to the best of our kno wledge, SafeFlow is the first to adapt this mechanism for r eal-robot executability by enforcing strict hardware limits, self-collision av oidance, and postural stability via CoM regularization. The total cost is a weighted sum of four terms as C x ref t : t + T fut − 1 = P i λ i C i , where C i represents specific constraints ( detailed below ). During ODE integration, we compute the gradient of the cost with respect to the generated motion latent z . This inv olves passing z through the frozen V AE decoder to reconstruct the kinematic reference for cost ev aluation, and then backpropagating. W e then steer the flo w using the guided velocity field ˜ v θ : ˜ v θ ( z , u ) = v θ ( z , u | f t − T hist : t − 1 , e t ) − α ( u ) ∇ z C (Dec( f t − T hist : t − 1 , z )) , (5) where α ( u ) is a time-dependent guidance scale. W e use this steered velocity ˜ v θ for numerical integration to push the trajectory to ward physically feasible regions. W e define C as a weighted sum of four terms designed for real-robot ex ecutability . Let q τ be the joint configuration at time τ decoded from f τ . (1) Joint Limit & (2) Self-Collision: T o strictly enforce hard- ware limits and prevent physical penetrations, we penalize violations using ReLU-squared barriers: C lim = X τ ,j ReLU( q τ ,j − q max j ) 2 + ReLU( q min j − q τ ,j ) 2 , C col = X τ , ( a,b ) ∈P ReLU ( r a + r b + m ) − d ab ( q τ ) 2 , (6) where r a , r b are collision sphere radii for links a, b , d ab is their Euclidean distance, and m is a safety margin. (3) Smoothness & (4) CoM Stability: T o generate smooth, jitter-free motions suitable for tracking, we regularize high- order deriv atives of joints and the Center of Mass (CoM). Let c ( q ) = P m i p i ( q ) P m i be the global CoM position computed via forward kinematics, where m i and p i are the mass and position of the i -th link. C sm = X τ ∥ ˙ q τ ∥ 2 + β q ∥ ¨ q τ ∥ 2 , C stab = X τ ∥ ˙ c ( q τ ) ∥ 2 + β c ∥ ¨ c ( q τ ) ∥ 2 . (7) Here, time deri v ativ es are computed via finite differences. Physics-A war e Reflow . Direct physics-guided sampling im- prov es ex ecutability but increases latency due to iterative gradient computations ( ∇ z C ). T o enable real-time control, we apply the reflow procedure [ 10 ] to distill the guided trajectories into a straightened velocity field. W e generate synthetic pairs ( z 0 , z guided 1 ) , where z guided 1 is the result of the computationally expensiv e guided integration (Eq. 5 ). W e then retrain the model to follow the straight path connecting z 0 to z guided 1 . This process internalizes the physics constraints directly into the network weights, allo wing us to bypass ex- pensiv e online cost gradients and generate safe motions with significantly fe wer steps ( e.g ., NFE=1) during deployment. C. Selective Execution via 3-Stage Safety Gate While physics-guided motion generation improv es av erage ex ecutability , it cannot inherently prev ent failures caused by open-ended or OOD text inputs. Such inputs often reside in sparse regions of the training distribution, leading to physical hallucinations or structurally unstable motions. T o ensure robust deployment without compromising real-time interac- tivity , SafeFlo w introduces a tr aining-fr ee selectiv e ex ecution mechanism. As a hierarchical fir ewall , it filters failure modes at the input semantic , latent generative , and output kinematic lev els, rejecting unsafe references with acceptable latency before reaching the motion tracking controller . Stage 1: Semantic OOD Filtering (Input Level). Stan- dard generators often fail unpredictably when facing out- of-distribution (OOD) prompts. W e detect these efficiently in the CLIP [ 32 ] text embedding space. Since the statistics of training prompts (mean µ and cov ariance Σ ) are pre- computed of fline , inference requires only a lightweight Maha- lanobis distance calculation on the streaming text embedding e t : d 2 ( e t ) = ( e t − µ ) ⊤ Σ − 1 ( e t − µ ) . The threshold τ sem is calibrated to the N -th percentile of distances computed on the training set. Prompts satisfying d 2 > τ sem are rejected instantly , bypassing the motion generator to pre vent synthe- sizing undefined reference motions. Stage 2: Generation Instability Filtering (Model Level). Even for valid prompts, the flo w matching model can tra- verse chaotic regions where the vector field becomes highly anisotropic. T o detect this structural instability , we intro- duce a novel metric that measures the dir ectional sensitivity discr epancy . The key intuition is that in stable regions, the flow’ s response to perturbations should be consistent across directions. Con versely , high variance implies that the generation trajectory is fragile to specific directional noise. W e estimate this by probing the Jacobian J = ∂ v θ /∂ z along M random unit vectors { ϵ m } M m =1 ( e.g ., M = 16 ). First, we compute the directional sensitivity scalar g m for each probe using a finite-dif ference approximation: g m ≈ ϵ ⊤ m v θ ( z + δ ϵ m ) − v θ ( z ) δ ≈ ϵ ⊤ m J ϵ m , (8) where δ is a small perturbation. This scalar g m represents the expansion or contraction of the flo w along ϵ m . Finally , we define the g eneration instability scor e R as the standard de vi- ation of these sensitivities, R = q 1 M P M m =1 ( g m − ¯ g ) 2 , where ¯ g denotes the mean of { g m } . Le veraging par allel batching , this computation incurs negligible latency , enabling r eal-time risk monitoring during generation. A high R ( > τ stab ) indicates that the flo w field is disjointed or near-singular , triggering early rejection to prev ent executing structurally unr eliable reference motions. Stage 3: Hard Kinematic Screening (Output Level). As a final fail-safe, we perform a lightweight, deterministic screen on the kinematic trajectory x ref . W e strictly reject any motion segment that violates intrinsic hardware limits, specifically checking for joint position bounds ( q j / ∈ [ q min j , q max j ] ) and dynamic constraints ( | ˙ q j | > ˙ q max j or | ¨ q j | > ¨ q max j ). While this local check cannot guarantee global stability ( e.g ., balance), it serv es as a necessary last-line defense to pre vent immediate actuator damage. If a rejection is triggered at any stage, the system executes a safe fallback by replacing the current user command with a “stand” prompt while simultaneously inter- polating to a nominal pose, and awaits the next command. D. RL-Based Motion T racking Contr oller W e adopt a goal-conditioned RL motion tracking con- troller trained with PPO in Isaac Lab [ 34 ]. The controller outputs residual joint corrections ∆ q π , forming control tar- gets as q target = q ref ( t ) + ∆ q π , which improv es robustness to imperfect references. T o enhance generalization, future reference observations are expressed in the body-local frame (linear/angular velocities, root height, roll–pitch, and joint targets), ensuring in v ariance to global position and heading. E. Implementation Details Physics-Guided Motion Generator & Safety Gating. Our model is trained on BABEL [ 35 ] retargeted to Unitree G1. W e follow T extOp [ 8 ] for data preprocessing and splits. T raining tuples use sliding windows of ( T hist , T fut ) = (2 , 8) , enabling the generator to operate at 6.25 Hz. Building upon T extOp, we adopt its exact motion representations, Trans- former architectures, and remaining training hyperparame- ters, training the velocity field v θ for 200k iterations. W e build a physics-guided teacher ( SafeFlow (+ Guid.) , NFE = 10) using classifier-free guidance (CFG) decaying from 5.0 to 3.0. Physics-guided sampling uses λ lim = λ stab = 1 . 0 , λ sm = 0 . 1 , λ col = 0 . 01 , β q = 50 . 0 , and β c = 10 . 0 . W e apply physics guidance scale α ( u ) with a linearly increasing schedule from 500 to 10 , 000 across the denoising trajectory , with per-element gradient clamping at ± 0 . 2 . For real-time deployment ( SafeFlow (+ Guid. & Reflow) , NFE = 1), we distill guided ODE trajectories into straight paths via reflow for an additional 200k iterations. Self-collision is computed ov er 14 link pairs with sphere radii r ∈ [0 . 03 , 0 . 10] m and margin m = 0 . 03 m . For safety gating, Stage 1 uses τ sem calibrated to accept 90% of training prompts. Stage 2 ev aluates R using 16 probes ( δ = 10 − 6 ) with threshold τ stab = 5 . 0 . Stage 3 enforces G1 hardware limits. Motion T racking Controller . Since our contributions lie in the physics-guided generator and the 3-Stage Safety Gate, we adopt the same RL tracking formulation as T extOp [ 8 ] ( i.e ., dataset, observ ations, rew ards, and domain randomization). For a fair comparison, the same controller is used to ev aluate both the baseline [ 8 ] and SafeFlow across all experiments. The controller runs at 50 Hz on the onboard Jetson Orin. I V . E X P E R I M E N T S W e ev aluate the effecti veness of SafeFlow through a combination of extensi ve simulation studies and real-world deployment on the Unitree G1 humanoid. Our experiments are designed to validate the system’ s physical ex ecutability , deployment-time safety and rob ustness, computational effi- ciency , and overall practical performance. Specifically , the ev aluation aims to answer the follo wing questions: • Q1 (Executability): How much does physics-guided generation improve the physical feasibility of reference motions and the success rate of the downstream tracker? • Q2 (Safety and Rob ustness): Can the 3-Stage Safety Gate detect and filter out OOD prompts and generation instability to guarantee deployment-time safety? • Q3 (Real-Time Perf ormance): Do the reflo w-acceler- ated motion generator and safety gating pipeline achie ve the lo w latency required for real-time control? • Q4 (Real-Robot Deployment): Can SafeFlow transfer to real hardware to enable interacti ve control while maintaining strict safety against hazardous commands? A. Experimental Setup T o systematically ev aluate SafeFlow across Physical Exe- cutability , Deployment-T ime Safety , and Computational Effi- ciency , we establish a robust training and ev aluation pipeline. The motion generator is trained offline using standard deep learning frameworks [ 36 ], while the motion tracking con- troller is trained in Isaac Lab [ 34 ]. System-le vel ev aluations of the integrated pipeline are conducted in MuJoCo [ 37 ] to validate the Unitree G1’ s behaviors prior to real-world deployment. W e compare our approach primarily against T extOp [ 8 ], a state-of-the-art autoregressiv e diffusion base- line for real-time interacti ve text-dri ven humanoid control. B. Physical Executability (Q1) W e first ev aluate whether the physics-guided generation improv es the physical feasibility of reference motions. T o strictly decouple the performance of the motion generator from the capabilities of the do wnstream tracking controller , we assess ex ecutability in two stages: Generator-Only (kine- matic ev aluation prior to tracking) and System-Level (closed- loop ev aluation with the tracking controller). For ev aluation, we utilize the BABEL [ 35 ] validation prompts, generating 1,000 motion frames per prompt and reporting the averages. Generator -Only Kinematic Feasibility . W e measure the Joint Limit V iolation Rate (JV , the ratio of frames exceeding joint limits, %) and Self-Collision Rate (SC, %) on the generated motions. T able I shows the baseline [ 8 ] frequently violates hardware limits (JV : 43.14%). Notably , adopting our base Flow Matching formulation ( SafeFlow (Flow) ) in- trinsically drops violations to 12.75%. Building upon this stable foundation, our physics-guided sampling further min- imizes these errors ( SafeFlow + Guid. ), and the reflow-distilled model ( SafeFlow + Guid. & Reflow ) maintains strict compliance (JV : 3.08%) under single-step ( NFE=1 ) generation for real- time control (T able III ). Furthermore, CoM V elocity and Joint Acceleration plots (Fig. 3 (a)) reveal that SafeFlow stabi- lizes trajectories and suppresses erratic spikes ( e.g ., baseline T ABLE I P H YS I C A L E XE C U T A B IL I T Y A N D T R ACK I N G F I D EL I T Y . S AF E F L OW I M PR OV ES G E N E RATO R C O MP L I A NC E ( J V : J O IN T L I M I T V I O L A T I O N , S C : S E LF - C O LL I S IO N ) A N D D OW N S T RE A M T R AC K I NG FI DE L I T Y . T RA CK I N G E R RO RS A R E E V A L UATE D O N A LL V A L I D F RA M E S B E F OR E FA I L UR E ( C OM M O N - P R E FI X ) , W I T H S U CC E S S - O N L Y V A L UE S I N P A R EN T H E SE S . Generator-Only System-Level T racking Fidelity Method JV ↓ SC ↓ Succ. ↑ E mp jpe ↓ E vel ↓ E acc ↓ T extOp [ 8 ] 43.14% 11.05% 80.6% 81.42 (78.04) 0.23 (0.20) 10.61 (9.26) SafeFlow (Flow) 12.75% 7.25% 92.7% 55.32 (55.07) 0.17 (0.16) 7.98 (7.55) SafeFlow (+ Guid.) 6.32% 4.39% 98.0% 46.39 (46.45) 0.11 (0.11) 5.48 (5.27) SafeFlow (+ Guid. & Reflow) 3.08 % 1.42 % 98.5 % 40.89 (41.20) 0.09 (0.09) 4.54 (4.42) acceleration peaks at 263.5 rad / s 2 ), yielding kinematically feasible references for the downstream tracking controller . System-Level T racking Fidelity . W e ev aluate the inte- grated pipeline by streaming the generated references to the downstream tracking controller . W e report the Success Rate (Succ., defined as completing the sequence without falling, i.e ., base height > 0.3 m ) along with tracking discrepancy metrics: MPJPE ( E mp jpe , mm ), V elocity Err or ( E vel , m / s ), and Acceleration Error ( E acc , m / s 2 ). T able I shows that the physically compliant references from SafeFlow alleviate the tracker’ s burden, significantly reducing errors across all metrics and boosting the success rate to 98.5%. Moreover , T or que and Joint V elocity plots (Fig. 3 (b)) show SafeFlow mitigates the baseline’ s severe torque chattering and er- ratic velocity spikes ( e.g ., peak velocity of 5.2 rad / s ). This confirms that kinematically feasible generation translates to improv ed system-lev el hardware safety and tracking fidelity . Diversity Preser vation. W e further verify that improved physical feasibility does not come at the cost of motion div ersity . W e measure Multimodality (MModality) [ 38 ] as the av erage pairwise L2 distance in 29-DoF joint-angle space ( rad ) across 10 generations per prompt. Over all 2,362 prompts, the baseline shows higher di versity (1.40 rad ) than SafeFlow (1.09 rad ); howe ver , this gap is largely attributable to unstable motions. When restricting to the 1,889 prompts where both methods succeed to track, the difference shrinks to 1.26 vs. 1.06 rad , and on the 915 prompts where neither method incurs any joint limit violation, the two are virtually indistinguishable (1.00 vs. 0.99 rad , ∆ = 1 . 1% ). Meanwhile, on the 437 prompts where only the baseline fails, its multimodality inflates to 1.99 rad —66% above SafeFlow ’ s 1.20 rad on the same prompts—confirming that much of the baseline’ s apparent di versity stems from physically implau- sible motions rather than meaningful behavioral v ariation. C. Deployment-T ime Safety and Robustness (Q2) W e e valuate the 3-Stage Safety Gate’ s capability to filter unsafe out-of-distribution (OOD) prompts and generativ e instabilities, ensuring physical safety during deployment. Stage 1: Input-Level Semantic Filtering. T o assess robust- ness against untrained or unsafe text inputs, we generate two types of OOD prompts using an LLM [ 39 ] (100 prompts each): T ype A (Unseen V erbs) , representing untrained ac- tions causing latent space collapse ( e.g ., “le vitate”, “crochet Fig. 3. Kinematic Feasibility and T racking Stability . Despite generating dynamic motions (left), our full pipeline, SafeFlow (+ Guid. & Reflow), stabilizes kinematic references and improves tracking. (a) Generator -only : SafeFlow suppresses erratic spikes in CoM velocity and joint acceleration. (b) System-level : SafeFlow mitigates torque chattering and joint velocity spikes, enabling hardware-safe tracking. The x-axis represents time in frames, showing a representativ e active segment (frames 600–950). T ABLE II S T AG E 1 S EM A N T IC O OD F I L T E R I NG . W I T H τ 90 PAS S I N G 9 0% O F T R AI N I N G P RO MP T S , S TAG E 1 A CH I E V ES H IG H AUR OC A N D R EJ E C T S U N SA F E O O D I N P UT S W H I L E P RE S E RVI N G I D C OV E R AG E . Prompt Set Category A UROC ↑ Accept Rate @ τ 90 ID (V al.) In-Distribution – 90.56% (2139/2362) OOD (T ype A) Unseen V erbs 0.9872 5.00% (5/100) OOD (T ype B) Extreme Dynamics 0.9715 7.00% (7/100) a sweater”), and T ype B (Extreme Dynamics) , in volving acrobatic motions exceeding physical hardware limits ( e.g ., “flying tornado kick”). W e compare these against an In- Distribution (ID) set of 2,362 B ABEL validation prompts. W e employ a Mahalanobis distance-based filter , calibrating the threshold ( τ 90 ) to pass 90% of the training prompts. As T able II shows, Stage 1 yields exceptional A UROCs (0.9872, 0.9715) for T ypes A and B. It successfully restricts OOD acceptance to mere 5.00% and 7.00%, while preserving a 90.56% ID acceptance rate. Rather than blindly rejecting inputs, it precisely isolates semantic deviations ( e.g ., “play a grand piano”, “do rapid breakdance airflares”). Ho wev er, since input-le vel filtering cannot foresee all internal genera- tiv e instabilities, Stage 2 directly monitors the flow dynamics during inference to reject structurally unreliable motions. Stage 2 & 3: Model- and Output-Level Filtering. Stage 1 filters semantically OOD prompts, but cannot prevent unreli- able generations arising from inherent randomness of motion generation, ev en under ID prompts. Thus, Stage 2 monitors the generation instability scor e R online and triggers a safe fallback when the current reference becomes failure-prone. (1) Is R a Meaningful Indicator? W e validate R by correlat- ing it with downstream tracking error . W e divide generated sequences into 10-frame windows, recording R and MPJPE ( mm ), then group them into shar ed absolute R quintiles across ID ( BABEL val., 220,944 windows ) and OOD ( T ype B, 7,161 windows ) sequences, excluding windows after tracking failure. Figure 4 sho ws MPJPE increases monotonically Fig. 4. Generation Instability Score R Detects Failure-Prone Ref- erences and Motivates Stage 2. Mean tracking MPJPE of 10-frame windows grouped into absolute R quintiles for ID and OOD sequences. MPJPE increases monotonically with R , indicating that high- R windows correspond to physically unstable references. Notably , e ven ID prompts produce high-instability windows (ID Q5, 87.4 mm ) with larger errors than low-instability OOD windo ws (OOD Q1, 56.6 mm ), showing that semantic OOD filtering (Stage 1) is insufficient and Stage 2 monitoring is necessary . Fig. 5. Instability Score-T riggered Safe Fallback. When the instability score R exceeds the fallback threshold due to unstable flow dynamics, Stage 2 temporarily ov errides the current command, injects a standing prompt, and interpolates the tracker reference tow ard a predefined standing pose. Without Stage 2, the robot fails to track the unstable reference motion; with Stage 2 enabled, it remains stable and awaits the next prompt. with R in both domains, confirming high- R references are harder to track. This is consistent with the higher failure rate under OOD prompts, as OOD windo ws heavily skew tow ard the highly unstable (Q5: n =4 , 466 vs. Q1: n =178 ). Importantly , this trend holds within ID alone : ev en after Stage 1 acceptance, some ID windows fall into the high- instability regime (ID Q5: 87.4 mm ), exhibiting larger errors than low- R OOD windows (OOD Q1: 56.6 mm ). This shows Stage 1 alone is insufficient and moti v ates Stage 2. (2) How Does R Behave During Str eaming Generation? Figure 5 (top, mid) shows R during streaming. For stable ID prompts ( e.g ., “walk forward”, “wave hands”), R re- mains lo w and smooth. Con versely , for extreme dynamics (“ T aekwondo ”), R sharply spikes, indicating unreliable flow dynamics and often preceding motion collapse. (3) Instability Score-T rigger ed Safe F allback: When R ex- ceeds a threshold τ stab , Stage 2 temporarily overrides the input with a safe “stand” prompt, interpolates the reference tow ard a predefined standing pose, and awaits the next input. Figure 5 (bottom) sho ws the impact: without Stage 2 (w/o SFB), the tracker collapses; with Stage 2 enabled (w/ SFB), the system transitions to standing and remains stable. Finally , Stage 3 performs deterministic checks on joint- space bounds ( e .g ., position, v elocity , and acceleration limits) as the ultimate fail-safe, ensuring hardware-safe ex ecution. T ABLE III I N FE R E N CE L A T E N C Y A ND P I PE L I N E B RE A K D OW N . S AF E F L OW AC H I EV E S R E A L - T I M E I N FE R E NC E V IA R E FLO W AC C E LE R A T I O N , W H I L E D E PL OY M E NT - T IM E S A F E TY G A T E S A D D M I NI M A L OV E R HE A D . Pipeline Component Added (ms) ↓ Latency (ms) ↓ Freq. (Hz) ↑ T extOp Generator [ 8 ] - 23.59 ± 1.60 42.4 SafeFlow Generator (+ Guid.) - 172.03 ± 6.33 5.8 SafeFlow Generator (+ Guid. & Reflow) - 10.80 ± 0.98 92.6 + Stage 1 (Semantic OOD) 0.006 ± 0.006 10.81 ± 0.98 92.5 + Stage 2 (Generation Instability) 3.96 ± 1.04 14.77 ± 1.43 67.7 + Stage 3 (Hard Kinematic Screen) 0.013 ± 0.003 14.78 ± 1.43 67.7 D. Real-T ime P erformance (Q3) Real-time interactiv e control requires lo w end-to-end la- tency . Because SafeFlow shares the text encoder [ 32 ] and motion tracking controller with T extOp [ 8 ], we report the latency of the motion gener ator and safety gates only . All generators are ev aluated on a single NVIDIA R TX A6000 GPU, and we report the av erage over 100 runs. As sho wn in T able III , physics-guided sampling increases computation ( i.e . SafeFlow Generator (+ Guid.) ), but r eflow distillation re- duces generation latency to 10 . 80 ms ( i.e . SafeFlow Genera- tor (+ Guid. & Reflo w) ). The 3-Stage Safety Gate adds minimal ov erhead (+ 3 . 98 ms cumulati vely), resulting in 14 . 78 ms ( ∼ 67 . 7 Hz ) for the fully guarded generator . Including the shared text encoder ( ∼ 2 . 99 ms ) and ONNX-compiled controller ( ∼ 0 . 98 ms ), SafeFlow satisfies real-time closed-loop control requirements with asynchronous reference generation [ 8 ]. E. Real-Robot Deployment (Q4) W e deploy SafeFlow on the Unitree G1 humanoid to ev al- uate sim-to-real transferability and deployment-time safety on real hardware. As shown in Fig. 6 , the robot executes a continuous, long-horizon sequence of diverse behaviors with smooth transitions—ranging from upper-body gestures ( e.g ., “wa ve hands”, “punch”) to demanding whole-body dynamics ( e.g ., “squat do wn”, “hop on left leg”)—without intermediate stops or manual resets. Crucially , this deployment highlights the practical value of the 3-Stage Safety Gate in pre venting hardw are-lev el failures. As part of the command sequence, we included a high-risk prompt ( i.e ., “double backflip”) known to induce structurally unstable generation. The safety gate identified and filtered the unsafe reference, prev enting failure-prone trajectories from reaching the motion tracking controller . SafeFlow then triggered safe fallback, allo wing the robot to remain balanced and continue execution under subsequent prompts ( e.g ., “wa ve hands”). These real-robot results demonstrate that SafeFlow enables expressi ve long-horizon behaviors while enforcing deployment-time safety on real humanoid systems. V . C O N C L U S I O N SafeFlow adv ances real-time text-dri ven humanoid control tow ard safe deployment by mitigating ph ysical hallucinations and out-of-distribution prompts via physics-guided gener- ation and selective execution. Our framew ork couples a Physics-Guided Rectified Flow Matching generator in a V AE latent space with a low-le vel motion tracking controller, improving real-robot executability . Deployment-time safety Fig. 6. Real-Robot Deployment of SafeFlow on Unitree G1. The robot ex ecutes a continuous long-horizon command sequence with smooth transitions across div erse behaviors, including upper-body gestures (“wave hands”, “punch”) and whole-body actions (“squat down”, “hop on left leg”). A high-risk prompt (“double backflip”) is included. The 3-Stage Safety Gate filters the unsafe reference and triggers a standing fallback, allowing the robot to maintain balance and continue execution under subsequent prompts. This demonstrates sim-to-real transferability and deployment-time safety on hardware. is enforced by a 3-Stage Safety Gate with Mahalanobis- based semantic filtering, a Jacobian-based directional sensi- tivity score, and hard kinematic checks. Experiments on the Unitree G1 show improv ed success rate, physical compli- ance, and inference speed over diffusion baselines. Overall, SafeFlow enables robust text-conditioned humanoid control under open-ended commands. An important direction for fu- ture work is improving the fallback beha vior to be more task- aware during highly dynamic motions, enabling smoother recov ery beyond the current conservati ve standing fallback. R E F E R E N C E S [1] G. T evet, S. Raab, B. Gordon, Y . Shafir , D. Cohen-Or, and A. H. Bermano, “Human motion diffusion model, ” in ICLR , 2023. [2] M. Petrovich et al. , “TMR: T ext-to-motion retrieval using contrastiv e 3D human motion synthesis, ” in ICCV , 2023. [3] X. Chen, B. Jiang, W . Liu, Z. Huang, et al. , “Executing your commands via motion diffusion in latent space, ” in CVPR , 2023. [4] J. Zhang, Y . Zhang, et al. , “Generating human motion from textual descriptions with discrete representations, ” in CVPR , 2023. [5] M. Zhang, Z. Cai, et al. , “MotionDiffuse: T ext-dri ven human motion generation with diffusion model, ” , 2022. [6] A. Serifi et al. , “Robot Motion Diffusion Model: Motion generation for robotic characters, ” in SIGGRAPH Asia Confer ence P apers , 2024. [7] Z. Zhuang, T . W ang, et al. , “Humanoid-R0: Bridging text-to-motion generation and physical deployment via RL, ” 2025. [Online]. A vailable: https://openreview.net/forum?id=agohD5e wsR [8] W . Xie, J. Zheng, J. Han, J. Shi, W . Zhang, C. Bai, and X. Li, “T extOp: Real-time interactive text-driv en humanoid robot motion generation and control, ” , 2026. [9] Y . Y uan, J. Song, U. Iqbal, et al. , “PhysDiff: Physics-guided human motion diffusion model, ” in ICCV , 2023. [10] X. Liu et al. , “Flow straight and fast: Learning to generate and transfer data with rectified flow , ” , 2022. [11] A. Escontrela, J. Kerr , A. Allshire, J. Frey , R. Duan, C. Sferrazza, and P . Abbeel, “GaussGym: An open-source real-to-sim framework for learning locomotion from pixels, ” , 2025. [12] Y . Xue, W . Dong, et al. , “ A unified and general humanoid whole-body controller for fine-grained locomotion, ” , 2025. [13] W . Xie, C. Bai, J. Shi, J. Y ang, Y . Ge, W . Zhang, and X. Li, “Humanoid whole-body locomotion on narro w terrain via dynamic balance and reinforcement learning, ” , 2025. [14] H. Xue, T . He, Z. W ang, Q. Ben, W . Xiao, Z. Luo, X. Da, F . Castañeda, G. Shi, S. Sastry , et al. , “Opening the sim-to-real door for humanoid pixel-to-action policy transfer, ” , 2025. [15] T . He, Z. W ang, H. Xue, Q. Ben, et al. , “VIRAL: V isual sim-to-real at scale for humanoid loco-manipulation, ” , 2025. [16] N. Mahmood, N. Ghorbani, N. F . T roje, et al. , “AMASS: Archiv e of motion capture as surface shapes, ” in ICCV , 2019. [17] A. Allshire, H. Choi, J. Zhang, et al. , “V isual imitation enables contextual humanoid control, ” , 2025. [18] Z. Shen, H. Pi, Y . Xia, Z. Cen, S. Peng, Z. Hu, H. Bao, R. Hu, and X. Zhou, “W orld-grounded human motion recovery via gravity-vie w coordinates, ” in SIGGRAPH Asia 2024 Confer ence P apers , 2024. [19] Z. Chen, M. Ji, X. Cheng, et al. , “GMT: General motion tracking for humanoid whole-body control, ” , 2025. [20] Q. Liao, T . E. T ruong, X. Huang, Y . Gao, G. T evet, K. Sreenath, and C. K. Liu, “BeyondMimic: From motion tracking to versatile humanoid control via guided diffusion, ” , 2025. [21] Z. Zhang, J. Guo, C. Chen, J. W ang, C. Lin, et al. , “Track any motions under any disturbances, ” , 2025. [22] Z. Luo, Y . Y uan, T . W ang, C. Li, S. Chen, F . Castañeda, Z.-A. Cao, J. Li, D. Minor , Q. Ben, et al. , “SONIC: Supersizing motion tracking for natural humanoid whole-body control, ” , 2025. [23] W . Xie et al. , “KungfuBot: Physics-based humanoid whole-body control for learning highly-dynamic skills, ” in NeurIPS , 2025. [24] J. Han, W . Xie, et al. , “KungfuBot2: Learning versatile motion skills for humanoid whole-body control, ” , 2025. [25] T . He, Z. Luo, W . Xiao, C. Zhang, et al. , “Learning human-to- humanoid real-time whole-body teleoperation, ” in IR OS , 2024. [26] T . He, Z. Luo, et al. , “OmniH2O: Universal and dexterous human-to- humanoid whole-body teleoperation and learning, ” in CoRL , 2024. [27] Y . Ze, Z. Chen, J. P . Araújo, et al. , “TWIST: T eleoperated whole-body imitation system, ” , 2025. [28] Y . Ze, S. Zhao, W . W ang, A. Kanazawa, R. Duan, P . Abbeel, G. Shi, J. W u, and C. K. Liu, “TWIST2: Scalable, portable, and holistic humanoid data collection system, ” , 2025. [29] K. Zhao et al. , “DartControl: A diffusion-based autoregressiv e motion model for real-time text-driven motion control, ” in ICLR , 2025. [30] Y . W ang, J. Lin, A. Zeng, et al. , “PhysHOI: Physics-based imitation of dynamic human-object interaction, ” , 2023. [31] Y . Shao et al. , “LangWBC: Language-directed humanoid whole-body control via end-to-end learning, ” , 2025. [32] A. Radford, J. W . Kim, et al. , “Learning transferable visual models from natural language supervision, ” , 2021. [33] Y . Xie, V . Jampani, L. Zhong, et al. , “OmniControl: Control any joint at any time for human motion generation, ” in ICLR , 2024. [34] M. Mittal et al. , “Isaac Lab: A gpu-accelerated simulation framew ork for multi-modal robot learning, ” , 2025. [35] A. R. Punnakkal, A. Chandrasekaran, et al. , “BABEL: Bodies, action and behavior with english labels, ” in CVPR , 2021. [36] A. Paszke, S. Gross, et al. , “PyT orch: An imperative style, high- performance deep learning library , ” , 2019. [37] E. T odorov , T . Erez, and Y . T assa, “MuJoCo: A physics engine for model-based control, ” in IR OS , 2012. [38] C. Guo, S. Zou, X. Zuo, et al. , “Generating div erse and natural 3d human motions from text, ” in CVPR , 2022. [39] G. T eam, R. Anil, S. Borgeaud, et al. , “Gemini: A family of highly capable multimodal models, ” , 2023.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment