Elements of Conformal Prediction for Statisticians

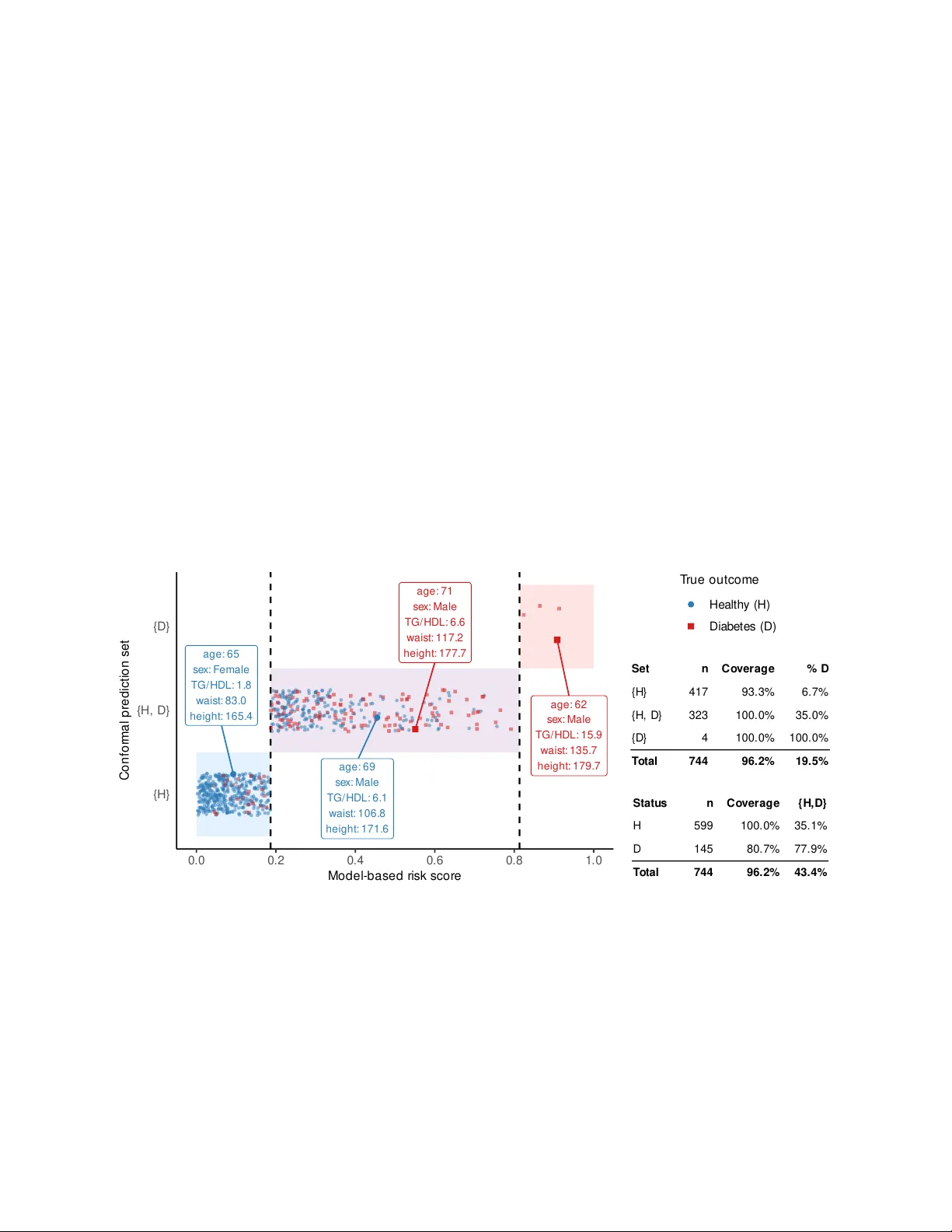

Predictive inference is a fundamental task in statistics, traditionally addressed using parametric assumptions about the data distribution and detailed analyses of how models learn from data. In recent years, conformal prediction has emerged as a rap…

Authors: Matteo Sesia, Stefano Favaro