Latent Style-based Quantum Wasserstein GAN for Drug Design

The development of new drugs is a tedious, time-consuming, and expensive process, for which the average costs are estimated to be up to around $2.5 billion. The first step in this long process is the design of the new drug, for which de novo drug des…

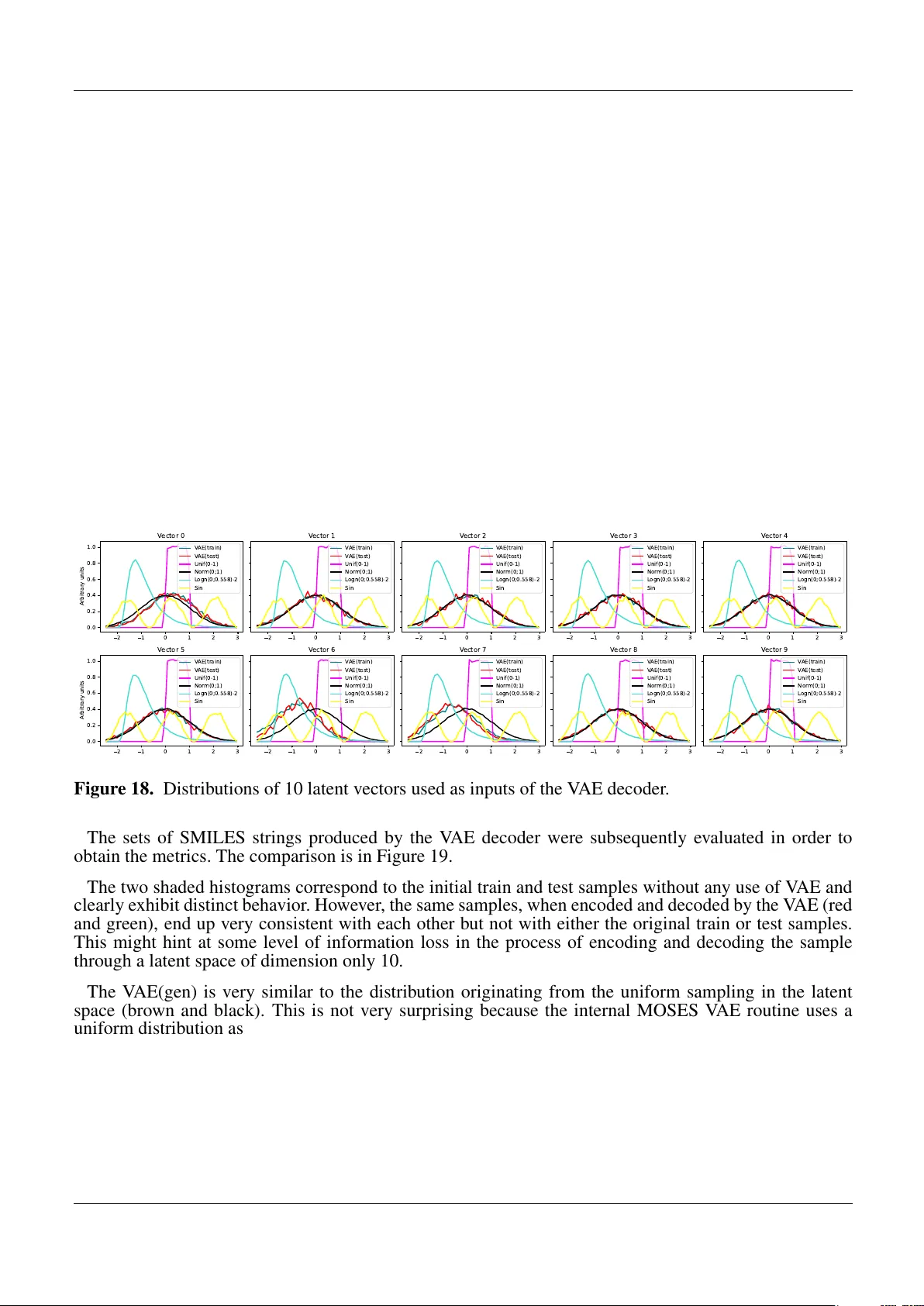

Authors: Julien Baglio, Yacine Haddad, Richard Polifka