Linear-Quadratic Gaussian Games with Distributed Sparse Estimation

Linear-quadratic Gaussian games provide a framework for modeling strategic interactions in multi-agent systems, where agents must estimate system states from noisy observations while also making decisions to optimize a quadratic cost. However, these …

Authors: Tianyu Qiu, Filippos Fotiadis, Xinjie Liu

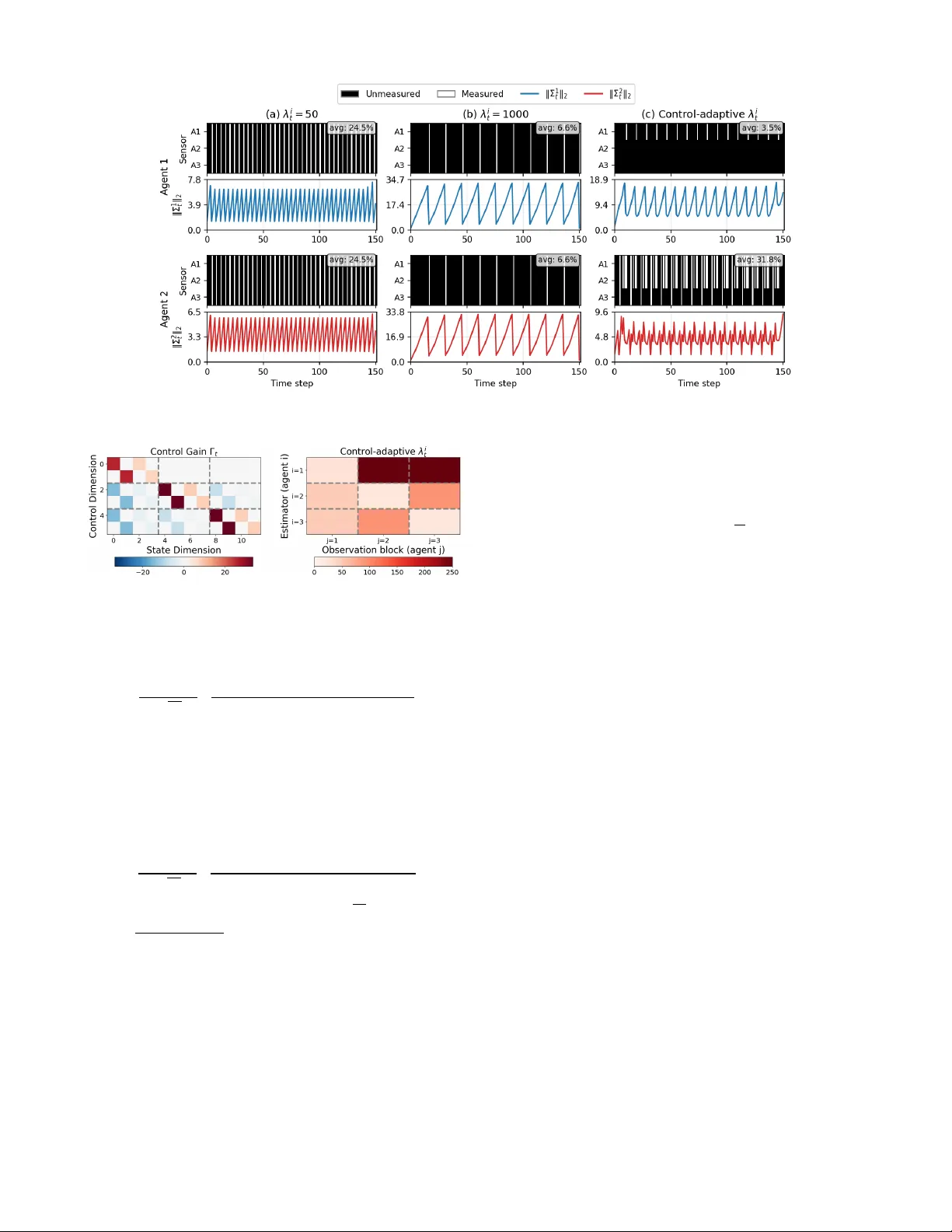

Tianyu Qiu¹, Filippos Fotiadis¹, Xinjie Liu¹, Christian Ellis¹,², Jesse Milzman², Wesley Suttle², Ufuk Topcu¹ and David Fridovich-Keil¹

Abstract: Linear-quadratic Gaussian games provide a framework for modeling strategic interactions in multi-agent systems, where agents must estimate system states from noisy observations while also making decisions to optimize a quadratic cost. However, these formulations usually require agents to utilize the full set of available observations when forming their state estimates, which can be unrealistic in large-scale or resource-constrained settings. In this paper, we consider linear-quadratic Gaussian games with sparse interagent observations. To enforce sparsity in the estimation stage, we design a distributed estimator that balances estimation effectiveness with interagent measurement sparsity via a group lasso problem, while agents implement feedback Nash strategies based on their state estimates. We provide sufficient conditions under which the sparse estimator is guaranteed to trigger a corrective reset to the optimal estimation gain, ensuring that estimation quality does not degrade beyond a level determined by the regularization parameters. Simulations on a formation game show that the proposed approach yields a significant reduction in communication resources consumed while only minimally affecting the nominal equilibrium trajectories.

I. Introduction

Dynamic games are widely used to model strategic interactions in multi-agent systems, where agents are assumed to share a common representation of state uncertainty. In many applications, however, agents operate under severe resource constraints. For instance, large-scale robot swarms may rely on limited onboard computation and energy resources, making it impractical to process or exchange all available measurements.
Sparsity in sensing design thus becomes essential for efficient resource allocation.

Recent works [1]–[3] have explored sparsity in deterministic games. These works propose sparsity-promoting game formulations to selectively prune less influential interactions while preserving effective overall agent performance. However, deterministic games simplify common multi-agent scenarios by ignoring process disturbances and noise, which are inevitable in real-world applications.

Footnote: This work was sponsored by the ARL under Cooperative Agreements W911NF-23-2-0011 and W911NF-25-2-0021, by NSF under grants 2211548, 2336840, and 2409535, by ONR under grant N00014-24-1-2797, and by AFOSR under grant FA9550-22-1-0403. ¹ T. Qiu, F. Fotiadis, X. Liu, C. Ellis, U. Topcu, and D. Fridovich-Keil are with the Oden Institute for Computational Engineering and Sciences, The University of Texas at Austin, TX, 78712, USA. (Email: {tianyuqiu, ffotiadis, xinjie-liu, utopcu, dfk}@utexas.edu, christian.ellis@austin.utexas.edu). ² C. Ellis, J. Milzman, and W. Suttle are with the DEVCOM Army Research Laboratory, MD, 20783-1138, USA. (Email: jesse.m.milzman.civ@army.mil, suttlewes@gmail.com).

Fig. 1. Feedback LQG game play with distributed sparse estimation in a three-robot formation game.

Classically, linear-quadratic Gaussian (LQG) games [4] extend dynamic game theory to stochastic settings, where agents interact under process and observation uncertainty. LQG games have been studied extensively and are fundamentally more complex than the deterministic LQ games studied in [2,5] due to the coupling between control and estimation. Specifically, the separation principle [6]–[8] does not generally hold for these games, and equilibrium strategies depend on the underlying information pattern. As a result, much of the literature on LQG games focuses on characterizing equilibrium solutions under various information structures [9]–[15].
However, these works have not investigated how sparsity in estimation can be incorporated in LQG games.

A natural way to introduce sparsity in LQG games is through their estimation stage. In particular, sparsity in estimation can be interpreted as a sensor selection problem, where agents selectively utilize certain observation components when forming their state estimates. Existing work on sensor selection and sparsity-promoting estimation has primarily focused on single-agent or centralized systems [16]–[18]. Related work on distributed estimation also exists [19]. However, the distributed estimation problem for multi-agent dynamic games brings two difficulties: i) agents interact strategically, and ii) agents are subject to distinct measurements, making classical sensor selection approaches inapplicable. Consequently, distributed sparse estimation design in stochastic games remains largely unexplored.

In this work, we study how sparsity can be incorporated into LQG games with individual observations. Towards this end, our contributions are as follows: i) We first design a distributed state estimator for LQG games that operates using each agent's local measurements while agents follow feedback Nash equilibrium strategies [2,5]. ii) Building on this estimator, we introduce a sparsity-promoting distributed estimation framework for LQG games based on group lasso, which promotes structured sparsity across interagent observation groups. iii) We provide sufficient conditions showing that if the estimation error covariance grows beyond a level determined by the regularization parameters, the sparse estimator is guaranteed to reset to the optimal estimation gain, thereby preventing unbounded degradation of estimation quality. iv) Finally, we validate the proposed framework through simulations on a three-robot formation game, as illustrated in Fig. 1, demonstrating the trade-off between estimation sparsity and trajectory performance in noisy multi-agent environments.

II. Linear-Quadratic Gaussian Games with Individual Observations

A. Linear-Quadratic Gaussian Games

We consider an $N$-player discrete-time finite-horizon linear-quadratic Gaussian (LQG) game. In this setting, each agent $i \in [N] \triangleq \{1, \dots, N\}$¹ is subject to the linear dynamics

$$x_{t+1}^i \triangleq A_t^i x_t^i + B_t^i u_t^i + w_t^i,$$

with state $x_t^i \in \mathbb{R}^{n_i}$, control input $u_t^i \in \mathbb{R}^{m_i}$, and system matrices $A_t^i \in \mathbb{R}^{n_i \times n_i}$ and $B_t^i \in \mathbb{R}^{n_i \times m_i}$. The process noise $w_t^i \in \mathbb{R}^{n_i}$ is Gaussian, $w_t^i \sim \mathcal{N}(0, W_t^i)$, with covariance $W_t^i \in \mathbb{R}^{n_i \times n_i}$, $W_t^i \succeq 0$. We further denote the joint state of all agents as $x_t \triangleq [x_t^{1\top}, \dots, x_t^{N\top}]^\top \in \mathbb{R}^{n}$ and the joint control input of all agents as $u_t \triangleq [u_t^{1\top}, \dots, u_t^{N\top}]^\top \in \mathbb{R}^{m}$, with joint state dimension $n \triangleq \sum_{i=1}^{N} n_i$ and joint control dimension $m \triangleq \sum_{i=1}^{N} m_i$.

The objective of each agent $i$ is to minimize a quadratic performance criterion over a finite time horizon $T$, subject to the joint dynamics of all agents. Agent $i$'s problem can be formulated as

$$\min_{u^i} \ \sum_{t=1}^{T} \tfrac{1}{2}\, \mathbb{E}\Big[ \big(x_t^\top Q_t^i + 2 q_t^{i\top}\big) x_t + \big(u_t^\top R_t^i + 2 r_t^{i\top}\big) u_t \Big] + \tfrac{1}{2}\, \mathbb{E}\Big[ \big(x_{T+1}^\top Q_{T+1}^i + 2 q_{T+1}^{i\top}\big) x_{T+1} \Big], \tag{1a}$$

$$\text{s.t.} \quad x_{t+1} = A_t x_t + B_t u_t + w_t, \tag{1b}$$

$$x_1 \sim \mathcal{N}(\bar{x}_1, \Sigma^{-}_{x,1}), \tag{1c}$$

with $A_t \triangleq \mathrm{blockdiag}(\{A_t^i\}_{i=1}^{N})$, $B_t \triangleq \mathrm{blockdiag}(\{B_t^i\}_{i=1}^{N})$ and joint process noise $w_t \triangleq [w_t^{1\top}, \dots, w_t^{N\top}]^\top \in \mathbb{R}^{n}$, following $w_t \sim \mathcal{N}(0, W_t)$, $W_t \triangleq \mathrm{blockdiag}(\{W_t^i\}_{i=1}^{N}) \in \mathbb{R}^{n \times n}$. The joint initial state $x_1$ follows a prior Gaussian distribution with mean $\bar{x}_1$ and covariance $\Sigma^{-}_{x,1} \succeq 0$. The $N$ agents' coupled versions of problem (1) form an LQG game.

B. Individual Observation Model

In LQG games, each agent $i$ usually does not have access to all agents' full states $x_t$.
Instead, it has access to some partial, noisy individual observations $y_t^i \in \mathbb{R}^{p_i}$, given by

$$y_t^i \triangleq C_t^i x_t + v_t^i, \quad v_t^i \in \mathbb{R}^{p_i}, \quad v_t^i \sim \mathcal{N}(0, V_t^i), \quad V_t^i \in \mathbb{R}^{p_i \times p_i}, \quad V_t^i \succeq 0, \tag{2}$$

where $C_t^i \in \mathbb{R}^{p_i \times n}$ denotes the individual observation matrix of agent $i$, and $v_t^i$ denotes the individual Gaussian observation noise. We also denote $v_t \triangleq [v_t^{1\top}, \dots, v_t^{N\top}]^\top \in \mathbb{R}^{p}$, following $v_t \sim \mathcal{N}(0, V_t)$, $V_t \triangleq \mathrm{blockdiag}(\{V_t^i\}_{i=1}^{N}) \in \mathbb{R}^{p \times p}$, $p = \sum_{i=1}^{N} p_i$.

¹ In this work, we denote $[Z] \triangleq \{1, \dots, Z\}$ for any positive integer $Z$.

In this paper, we assume that $y_t^i$ can be obtained from $P_i$ individual sensors and thus partitioned into $y_t^{i1}, \dots, y_t^{iP_i}$. It is also common that $C_t^i = I$, implying that $y_t^i$ may comprise interagent observations.

C. Control, Estimation, and Information Patterns

There is generally no closed-form solution for the feedback Nash equilibrium in LQG games with individual observations [20]. A common low-complexity approximation assumes that each agent $i$ employs the Nash control gains $\Gamma_t \triangleq [\Gamma_t^{1\top}, \cdots, \Gamma_t^{N\top}]^\top$ and $\alpha_t \triangleq [\alpha_t^{1\top}, \cdots, \alpha_t^{N\top}]^\top$ obtained from the LQG game with process noise but without measurement noise [2,5]. Each agent then estimates the joint state $\hat{x}_t^i$ from its individual observations $y_t^i$ with estimation gain $K_t^i$, and substitutes this estimate into its control input $u_t^i$, resulting in

$$u_t^i = -\Gamma_t^i \hat{x}_t^i - \alpha_t^i, \tag{3}$$

whose performance relies on the accuracy of $\hat{x}_t^i$. To characterize this accuracy, we define agent $i$'s individual estimation error $e_t^i$ and the joint estimation error across agents $e_t$ as

$$e_t^i \triangleq x_t - \hat{x}_t^i, \qquad e_t \triangleq [e_t^{1\top}, \cdots, e_t^{N\top}]^\top,$$

and their covariances as $\Sigma_t^i \triangleq \mathrm{Cov}(e_t^i)$, $\Sigma_t \triangleq \mathrm{Cov}(e_t)$. The central question we investigate in this paper is how to design the gains $K_t^i$ to enforce interagent measurement sparsity, while balancing that against control performance.
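As a minimal sketch of how (2) and (3) fit together, the Python (NumPy) snippet below draws one individual observation and evaluates agent $i$'s control from a state estimate. The toy dimensions, the random placeholder gains, and the helper names `observe` and `control` are illustrative assumptions and do not come from the paper. In particular, using the raw observation as the estimate is only a stand-in for the estimator designed in Section III.

```python
import numpy as np

def observe(C_i, x, V_i, rng):
    """Individual observation (2): y^i = C^i x + v^i with v^i ~ N(0, V^i)."""
    v = rng.multivariate_normal(np.zeros(C_i.shape[0]), V_i)
    return C_i @ x + v

def control(Gamma_i, alpha_i, x_hat_i):
    """Control law (3): u^i = -Gamma^i x_hat^i - alpha^i."""
    return -Gamma_i @ x_hat_i - alpha_i

rng = np.random.default_rng(0)
n, m_i = 4, 2                            # joint state and agent-i control sizes (assumed)
x = rng.standard_normal(n)               # true joint state
C_i = np.eye(n)                          # interagent case, C^i = I
V_i = 0.1 * np.eye(n)                    # observation noise covariance (assumed)
y_i = observe(C_i, x, V_i, rng)

Gamma_i = rng.standard_normal((m_i, n))  # placeholder for a Nash feedback gain
alpha_i = np.zeros(m_i)
x_hat_i = y_i                            # crude estimate, for illustration only
u_i = control(Gamma_i, alpha_i, x_hat_i)
e_i = x - x_hat_i                        # individual estimation error e^i
```

Since $\hat{x}_t^i = x_t - e_t^i$, the control error relative to perfect state feedback is exactly $\Gamma_t^i e_t^i$, which is why estimation accuracy enters the control performance directly.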
To study this design problem, we assume the following information pattern:

1) The system matrices $A_t$, $B_t$, the observation model $C_t^i$, the process and measurement noise covariances $W_t$, $V_t$, the control gains $\Gamma_t$, $\alpha_t$, and the distributed estimation gains $K_t^i$ are common knowledge among agents.
2) The individual observations $y_t^i$, the individual estimate $\hat{x}_t^i$ computed from $y_t^i$, and the feedback control $u_t^i$ computed from $\hat{x}_t^i$ are not shared among agents.

This information pattern captures how agents' decisions depend upon their limited access to information, particularly when agents' sensing of one another is sparse.

III. Distributed Sparse Estimation Gain Design

In this section, we design sparse estimation gains $K_t^i$ for each agent, subject to the aforementioned information pattern. We first derive the propagation of estimation error and covariance under the distributed information structure, then obtain the unconstrained optimal estimation gain as a reference solution, and finally formulate a group lasso optimization that sparsifies this gain by penalizing reliance on uninformative sensors.

A. Propagation of Joint Estimation Error and Covariance

Joint Control Input: A consequence of the assumed information pattern is that each agent $i$ cannot reconstruct the actual joint control $u_t$ from (3) applied to the joint system, because agents do not share their state estimates. Instead, each agent can only reconstruct an estimate $\hat{u}_t^i$ based on its estimate of the joint state $\hat{x}_t^i$. This estimate is given by

$$\hat{u}_t^i = \big[\hat{u}_t^{i1\top} \ \cdots \ \hat{u}_t^{iN\top}\big]^\top = -\Gamma_t \hat{x}_t^i - \alpha_t = -\Gamma_t (x_t - e_t^i) - \alpha_t, \tag{4}$$

where agent $i$'s actual control $u_t^i$ is the $i$-th block of $\hat{u}_t^i$, i.e., $u_t^i = \hat{u}_t^{ii}$.
Then, the actual joint control $u_t$ applied to the system is the concatenation of all agents' actual controls $u_t^i$, given as

$$u_t = \big[\hat{u}_t^{11\top} \ \cdots \ \hat{u}_t^{NN\top}\big]^\top = \sum_{j=1}^{N} E_j \hat{u}_t^j = -\alpha_t - \sum_{j=1}^{N} E_j \Gamma_t (x_t - e_t^j) = -\alpha_t - \Gamma_t x_t + \sum_{j=1}^{N} E_j \Gamma_t e_t^j, \tag{5}$$

with $E_i \in \mathbb{R}^{m \times m}$ being a block diagonal matrix whose $(i,i)$-th block is $I_{m_i}$ and whose other blocks are zero, so that $\sum_{j=1}^{N} E_j = I_m$.

Joint State Propagation: Given the joint control input (5), the joint state $x_{t+1}$ evolves according to

$$x_{t+1} = A_t x_t + B_t u_t + w_t = (A_t - B_t \Gamma_t) x_t + \sum_{j=1}^{N} B_t E_j \Gamma_t e_t^j - B_t \alpha_t + w_t. \tag{6}$$

Linear Estimation Propagation: Each agent $i$ then updates the estimate $\hat{x}_{t+1}^i$ according to its individual observations $y_{t+1}^i$ and its estimate of the control $\hat{u}_t^i$ via

$$\hat{x}_{t+1}^{i-} = A_t \hat{x}_t^i + B_t \hat{u}_t^i, \tag{7a}$$

$$\hat{x}_{t+1}^{i} = \hat{x}_{t+1}^{i-} + K_{t+1}^i \big( y_{t+1}^i - C_{t+1}^i \hat{x}_{t+1}^{i-} \big), \tag{7b}$$

where $K_t^i$ is to be designed in the following subsections. Moreover, subtracting (7a) and (7b) from (6), we obtain each agent $i$'s individual estimation error $e_{t+1}^i$ as

$$e_{t+1}^{i-} = x_{t+1} - \hat{x}_{t+1}^{i-} = A_t (x_t - \hat{x}_t^i) + B_t (u_t - \hat{u}_t^i) + w_t = A_t e_t^i + B_t \Big( \sum_{j=1}^{N} E_j \Gamma_t e_t^j - \Gamma_t e_t^i \Big) + w_t, \tag{8a}$$

$$e_{t+1}^{i} = x_{t+1} - \hat{x}_{t+1}^{i} = e_{t+1}^{i-} - K_{t+1}^i \big( C_{t+1}^i e_{t+1}^{i-} + v_{t+1}^i \big). \tag{8b}$$

Remark 1. A key observation from (8) is that, unlike classical state estimation where the error depends only on system dynamics and noise, the error in LQG games with individual observations is also driven by the mismatch between agent $i$'s estimate of the joint control $\hat{u}_t^i$ and the actual $u_t$, which consequently leads to a joint update for the estimation error.
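The algebra in (4) to (8a) can be checked numerically. The sketch below, in Python with NumPy, builds a small random instance and confirms that the closed-form expressions (5), (6), and (8a) agree with a direct evaluation. The identical agents, toy dimensions, random gains, and perturbed estimates are all assumptions made purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
N, n_i, m_i = 3, 2, 1                      # toy sizes (assumed)
n, m = N * n_i, N * m_i

# Block-diagonal joint dynamics built from identical agents (assumption).
A = np.kron(np.eye(N), rng.standard_normal((n_i, n_i)))
B = np.kron(np.eye(N), rng.standard_normal((n_i, m_i)))
Gamma = rng.standard_normal((m, n))        # stacked feedback gains (random placeholder)
alpha = rng.standard_normal(m)

# Selector E_j: identity on agent j's control block, zero elsewhere.
E = []
for j in range(N):
    E_j = np.zeros((m, m))
    E_j[j * m_i:(j + 1) * m_i, j * m_i:(j + 1) * m_i] = np.eye(m_i)
    E.append(E_j)

x = rng.standard_normal(n)
x_hat = [x + 0.1 * rng.standard_normal(n) for _ in range(N)]   # each agent's estimate
e = [x - xh for xh in x_hat]
w = rng.standard_normal(n)

# (4): each agent's reconstruction of the joint control.
u_hat = [-Gamma @ xh - alpha for xh in x_hat]
# Actual joint control: agent j contributes only its own block of u_hat[j].
u = sum(E[j] @ u_hat[j] for j in range(N))
coupling = sum(E[j] @ Gamma @ e[j] for j in range(N))
u_closed = -alpha - Gamma @ x + coupling                        # right-hand side of (5)

x_next = A @ x + B @ u + w
x_next_closed = (A - B @ Gamma) @ x + B @ coupling - B @ alpha + w   # (6)

i = 0
e_prior_i = x_next - (A @ x_hat[i] + B @ u_hat[i])              # x_{t+1} minus (7a)
e_prior_closed = A @ e[i] + B @ (coupling - Gamma @ e[i]) + w   # (8a)
```

The term `coupling - Gamma @ e[i]` is the control mismatch highlighted in Remark 1: it vanishes only when the other agents' estimation errors, weighted by their own gain blocks, happen to coincide with agent $i$'s.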
Covariance Update: Finally, stacking (8a) and (8b) over all agents, the joint estimation error $e_{t+1}$ and covariance $\Sigma_{t+1}$ evolve as

$$e_{t+1}^{-} = (\mathcal{A}_t + \mathcal{B}_t)\, e_t + w_t, \tag{9a}$$

$$e_{t+1} = (I_{Nn} - \mathcal{K}_{t+1})\, e_{t+1}^{-} - \mathbf{K}_{t+1} v_{t+1}, \tag{9b}$$

$$\Sigma_{t+1}^{-} = (\mathcal{A}_t + \mathcal{B}_t)\, \Sigma_t\, (\mathcal{A}_t + \mathcal{B}_t)^\top + W_t, \tag{9c}$$

$$\Sigma_{t+1} = (I_{Nn} - \mathcal{K}_{t+1})\, \Sigma_{t+1}^{-}\, (I_{Nn} - \mathcal{K}_{t+1})^\top + \mathbf{K}_{t+1} V_{t+1} \mathbf{K}_{t+1}^\top, \tag{9d}$$

where

$$\mathcal{A}_t = \mathrm{blockdiag}(\{A_t - B_t \Gamma_t\}_{i=1}^{N}), \qquad \mathcal{B}_t = \begin{bmatrix} B_t E_1 \Gamma_t & \cdots & B_t E_N \Gamma_t \\ \vdots & \ddots & \vdots \\ B_t E_1 \Gamma_t & \cdots & B_t E_N \Gamma_t \end{bmatrix},$$

$$\mathcal{K}_{t+1} = \mathrm{blockdiag}(\{K_{t+1}^i C_{t+1}^i\}_{i=1}^{N}), \qquad \mathbf{K}_{t+1} = \mathrm{blockdiag}(\{K_{t+1}^i\}_{i=1}^{N}).$$

Dimensionally, $w_t$ in (9a) and $W_t$ in (9c) must be read as stacked over the $N$ estimators: by (8a), every $e_{t+1}^{i-}$ is driven by the same process noise, so the stacked noise is $\mathbf{1}_N \otimes w_t$ with covariance $(\mathbf{1}_N \mathbf{1}_N^\top) \otimes W_t$. This relation explicitly shows how $e_{t+1}$ and $\Sigma_{t+1}$ are affected by the distributed estimation gains, which we will design sparsely in the following subsections.

B. Distributed Optimal Estimation Design

We now derive the unconstrained optimal estimation gain for each agent, which will serve as the reference solution for the sparse design in Section III-C. Specifically, we minimize the trace of agent $i$'s individual estimation error covariance $\Sigma_t^i$ with respect to its estimation gain $K_t^i$:

$$\Sigma_t^{i-} = F_i \Sigma_t^{-} F_i^\top, \tag{10a}$$

$$\Sigma_t^{i} = (I - K_t^i C_t^i)\, \Sigma_t^{i-}\, (I - K_t^i C_t^i)^\top + K_t^i V_t^i K_t^{i\top}, \tag{10b}$$

where $F_i \triangleq [0, \cdots, 0, I_n, 0, \cdots, 0]$ with its $i$-th block being $I_n$. Note that (10b) naturally holds since $\mathcal{K}_t$ and $\mathbf{K}_t$ are block diagonal matrices. By letting $\partial\, \mathrm{tr}(\Sigma_t^i) / \partial K_t^i = 0$, the stepwise optimal individual estimation gain is expressed as follows:

$$K_t^{i\star} \big( C_t^i \Sigma_t^{i-} C_t^{i\top} + V_t^i \big) = \Sigma_t^{i-} C_t^{i\top}, \qquad K_t^{i\star} = \Sigma_t^{i-} C_t^{i\top} \big( C_t^i \Sigma_t^{i-} C_t^{i\top} + V_t^i \big)^{-1}. \tag{11}$$

Note that each agent's estimation gain can be optimized independently. However, this gain generally processes all sensor observations, motivating a sparse relaxation.

C. Sparse Estimation via Group Lasso Optimization

We therefore seek a sparse approximation to $K_t^{i\star}$ that zeroes out columns corresponding to uninformative sensors, allowing each agent to rely on fewer observations while preserving estimation accuracy. Mirroring the partition of $y_t^i$ into $P_i$ sensor groups, we partition $K_t^i$ into $P_i$ block columns as $K_t^i = [K_t^i[1], \cdots, K_t^i[P_i]]$, where $K_t^i[\rho]$ is the estimation gain associated with sensor $\rho$. Subsequently, we formulate the following group lasso problem for all $i \in [N]$, $t \in [T+1]$:

$$\min_{K_t^i} \ \underbrace{\Big\| K_t^i \big( C_t^i \Sigma_t^{i-} C_t^{i\top} + V_t^i \big) - \Sigma_t^{i-} C_t^{i\top} \Big\|_F^2}_{L_{\text{Estimation}}} + \underbrace{\sum_{\rho \in [P_i]} \lambda_t^{i\rho} \big\| K_t^i[\rho] \big\|_F}_{L_{\text{Sparsity}}}, \tag{12}$$

where $\| \cdot \|_F$ is the matrix Frobenius norm. In this optimization problem, $L_{\text{Estimation}}$ is a least-squares cost that pulls the stepwise estimation gain $K_t^i$ toward the optimal individual estimation gain in (11). On the other hand, $L_{\text{Sparsity}}$ penalizes large norms of $K_t^i[\rho]$, with non-negative regularization constant $\lambda_t^{i\rho}$, encouraging agent $i$ not to rely on observation $y_t^{i\rho}$, i.e., the measurement from the $\rho$-th sensor. In what follows, we show that (12) can be cast as a convex conic program, solvable by off-the-shelf optimizers [21].

Proposition 1. Problem (12) is equivalent to a convex conic program with quadratic cost.

Proof. Using matrix vectorization [22], the group lasso problem (12) can be rewritten as

$$\min_{K_t^i} \ \Big\| \big( ( C_t^i \Sigma_t^{i-} C_t^{i\top} + V_t^i ) \otimes I \big)\, \mathrm{vec}(K_t^i) - \mathrm{vec}(\Sigma_t^{i-} C_t^{i\top}) \Big\|_2^2 + \sum_{\rho \in [P_i]} \lambda_t^{i\rho} \big\| \mathrm{vec}(K_t^i[\rho]) \big\|_2, \tag{13}$$

where $\otimes$ denotes the Kronecker product. Moreover, introducing the slack variables $s_t^{i\rho}$, it follows that the optimization (13) has the same optimal solution as

$$\min_{K_t^i,\, s_t^i} \ \Big\| \big( ( C_t^i \Sigma_t^{i-} C_t^{i\top} + V_t^i ) \otimes I \big)\, \mathrm{vec}(K_t^i) - \mathrm{vec}(\Sigma_t^{i-} C_t^{i\top}) \Big\|_2^2 + \sum_{\rho \in [P_i]} \lambda_t^{i\rho} s_t^{i\rho}, \quad \text{s.t.} \ \begin{bmatrix} \mathrm{vec}(K_t^i[\rho]) \\ s_t^{i\rho} \end{bmatrix} \in \mathcal{C}, \ \rho \in [P_i], \qquad \mathcal{C} \triangleq \{ (p, q) \mid q \in \mathbb{R},\ \|p\|_2 \le q \},$$

which is a convex conic program with a second-order cone constraint by definition [23]. □

Finally, note that (12) continuously optimizes the linear estimation gain matrices $K_t^i$ for all players and time steps. In practice, however, each agent $i$'s ultimate goal is a binary decision about whether to use sensor $\rho$ to obtain the corresponding measurement $y_t^{i\rho}$. Not using $y_t^{i\rho}$ in constructing $\hat{x}_t^i$ is equivalent to zeroing out $K_t^i[\rho]$, the $\rho$-th block column of the linear estimation gain $K_t^i$. We therefore introduce a ratio threshold $r_{th} \in (0, 1]$ and zero out $K_t^i[\rho]$ if its norm $\|K_t^i[\rho]\|_F$ is small enough compared to the optimal dense estimation gain $K_t^{i\star}[\rho]$ in (11), i.e.,

$$K_t^i[\rho] = \begin{cases} K_t^{i\star}[\rho], & \|K_t^i[\rho]\|_F \ge r_{th}\, \|K_t^{i\star}[\rho]\|_F, & \text{[reset]} \\ 0, & \text{otherwise}. & \text{[zero-out]} \end{cases} \tag{14}$$

The resulting procedure is summarized in Algorithm 1.

Algorithm 1: LQG Game with Sparse Distributed Estimation
Input: System parameters $\Sigma^{-}_{x,1}$, $(A_t, B_t, W_t)_{t=1}^{T}$, $(\{C_t^i\}_{i=1}^{N}, V_t)_{t=1}^{T+1}$; deterministic feedback Nash strategy $(\Gamma_t, \alpha_t)_{t=1}^{T}$; regularization levels $(\{\lambda_t^{i\rho}\}_{\rho=1}^{P_i})_{i=1,\, t=1}^{N,\, T+1}$.
Output: Sparse individual estimation gains $\{K_t^i\}_{i=1,\, t=1}^{N,\, T+1}$.
1: for $t = 1 : T$ do
2:   for $i = 1 : N$ do
3:     $K_t^i \leftarrow$ (12) // Group lasso optimization
4:     $y_t^i \leftarrow$ (2) // Individual noisy observation
5:     $\hat{x}_t^i \leftarrow$ (7) // Distributed sparse estimation
6:     $\hat{u}_t^i \leftarrow$ (4) // Feedback control estimation
7:   end for
8:   $u_t \leftarrow$ (5) // Actual feedback joint control
9:   $x_{t+1} \leftarrow$ (6) // System update with process noise
10: end for

D. Game-Theoretic Control-Adaptive Regularization

For the remainder of this section, we specialize to the interagent measurement case, where each sensor directly measures a specific agent's state ($C_t^i = I$).
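In this interagent setting, block column $j$ of $K_t^i$ multiplies only the innovation of $y_t^{ij}$, so zeroing it is the same as never reading agent $j$'s measurement. The Python (NumPy) sketch below illustrates this with the update (7b) under $C_t^i = I$. The dimensions, the random placeholder gain, and the helper name `update` are assumptions for illustration and do not represent a gain produced by (12).

```python
import numpy as np

rng = np.random.default_rng(2)
N, n_i = 3, 4                              # toy sizes (assumed)
n = N * n_i
# Columns of K^i that belong to the measurement of agent j.
blocks = [np.arange(j * n_i, (j + 1) * n_i) for j in range(N)]

K = rng.standard_normal((n, n))            # placeholder gain, not a designed one
K[:, blocks[2]] = 0.0                      # agent i stops observing the third agent

def update(K, x_prior, y):
    """Measurement update (7b) with C^i = I."""
    return x_prior + K @ (y - x_prior)

x_prior = rng.standard_normal(n)           # predicted joint state
y = rng.standard_normal(n)                 # stacked observations [y^{i1}; y^{i2}; y^{i3}]

y_changed = y.copy()
y_changed[blocks[2]] += 100.0              # corrupt only the unused measurement

x_hat = update(K, x_prior, y)
x_hat_changed = update(K, x_prior, y_changed)
```

Here `x_hat` and `x_hat_changed` coincide, so the sensor behind the zeroed block column never needs to be queried. Every other block column still contributes to the estimate of the full joint state.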
In the interagent case, each measurement $y_t^{ij}$ is agent $i$'s noisy observation of agent $j$. In this setting, the sparse estimation framework directly determines which agents each agent observes, making the connection between estimation sparsity and interagent communication explicit.

Since the sparsity decision now corresponds to which agents to observe, the regularization $\lambda_t^{ij}$ should reflect how much agent $i$'s control depends on agent $j$'s state. This dependency is captured by $\Gamma_t^i[j]$, the $j$-th block of the feedback gain. We thus propose the following control-adaptive regularization:

$$\lambda_{t+1}^{ij} = \begin{cases} \dfrac{L_1}{\|\Gamma_t^i[j]\|_F}, & \|\Gamma_t^i[j]\|_F \neq 0, \\[2mm] L_2, & \text{otherwise}, \end{cases} \tag{15}$$

where $\Gamma_t^i[j]$ is the $j$-th block of $\Gamma_t^i$, and $L_1, L_2 > 0$ are tuning parameters. When agent $i$'s control depends strongly on agent $j$ (large $\|\Gamma_t^i[j]\|_F$), the regularization is low and the observation is more likely to be retained. When the dependency is weak, the regularization is high, and when it is absent ($\|\Gamma_t^i[j]\|_F = 0$), $\lambda_t^{ij}$ defaults to $L_2$, promoting sparsification.

E. Covariance-Sparsity Trade-Off

A natural question is how much estimation accuracy is sacrificed by sparsifying the estimation gain. We address this by providing a sufficient condition on the regularization level under which the reset mechanism is guaranteed to trigger. This condition becomes easier to satisfy as the estimation error covariance grows, implying that the system self-corrects before estimation quality degrades too far.

Theorem 1. In the interagent measurement case, at time $t$, $K_t^i[\rho]$ will reset to $K_t^{i\star}[\rho]$ for all $\rho \in [P_i]$ if

$$\max_{\rho \in [P_i]} \lambda_t^{i\rho} \le \frac{2(1 - r_{th})}{\sqrt{P_i}} \cdot \frac{\sigma_{\min}(\Sigma_t^{i-}) \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2}{\sigma_{\max}(\Sigma_t^{i-}) + \sigma_{\max}(V_t^i)},$$

where $\sigma_{\min}(\cdot)$ and $\sigma_{\max}(\cdot)$ denote the minimum and maximum singular values of a matrix, respectively.

Proof. Case 1: Suppose $K_t^i[\rho] \neq 0$, $\forall \rho \in [P_i]$.
Then, the first-order optimality condition for problem (12) gives

$$2 (K_t^i - K_t^{i\star}) (\Sigma_t^{i-} + V_t^i)^2 = - \begin{bmatrix} \lambda_t^{i1} \dfrac{K_t^i[1]}{\|K_t^i[1]\|_F} & \cdots & \lambda_t^{iP_i} \dfrac{K_t^i[P_i]}{\|K_t^i[P_i]\|_F} \end{bmatrix} \ \Rightarrow \ K_t^i = K_t^{i\star} + K_\lambda,$$

where

$$K_\lambda = -\frac{1}{2} \begin{bmatrix} \lambda_t^{i1} \dfrac{K_t^i[1]}{\|K_t^i[1]\|_F} & \cdots & \lambda_t^{iP_i} \dfrac{K_t^i[P_i]}{\|K_t^i[P_i]\|_F} \end{bmatrix} (\Sigma_t^{i-} + V_t^i)^{-2}.$$

Let $\bar{\lambda}_t^i = \max_{\rho \in [P_i]} \lambda_t^{i\rho}$. Denoting the $\rho$-th block column of $K_\lambda$ as $K_\lambda[\rho]$, it follows that

$$\|K_\lambda[\rho]\|_F \le \|K_\lambda\|_F \le \frac{\sqrt{P_i}\, \bar{\lambda}_t^i}{2 \big[ \sigma_{\min}(\Sigma_t^{i-} + V_t^i) \big]^2}. \tag{16}$$

Since $K_t^i = K_t^{i\star} + K_\lambda$ holds block-column-wise, i.e., $K_t^i[\rho] = K_t^{i\star}[\rho] + K_\lambda[\rho]$, the reverse triangle inequality and $\sigma_{\min}(\Sigma_t^{i-} + V_t^i) \ge \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i)$ give

$$\|K_t^i[\rho]\|_F \ge \|K_t^{i\star}[\rho]\|_F - \|K_\lambda[\rho]\|_F \ge \|K_t^{i\star}[\rho]\|_F - \frac{\sqrt{P_i}\, \bar{\lambda}_t^i}{2 \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2}. \tag{17}$$

Next, note that for $K_t^i[\rho]$ to reset to $K_t^{i\star}[\rho]$, the rule (14) requires $\|K_t^i[\rho]\|_F \ge r_{th} \|K_t^{i\star}[\rho]\|_F$. Combining this rule with (17), a sufficient condition for $K_t^i[\rho]$ to reset to $K_t^{i\star}[\rho]$ is given by

$$\|K_t^{i\star}[\rho]\|_F - \frac{\sqrt{P_i}\, \bar{\lambda}_t^i}{2 \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2} \ge r_{th} \|K_t^{i\star}[\rho]\|_F \ \Leftrightarrow \ (1 - r_{th}) \|K_t^{i\star}[\rho]\|_F \ge \frac{\sqrt{P_i}\, \bar{\lambda}_t^i}{2 \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2}. \tag{18}$$

Denote $K_t^{i\star}[\rho] = K_t^{i\star} E_\rho$, where $E_\rho$ is a matrix that selects the $\rho$-th block column of $K_t^{i\star}$. Then, it follows that

$$\|K_t^{i\star}[\rho]\|_F \ge \|K_t^{i\star}[\rho]\|_2 \ge \sigma_{\min}(K_t^{i\star})\, \|E_\rho\|_2 = \sigma_{\min}(K_t^{i\star}) \ge \frac{\sigma_{\min}(\Sigma_t^{i-})}{\sigma_{\max}(\Sigma_t^{i-}) + \sigma_{\max}(V_t^i)}. \tag{19}$$

Combining (18)-(19), it follows that a sufficient condition for $K_t^i[\rho]$ to reset to $K_t^{i\star}[\rho]$ is

$$\bar{\lambda}_t^i \le \frac{2(1 - r_{th})}{\sqrt{P_i}} \cdot \frac{\sigma_{\min}(\Sigma_t^{i-}) \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2}{\sigma_{\max}(\Sigma_t^{i-}) + \sigma_{\max}(V_t^i)}. \tag{20}$$

Case 2: Suppose $K_t^i[\rho] = 0$ is the optimal solution of (12) for some $\rho$. Then, using the subgradient optimality condition in (12) with respect to $K_t^i[\rho]$, we obtain

$$\lambda_t^{i\rho} \ge 2 \big\| K_t^{i\star} (\Sigma_t^{i-} + V_t^i)^2 E_\rho \big\|_F \ge 2\, \sigma_{\min}\big( \Sigma_t^{i-} (\Sigma_t^{i-} + V_t^i) \big) \ge 2\, \sigma_{\min}(\Sigma_t^{i-}) \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big] > \frac{2(1 - r_{th})}{\sqrt{P_i}} \cdot \frac{\sigma_{\min}(\Sigma_t^{i-}) \big[ \sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i) \big]^2}{\sigma_{\max}(\Sigma_t^{i-}) + \sigma_{\max}(V_t^i)}, \tag{21}$$

where the last inequality follows from $\sqrt{P_i} \ge 1$, $1 - r_{th} < 1$, and $\dfrac{\sigma_{\min}(\Sigma_t^{i-}) + \sigma_{\min}(V_t^i)}{\sigma_{\max}(\Sigma_t^{i-}) + \sigma_{\max}(V_t^i)} \le 1$. This contradicts (20). Therefore $K_t^i[\rho] = 0$ cannot be optimal under (20), and (20) is sufficient to trigger a reset of $K_t^i[\rho]$ to $K_t^{i\star}[\rho]$. □

Fig. 2. The sensor usage and individual estimation covariance norm evolution of each robot (Row 1: R1, Row 2: R2; we omit R3 as it is symmetric to R2) in the three-robot formation game under constant regularization levels (a) $\lambda_t^i = 50$, (b) $\lambda_t^i = 1000$ and (c) game-theoretic control-adaptive $\lambda_t^i$.

Fig. 3. Game-theoretic control-adaptive regularization with respect to the feedback Nash control gain $\Gamma_t^i[j]$ in (15) for a three-robot formation game.

IV. Numerical Simulations

We validate our proposed sparse LQG games framework in a three-robot formation game, and investigate robots' trajectory performance under different regularization designs.

A. Simulation Setup

The formation game comprises a leading robot (R1) that aims to track a static reference path $p_{t,\mathrm{ref}}^1$, and following robots (R2 and R3) that aim to maintain a reference displacement $d_{\mathrm{ref}}^{ij}$ between them. Each robot follows double-integrator dynamics discretized with time step $\Delta t$, i.e.,

$$A_t^i = \begin{bmatrix} I_2 & \Delta t \cdot I_2 \\ 0 & I_2 \end{bmatrix}, \qquad B_t^i = \begin{bmatrix} \frac{\Delta t^2}{2} \cdot I_2 \\ \Delta t \cdot I_2 \end{bmatrix},$$

with state $x_t^i = [p_{t,x}^i, p_{t,y}^i, v_{t,x}^i, v_{t,y}^i]^\top$ encoding 2-D position and velocity and control $u_t^i = [a_{t,x}^i, a_{t,y}^i]^\top$ encoding 2-D acceleration.
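For concreteness, the double-integrator matrices above can be assembled as in the following Python (NumPy) sketch, using the time step $\Delta t = 0.05$ s reported for the experiments. The example state and acceleration, and the use of a Kronecker product to form the joint block-diagonal matrices for three identical robots, are illustrative choices rather than the authors' code.

```python
import numpy as np

dt = 0.05                                  # time step used in the experiments
I2, Z2 = np.eye(2), np.zeros((2, 2))

# Per-robot double integrator: state [p_x, p_y, v_x, v_y], control [a_x, a_y].
A_i = np.block([[I2, dt * I2],
                [Z2, I2]])
B_i = np.vstack([0.5 * dt**2 * I2,
                 dt * I2])

# Joint matrices blockdiag({A_i}), blockdiag({B_i}) for N identical robots.
N = 3
A = np.kron(np.eye(N), A_i)                # shape (12, 12)
B = np.kron(np.eye(N), B_i)                # shape (12, 6)

# One noise-free step for a single robot (example values are assumptions).
x_i = np.array([0.0, 0.0, 1.0, 0.0])       # at the origin, unit velocity along x
u_i = np.array([0.0, 2.0])                 # accelerate along y
x_next = A_i @ x_i + B_i @ u_i             # -> [0.05, 0.0025, 1.0, 0.1]
```

The position advances by $\Delta t \, v + \tfrac{1}{2}\Delta t^2 a$ and the velocity by $\Delta t \, a$, which is the exact discretization of a double integrator under piecewise-constant acceleration.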
We formulate each robot's objective as follows:

$$J^1 = \sum_{t=1}^{T} \mathbb{E}\Big[ \|p_t^1 - p_{t,\mathrm{ref}}^1\|_2^2 + \|u_t^1\|_2^2 \Big] + \mathbb{E}\Big[ \|p_{T+1}^1 - p_{T+1,\mathrm{ref}}^1\|_2^2 \Big],$$

$$J^i = \sum_{t=1}^{T} \sum_{j \neq i} \mathbb{E}\Big[ \|p_t^i - p_t^j - d_{\mathrm{ref}}^{ij}\|_2^2 + \|u_t^i\|_2^2 \Big] + \sum_{j \neq i} \mathbb{E}\Big[ \|p_{T+1}^i - p_{T+1}^j - d_{\mathrm{ref}}^{ij}\|_2^2 \Big], \quad i \in \{2, 3\},\ j \in \{1, 2, 3\},$$

with $T = 150$, $\Delta t = 0.05$ s, the reference displacements between robots $d_{\mathrm{ref}}^{21} = [-2; -2]$, $d_{\mathrm{ref}}^{31} = [2; -2]$, $d_{\mathrm{ref}}^{23} = -d_{\mathrm{ref}}^{32} = [4; 0]$, and the reference path for R1: $p_{k,\mathrm{ref}}^1 = [\,30 \cos(k \cdot \Delta t),\ 30 \sin(3k \cdot \Delta t)\,]$ for $k \in [T]$. We also set $\Sigma^{-}_{x,1} = I_3 \otimes \mathrm{blockdiag}(0.25^2 I_2, 1.2^2 I_2)$, $W_t^i = \mathrm{blockdiag}(0_{2 \times 2}, 1.2^2 I_2)$, $V_t^i = I_3 \otimes \mathrm{blockdiag}(0.25^2 I_2, 1.2^2 I_2)$ and $C_t^i = I_{12}$, $\forall i \in [3]$, identical across robots. For the control-adaptive regularization design in (15), we set $L_1 = 1000$ and $L_2 = 250$, and the resulting regularization is illustrated in Fig. 3.

B. Result Analysis

Fig. 4. Trajectory performance for the formation game with regularization levels (a) $\lambda_t^i = 0$, (b) $\lambda_t^i = 50$, (c) $\lambda_t^i = 1000$, and (d) control-adaptive $\lambda_t^i$.

We evaluate our distributed sparse estimation with game-theoretic control-adaptive regularization and compare it with static regularization levels $\lambda_t^i = 50$ and $1000$. We observe the following behaviors: i) Fig. 2 shows that sensor usage decreases as the regularization level increases, since $L_{\text{Sparsity}}$ becomes more dominant in (12). ii) Fig. 4 further shows that stronger regularization reduces sensing usage but degrades control performance because estimation error accumulates more quickly, highlighting the trade-off between control performance and estimation sparsity. iii) The estimation error increases when measurements are skipped and drops sharply when dense measurements are taken, suggesting that the covariance remains bounded under sparse estimation.
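Observation i) can be reproduced on a toy instance of problem (12) followed by rule (14). The paper solves (12) as a conic program with an off-the-shelf solver [21]. The Python sketch below instead swaps in plain proximal-gradient iterations with block soft-thresholding, a standard alternative for group lasso, so that it depends only on NumPy. The covariance values, the regularization levels, the threshold $r_{th} = 0.5$, and all function names are assumptions chosen for illustration and are unrelated to the experiment's settings.

```python
import numpy as np

def optimal_gain(Sigma_prior, V):
    """Dense gain (11) with C = I: K* = Sigma^- (Sigma^- + V)^{-1}."""
    return Sigma_prior @ np.linalg.inv(Sigma_prior + V)

def group_lasso_gain(Sigma_prior, V, lam, blocks, iters=500):
    """Proximal-gradient iterations for (12) with C = I and a common lambda."""
    M = Sigma_prior + V
    step = 1.0 / (2.0 * np.linalg.norm(M, 2) ** 2)     # 1 / Lipschitz constant of the gradient
    K = np.zeros_like(Sigma_prior)
    for _ in range(iters):
        G = K - step * 2.0 * (K @ M - Sigma_prior) @ M  # gradient step on L_Estimation
        for cols in blocks:                             # block soft-thresholding for L_Sparsity
            norm = np.linalg.norm(G[:, cols])
            scale = max(0.0, 1.0 - step * lam / norm) if norm > 0 else 0.0
            K[:, cols] = scale * G[:, cols]
    return K

def threshold_reset(K, K_star, blocks, r_th):
    """Rule (14): reset a block column to the dense gain or zero it out."""
    K_out = np.zeros_like(K_star)
    for cols in blocks:
        if np.linalg.norm(K[:, cols]) >= r_th * np.linalg.norm(K_star[:, cols]):
            K_out[:, cols] = K_star[:, cols]
    return K_out

# Toy instance (assumed): sensor 0 covers an uncertain block, sensor 1 a well-known one.
Sigma_prior = np.diag([4.0, 4.0, 0.5, 0.5])
V = np.eye(4)
blocks = [np.array([0, 1]), np.array([2, 3])]
K_star = optimal_gain(Sigma_prior, V)

sensors_used = []
for lam in (0.5, 5.0, 50.0):
    K_lasso = group_lasso_gain(Sigma_prior, V, lam, blocks)
    K_sparse = threshold_reset(K_lasso, K_star, blocks, r_th=0.5)
    sensors_used.append(sum(bool(np.any(K_sparse[:, cols] != 0)) for cols in blocks))
print(sensors_used)   # -> [2, 1, 0]
```

As the regularization grows, the sensor attached to the low-uncertainty block is dropped first and the high-uncertainty one last. This ordering is consistent with the intuition behind Theorem 1 that a larger prior covariance makes a reset easier to trigger.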
Compared with static regularization, the proposed control-adaptive regularization achieves a better balance between sensing resource allocation and control performance. As shown in Fig. 2, R1 saves sensing resources by prioritizing self-measurement, while R2 and R3 still frequently measure all robots. The sensor usage pattern also shows that R2 and R3 measure R1 more often than they measure each other, reflecting R1's leadership role in the game. Fig. 4 shows that this adaptive strategy allows R1 to track the reference path well with limited sensing, while R2 and R3 follow accordingly. Overall, compared with static regularization, control-adaptive regularization leads to a more effective allocation of inter-robot measurements in games with structured coupling among agents' objectives.

V. Conclusions and Future Work

We studied LQG games in which multiple agents estimate the joint system state using sparse individual observations. To promote sparsity in each agent's estimator, we proposed a group lasso formulation that zeros out groups of estimation gains, yielding a convex optimization problem. Future work includes an extension of the proposed approach to nonlinear dynamics and non-quadratic agent objectives, via iterative LQ game approaches such as [24]–[26].

References

[1] M. Chahine, R. Firoozi, W. Xiao, M. Schwager, and D. Rus, "Local non-cooperative games with principled player selection for scalable motion planning," in 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2023, pp. 880–887.
[2] X. Liu, J. Li, F. Fotiadis, M. O. Karabag, J. Milzman, D. Fridovich-Keil, and U. Topcu, "Approximate feedback Nash equilibria with sparse inter-agent dependencies," arXiv preprint, 2024.
[3] T. Qiu, E. Ouano, F. Palafox, C. Ellis, and D. Fridovich-Keil, "PSN Game: Game-theoretic prediction and planning via a player selection network," arXiv preprint arXiv:2505.00213, 2025.
[4] L. S. Shapley, "Stochastic games," Proceedings of the National Academy of Sciences, vol. 39, no. 10, pp. 1095–1100, 1953.
[5] D. Fridovich-Keil, Smooth Game Theory, 2024. [Online]. Available: https://clearoboticslab.github.io/documents/smooth_game_theory.pdf
[6] G. Welch, G. Bishop et al., "An introduction to the Kalman filter," 1995.
[7] J. Van Den Berg, P. Abbeel, and K. Goldberg, "LQG-MP: Optimized path planning for robots with motion uncertainty and imperfect state information," The International Journal of Robotics Research, vol. 30, no. 7, pp. 895–913, 2011.
[8] D. Bertsekas, Dynamic Programming and Optimal Control: Volume I. Athena Scientific, 2012, vol. 4.
[9] M. Pachter, "Static linear-quadratic Gaussian games," in Advances in Dynamic Games: Theory, Applications, and Numerical Methods. Springer, 2013, pp. 85–105.
[10] M. Pachter, "On linear-quadratic Gaussian dynamic games," in International Symposium on Dynamic Games and Applications. Springer, 2016, pp. 301–322.
[11] A. Gupta, A. Nayyar, C. Langbort, and T. Başar, "Common information based Markov perfect equilibria for linear-Gaussian games with asymmetric information," SIAM Journal on Control and Optimization, vol. 52, no. 5, pp. 3228–3260, 2014.
[12] A. Gupta, C. Langbort, and T. Başar, "Dynamic games with asymmetric information and resource constrained players with applications to security of cyberphysical systems," IEEE Transactions on Control of Network Systems, vol. 4, no. 1, pp. 71–81, 2016.
[13] D. Vasal and A. Anastasopoulos, "Signaling equilibria for dynamic LQG games with asymmetric information," in 2016 IEEE 55th Conference on Decision and Control (CDC). IEEE, 2016, pp. 6901–6908.
[14] N. Heydaribeni and A. Anastasopoulos, "Linear equilibria for dynamic LQG games with asymmetric information and dependent types," in 2019 IEEE 58th Conference on Decision and Control (CDC). IEEE, 2019, pp. 5971–5976.
[15] B. Hambly, R. Xu, and H. Yang, "Linear-quadratic Gaussian games with asymmetric information: Belief corrections using the opponents actions," arXiv preprint, 2023.
[16] V. Tzoumas, L. Carlone, G. J. Pappas, and A. Jadbabaie, "LQG control and sensing co-design," IEEE Transactions on Automatic Control, vol. 66, no. 4, pp. 1468–1483, 2020.
[17] F. Fotiadis and K. G. Vamvoudakis, "Input-output data-driven sensor selection," in 2024 IEEE 63rd Conference on Decision and Control (CDC). IEEE, 2024, pp. 4506–4511.
[18] F. Fotiadis and K. G. Vamvoudakis, "Input–output data-driven sensor selection for cyber-physical systems," Automatica, vol. 186, p. 112829, 2026.
[19] Y. Zhong, N. Yang, L. Huang, G. Shi, and L. Shi, "Sparse sensor selection for distributed systems: An l1-relaxation approach," Automatica, vol. 165, p. 111670, 2024.
[20] H. S. Witsenhausen, "A counterexample in stochastic optimum control," SIAM Journal on Control, vol. 6, no. 1, pp. 131–147, 1968.
[21] P. J. Goulart and Y. Chen, "Clarabel: An interior-point solver for conic programs with quadratic objectives," 2024.
[22] H. D. Macedo and J. N. Oliveira, "Typing linear algebra: A biproduct-oriented approach," Science of Computer Programming, vol. 78, no. 11, pp. 2160–2191, 2013.
[23] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge University Press, 2004.
[24] D. Fridovich-Keil, E. Ratner, L. Peters, A. D. Dragan, and C. J. Tomlin, "Efficient iterative linear-quadratic approximations for nonlinear multi-player general-sum differential games," in 2020 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2020, pp. 1475–1481.
[25] M. Wang, N. Mehr, A. Gaidon, and M. Schwager, "Game-theoretic planning for risk-aware interactive agents," in 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2020, pp. 6998–7005.
[26] W. Schwarting, A. Pierson, S. Karaman, and D. Rus, "Stochastic dynamic games in belief space," IEEE Transactions on Robotics, vol. 37, no. 6, pp. 2157–2172, 2021.
