Quadratic Surrogate Attractor for Particle Swarm Optimization

Authors: Maurizio Clemente, Marcello Canova

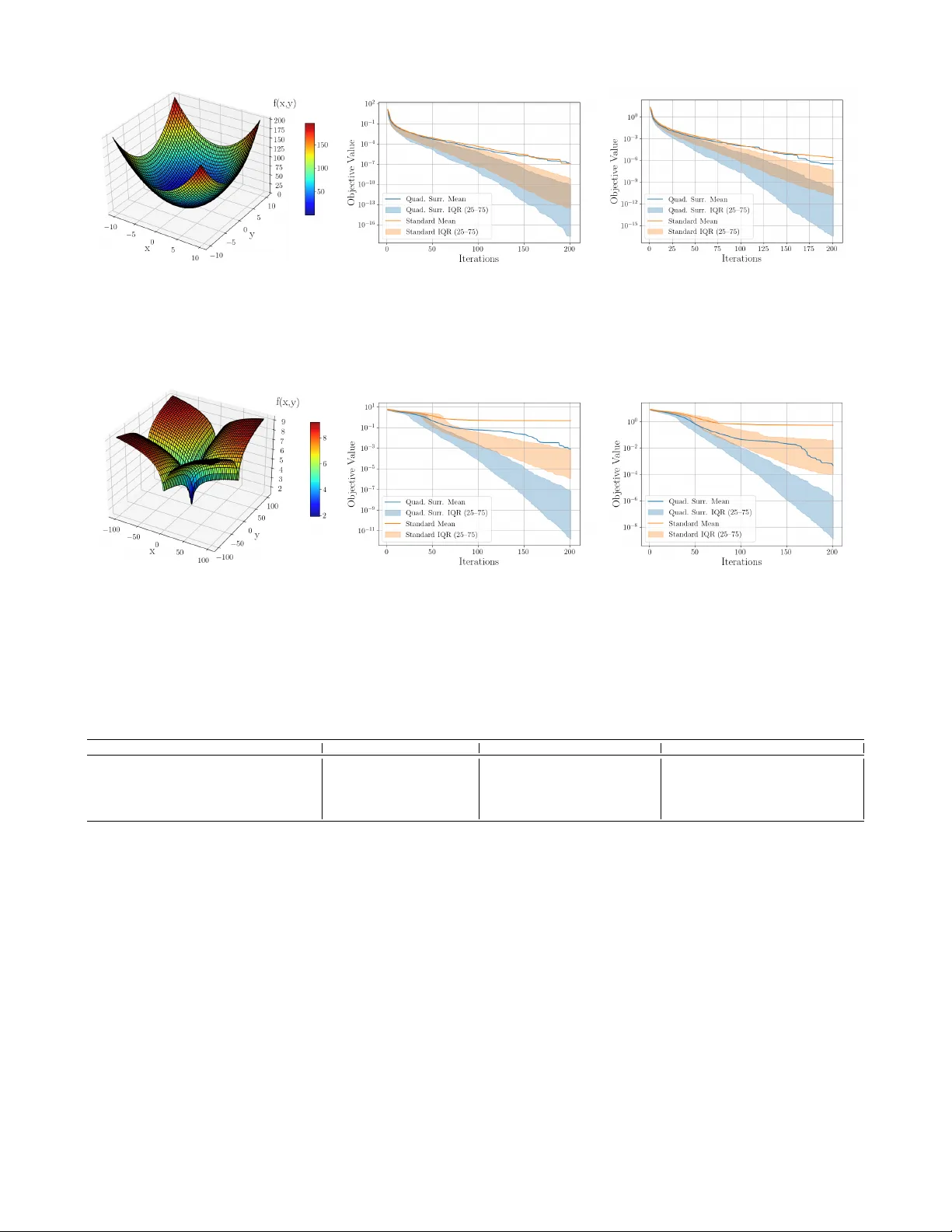

Abstract: This paper presents a particle swarm optimization algorithm that leverages surrogate modeling to replace the conventional global best solution with the minimum of an n-dimensional quadratic form, providing a better-conditioned dynamic attractor for the swarm. This refined convergence target, informed by the local landscape, enhances global convergence behavior and increases robustness against premature convergence and noise, while incurring only minimal computational overhead. The surrogate-augmented approach is evaluated against the standard algorithm through a numerical study on a set of benchmark optimization functions that exhibit diverse landscapes. To ensure statistical significance, 400 independent runs are conducted for each function and algorithm, and the results are analyzed based on their statistical characteristics and corresponding distributions. The quadratic surrogate attractor consistently outperforms the conventional algorithm across all tested functions. The improvement is particularly pronounced for quasi-convex functions, where the surrogate model can exploit the underlying convex-like structure of the landscape.

I. INTRODUCTION

Particle Swarm Optimization (PSO) algorithms are widely recognized as effective tools for solving general optimization problems across a broad range of fields [1]. Their flexibility and effectiveness stem from the use of simple stigmergic collaboration rules, rather than gradient information, to converge toward an optimum [2], which allows them to perform well even when the objective function is highly complex, non-differentiable, or non-smooth. In line with other nature-inspired meta-heuristics, swarm algorithms rely heavily on numerous function evaluations to thoroughly explore the search domain.
However, most of the information generated by these many function evaluations is typically discarded, retaining only each particle's personal best and the global best. In the proposed approach, this knowledge is instead used to construct a simple surrogate model of the function, improving the accuracy of the algorithm. This approach is more resilient to premature convergence to local optima, as the surrogate function is constructed from multiple distinct best-performing locations rather than being guided solely by a single global best, as in conventional algorithms. These properties are particularly valuable when addressing highly complex multi-objective problems, such as the integrated design optimization of lithium-ion batteries, where coupled thermo-mechanical and electrochemical phenomena must be considered simultaneously. When analyzing trade-offs between energy density and performance, the governing equations of high-fidelity models are strongly nonlinear and tightly coupled, creating a search space riddled with local minima, while the evaluation of each candidate solution remains computationally expensive.

[Fig. 1 diagram labels: i-th particle, i-th particle best, n-dim quadratic surrogate minimizer; vectors $\omega^k V_i^k$, $c_1^k r_1 (B_i^k - X_i^k)$, $c_2^k r_2 (P^k - X_i^k)$; positions $X_i^k$, $X_i^{k+1}$]

Fig. 1: Schematic layout of the particle position $X_i^k$ update algorithm. In the standard approach, particles move following their velocity $V_i^k$ and inertia $\omega$, the location of their own best evaluation $B_i^k$, and the overall best solution found. In the proposed method, the latter contribution is replaced by the minimum of a quadratic surrogate model $P^k$ constructed from multiple distinct best-performing locations.

Both authors are with the Center for Automotive Research, The Ohio State University, Columbus, OH 43212 USA (email: clemente.52@osu.edu).
Consequently, the quadratic surrogate attractor approach is especially well suited to such problems, where accuracy, efficiency, and robustness in navigating intricate landscapes are essential. Against this backdrop, this paper introduces the PSO algorithm schematically represented in Fig. 1, which leverages quadratic surrogate modeling to replace the location of the overall best with the minimum of a quadratic surrogate as one of the particle attractors.

Related Literature: PSO has been shown to successfully optimize a wide range of continuous functions [2], [3], balancing the individual and social attributes of particles in the swarm through exploration and exploitation mechanisms. The distributed nature of the approach enables rapid and efficient exploration of diverse regions of the design space, increasing the likelihood of discovering high-quality solutions in complex or high-dimensional landscapes [4]. Conversely, the exploitation mechanism leverages information gathered from the best-performing solutions to guide particles toward promising regions of the search space, typically using each particle's personal best and the global best as attractors. While straightforward to implement and commonly adopted, using the overall best as the attractor for the entire swarm can lead to premature convergence to local minima, particularly for functions that pose challenges for hill-climbing. Hybrid formulations employ surrogate-based optimization algorithms [5]–[7], such as sequential quadratic programming [8], to refine the search around the optimal agent and thereby enhance exploitation capability and solution accuracy [9]. For very high-dimensional problems, other authors have employed surrogate-assisted PSO approaches that incorporate radial basis function networks and Gaussian process regression, using information gathered from the swarm's search process [10], [11].
However, these methods involve cumbersome implementations, which limits their practicality and makes them primarily suitable for large-scale problems. To address this limitation, a meta-heuristic is introduced that synthesizes information from multiple distinct best-performing locations, providing a simple yet robust strategy against local optima while incurring only minimal additional computational cost. In this context, quadratic models have been applied in areas ranging from large-scale design optimization [12] to control systems [13], owing to their ability to provide tractable formulations that deliver relatively accurate and computationally efficient solutions [14]. Although numerous PSO variants have been developed over the years, to the best of the authors' knowledge, a meta-heuristic that leverages quadratic surrogate modeling to identify a better-conditioned attractor has not been explored.

Statement of Contribution: This paper introduces a PSO algorithm that incorporates a quadratic surrogate model to guide the search toward the optimum. In the proposed method, multiple distinct best-performing locations are fitted with an n-dimensional quadratic form, whose properties are exploited to determine its minimum analytically. During the particle position update phase, this minimum replaces the conventional global-best solution as a dynamic attractor, providing a refined convergence target informed by the local landscape geometry. The surrogate model is iteratively updated by revising the set of best-performing locations as the swarm explores the design space, progressively refining the approximation of the minimum region. By exploiting the information already obtained through function evaluations, the method improves the accuracy of the search direction without increasing the number of particles or iterations, which is particularly advantageous for problems with expensive objective evaluations.
Because the surrogate is constructed from multiple best-performing samples, its minimum reflects a consensus of the swarm rather than the position of an individual particle. This property reduces the risk of premature convergence to local minima and improves robustness in noisy optimization environments, while introducing only minimal computational overhead compared to conventional PSO.

Organization: The remainder of this paper is organized as follows. Section II presents the methodology, highlighting the key aspects of the different algorithms used. Section III compares the method with a standard PSO on various benchmark functions to evaluate performance. Finally, Section IV concludes the paper and provides an outlook on future research directions.

II. METHODOLOGY

In the proposed method, the numerous function evaluations performed by PSO are exploited to progressively refine information about the landscape of the objective function. The number of points required to construct the surrogate function depends on the problem dimension n. In general, a quadratic function in n variables contains one constant term $c \in \mathbb{R}$, n linear terms collected in the vector $a \in \mathbb{R}^n$, and $\frac{n(n+1)}{2}$ quadratic terms corresponding to the unique squared and cross-product coefficients of the symmetric matrix $B \in \mathbb{R}^{n \times n}$. Hence, the surrogate model takes the general form

$$\hat{f}(x) = c + a^\top x + x^\top B x. \tag{1}$$

Each sampled point provides one equation constraining these coefficients. Consequently, at least $N_Q$ distinct points in general position are required to uniquely determine the quadratic function:

$$N_Q = 1 + n + \frac{n(n+1)}{2} = \frac{(n+1)(n+2)}{2}. \tag{2}$$

For example, $N_Q = 6$ for $n = 2$ and $N_Q = 10$ for $n = 3$.

The best $N_Q$ points are determined through a comparison procedure that follows the function evaluation of each particle, described in Algorithm 1.
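The heap-based comparison procedure of Algorithm 1 can be sketched in Python as follows, for the minimization case. This is a minimal sketch that assumes the standard-library `heapq` module as the heap H (the paper only states that a heap is used); `BestArchive`, `num_quadratic_points`, and the tie-breaking counter are hypothetical names, not the API of the released implementation. Storing $-f$ in a min-heap keeps the worst retained point at the root, so a better candidate replaces it in $\mathcal{O}(\log N_Q)$.

```python
import heapq
import itertools


def num_quadratic_points(n: int) -> int:
    """Minimum number of interpolation points N_Q for an n-dimensional quadratic, eq. (2)."""
    return (n + 1) * (n + 2) // 2


class BestArchive:
    """Keep the N_Q best (lowest objective) points seen so far, for minimization.

    Entries are stored as (-f, counter, x) in a min-heap, so the root H[0]
    is the *worst* stored point and can be replaced in O(log N_Q).
    """

    def __init__(self, n: int):
        self.capacity = num_quadratic_points(n)
        self._heap = []
        self._counter = itertools.count()  # tie-breaker: points are never compared

    def update(self, x, fx: float) -> None:
        entry = (-fx, next(self._counter), tuple(x))
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, entry)
        elif fx < -self._heap[0][0]:  # improves upon the worst stored point
            heapq.heapreplace(self._heap, entry)

    def points(self):
        """Return the stored (x, f) pairs ordered best-first."""
        return [(x, -neg_f) for neg_f, _, x in sorted(self._heap, reverse=True)]
```

Returning the points best-first mirrors the ordering the text relies on for the fallback: the first point is the overall best among those stored. For maximization, the sign of the stored key would be flipped, as in Algorithm 1.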
Identifying these points requires the same number of function evaluations and only slightly more comparisons than selecting the conventional global best, except in the trivial case where the number of particles equals $N_Q$, in which no comparisons are needed. This comparison is implemented using a heap structure H, which enables efficient insertion and replacement of solutions.

Algorithm 1: Update of the set Q (best $N_Q$ locations)
Input: current best locations Q, new candidate $X_i^k$, number of stored points $N_Q$
Output: updated best locations Q
    Compute the objective function value $f(X_i^k)$
    if the archive does not exist then
        initialize an empty heap H
    if minimization then
        if $|H| < N_Q$ then
            insert $(-f(X_i^k), X_i^k)$ into H
        else if $f(X_i^k)$ improves upon the worst entry in H then
            replace the worst entry with $(-f(X_i^k), X_i^k)$
    else if maximization then
        if $|H| < N_Q$ then
            insert $(f(X_i^k), X_i^k)$ into H
        else if $f(X_i^k)$ improves upon the worst entry in H then
            replace the worst entry with $(f(X_i^k), X_i^k)$
    return Q (the points stored in H)

Although the order of the points does not affect the interpolation, it is enforced so that, if the interpolation fails because of a degenerate (e.g., collinear) point configuration, the method falls back to the overall best solution as the attractor. Once all the particles have been evaluated, the quadratic surrogate model of the objective function is constructed (or updated) using the set of sampled points stored in Q. Algorithm 2 outlines the procedure for determining the coefficients of the quadratic surrogate by solving the corresponding linear system and computing the minimizer

$$x_{min} = -\frac{1}{2} B^{-1} a, \tag{3}$$

which follows directly from setting the gradient of the quadratic surrogate, $\nabla \hat{f}(x) = a + 2Bx$, to zero. If the points are in a degenerate configuration (e.g., collinear), so that the interpolation system cannot be solved or the resulting matrix B is not invertible, the method falls back to the conventional approach and returns the overall best solution. This also occurs when fewer than $N_Q$ particles are available.
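The interpolation and minimization steps of Algorithm 2 and (3) can be sketched with NumPy as below. This is a minimal sketch, not the released implementation: `fit_quadratic` and `surrogate_minimizer` are hypothetical names, and returning `None` stands in for the fallback to the overall best. The column order follows Algorithm 2; because $x^\top B x$ contains $2 B_{ij} x_i x_j$ for $i \neq j$, each fitted cross-product coefficient is halved when filling the symmetric B. Note that `np.linalg.solve` only raises on exactly singular (or non-square) systems; a condition-number check would be a more robust degeneracy test in practice.

```python
import numpy as np


def fit_quadratic(X, f):
    """Interpolate f_hat(x) = c + a^T x + x^T B x through the rows of X (Algorithm 2).

    X has shape (N_Q, n) and f has shape (N_Q,). Column order: constant,
    linear terms x_i, then products x_i * x_j for i <= j.
    """
    X, f = np.asarray(X, dtype=float), np.asarray(f, dtype=float)
    n_pts, n = X.shape
    pairs = [(i, j) for i in range(n) for j in range(i, n)]
    M = np.column_stack(
        [np.ones(n_pts)]
        + [X[:, i] for i in range(n)]
        + [X[:, i] * X[:, j] for i, j in pairs]
    )
    # Raises LinAlgError if M is not square (n_pts != N_Q) or exactly singular.
    theta = np.linalg.solve(M, f)
    c, a = theta[0], theta[1 : n + 1]
    B = np.zeros((n, n))
    for coef, (i, j) in zip(theta[n + 1 :], pairs):
        if i == j:
            B[i, i] = coef  # x^T B x contains B_ii * x_i^2
        else:
            B[i, j] = B[j, i] = coef / 2.0  # ... and 2 * B_ij * x_i * x_j
    return c, a, B


def surrogate_minimizer(X, f):
    """x_min = -0.5 * B^{-1} a from eq. (3); None signals the fallback to the overall best."""
    try:
        _, a, B = fit_quadratic(X, f)
        return -0.5 * np.linalg.solve(B, a)
    except np.linalg.LinAlgError:
        return None
```

For an objective that is itself quadratic, six points in general position recover its minimizer exactly in 2D, while passing only five points triggers the fallback.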
In general, for $N_p$ particles, PSO has a computational cost of $\mathcal{O}(n\, n_i N_p C_{obj})$, where $n_i$ is the number of iterations and $C_{obj}$ is the cost of a single objective function evaluation. The additional cost introduced by the quadratic fitting step involves solving a dense linear system of size $N_Q$, which has a complexity of $\mathcal{O}(N_Q^3)$ [15]. Since the fit is repeated at every iteration, the overall computational cost becomes $\mathcal{O}\big(n\, n_i N_p C_{obj} + n_i N_Q^3\big)$. Consequently, for expensive objective function evaluations such as multi-physics models, for which the method is primarily intended, the additional quadratic-fit overhead remains comparatively small.

Algorithm 2: Interpolation and minimization of the quadratic surrogate in n dimensions
Input: set of $N_Q$ points Q, objective function f
Output: estimated surrogate minimizer $x_{min}$, function value $f_{min}$
    Initialize the coefficient matrix $M \in \mathbb{R}^{N_Q \times N_Q}$
    Set column index c ← 0
    Fill the first column of M with ones (constant term); increment c
    for i = 1 to n do
        Fill column c of M with coordinate i of all points in Q (linear term); increment c
    for i = 1 to n do
        for j = i to n do
            Fill column c of M with the product of coordinates i and j of all points in Q (quadratic term); increment c
    Gather the objective values $\mathbf{f} = [f(q_1), \dots, f(q_{N_Q})]^\top$ (already available from the particle evaluations)
    Solve the interpolation system $M\theta = \mathbf{f}$
    Extract the linear coefficients a from θ
    Construct the symmetric quadratic coefficient matrix B from θ (diagonal entries equal to the squared-term coefficients, off-diagonal entries equal to half the cross-product coefficients)
    if B is invertible then
        Compute the minimizer $x_{min} = -\frac{1}{2} B^{-1} a$
        Evaluate the actual objective value $f_{min} = f(x_{min})$
    else  // fallback if B is singular
        Set $x_{min}$ ← first (best) point in Q
        Set $f_{min}$ ← pre-computed objective value of that point
    return $x_{min}$, $f_{min}$

Once the location of the minimum and its actual (non-surrogate) function value $f_{min}$ are computed, if this value is lower than the overall best found by the particles, $x_{min}$ is used as an alternative attractor for the swarm when updating particle velocities, replacing the conventional global best solution (otherwise, the overall best is retained):

$$V_i^{k+1} = \omega^k V_i^k + c_1^k r_1 \left(B_i^k - X_i^k\right) + c_2^k r_2 \left(x_{min} - X_i^k\right), \tag{4}$$

and the position for the next iteration is subsequently updated as

$$X_i^{k+1} = X_i^k + V_i^{k+1}. \tag{5}$$

The coefficients $c_1$ and $c_2$ represent the particles' individual cognitive and social parameters, respectively, while $r_1$ and $r_2$ are random values drawn from a uniform distribution over [0, 1] ($\mathcal{U}[0,1]$). These stochastic components enhance the method's ability to avoid premature convergence to local optima while preserving strong local search capability [16]. Accepting the surrogate minimizer only when it improves upon the current global best acts as an implicit safeguard against non-convex surrogates: a stationary point that is a maximum or saddle point of the surrogate (i.e., B not positive definite) is used only if its actual objective value still improves on the global best. Positive definiteness of B is therefore not strictly enforced, but unhelpful stationary points are filtered out when minimizing. Due to the stochastic and derivative-free nature of PSO, establishing global optimality guarantees or classical convergence rates is challenging. Most theoretical analyses focus on stability properties and on the conditions under which particles converge to an equilibrium point. In practice, the quality of the obtained solution depends strongly on the objective function landscape and the algorithm parameters.
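One iteration of the update in (4) and (5), including the acceptance rule for the surrogate minimizer, can be sketched as follows. The parameter schedules (6)–(9), the stagnation safeguard (10)–(11), and the clipping rules described in the next paragraph are included as helpers, with Table I values as defaults. This is a minimal NumPy sketch under stated assumptions: $r_1$, $r_2$ are drawn per component and velocities are clipped per component (the paper specifies neither), and the lower bound `eps` on the denominator of γ is a hypothetical value. All function names are illustrative.

```python
import numpy as np


def schedules(k, K, w0=0.72984, c10=2.8, c20=2.05, vmax0=2.0):
    """Eqs. (6)-(9): parameters for iteration k+1 given the current iteration k (Table I defaults)."""
    w = w0 - k / (2.0 * K)
    c1 = c10 - k / K
    c2 = c20 + k / K
    vmax = vmax0 * np.exp(1.0 - k / K)
    return w, c1, c2, vmax


def choose_attractor(x_min, f_min, g_best, f_best):
    """Use the surrogate minimizer only if its actual value beats the global best."""
    if x_min is not None and f_min < f_best:
        return x_min
    return g_best


def stagnation_boost(w_i, f_now, f_past, tau=1.2, threshold=0.5, eps=1e-12):
    """Eqs. (10)-(11): multiply the inertia by tau when the relative change is small."""
    gamma = abs(f_now - f_past) / max(abs(f_past), eps)  # eps: assumed lower bound
    return tau * w_i if gamma < threshold else w_i


def pso_step(X, V, P_best, attractor, w, c1, c2, vmax, lo, hi, rng):
    """Eqs. (4)-(5) for all particles at once, with velocity and position clipping.

    X, V, P_best have shape (N_p, n); w is a scalar or an (N_p, 1) array of
    per-particle inertias. r1, r2 are drawn per component (an assumption).
    """
    r1 = rng.uniform(0.0, 1.0, size=X.shape)
    r2 = rng.uniform(0.0, 1.0, size=X.shape)
    V_new = w * V + c1 * r1 * (P_best - X) + c2 * r2 * (attractor - X)
    V_new = np.clip(V_new, -vmax, vmax)  # per-component velocity clipping (assumed)
    X_new = np.clip(X + V_new, lo, hi)  # keep particles inside the box
    return X_new, V_new
```

Keeping `choose_attractor` separate from `pso_step` reflects the replacement strategy: the social term always points at a single attractor, either the surrogate minimizer or the global best, never both.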
To promote exploitation over time and ensure stability, the weights of the attractors are adjusted as the iterations progress [17], [18]. In particular, the inertia ω and the individual cognitive parameter $c_1$ decay linearly, while the social parameter $c_2$ increases linearly, according to

$$\omega^{k+1} = \omega_0 - \frac{k}{2K}, \tag{6}$$

$$c_1^{k+1} = c_{1,0} - \frac{k}{K}, \tag{7}$$

$$c_2^{k+1} = c_{2,0} + \frac{k}{K}, \tag{8}$$

where k denotes the current iteration of the algorithm and K the maximum number of iterations. At the same time, the maximum velocity $v_{max}$ decays exponentially [19] as

$$v_{max}^{k+1} = v_{max,0} \cdot e^{\left(1 - \frac{k}{K}\right)}. \tag{9}$$

However, to counteract the risk that the decay of the inertia parameter may cause particles to become trapped in local minima, the exploration safeguard described in Section III-C of Kwok et al. [20] is implemented. Specifically, the objective value at a particle's current position is compared with the value at its position S iterations earlier. If the two values are too similar ($\gamma_i^k < 0.5$), the particle's inertia is multiplied by the coefficient τ, as expressed in the following equations:

$$\gamma_i^k = \frac{\left| f(X_i^k) - f(X_i^{k-S}) \right|}{\left| f(X_i^{k-S}) \right|}, \tag{10}$$

$$\omega_i^k \leftarrow \tau\, \omega_i^k. \tag{11}$$

A small lower bound is imposed in the computation of γ to avoid numerical ill-conditioning when the objective values approach zero. Finally, if the new position of a particle lies outside the optimization boundaries, the position is clipped. Similarly, if the velocity exceeds the maximum velocity $v_{max}$, it is also clipped.

Fig. 2: Ackley's function is shown in the left panel. The right panel compares the quadratic surrogate attractor with the standard algorithm over 400 independent runs of 200 iterations each. The solid line represents the mean across runs, while the shaded area denotes the inter-quartile range (25–75%).

Fig. 3: Griewank's function is shown in the left panel. The right panel compares the quadratic surrogate attractor with the standard algorithm over 400 independent runs of 200 iterations each.
The solid line represents the mean across runs, while the shaded area denotes the inter-quartile range (25–75%).

III. RESULTS

In this section, the effectiveness of the proposed algorithm is demonstrated through a numerical study on a set of benchmark optimization functions [21]. These functions exhibit diverse landscapes and impose varying levels of difficulty for iterative optimizers. A total of 400 independent test runs of 200 iterations each are conducted for every function–algorithm pair, and the results are evaluated based on their statistical characteristics, with the merits assessed through the corresponding result distributions. The performance of the quadratic surrogate minimum attractor algorithm is compared with that of a standard PSO, using the identical parameter settings reported in Table I. All optimizations have been performed on a laptop equipped with an Intel(R) Core(TM) i7-9750H CPU @ 2.60 GHz, without the use of parallel computing. The full implementation of the algorithm has been released as open-source code and can be accessed at: https://github.com/YAPSO-team/YAPSO.

First, the two methods are applied to Ackley's [22] and Griewank's [23] functions, shown in Figures 2 and 3, respectively. Ackley's function exhibits a large number of local minima surrounding the global minimum at (0, 0). Particles can easily become trapped in these local minima, highlighting the function's multimodal and challenging nature for global search methods. Griewank's function is characterized by a combination of a broad parabolic shape and superimposed cosine oscillations. Its regularly spaced local minima form a challenging but structured landscape for optimization algorithms searching for the global minimum at (0, 0).
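The paper does not state the formulas of these two benchmarks. The sketch below assumes the commonly cited standard forms (Ackley with $a = 20$, $b = 0.2$, $c = 2\pi$, and the usual Griewank scaling of 4000), which may differ from the exact variant used in the experiments; both have a global minimum of 0 at the origin, consistent with the description above.

```python
import numpy as np


def ackley(x, a=20.0, b=0.2, c=2 * np.pi):
    """Standard Ackley function (assumed form); global minimum f = 0 at the origin."""
    x = np.asarray(x, dtype=float)
    n = x.size
    term1 = -a * np.exp(-b * np.sqrt(np.sum(x**2) / n))  # broad exponential basin
    term2 = -np.exp(np.sum(np.cos(c * x)) / n)  # dense cosine ripples -> many local minima
    return term1 + term2 + a + np.e


def griewank(x):
    """Standard Griewank function (assumed form); global minimum f = 0 at the origin."""
    x = np.asarray(x, dtype=float)
    i = np.arange(1, x.size + 1)
    # Broad parabola minus a product of cosines -> regularly spaced local minima.
    return 1.0 + np.sum(x**2) / 4000.0 - np.prod(np.cos(x / np.sqrt(i)))
```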
The quadratic surrogate attractor approach performs better in both cases, demonstrating greater robustness on these rugged, multimodal landscapes and thereby addressing a critical challenge for these algorithms. The numerical performance of the algorithms across different functions and particle counts is reported in Table II. In both figures, the left panel portrays the function, while the right panel displays the solution accuracy achieved as a function of the number of iterations. Due to the logarithmic representation, the mean value may lie outside the inter-quartile range.

TABLE I: Weights and parameters used [3], [19], [20].

| $\omega_0$ | $c_{1,0}$ | $c_{2,0}$ | $v_{max,0}$ | K | S | τ |
|---|---|---|---|---|---|---|
| 0.72984 | 2.8 | 2.05 | 2 | 200 | 52 | 1.2 |

Fig. 4 (panels: Sphere function; 2D Sphere function, 6 particles; 3D Sphere function, 10 particles): The Sphere function is shown in the left panel. The central panel illustrates the algorithms' performance for the two-dimensional case with six particles, while the right panel presents the three-dimensional case with ten particles. In both cases, the quadratic surrogate attractor is compared with the standard algorithm over 400 independent runs of 200 iterations each. The solid line represents the mean across runs, while the shaded area denotes the inter-quartile range (25–75%).

Fig. 5 (panels: Flower function; 2D Flower function, 6 particles; 3D Flower function, 10 particles): The Flower function is shown in the left panel. The central panel illustrates the algorithms' performance for the two-dimensional case with six particles, while the right panel presents the three-dimensional case with ten particles. In both cases, the quadratic surrogate attractor is compared with the standard algorithm over 400 independent runs of 200 iterations each. The solid line represents the mean across runs, while the shaded area denotes the inter-quartile range (25–75%).

TABLE II: Comparison of the quadratic surrogate attractor (Q.S.) and the standard PSO (Std.) across benchmark functions.
Values report the mean, execution time, and inter-quartile range (IQR, 25–75%) over 400 independent runs of both algorithms for 200 iterations. Relative differences are computed with respect to the standard PSO; negative percentages denote a more accurate solution.

| Function | $N_p$ | Bounds | Mean Q.S. | Mean Std. | Rel. Diff. (mean) | Time Q.S. [s] | Time Std. [s] | Rel. Diff. (time) | IQR 25–75 Q.S. | IQR 25–75 Std. |
|---|---|---|---|---|---|---|---|---|---|---|
| Ackley 2D | 6 | [[-32.768, 32.768],[-32.768, 32.768]] | 1.307e-2 | 3.294e-1 | -96.03% | 91.27 | 84.41 | +8.12% | 1.112e-11 to 3.568e-7 | 1.037e-7 to 1.478e-4 |
| Griewank 2D | 6 | [[-600, 600],[-600, 600]] | 2.119e-1 | 7.927e-1 | -73.27% | 117.55 | 102.41 | +14.78% | 1.265e-12 to 1.300e-1 | 1.010e-8 to 2.610e-1 |
| Sphere 2D | 6 | [[-10, 10],[-10, 10]] | 1.182e-7 | 1.235e-7 | -4.292% | 67.19 | 60.32 | +11.38% | 1.791e-18 to 5.523e-11 | 2.838e-14 to 7.071e-10 |
| Sphere 3D | 10 | [[-10, 10],[-10, 10],[-10, 10]] | 3.122e-7 | 2.334e-6 | -86.62% | 75.09 | 67.59 | +11.10% | 3.306e-17 to 1.637e-10 | 1.525e-11 to 4.652e-8 |
| Flower 2D | 6 | [[-100, 100],[-100, 100]] | 8.045e-4 | 4.776e-1 | -99.83% | 76.43 | 69.21 | +10.43% | 1.501e-12 to 7.327e-8 | 1.132e-6 to 7.560e-4 |
| Flower 3D | 10 | [[-100, 100],[-100, 100],[-100, 100]] | 4.565e-4 | 5.374e-1 | -99.92% | 96.25 | 87.39 | +10.14% | 1.221e-9 to 2.168e-6 | 9.853e-5 to 3.932e-2 |

Figures 4 and 5 analyze the performance of the quadratic surrogate attractor approach in comparison with the standard PSO algorithm for the Sphere function

$$f(x) = \sum_{i=1}^{n} x_i^2, \tag{12}$$

and the Flower function

$$f(x) = \sum_{i=1}^{n} \log\left(|x_i| + 1\right), \tag{13}$$

a more challenging, quasi-convex function. The Sphere function is characterized by a simple convex quadratic shape centered at the origin. Its smooth landscape contains a single global minimum at the origin, making it a standard benchmark for evaluating the convergence speed and accuracy of optimization algorithms. The Flower function is characterized by logarithmic growth along each coordinate axis, producing a landscape with a sharp basin near the origin and gradually flattening slopes outward.
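The two functions in (12) and (13) can be written in a few lines, together with the relative-difference metric used in Table II. This sketch reads the Flower function as $\sum_i \log(|x_i| + 1)$, so that its minimum is 0 at the origin as stated in the text; the helper names are hypothetical. Computing $(\text{Q.S.} - \text{Std.})/\text{Std.}$ reproduces the tabulated percentages, for example -96.03% for the Ackley mean and +14.78% for the Griewank time.

```python
import numpy as np


def sphere(x):
    """Sphere function, eq. (12): convex, single minimum 0 at the origin."""
    x = np.asarray(x, dtype=float)
    return float(np.sum(x**2))


def flower(x):
    """Flower function, eq. (13), read as sum of log(|x_i| + 1); minimum 0 at the origin."""
    x = np.asarray(x, dtype=float)
    return float(np.sum(np.log(np.abs(x) + 1.0)))


def rel_diff(qs, std):
    """Relative difference of Q.S. with respect to Std., in percent (negative = Q.S. lower)."""
    return 100.0 * (qs - std) / std
```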
It also has a single global minimum at the origin, while its nonlinear curvature provides a distinct challenge compared to purely quadratic functions. To analyze the effect of dimensionality on the method, the two-dimensional and three-dimensional cases of these functions are considered. In the 3D case, ten particles are used, which is the minimum required to construct a three-dimensional surrogate ($N_Q = 10$ for $n = 3$ from (2)). It is observed that more complex functions benefit from this augmentation, whereas simpler functions (such as the 2D Sphere) show only a small improvement. In line with the results obtained from other, more computationally demanding surrogate-assisted approaches [11], the proposed quadratic surrogate attractor PSO algorithm consistently outperforms the standard PSO in terms of solution quality for a fixed number of iterations. The improvement is particularly pronounced in the case of quasi-convex functions, where the surrogate model is able to exploit the underlying convex-like structure of the landscape. Given enough particles to build the surrogate ($N_p \ge N_Q$), the approach is well suited even for higher-order functions, since any interior minimizer of a smooth function behaves approximately quadratically in its neighborhood, and the surrogate captures the predominant second-order behavior around the minimizer. Additionally, an objective function whose minimum lies on the boundary of the feasible set typically yields a surrogate minimizer lying either on the boundary or outside the feasible region, thereby directing the particles toward the boundary in both cases. Since the surrogate is quadratic and its gradient is analytically available, the constrained minimizer under box constraints could in principle be computed directly via the Karush–Kuhn–Tucker (KKT) conditions. This capability is not exploited in the present method, but it suggests a potential extension for a more rigorous treatment of boundary optima than relying on the unconstrained minimizer falling outside the feasible region.
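To illustrate the KKT extension mentioned above (which is not part of the proposed method), a box-constrained minimizer of the surrogate (1) can be found for small n by enumerating the $3^n$ assignments of each coordinate to free, lower bound, or upper bound. This is a minimal sketch assuming B is symmetric positive definite, so the KKT conditions identify the unique global minimizer; `box_qp_minimizer` is a hypothetical name, and a dedicated QP solver would be preferable beyond a handful of dimensions.

```python
import itertools

import numpy as np


def box_qp_minimizer(a, B, lo, hi, tol=1e-9):
    """Minimize c + a^T x + x^T B x subject to lo <= x <= hi (c does not matter).

    Enumerates all 3^n assignments of each coordinate to {free, lower, upper}
    and returns the first point satisfying the KKT conditions. Assumes B is
    symmetric positive definite, so that point is the unique global minimizer.
    """
    a, B = np.asarray(a, dtype=float), np.asarray(B, dtype=float)
    lo, hi = np.asarray(lo, dtype=float), np.asarray(hi, dtype=float)
    for status in itertools.product((0, -1, 1), repeat=a.size):
        s = np.array(status)  # 0: free, -1: at lower bound, +1: at upper bound
        free, fixed = s == 0, s != 0
        x = np.where(s == -1, lo, hi)  # free entries are overwritten below
        if free.any():
            # Stationarity on the free block: a_F + 2 B_FF x_F + 2 B_FA x_A = 0.
            rhs = -a[free] - 2.0 * B[np.ix_(free, fixed)] @ x[fixed]
            x[free] = np.linalg.solve(2.0 * B[np.ix_(free, free)], rhs)
        if np.any(x < lo - tol) or np.any(x > hi + tol):
            continue  # primal infeasible for this active set
        g = a + 2.0 * B @ x  # gradient of the quadratic surrogate
        # Dual feasibility: g >= 0 where x = lo, g <= 0 where x = hi.
        if np.all(g[s == -1] >= -tol) and np.all(g[s == 1] <= tol):
            return x
    raise ValueError("no KKT point found; B may not be positive definite")
```

For instance, with $B = I$ and $a = (-4, 0)$ the unconstrained minimizer (3) is $(2, 0)$, outside the box $[-1, 1]^2$, whereas the sketch returns the boundary point $(1, 0)$ directly.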
Finally, the effect of extending the velocity update rule by incorporating the surrogate-based attractor alongside the conventional global best is investigated. This modification degraded performance, likely due to conflicting attractor dynamics that reduced the swarm's ability to converge effectively. Replacing the global best with the surrogate-based attractor, rather than combining them, therefore appears to be the more effective strategy.

IV. CONCLUSIONS

This paper proposed a quadratic surrogate modeling approach to augment the PSO algorithm by replacing the overall best solution with a better-conditioned dynamic attractor for the swarm. The refined convergence target, informed by the local landscape, is determined by interpolating multiple distinct best-performing locations and analytically computing the minimum of the quadratic function. This method improves resilience against premature convergence and noisy environments while maintaining minimal computational overhead. To ensure statistical significance, 400 independent runs of 200 iterations were conducted for each function–algorithm pair, and their statistical characteristics and corresponding distributions were analyzed. The surrogate attractor approach demonstrated consistently higher accuracy than the standard algorithm in all cases considered, with only a modest increase in computational time (about 8% to 15% in Table II).

These findings open the way for future extensions, including investigations of the impact of limited iterations or termination conditions, a thorough study of the effect of swarm size, and comparisons with other advanced optimization algorithms.

REFERENCES

[1] R. Poli, "Analysis of the publications on the applications of particle swarm optimisation," Journal of Artificial Evolution and Applications, 2008.
[2] J. Kennedy and R. Eberhart, "Particle swarm optimization," in Proceedings of ICNN'95 - International Conference on Neural Networks, vol. 4, pp. 1942–1948, 1995.
[3] M. Clerc and J. Kennedy, "The particle swarm - explosion, stability, and convergence in a multidimensional complex space," IEEE Transactions on Evolutionary Computation, vol. 6, no. 1, pp. 58–73, 2002.
[4] M. R. Bonyadi and Z. Michalewicz, "Particle swarm optimization for single objective continuous space problems: A review," Evolutionary Computation, vol. 25, no. 1, 2017.
[5] L. Wang, S. Shan, and G. G. Wang, "Mode-pursuing sampling method for global optimization on expensive black-box functions," Engineering Optimization, vol. 36, no. 4, pp. 419–438, 2004.
[6] M. Kazemi, G. Wang, S. Rahnamayan, and K. Gupta, "Metamodel-based optimization for problems with expensive objective and constraint functions," Journal of Mechanical Design, vol. 133, no. 1, pp. 014505:1–7, 2011.
[7] N. V. Queipo, R. T. Haftka, W. Shyy, T. Goel, R. Vaidyanathan, and P. K. Tucker, "Surrogate-based analysis and optimization," Progress in Aerospace Sciences, vol. 41, no. 1, pp. 1–28, 2005.
[8] M. Qaraad, S. Amjad, N. Hussein, M. Farag, S. Mirjalili, and M. Elhosseini, "Quadratic interpolation and a new local search approach to improve particle swarm optimization: Solar photovoltaic parameter estimation," Expert Systems with Applications, vol. 236, p. 121417, Sep. 2023.
[9] Y. Zhang, S. Wang, and G. Ji, "A comprehensive survey on particle swarm optimization algorithm and its applications," Mathematical Problems in Engineering, 2015.
[10] Y. Tang, J. Chen, and J. Wei, "A surrogate-based particle swarm optimization algorithm for solving optimization problems with expensive black box functions," Engineering Optimization, vol. 45, pp. 557–576, May 2013.
[11] X. Cai, H. Qiu, L. Gao, C. Jiang, and X. Shao, "An efficient surrogate-assisted particle swarm optimization algorithm for high-dimensional expensive problems," Knowledge-Based Systems, p. 104901, Jul. 2019.
[12] M. Clemente, Footprint-aware Electric Vehicle Family Design. PhD thesis, Eindhoven University of Technology, Mechanical Engineering, Apr. 2025.
[13] A. Bemporad and M. Morari, "Robust model predictive control: A survey," in Robustness in Identification and Control (A. Garulli and A. Tesi, eds.), London: Springer London, pp. 207–226, 1999.
[14] S. Boyd and L. Vandenberghe, Convex Optimization. Cambridge University Press, 2004.
[15] G. H. Golub and C. F. Van Loan, Matrix Computations, 4th ed. Baltimore, MD, USA: Johns Hopkins University Press, 2013.
[16] H. Dai, C. D.D., and Z. Zheng, "Effects of random values for particle swarm optimization algorithm," Algorithms, vol. 11, no. 2, p. 23, 2018.
[17] Y. Shi and R. Eberhart, "A modified particle swarm optimizer," in 1998 IEEE International Conference on Evolutionary Computation Proceedings. IEEE World Congress on Computational Intelligence (Cat. No.98TH8360), pp. 69–73, 1998.
[18] G. Sermpinis, K. Theofilatos, A. Karathanasopoulos, E. F. Georgopoulos, and C. Dunis, "Forecasting foreign exchange rates with adaptive neural networks using radial-basis functions and particle swarm optimization," European Journal of Operational Research, vol. 225, no. 3, pp. 528–540, 2013.
[19] Y. He, W. J. Ma, and J. P. Zhang, "The parameters selection of PSO algorithm influencing on performance of fault diagnosis," MATEC Web Conf., vol. 63, p. 02019, 2016.
[20] N. M. Kwok, Q. P. Ha, D. K. Liu, G. Fang, and K. C. Tan, "Efficient particle swarm optimization: a termination condition based on the decision-making approach," in 2007 IEEE Congress on Evolutionary Computation, pp. 3353–3360, 2007.
[21] "Global optimization test functions index," 2013.
[22] D. H. Ackley, A Connectionist Machine for Genetic Hillclimbing. Boston, MA: Kluwer Academic Publishers, 1987.
[23] A. O. Griewank, "Generalized descent for global optimization," Journal of Optimization Theory and Applications, vol. 34, pp. 11–39, 1981.
