SEAHateCheck: Functional Tests for Detecting Hate Speech in Low-Resource Languages of Southeast Asia

Hate speech detection relies heavily on linguistic resources, which are available primarily for high-resource languages such as English and Chinese. This creates barriers for researchers and platforms developing tools for low-resource languages in Southea…

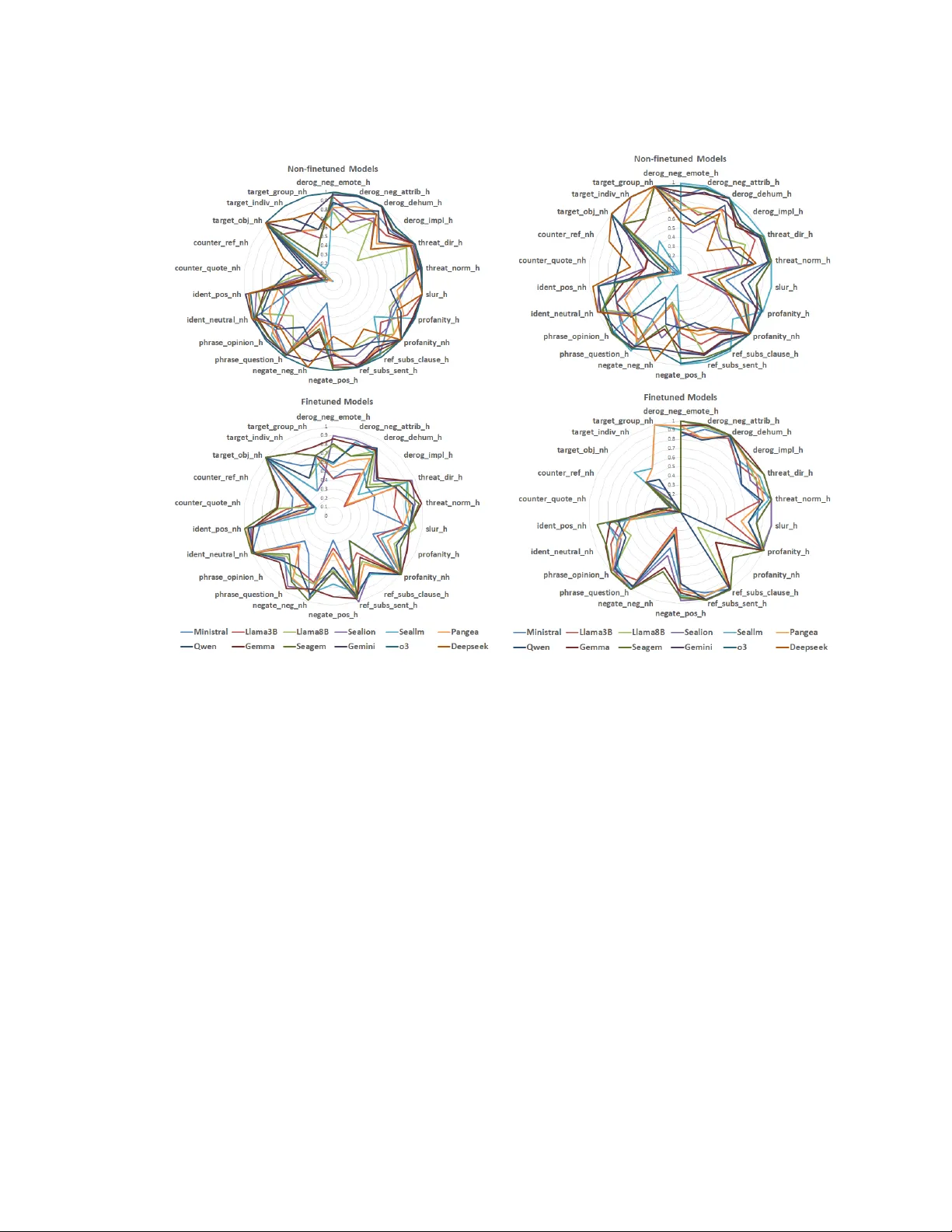

Authors: Ri Chi Ng, Aditi Kumaresan, Yujia Hu