Test-Time Adaptation via Many-Shot Prompting: Benefits, Limits, and Pitfalls

Test-time adaptation enables large language models (LLMs) to modify their behavior at inference without updating model parameters. A common approach is many-shot prompting, where large numbers of in-context learning (ICL) examples are injected as an …

Authors: Shubhangi Upasani, Chen Wu, Jay Rainton

Published as a conference paper at ICLR 2026

TEST-TIME ADAPTATION VIA MANY-SHOT PROMPTING: BENEFITS, LIMITS, AND PITFALLS

Shubhangi Upasani∗¹, Chen Wu¹, Jay Rainton¹, Bo Li¹, Urmish Thakker¹, Changran Hu¹, Qizheng Zhang²
¹ SambaNova Systems, Inc  ² Stanford University

ABSTRACT

Test-time adaptation enables large language models (LLMs) to modify their behavior at inference without updating model parameters. A common approach is many-shot prompting, where large numbers of in-context learning (ICL) examples are injected as an input-space test-time update. Although performance can improve as more demonstrations are added, the reliability and limits of this update mechanism remain poorly understood, particularly for open-source models. We present an empirical study of many-shot prompting across tasks and model backbones, analyzing how performance varies with update magnitude, example ordering, and selection policy. We further study Dynamic and Reinforced ICL as alternative test-time update strategies that control which information is injected and how it constrains model behavior. We find that many-shot prompting is effective for structured tasks where demonstrations provide high information gain, but is highly sensitive to selection strategy and often shows limited benefits for open-ended generation tasks. Overall, we characterize the practical limits of prompt-based test-time adaptation and outline when input-space updates are beneficial versus harmful.

1 INTRODUCTION

Test-time adaptation enables large language models (LLMs) to modify their behavior during inference without a change in model weights (Brown et al., 2020; Garg et al., 2022; Zhang et al., 2025). A widely used but underexplored form of such adaptation is prompt-based input-space updates, where task-relevant information is injected directly into the model's context.
With recent advances in long-context architectures (Dao et al., 2022; Su et al., 2024), this update mechanism has evolved from few-shot prompting to many-shot prompting, where hundreds or thousands of demonstrations can be provided at test time.

Many-shot prompting can be viewed as an increasingly aggressive test-time update: as more demonstrations are added, the model is exposed to a stronger, task-specific behavioral constraint. Prior work (Agarwal et al., 2024) shows that accuracy often improves as the number of in-context examples grows, particularly for structured tasks. In this paper, we examine many-shot prompting in a controlled study on the LLaMA model family, focusing on when and why input-space updates succeed or fail. Our key observations from our chosen testbed include the following:

• Many-shot prompting can act as an effective test-time update for structured tasks, with accuracy improving consistently up to a moderate update magnitude before saturating.
• The reliability of prompt-based test-time updates is highly sensitive to the ordering of in-context demonstrations, prompt structure, and example selection strategies.
• Test-time updates exhibit clear task-dependent success and failure modes. Although additional examples provide useful adaptation signals for classification and information extraction tasks, they give only small improvements for open-ended generation tasks such as machine translation.
• Structured updates such as Reinforced ICL exhibit similar saturation behavior as update magnitude increases.

Together, these findings highlight both the promise and the limits of prompt-based test-time adaptation.

∗ Corresponding author: shubhangi.upasani@gmail.com
2 BACKGROUND

Many-Shot Prompting (Update Magnitude): With the availability of long-context models, in-context learning (ICL) (Dong et al., 2024) has extended from a few demonstrations to many-shot prompting, where hundreds or thousands of examples can be included at test time. The effectiveness of such updates depends strongly on how the context is constructed: demonstration ordering, diversity, template format, and the phrasing of instructions all play an important role.

Dynamic ICL as Update Policy: Dynamic ICL (Rubin et al., 2022; Liu et al., 2022) constructs prompts on the fly by selecting examples for each query based on similarity, heuristics, or task metadata. It is an adaptive update policy that determines the information that is injected into the context.

Reinforced ICL as Update Structure: Reinforced ICL replaces the input-output (IO) demonstrations with reasoning traces, such as chain-of-thought (CoT) examples (Wei et al., 2022; Agarwal et al., 2024), to guide the behavior of the model at test time. We treat Reinforced ICL as a structured test-time update.

Figure 1 summarizes our view of prompt-based adaptation as a test-time update governed by update magnitude, update policy, and update structure, whose interplay determines whether additional context provides useful signal or introduces noise. Throughout this work, we use "test-time update" to refer to input-space adaptation via prompting, without implying any parameter-level modification. We give additional background on the above strategies in Appendix A.1.

3 EXPERIMENTAL SETUP

Tasks and benchmarks: We evaluate prompt-based test-time updates across a diverse set of tasks using benchmarks designed to stress long-context inference. For structured classification, we use the Banking77 dataset from LongICLBench (Li et al., 2024), which has 77 classes for the intent classification task.
This dataset enables controlled evaluation of many-shot prompting under extreme label spaces. To assess success and failure modes, we additionally include tasks from Evaluation Harness (Biderman et al., 2024) that span reasoning, information extraction, question answering, and machine translation.

Models: We evaluate two instruction-tuned backbones of differing capacity, LLaMA-3.1-8B-Instruct (Grattafiori et al., 2024) and LLaMA-3.3-70B-Instruct (Meta AI, 2024), to study how test-time updates scale with model size under identical prompting strategies. Instruction tuning ensures consistent interpretation of task instructions and demonstrations during many-shot adaptation. Base-model ablations, reported in Appendix A.4, perform poorly even at high update magnitudes, supporting our focus on instruction-tuned models.

Figure 1: A unified view of prompt-based test-time adaptation. Update design determines whether added context provides signal or noise.

4 RESULTS: BENEFITS AND LIMITS OF TEST-TIME UPDATES

4.1 MORE CONTEXT HELPS, UNTIL IT DOESN'T

We evaluate how the effectiveness of prompt-based test-time updates varies with update magnitude, defined by the total number of in-context demonstrations. For Banking77 with C = 77 classes, we use a per-class shot formulation: each prompt contains n demonstrations per class, resulting in N = n × C total demonstrations (e.g., n = 1 ⇒ N = 77, n = 5 ⇒ N = 385). Unless otherwise stated, we refer to n as the per-class shot count and N as the total number of demonstrations.

As shown in Fig. 2(a), as the update magnitude increases for LLaMA-3.1-8B-Instruct, accuracy improves steadily and then plateaus at approximately 50–70 shots per class. Beyond this point, additional demonstrations provide diminishing returns. This behavior
indicates a clear saturation regime: moderate input-space updates introduce useful task-specific signal, while aggressive updates yield diminishing further gains (Agarwal et al., 2024). We hypothesize that the saturation observed in many-shot prompting arises from the limited representational capacity of attention-based conditioning. Demonstrations influence predictions through their key–value representations in the context, but the resulting update is a weighted aggregation. Once sufficient demonstrations are present to identify the task pattern, additional examples contribute largely redundant representations, causing marginal gains to diminish; in very long prompts, attention competition can even lead to performance degradation.

To assess update reliability, we repeat each n-shot setting using 10 random demonstration orderings. Fig. 2(a) shows that accuracy varies by 2–3% across shuffles, demonstrating that many-shot prompting remains sensitive to demonstration ordering due to positional and contextual bias. Averaging over multiple random orders yields more stable results. We give further experimental details in Appendix A.2.

4.2 UPDATE POLICY MATTERS: RELEVANCE HELPS EARLY, DIVERSITY HELPS AT SCALE

Next, we study how update policy affects test-time adaptation under a fixed context budget (Fig. 2(b)) for LLaMA-3.1-8B-Instruct. Across all comparisons, we fix the total number of demonstrations N and vary only the update policy used to select them. We keep the prompt template consistent and exclude the query itself and same-text duplicates from the selected examples.

Label-wise vs. Cross-label: We construct prompts using a per-class formulation with n demonstrations per class, resulting in N = n × C total demonstrations for Banking77. In label-wise selection, we enforce balanced coverage by selecting exactly n demonstrations per class.
In cross-label selection, we select N demonstrations from the entire dataset, so label frequencies can be uneven.

Random vs. similarity: Within either grouping, random selection samples demonstrations uniformly across the entire dataset, while similarity selection retrieves nearest neighbors to the query in embedding space. More details are given in Appendix A.3 and A.5.

Comparing these policies, cross-label selection consistently outperforms label-wise selection, suggesting that enforcing per-label balance can reduce useful diversity by over-representing redundant examples. Cross-label selection benefits from exposure to greater contextual diversity, which improves generalization, whereas label balance forces the inclusion of many low-information examples that add little. Cross-label similarity-based selection is strong at small update magnitudes (high relevance) but degrades as N grows, whereas cross-label random selection scales more robustly with larger N (higher diversity). This reflects a bias-variance tradeoff in test-time updates: relevance-focused (similarity-based) policies help early but can over-concentrate the context as the update becomes aggressive, while diversity-focused (random) policies scale better. Our best setting is cross-label similarity at n = 1 (N = 77). This maximizes relevance per demonstration per class label, yielding strong task adaptation before redundancy degrades performance at larger scale.
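The four update policies compared above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `select_demos`, the dictionary fields (`text`, `label`, `emb`), the use of cosine similarity, and the inference of the class count C from the candidate pool are all hypothetical choices made for the example, and the embeddings are assumed to be precomputed.

```python
import math
import random
from collections import defaultdict


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def select_demos(pool, query, n, grouping, rule, seed=0):
    """Select demonstrations for one query (hypothetical helper).

    pool: list of dicts with 'text', 'label', 'emb'.
    query: dict with 'text' and 'emb'.
    grouping: 'label-wise' (n per class) or 'cross-label' (N = n * C overall).
    rule: 'random' or 'similarity'.
    """
    rng = random.Random(seed)

    # Exclude the query itself and same-text duplicates to avoid leakage.
    seen, candidates = {query["text"]}, []
    for ex in pool:
        if ex["text"] not in seen:
            seen.add(ex["text"])
            candidates.append(ex)

    def pick(items, k):
        if rule == "similarity":
            ranked = sorted(
                items, key=lambda ex: cosine(ex["emb"], query["emb"]), reverse=True
            )
            return ranked[:k]
        return rng.sample(items, min(k, len(items)))

    if grouping == "label-wise":
        # Balanced coverage: up to n demonstrations from every class.
        by_label = defaultdict(list)
        for ex in candidates:
            by_label[ex["label"]].append(ex)
        return [ex for label in sorted(by_label) for ex in pick(by_label[label], n)]

    # Cross-label: N = n * C demonstrations drawn globally; class counts are free.
    num_classes = len({ex["label"] for ex in candidates})
    return pick(candidates, n * num_classes)
```

With this sketch, cross-label similarity can return several demonstrations from the same class when the query sits close to one intent, which is the over-concentration effect described above; label-wise selection always returns one block per class.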
Since cross-label sampling does not enforce uniform class coverage, improvements in overall accuracy may partially reflect gains on frequent classes; future work could analyze per-class accuracy or macro-F1 to better understand the impact on tail classes. Additional results are included in Appendix A.3.

[Figure 2 panels, titled "Many-shot Prompting Test-Time Updates": (a) Banking77: Accuracy vs Update Magnitude (Mean ± Std), LLaMA-3.1-8B-Instruct; (b) Banking77: Dynamic ICL Update Policies (LLaMA-3.1-8B-Instruct), comparing Label-wise Random, Cross-label Random, and Cross-label Similarity; (c) Banking77: Model Capacity vs Update Magnitude, LLaMA-3.1-8B-Instruct and LLaMA-3.3-70B-Instruct; (d) GPQA Diamond: Reinforced ICL Scaling (LLaMA-3.3-70B-Instruct). Panels (a) to (c) plot accuracy (%) against per-class shots (n); panel (d) plots accuracy (%) against the number of reasoning shots.]

Figure 2: Scaling behavior of prompt-based test-time updates. (a) Many-shot accuracy vs. update magnitude. (b) Dynamic ICL update policies. (c) Model capacity effects. (d) Reinforced ICL scaling.

4.3 LARGER MODELS BENEFIT EARLIER, SMALLER MODELS CATCH UP

We further compare test-time update behavior across model sizes using LLaMA-3.1-8B-Instruct and LLaMA-3.3-70B-Instruct in Fig. 2(c) under identical settings for cross-label random selection on Banking77. At small to moderate update magnitudes, LLaMA-70B consistently outperforms the smaller model, indicating that higher-capacity models can more effectively exploit diverse in-context supervision.
As the update magnitude increases further, the performance gap narrows and the 8B model catches up, suggesting that a sufficiently large prompt can partially compensate for limited model capacity. Notably, the 70B model exhibits a performance drop at the largest update magnitude, consistent with over-conditioning. In contrast, the smaller model remains in a signal-accumulation regime and is less sensitive to this effect.

4.4 REINFORCED ICL EXHIBITS EARLY GAINS AND RAPID SATURATION

We next study Reinforced ICL as a form of structured test-time update, where chain-of-thought (CoT) based reasoning traces (Agarwal et al., 2024; Wei et al., 2022) are provided as demonstrations instead of direct input-output pairs. We describe the data generation pipeline used to obtain these samples in Appendix A.6. Using the GPQA Diamond (Rein et al., 2024) subset with LLaMA-3.3-70B-Instruct, we show in Fig. 2(d) that Reinforced ICL improves performance as the update magnitude increases to 4 demonstrations. Beyond this point, accuracy plateaus and then degrades. A plausible explanation is that early demonstrations provide a strong inductive bias, leading to rapid gains with only a few examples. As the number of reasoning traces increases, attention is increasingly divided across long chains of thought. This competition for attention reduces the effective influence of any single trace, causing performance to plateau or degrade despite additional context (Liu et al., 2024).

4.5 TASK STRUCTURE DETERMINES THE EFFECTIVENESS OF TEST-TIME UPDATES

Finally, we analyze when prompt-based test-time updates are effective across tasks (Table 5 in Appendix A.7) using LLaMA-3.3-70B-Instruct.
We find that many-shot prompting consistently improves performance on structure-heavy tasks with constrained outputs, including structured reasoning (e.g., DROP (Dua et al., 2019)) and information extraction benchmarks (e.g., FDA, SWDE (Hao et al., 2011)). In these settings, additional demonstrations provide high information gain by capturing relevant patterns. For tasks with constrained outputs (e.g., ARC-Challenge (Clark et al., 2018) and GSM8K (Cobbe et al., 2021)), performance improves sharply with a small number of demonstrations but quickly saturates, indicating that only limited contextual supervision is required to specify task behavior. In contrast, GPQA (multiple choice) exhibits only modest gains at small update magnitudes, and Table 5 shows these gains fading as more demonstrations are added. Finally, open-ended generation tasks such as machine translation (WMT16 (Bojar et al., 2016)) show consistent but small improvements with additional context, indicating that test-time updates offer limited benefits when task structures are already well captured during pretraining.

5 CONCLUSION

Our study highlights both the promise and the practical limits of many-shot prompting as a form of test-time adaptation. We show that increasing the number of in-context demonstrations can serve as an effective input-space update for structured tasks. Across tasks and models, performance is highly sensitive to update configuration, including demonstration ordering and selection policy. Dynamic ICL with cross-label selection produces more robust adaptation than static or label-wise prompting, underscoring the importance of update policy design at test time. At the same time, scaling benefits saturate beyond moderate update magnitudes. Finally, we find that many-shot prompting offers limited benefit for open-ended generation tasks, but works well for structured tasks.
Taken together, our results suggest that prompt-based test-time updates are most effective when demonstrations inject novel, task-specific information, and that careful control over update magnitude, structure, and policy is essential for reliable deployment. While our experiments focus on a single benchmark, the observed behaviors should be interpreted as preliminary empirical findings, and future work should validate whether these trends generalize across additional datasets and task domains.

REFERENCES

Rishabh Agarwal, Avi Singh, Lei Zhang, Bernd Bohnet, Luis Rosias, Stephanie Chan, Biao Zhang, Ankesh Anand, Zaheer Abbas, Azade Nova, et al. Many-shot in-context learning. Advances in Neural Information Processing Systems, 37:76930–76966, 2024.

Camel AI. Data generation module - camel ai documentation. https://docs.camel-ai.org/key_modules/datagen, 2025. Accessed: 2026-01-XX.

Stella Biderman, Hailey Schoelkopf, Lintang Sutawika, Leo Gao, Jonathan Tow, Baber Abbasi, Alham Fikri Aji, Pawan Sasanka Ammanamanchi, Sidney Black, Jordan Clive, et al. Lessons from the trenches on reproducible evaluation of language models. arXiv preprint arXiv:2405.14782, 2024.

Ondřej Bojar, Rajen Chatterjee, Christian Federmann, Yvette Graham, Barry Haddow, Matthias Huck, Antonio Jimeno Yepes, Philipp Koehn, Varvara Logacheva, Christof Monz, Matteo Negri, Aurelie Neveol, Mariana Neves, Martin Popel, Lucia Specia, Marco Turchi, and Duško Vitas. Findings of the 2016 conference on machine translation (WMT16). In Proceedings of the First Conference on Machine Translation (WMT16), pp. 131–198, Berlin, Germany, August 2016. Association for Computational Linguistics. URL https://aclanthology.org.

Tom Brown, Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, et al. Language models are few-shot learners.
Advances in Neural Information Processing Systems, 33:1877–1901, 2020.

Peter Clark, Isaac Cowhey, Oren Etzioni, Tushar Khot, Ashish Sabharwal, Carissa Schoenick, and Oyvind Tafjord. Think you have solved question answering? Try ARC, the AI2 reasoning challenge. arXiv preprint arXiv:1803.05457, 2018.

Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint, 2021.

Tri Dao, Dan Fu, Stefano Ermon, Atri Rudra, and Christopher Ré. FlashAttention: Fast and memory-efficient exact attention with IO-awareness. Advances in Neural Information Processing Systems, 35:16344–16359, 2022.

Qingxiu Dong, Lei Li, Damai Dai, Ce Zheng, Jingyuan Ma, Rui Li, Heming Xia, Jingjing Xu, Zhiyong Wu, Baobao Chang, et al. A survey on in-context learning. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, pp. 1107–1128, 2024.

Dheeru Dua, Yizhong Wang, Pradeep Dasigi, Gabriel Stanovsky, Sameer Singh, and Matt Gardner. DROP: A reading comprehension benchmark requiring discrete reasoning over paragraphs. arXiv preprint arXiv:1903.00161, 2019.

Shivam Garg, Dimitris Tsipras, Percy S Liang, and Gregory Valiant. What can transformers learn in-context? A case study of simple function classes. Advances in Neural Information Processing Systems, 35:30583–30598, 2022.

Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. The Llama 3 herd of models. arXiv preprint, 2024.

Ming Hao, Jyotish Madhavan, Pedro Szekely, and Alon Y. Halevy. Automatic web data extraction from large websites. In Proceedings of the VLDB Endowment, volume 4, pp. 1326–1337. VLDB, 2011.
Tianle Li, Ge Zhang, Quy Duc Do, Xiang Yue, and Wenhu Chen. Long-context LLMs struggle with long in-context learning. arXiv preprint arXiv:2404.02060, 2024.

Jiachang Liu, Dinghan Shen, Yizhe Zhang, William B Dolan, Lawrence Carin, and Weizhu Chen. What makes good in-context examples for GPT-3? In Proceedings of Deep Learning Inside Out (DeeLIO 2022): The 3rd Workshop on Knowledge Extraction and Integration for Deep Learning Architectures, pp. 100–114, 2022.

Nelson F Liu, Kevin Lin, John Hewitt, Ashwin Paranjape, Michele Bevilacqua, Fabio Petroni, and Percy Liang. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics, 12:157–173, 2024.

Meta AI. LLaMA-3.3-70B-Instruct model card, 2024. URL https://github.com/meta-llama/llama-models/blob/main/models/llama3_3/MODEL_CARD.md. Accessed: 2026-01-XX.

OpenAI. Gpt-oss-120b model documentation, 2024. URL https://platform.openai.com/docs/models/gpt-oss-120b. Accessed: 2026-01-XX.

David Rein, Betty Li Hou, Asa Cooper Stickland, Jackson Petty, Richard Yuanzhe Pang, Julien Dirani, Julian Michael, and Samuel R Bowman. GPQA: A graduate-level Google-proof Q&A benchmark. In First Conference on Language Modeling, 2024.

Ohad Rubin, Jonathan Herzig, and Jonathan Berant. Learning to retrieve prompts for in-context learning. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pp. 2655–2671, 2022.

Jianlin Su, Murtadha Ahmed, Yu Lu, Shengfeng Pan, Wen Bo, and Yunfeng Liu. RoFormer: Enhanced transformer with rotary position embedding. Neurocomputing, 568:127063, 2024.

Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems, 35:24824–24837, 2022.
Qizheng Zhang, Changran Hu, Shubhangi Upasani, Boyuan Ma, Fenglu Hong, Vamsidhar Kamanuru, Jay Rainton, Chen Wu, Mengmeng Ji, Hanchen Li, et al. Agentic context engineering: Evolving contexts for self-improving language models. arXiv preprint arXiv:2510.04618, 2025.

Table 1: Comparison of per-class shots with total demonstrations in the input prompt for Banking77

| n (per-class shots) | N (total demonstrations) |
|---|---|
| 1 | 77 |
| 2 | 154 |
| 3 | 231 |
| 4 | 308 |
| 5 | 385 |
| 50 | 3850 |
| 70 | 5390 |

A APPENDIX

A.1 ADDITIONAL BACKGROUND ON PROMPT-BASED TEST-TIME ADAPTATION

We provide additional context on how prompt-based adaptation mechanisms differ in the strength, structure, and selectivity of the update signal they introduce at test time.

In-context learning as test-time updates: In-context learning (ICL) refers to the ability of large language models to adapt their behavior at inference by conditioning on task-specific examples provided in the input, without modifying model parameters. From a test-time perspective, ICL constitutes an input-space update in which task information is injected directly into the context, enabling adaptation without training.

Update magnitude in many-shot prompting: Many-shot prompting increases the strength of the test-time update by injecting a larger volume of task-specific signal into the context. While additional demonstrations can improve performance by reinforcing task patterns, they also increase redundancy and noise, making update effectiveness sensitive to prompt composition. In practice, ordering, diversity, and formatting choices determine whether additional examples provide new information or overwhelm the model with repeated context.

Selectivity in Dynamic ICL: Dynamic ICL controls which information is injected into the prompt under a fixed context budget. By selecting demonstrations per query, dynamic policies trade off relevance and diversity.
Similarity-based selection prioritizes relevance to the input, while broader sampling increases coverage of the task distribution. This selectivity distinguishes dynamic ICL from static prompting, where the same update is applied uniformly across inputs.

Structure in Reinforced ICL: Reinforced ICL constrains test-time adaptation through structured reasoning traces rather than direct input–output mappings. By exposing intermediate reasoning steps, the update signal emphasizes how to solve a task instead of what answer to produce. As a result, performance depends not only on the number of demonstrations but also on the consistency and quality of the reasoning patterns being demonstrated.

A.2 ADDITIONAL EXPERIMENTAL DETAILS FOR UPDATE MAGNITUDE EXPERIMENTS

For the results shown in Fig. 2(a) on Banking77, we vary the per-class shot count n. The total number of demonstrations is given by N = n × C, where C = 77 is the number of classes. Table 1 illustrates how N scales with n. We stop at N = 5390 demonstrations, corresponding to the maximum supported context length of 128k tokens for LLaMA models.

To assess update stability, we repeat each setting across multiple random seeds, varying both demonstration ordering and selection, and report mean accuracy along with standard deviation.

A.3 DYNAMIC ICL SELECTION STRATEGIES

[Figure 3 plot, titled "Banking77: Dynamic ICL Update Policies (Fixed N)": accuracy (%) against per-class shots (n) for Label-wise Random, Cross-label Random, Cross-label Similarity, and Label-wise Similarity.]

Figure 3: Dynamic ICL Selection Strategies on Banking77

In Dynamic ICL, demonstrations are selected adaptively for each input query rather than using a fixed prompt. We instantiate Dynamic ICL by combining two axes: (i) grouping constraint and (ii) retrieval rule.
For a dataset with C classes and per-class shot parameter n, the prompt contains N = n × C demonstrations.

Grouping constraint:
• Label-wise: enforce exactly n demonstrations from each class (N = nC).
• Cross-label: select N demonstrations globally; class counts are unconstrained.

Retrieval rule:
• Random: uniform sampling from the entire dataset. The results of random selection are averaged across multiple seeds to ensure consistency.
• Similarity: top-N retrieval by embedding similarity to the query.

Combining the two axes above, we get four strategies used in our experiments:
• Cross-label Random Selection
• Label-wise Random Selection
• Cross-label Similarity Selection
• Label-wise Similarity Selection

This taxonomy allows us to isolate the effects of relevance, diversity, and label balance on test-time adaptation behavior. To prevent leakage while evaluating these selection strategies, we make sure that the retrieved examples contain neither the query nor same-text duplicates. We also keep the instruction and prompt templates constant across all runs. We add label-wise similarity as an additional comparison to Fig. 2(b) and show the full set of results in Fig. 3 for LLaMA-3.1-8B-Instruct.

We observe that label-wise similarity selection consistently outperforms label-wise random selection across all update magnitudes. This indicates that when label balance is enforced, selecting demonstrations that are semantically relevant to the query provides a stronger adaptation signal than random sampling within each class.

However, performance under label-wise similarity selection still degrades as the number of demonstrations increases (e.g., from 83.4% at n = 1 per-class shot to 67.4% at n = 70 per-class shots).

Table 2: Many-shot prompting for LLaMA-3.1-8B on Banking77 (accuracy in %).
Prompts contain N total demonstrations, constructed using n shots per class (N = n × C, C = 77).

| Per-class shots n | LLaMA-3.1-8B | LLaMA-3.1-8B-Instruct |
|---|---|---|
| 50 | 33.9 | 80.8 |
| 70 | 35.9 | 82.3 |

This suggests that enforcing per-label balance limits the effective diversity of the prompt: as the update becomes more aggressive, additional demonstrations become increasingly redundant, even when selected by similarity. Label-wise similarity outperforms cross-label similarity at higher n-shots because restricting retrieval to a fixed label avoids conflicting supervision: although examples become increasingly redundant, they remain label-consistent as update magnitude increases. Label-wise similarity, however, underperforms cross-label random selection at larger update magnitudes because the label-wise constraint still limits global diversity and leads to redundancy as more demonstrations are added.

Overall, these results show that while similarity-based selection mitigates some weaknesses of label-wise prompting, the label-wise constraint itself restricts scalability, motivating cross-label selection strategies that allow diversity to grow with update magnitude.

A.4 MANY SHOT PROMPTING WITH LLAMA-3.1-8B

We compare the LLaMA-3.1-8B base model with its instruction-tuned counterpart under identical many-shot prompting conditions on Banking77, using cross-label random selection. As shown in Table 2, the instruction-tuned model substantially outperforms the base model at both update magnitudes, highlighting the importance of alignment for interpreting task instructions and in-context demonstrations.

At low update magnitudes (n = 1–5; not shown), the base model performs near chance, indicating a limited ability to infer task structure from few demonstrations.
However, as the number of in-context examples increases, the base model exhibits some gains from n = 50 to n = 70 per-class shots, suggesting that many-shot prompting can induce meaningful test-time adaptation even in the absence of instruction tuning. This result indicates that while alignment strongly improves sample efficiency, the benefits of large-scale in-context updates are not exclusive to instruction-tuned models.

A.5 SIMILARITY-BASED RETRIEVAL FOR DYNAMIC ICL

For similarity-based retrieval, we encode all candidate demonstrations and queries using the sentence-transformers/all-MiniLM-L6-v2 model (384-dimensional embeddings). After generating embeddings, we retrieve the n nearest neighbors per class to the query embedding for label-wise similarity selection. We make sure that the retrieved examples contain neither the query nor same-text duplicates, which ensures there is no leakage. Across all retrieval strategies, we use a fixed prompt template; only the selected demonstrations vary between conditions.

A.6 DATA GENERATION RECIPE FOR REINFORCED ICL

To generate synthetic reasoning traces for Reinforced ICL on GPQA Diamond shown in Fig. 2(d), we follow the Chain-of-Thought data generation pipeline described in the Camel AI framework (Camel AI, 2025). We instantiate a generator–verifier setup, where both agents are powered by the gpt-oss-120b (OpenAI, 2024) model. The generator produces step-by-step reasoning traces under predefined formatting constraints, and the verifier checks the final answer against the ground-truth label. We retain only examples whose generated final answer exactly matches the correct label, filtering out inconsistent traces. Applying this procedure to the 198 questions in GPQA Diamond yields 133 validated reasoning demonstrations, which are used for Reinforced ICL experiments. We used greedy sampling with max tokens set to 12000 to generate CoT traces.
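The retain-only-exact-matches step described above can be sketched as a simple filter. This is a minimal illustration, not the Camel AI pipeline or the authors' code: the `Trace` dataclass, its field names, the `keep_verified` helper, and the choice to strip surrounding whitespace before comparing are all assumptions made for the example, and the generator and verifier model calls are left out entirely.

```python
from dataclasses import dataclass


@dataclass
class Trace:
    question: str       # question text, used here as the lookup key
    reasoning: str      # step-by-step trace produced by the generator
    final_answer: str   # answer extracted from the end of the trace


def keep_verified(traces, gold_answers):
    """Keep traces whose final answer exactly matches the ground-truth label.

    gold_answers maps question text to the correct answer string.
    Stripping surrounding whitespace is an assumption of this sketch.
    """
    kept = []
    for trace in traces:
        gold = gold_answers.get(trace.question)
        if gold is not None and trace.final_answer.strip() == gold.strip():
            kept.append(trace)
    return kept
```

Applied to the numbers reported above, a filter of this kind keeps 133 of 198 questions, so roughly two thirds of the generated traces survive verification.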
We show the generator and verifier prompts in Table 3 and Table 4, respectively.

Table 3: Prompt template used for the Generator agent during data generation for Reinforced ICL.

Generator Prompt

Generator agent for creating step-by-step reasoning.
Rules:
– Use at most 10 steps.
– No explanations.
– No restating the question.
– End immediately after the answer.
– Do not reference the order of the answers in the question (e.g., option a, b, c).
– For the answer in the tags, ensure it exactly matches one of the listed options.
Format:
1. ...
2. ...
...

Table 4: Prompt template used for the Verifier agent during data generation for Reinforced ICL.

Verifier Prompt

Verified agent for step by step reasoning. For the text within the tags, make sure it matches exactly to the correct answer, word for word.

A.7 MANY-SHOT PROMPTING ON EVALUATION HARNESS

We report full results across Evaluation Harness tasks with LLaMA-3.3-70B-Instruct in Table 5 to characterize where many-shot test-time updates succeed and where they fail. These experiments span structured reasoning and open-ended generation settings, allowing us to examine how the effectiveness of prompt-based updates depends on task structure and information content. The complete results provide finer-grained evidence for the task-dependent patterns discussed in the main paper, and highlight regimes where additional in-context demonstrations introduce useful adaptation signals versus redundant or noisy context. We use BLEU score to evaluate the machine translation (WMT16) tasks, F1 score to evaluate the reading comprehension (DROP) task, and accuracy (in %) based on exact match for the rest of the tasks.
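Two of the metrics named above, exact-match accuracy and token-overlap F1, can be sketched as follows. This is a simplified illustration only: the function names are hypothetical, the whitespace tokenization and lowercasing are assumptions, and the benchmark implementations actually used for these results may normalize answers differently. BLEU is omitted because a faithful version needs n-gram clipping and a brevity penalty that would not fit a short sketch.

```python
from collections import Counter


def exact_match_accuracy(predictions, references):
    """Percentage of predictions that equal their reference after stripping."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not predictions:
        return 0.0
    hits = sum(p.strip() == r.strip() for p, r in zip(predictions, references))
    return 100.0 * hits / len(predictions)


def token_f1(prediction, reference):
    """Harmonic mean of token precision and recall (bag-of-words overlap)."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)
```

The contrast between the two helps explain why partially correct extractions can still earn credit on an F1-scored task, while exact-match tasks give none.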
Table 5: Task-dependent performance of many-shot test-time updates (LLaMA-3.3-70B-Instruct)

| Task | Baseline | 4-Shot | 16-Shot | 32-Shot |
|---|---|---|---|---|
| Domain-Specific / Information-Heavy Tasks | | | | |
| DROP | 10.8 | 13.1 | 14.2 | 15.8 |
| FDA | 39.65 | 86.8 | 89.4 | 89.7 |
| SWDE | 74.17 | 92.9 | 95.1 | 96.4 |
| Structured Reasoning Tasks (Constrained Output) | | | | |
| ARC-Challenge | 38.82 | 93.72 | 93.48 | 93.45 |
| GSM8K | 87.56 | 94.7 | 94.8 | 94.8 |
| GPQA (MC) | 47.99 | 51.9 | 50.0 | 48.8 |
| Open-Ended Generation Tasks | | | | |
| WMT16 En-De | 34.51 | 37.0 | 37.4 | 37.5 |
| WMT16 De-En | 44.76 | 46.3 | 46.9 | 47.0 |

Figure 4: Context length scaling with update magnitude

A.8 CONTEXT GROWTH UNDER MANY-SHOT AND REINFORCED TEST-TIME UPDATES

Figure 4 illustrates how prompt context length (in tokens) scales with the number of demonstrations for two settings: Reinforced ICL on the GPQA Diamond subset (left) and many-shot prompting on Banking77 (right). For Reinforced ICL, the context length grows approximately linearly with the number of reasoning demonstrations included in the prompt, reaching a maximum input length of 14.7k tokens at 32-shot. For Banking77, we vary the number of per-class shots n; the total number of demonstrations in the prompt is N = n × C, where C = 77 is the number of classes. At n = 70 (N = 5390), the prompt reaches the maximum supported context length of 128k tokens.
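The context-growth figures above imply rough per-demonstration token costs, which the following back-of-the-envelope sketch works out. It is an estimate under stated assumptions, not a measurement: "128k" and "14.7k" are read as 128,000 and 14,700 tokens, the helper names are hypothetical, and the averages fold any instruction and query overhead into the per-demonstration figure.

```python
NUM_CLASSES = 77               # Banking77 label count, from the text
MAX_CONTEXT_TOKENS = 128_000   # assumption: "128k" read as 128,000 tokens
GPQA_TOKENS_AT_32_SHOT = 14_700  # assumption: "14.7k" read as 14,700 tokens


def total_demonstrations(n, num_classes=NUM_CLASSES):
    """N = n x C for the per-class shot formulation."""
    return n * num_classes


def avg_tokens_per_demo(prompt_tokens, num_demos):
    """Average prompt tokens per demonstration, overhead included."""
    return prompt_tokens / num_demos


# Banking77: n = 70 per-class shots fill the assumed 128,000-token context.
banking_avg = avg_tokens_per_demo(MAX_CONTEXT_TOKENS, total_demonstrations(70))

# GPQA Diamond: 32 reasoning traces reach about 14,700 tokens.
gpqa_avg = avg_tokens_per_demo(GPQA_TOKENS_AT_32_SHOT, 32)

for n in (1, 5, 50, 70):
    demos = total_demonstrations(n)
    print(f"n={n:>2}  N={demos:>4}  est. tokens={demos * banking_avg:,.0f}")
print(f"Banking77: about {banking_avg:.1f} tokens per demonstration")
print(f"GPQA Diamond: about {gpqa_avg:.0f} tokens per reasoning trace")
```

Under these assumptions a Banking77 demonstration costs a few dozen tokens while a reasoning trace costs several hundred, which is consistent with Reinforced ICL being evaluated at far fewer shots than many-shot prompting.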

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

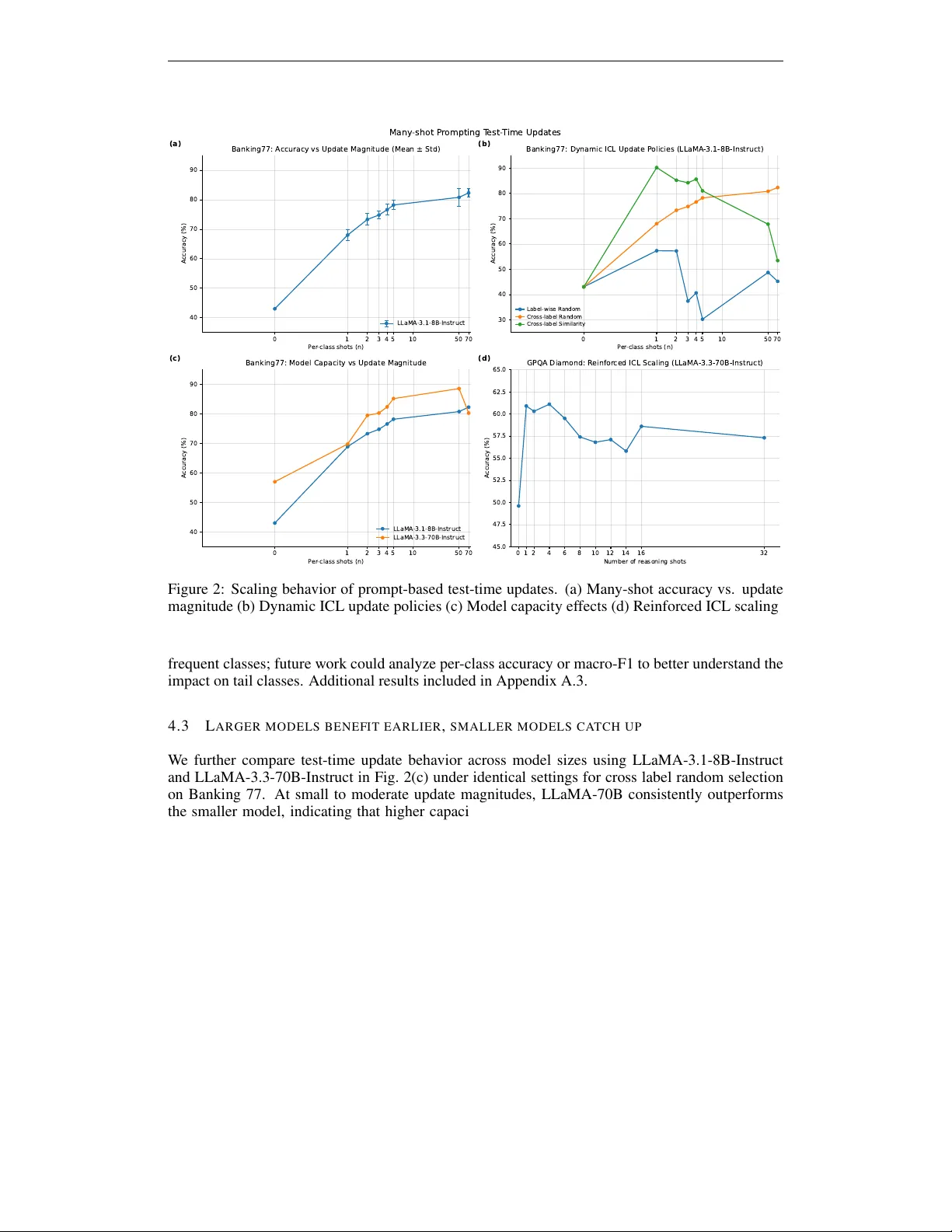

Leave a Comment