Storage and selection of multiple chaotic attractors in minimal reservoir computers

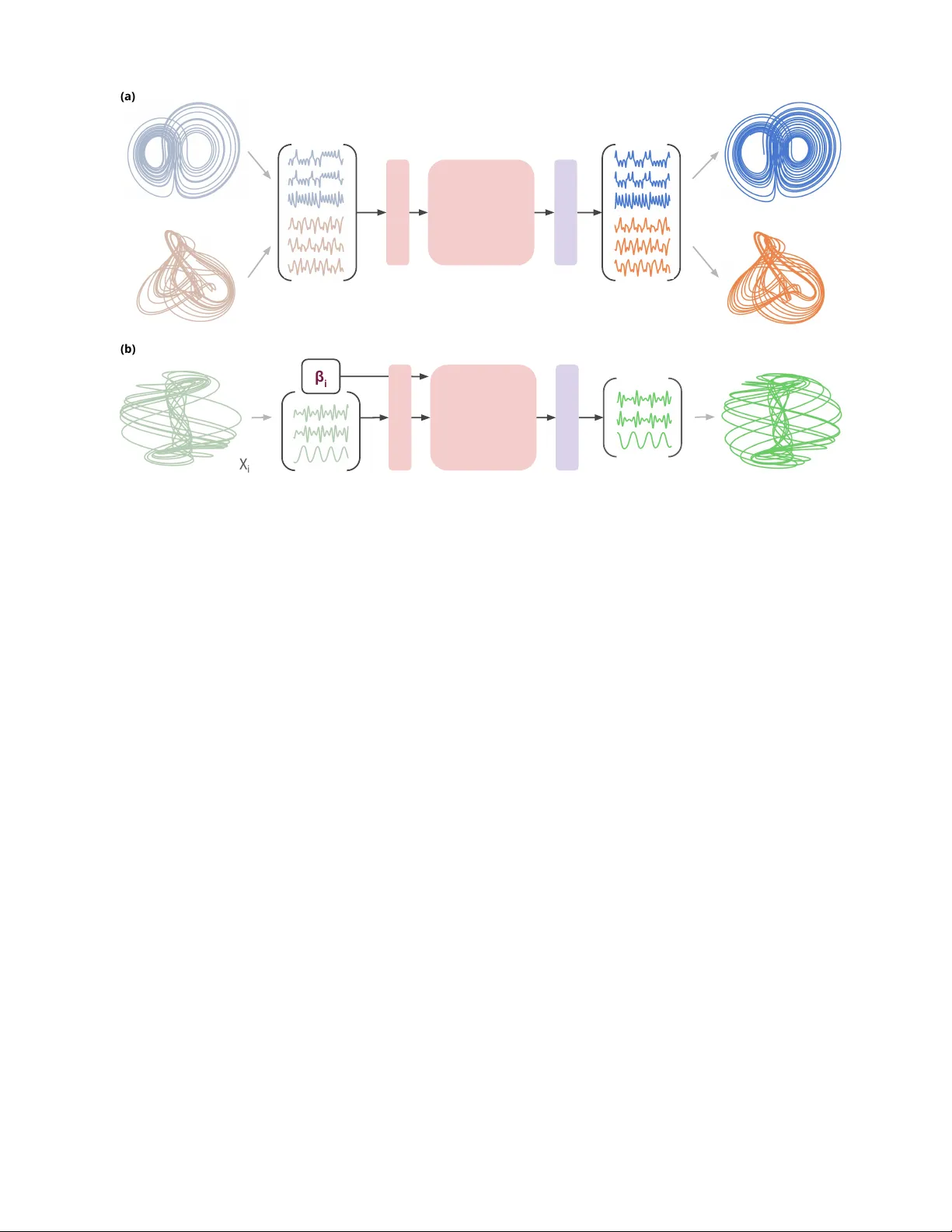

Modern predictive modeling increasingly calls for a single learned dynamical substrate to operate across multiple regimes. From a dynamical-systems viewpoint, this capability decomposes into the storage of multiple attractors and the selection of the…

Authors: Francesco Martinuzzi, Holger Kantz