PrototypeNAS: Rapid Design of Deep Neural Networks for Microcontroller Units

Enabling efficient deep neural network (DNN) inference on edge devices with different hardware constraints is a challenging task that typically requires DNN architectures to be specialized for each device separately. To avoid the huge manual effort, …

Authors: Mark Deutel, Simon Geis, Axel Plinge

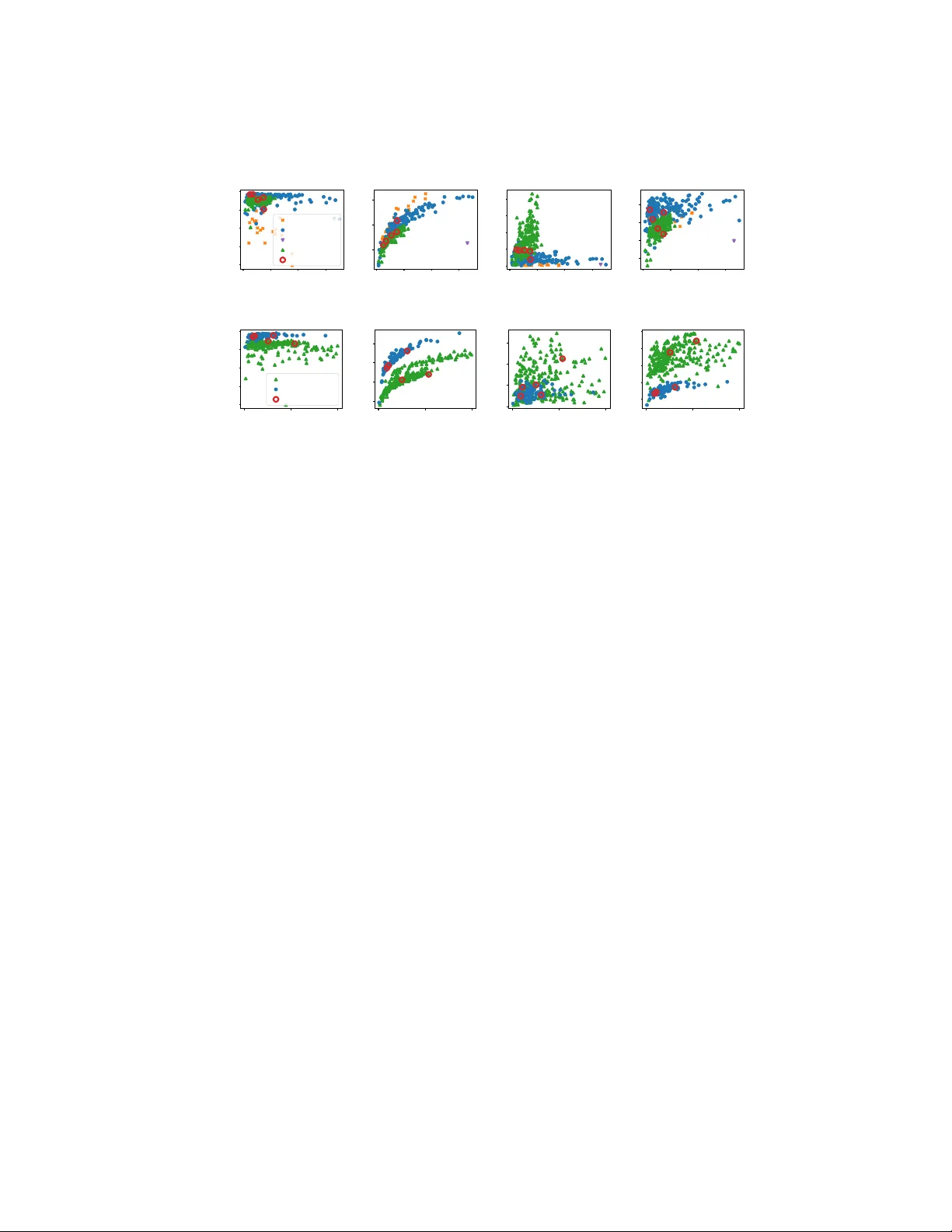

Fraunhofer Institute for Integrated Circuits, Fraunhofer IIS, Germany
{mark.deutel, simon.geis, axel.plinge}@iis.fraunhofer.de

Abstract. Enabling efficient deep neural network (DNN) inference on edge devices with different hardware constraints is a challenging task that typically requires DNN architectures to be specialized for each device separately. To avoid the huge manual effort, one can use neural architecture search (NAS). However, many existing NAS methods are resource-intensive and time-consuming because they require the training of many different DNNs from scratch. Furthermore, they do not take the resource constraints of the target system into account. To address these shortcomings, we propose PrototypeNAS, a zero-shot NAS method to accelerate and automate the selection, compression, and specialization of DNNs to different target microcontroller units (MCUs). We propose a novel three-step search method that decouples DNN design and specialization from DNN training for a given target platform. First, we present a novel search space that does not merely cut out smaller DNNs from a single large architecture, but instead combines the structural optimization of multiple architecture types with the optimization of their pruning and quantization configurations. Second, we explore the use of an ensemble of zero-shot proxies during optimization instead of a single one. Third, we propose the use of Hypervolume subset selection to distill DNN architectures from the Pareto front of the multi-objective optimization (MOO) that represent the most meaningful tradeoffs between accuracy and floating-point operations (FLOPs). We evaluate the effectiveness of PrototypeNAS on 12 different datasets in three different tasks: image classification, time series classification, and object detection.
Our results demonstrate that PrototypeNAS is able to identify DNNs within minutes that are small enough to be deployed on off-the-shelf MCUs and still achieve accuracies comparable to the performance of large DNN architectures.

Keywords: Neural Architecture Search · Multi-Objective Optimization · Efficient AI · Microcontrollers.

1 Introduction

We address the problem of quickly and efficiently designing and training deep neural networks (DNNs) for inference on resource-constrained microcontroller units (MCUs). DNNs have become the de facto standard for data analysis and machine learning tasks. However, with the growth of DNNs in both size and computational cost in recent years, running DNNs, especially on a diverse set of resource-constrained embedded systems and at low latency, has become a major challenge.

To address this problem, we propose PrototypeNAS, a zero-shot neural architecture search (NAS) framework for rapidly designing DNNs for MCUs with different hardware and resource constraints. Compared to other hardware-aware NAS frameworks, PrototypeNAS is novel in three aspects. First, it uses an ensemble of zero-shot proxies that compete as objectives in a multi-objective optimization (MOO) rather than being weighted and linearized into a single ensemble proxy score. Second, it implements a novel search space that combines architecture selection, size and structure optimization, and optimization of pruning and quantization configuration, instead of optimizing them as unrelated problems. Third, it introduces Hypervolume subset selection to further refine the Pareto-optimal models from optimization to a set of 3-5 models that cover the most meaningful tradeoffs between accuracy and resource consumption.
Additionally, in our extensive evaluation, we demonstrate the effectiveness and versatility of PrototypeNAS by using it to find optimized DNNs deployable on an ARM Cortex-M MCU for 12 datasets from three tasks: image classification, time series classification, and object detection. We also compare PrototypeNAS to two other hardware-aware NAS methods, TinyNAS (MCUNet) [22] and NATS-Bench [8]. On average, the DNN architectures found by PrototypeNAS outperformed the ones proposed by the other two frameworks by 5% in accuracy on the CIFAR10 dataset.

As a result, PrototypeNAS is a resource- and time-efficient way to search for DNN architectures without having to train hundreds of DNN architectures to find a single good candidate. Instead, PrototypeNAS reduces the training to only 3-5 DNN candidates while still considering hundreds of architectures in its search.

2 Related Work

NAS is a set of techniques used to automate the DNN architecture design process, generally by solving an optimization problem. While the initial focus was on maximizing accuracy and using reinforcement learning to control the search process [37,4], more recently, hardware-aware NAS [31,35], i.e., considering memory and inference speed in addition to accuracy as a MOO, has become a major focus of research. A subset of this work focuses more specifically on NAS for resource-constrained devices such as MCUs [5,22,6].

In addition, zero-shot NAS [19,33,23,27,15,21] has received considerable attention as a way to avoid having to train a large number of DNN candidates, as is required, for example, when using black-box optimization [6] or reinforcement learning [37,4]. All zero-shot NAS techniques rely on using a proxy metric for accuracy to avoid training.
As a result, a large number of different zero-shot proxies have been proposed, focusing on different features that can be computed from an untrained DNN, such as the Pearson correlation matrix of the intermediate feature maps [15] or the number of linear regions in the input space [27]. In addition, zero-shot NAS techniques, especially when used for hardware-aware NAS, often propose the use of a very large pre-trained "super-net" architecture from which smaller networks more suited to the constraints of the target hardware are then derived and tuned [5].

While zero-shot NAS works to find a sufficiently good architecture in a relatively short time, there are still challenges to overcome. Zero-shot proxies are known to be imprecise and biased toward certain DNN architectures [16,3], often leading to poor correlation with actual training accuracy. Furthermore, the reliance on a single large supernet means that the resulting search space is limited to exploitation around a pre-existing optimum and never explores the full architectural landscape of DNNs.

Fig. 1: Schematic of the three step pipeline of PrototypeNAS. (1) Architectural Prototype Exploration: multi-objective optimization with FLOPs and zero-shot proxies as objectives; combination of architecture, structural, and size (pruning configuration) optimization in a single combined search space. (2) Hypervolume Subset Selection: top-k selection from the Pareto front of the multi-objective optimization, implemented with evolutionary search. (3) Dataset Evaluator: the top-k selection can be trained, pruned, and quantized for many datasets for the same MCU; different trainers can be used to cover a variety of tasks such as classification or object detection.

In parallel to NAS, other techniques for DNN compression have been explored.
A significant number of efficient human-engineered DNN architectures have been proposed over the years. These range from SqueezeNet [13], MobileNet [30], and EfficientNet [32] to more recent designs such as MCUNet [22], which specifically targets microcontrollers, and ConvNeXt [25], which combines a traditional CNN with transformer elements. In addition, pruning and quantization [9,7] have become common techniques for designing efficient DNN architectures for resource-constrained MCUs.

3 Method

PrototypeNAS is a zero-shot NAS method that enables rapid exploration of optimized DNNs for MCU deployment by decoupling design space exploration, i.e., the search for prototypical network architectures and their compression configuration, from training on the target datasets.

We give a schematic overview of PrototypeNAS, which is a three-step method, in Fig. 1. (1) First, a constrained multi-objective optimization is performed with the ensemble of zero-shot proxies and the number of floating-point operations (FLOPs), as a proxy for the computational cost of DNN inference, as objectives. The optimization is constrained by the memory limits of the target MCU to ensure that the exploration focuses only on DNN architectures that can actually be deployed on the target. The search space of the optimization combines both architectural selection from a pool of predefined baseline architectures and their structural and size optimization, i.e., pruning parameter configuration, into a single search space. (2) Second, a top-$k$ selection is performed from the resulting Pareto set using Hypervolume subset selection. (3) Finally, the resulting selection of optimized DNN architectures is trained, pruned, and quantized on the target datasets. In the following sections, we describe each of the three steps of PrototypeNAS in more detail.

3.1 Architectural Prototype Exploration

We formulate the architectural prototype exploration as a constrained MOO in Eq. (1). The objectives are to minimize the number of FLOPs of a model, $\mathrm{flops}(x)$, while maximizing an ensemble of four proxy metrics $\mathrm{prox}_i(x)$ with $i \in \{0, \dots, 3\}$. The four proxies used in this work are MeCo [15], ZiCo [21], NASWOT [27], and SNIP [19], which we selected because they use different DNN features for evaluation (cf. [12]). The constraints of the optimization, $\mathrm{ram}_{\max}$, $\mathrm{rom}_{\max}$, and $\mathrm{flops}_{\max}$, are derived from the hardware limits of the targeted MCU.

$$
\begin{aligned}
\min_{x \in X} \quad & \bigl(\mathrm{flops}(x),\; -\mathrm{prox}_0(x),\; \dots,\; -\mathrm{prox}_3(x)\bigr) \\
\text{s.t.} \quad & \mathrm{ram}(x) \le \mathrm{ram}_{\max}, \\
& \mathrm{rom}(x) \le \mathrm{rom}_{\max}, \\
& \mathrm{flops}(x) \le \mathrm{flops}_{\max}
\end{aligned}
\tag{1}
$$

We designed the search space from which PrototypeNAS samples DNNs to emulate the process that a specialist follows when designing an efficient DNN architecture for an MCU. Based on this premise, we make the following assumptions: First, there is a set of baseline architectures from which DNN candidates can be derived. Second, each of the baseline architectures can be abstracted as follows: an initial layer pattern, followed by a set of repeatable layer patterns (superblocks), followed by a classifier. Third, a pruning and quantization scheme is defined that is executed during training to dynamically compress and scale down the model. Based on these three assumptions, we define the search space $X$ in Table 1.

Table 1: Search space $X$ of the MOO, $\forall i \in \{0, 1, 2, 3\}$, resulting in 14 tunable hyperparameters in total.

| Optimization | Hyperparameter | Type | Range/Values |
|---|---|---|---|
| Architecture | baseline architecture | categorical | Task dependent |
| Structural | group depth$_i$ | categorical | [0, 1, 2, 3] |
| Structural | kernel & stride$_i$ | categorical | [[3, 2], [3, 1], [5, 2], [5, 1], [7, 2], [7, 1]] |
| Size | width multiplier | continuous | [0.1, 1.0] |
| Size | pruning sparsity$_i$ | continuous | [0.1, 0.9] |

In the following, we describe how $X$ is structured and how DNN prototypes can be created from it; see Fig. 2 for a schematic overview. The architecture hyperparameter allows the optimizer to choose a baseline DNN architecture from a pool of predefined architectures. In the scope of this work, four DNN architectures are supported for image classification, two architectures for time series classification, and one for object detection (see Section 4 for details).

Fig. 2: The proposed search space and how a DNN prototype can be created from it. Each baseline architecture consists of repeatable superblocks, i.e., a predefined pattern of layers, organized in the search space into four groups to be optimized separately.

The backbone of the selected baseline DNN is then split into repeatable superblocks as described above, for example depthwise separable convolutions in MobileNetV2. How each architecture is split into superblocks is defined in advance. The superblocks are then organized into four groups. Each group contains at least one superblock and up to three additional blocks, which is controlled for each group via the group depth hyperparameter. In addition, each group has an optimizable kernel & stride hyperparameter, which is used to configure the first convolution of each superblock of the group. Together, these hyperparameters allow structural optimization of the baseline DNNs.

The part of the search space allowing for size optimization of the baseline DNNs consists of the width multiplier and pruning sparsity hyperparameters.
The width multiplier parameter controls the initial size of the baseline DNN architecture at the beginning of training by scaling the output channels in all convolutional layers uniformly. In addition, an iterative pruning schedule is defined that will be executed during training. For each of the four groups of superblocks, a separate pruning sparsity hyperparameter controls the target percentage of channels to be removed by pruning.

The objective function evaluation for a set of hyperparameters proposed by the optimizer consists of three steps: First, the selected baseline architecture is initialized and configured according to the structural optimization. Second, pruning and quantization are applied (without any training) to determine the resulting parameter and size reduction. Third, the resulting model is translated to C code to get the actual ROM and RAM usage when deployed on the targeted MCU. To calculate the number of FLOPs of a DNN, PyTorch's built-in profiler is used. Since the objective function evaluation does not require any training due to the use of zero-shot proxies, sampling is both resource- and time-efficient, allowing for rapid exploration of the search space.

3.2 Hypervolume Subset Selection

We obtain a set of Pareto-optimal architecture and compression configurations from the prototype exploration described in the previous section. The size of this set can be between one and as many solutions as there were DNNs explored during the optimization. However, in practice, a set of 3-5 architectural tradeoffs is usually sufficient for a decision maker to select a DNN for a given use case: the smallest model, the model with the highest accuracy, and 2-3 tradeoffs in between.
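
The constrained search of Eq. (1) ultimately yields the feasible, non-dominated candidates. The following is a minimal sketch of that filtering step, not the authors' implementation: the `Candidate` class, its field names, and the `pareto_set` helper are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Candidate:
    """One evaluated prototype; all field names are illustrative assumptions."""
    flops: float              # objective to minimize
    proxies: Sequence[float]  # zero-shot proxy scores, each to maximize
    ram: float                # constrained by the MCU's RAM
    rom: float                # constrained by the MCU's ROM


def objectives(c: Candidate) -> List[float]:
    # Negate the proxies so that every objective is minimized, as in Eq. (1).
    return [c.flops] + [-p for p in c.proxies]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    # a dominates b if it is no worse in every objective and better in at least one.
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_set(cands: Sequence[Candidate], ram_max: float,
               rom_max: float, flops_max: float) -> List[Candidate]:
    # Step 1: drop every candidate that violates a hardware constraint.
    feasible = [c for c in cands
                if c.ram <= ram_max and c.rom <= rom_max and c.flops <= flops_max]
    # Step 2: keep only candidates that no other feasible candidate dominates.
    objs = [objectives(c) for c in feasible]
    return [c for i, c in enumerate(feasible)
            if not any(dominates(objs[j], objs[i])
                       for j in range(len(feasible)) if j != i)]
```

Because the proxies enter as separate objectives, a candidate survives as soon as it is best in any one tradeoff direction, which is why the resulting set can be large and motivates the subset selection discussed in this section.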
To avoid situations where large Pareto sets proposed by the optimization have to be trained, compressed, evaluated, and finally presented to the decision maker, we implemented a Hypervolume subset selection algorithm based on evolutionary programming and inspired by algorithms like [1] that use Hypervolume as a selection criterion.

Let $H(S)$ be the Hypervolume indicator of a solution set $S$ as described in [36]. We formulate an optimization problem in Eq. (2) which maximizes $H(A)$ over subsets $A \subset P$ of size $k \in \{0, 1, \dots, |P|\}$, where $P$ is the Pareto set resulting from the prototype exploration.

$$
H(A) = \max_{\substack{B \subset P \\ |B| = k}} H(B)
\tag{2}
$$

We solve this optimization problem with an evolutionary algorithm that uses a greedy selection strategy, retaining solutions with higher Hypervolume contributions. First, we encode $P$ as a binary gene $g$, where each bit in $g$ corresponds to a solution in $P$ and is set to 1 if the sample should be part of $B$, or 0 if not. Consequently, for each gene, exactly $k$ bits are set to 1, while all other bits are set to 0. We then evolve an initial population $G = \{g_0, g_1, \dots, g_{n-1}\}$ of size $n$, using mutation and crossover operators, and the Hypervolume indicator to evaluate the fitness of each $g \in G$.

Naturally, crossover, mutation, and the initial generation of $G$ can lead to the creation of "invalid" genes, i.e., genes with more or fewer than $k$ bits set to 1. Our algorithm greedily repairs such genes. If there are fewer than $k$ bits set to 1, it iteratively sets bits to 1 until exactly $k$ bits are set to 1. This has no negative effect on the fitness of the gene, since the Hypervolume can only increase or stay the same with additional solutions added, but never decrease. If there are more than $k$ bits set to 1, the algorithm instead sets the surplus bits to 0 while keeping the Hypervolume as high as possible. To do this, it sets every bit that is 1 to 0 one by one and recalculates the Hypervolume.
Then the algorithm sorts all bits by their calculated Hypervolumes in ascending order. Finally, it selects the top $k$ bits whose removal has the largest negative impact on the fitness of the gene, while setting all other bits to 0. In all our experiments, we configured the Hypervolume subset selection algorithm with an initial population size of 2000, a mutation rate of 0.3, and ran it for 10 000 generations.

3.3 Dataset Evaluator

To train, prune, and quantize the subsets of DNNs designed by PrototypeNAS, we implemented two dataset evaluators. One evaluator is for training image and time series classification tasks using PyTorch Lightning, while the other one is for training the object detection task with the YOLOv5 framework. As baseline architectures for PrototypeNAS we used implementations provided by torchvision and torchaudio with slight modifications so that they can be generated from a set of hyperparameters from the search space introduced in Section 3.1. We also implemented iterative structured pruning and quantization for both trainers.

We used post-training static quantization (PTQ) for the image classification and object detection tasks, and quantization-aware training (QAT) with 15 additional training epochs for the time series classification task. Since PTQ is applied to DNNs after training, it is generally faster to use and less computationally intensive, while QAT is more resource-intensive since it is applied during training, but also more accurate. While we found during our experiments that PTQ worked reliably well for the two image-based tasks, we experienced significant drops in accuracy for the time series datasets when using PTQ. As a result, we used QAT for the time series datasets, as it yielded significantly better accuracies. This is most likely due to QAT's ability to better account for the varying activation ranges resulting from the time series input.
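
Returning to the gene repair step of Section 3.2, the following minimal sketch illustrates the greedy procedure under simplifying assumptions: it uses only two minimized objectives (the paper optimizes FLOPs and four proxies), the reference point `ref` is a hypothetical choice, and missing bits are filled from left to right because the text only states that bits are set iteratively. The function names are illustrative and not taken from the authors' code.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]  # two objectives, both minimized (an assumption)


def hypervolume_2d(points: Sequence[Point], ref: Point) -> float:
    """Area dominated by `points` and bounded by the reference point `ref`."""
    area, best_y = 0.0, ref[1]
    for x, y in sorted(points):        # ascending in the first objective
        if x < ref[0] and y < best_y:  # skip dominated or out-of-bounds points
            area += (ref[0] - x) * (best_y - y)
            best_y = y
    return area


def repair(gene: Sequence[int], pareto: Sequence[Point],
           k: int, ref: Point) -> List[int]:
    """Return a copy of `gene` with exactly k bits set to 1."""
    gene = list(gene)
    selected = [i for i, bit in enumerate(gene) if bit]
    if len(selected) < k:
        # Too few bits: adding solutions can never reduce the hypervolume.
        for i, bit in enumerate(gene):
            if len(selected) == k:
                break
            if not bit:
                gene[i] = 1
                selected.append(i)
    elif len(selected) > k:
        # Too many bits: hypervolume that remains when point i is removed.
        def hv_without(i: int) -> float:
            return hypervolume_2d([pareto[j] for j in selected if j != i], ref)
        # Ascending order puts the points whose removal hurts most first.
        keep = set(sorted(selected, key=hv_without)[:k])
        gene = [1 if i in keep else 0 for i in range(len(gene))]
    return gene
```

In a full evolutionary loop, `repair` would be called after every crossover and mutation so that the fitness of each gene is always evaluated on a subset of exactly $k$ solutions.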
4 Evaluation

We evaluate PrototypeNAS on three different tasks and 12 datasets in total: image classification for the CIFAR10 [18], CIFAR100 [18], GTSRB [11], Flowers [28], Birds [34], Cars [17], Pets [29], and ArxPhotos314¹ datasets, time series classification for the Daliac [20], MAFAULDA², and BitBrain Sleep [26] datasets, and person detection using a subset of COCO [24].

For the three tasks, we first performed the prototype exploration as described in Section 3.1 for 500 trials, then performed the Hypervolume subset selection as described in Section 3.2, and finally evaluated the top-5 selection of each of the tasks on the respective datasets. For training, we used the same configuration for all datasets: a batch size of 48, stochastic gradient descent with a learning rate of 0.001 and a momentum of 0.9, and 100 training epochs.

We provide detailed insight and discussion of our results in Sections 4.1 and 4.3. Furthermore, in Section 4.2 we discuss the precision of the proxy ensemble used in PrototypeNAS during exploration. Finally, in Section 4.4 we give a comparison of PrototypeNAS to two other hardware-aware NAS frameworks, TinyNAS (MCUNet) [22] and NATS-Bench [8].

4.1 Results for Image and Time Series Classification

Fig. 3 shows the results of the prototype exploration for image and time series classification and the four proxy scores MeCo, NASWOT, SNIP, and ZiCo³. We performed separate optimizations for the image and time series datasets because we used different sets of baseline architectures for the two tasks, four DNN architectures for image classification and two for time series classification, which we denote in the plots with different colors and markers. For image classification, our baseline set includes MobileNetV2 [30], ResNet18 [10], SqueezeNet [13], and MbedNet (our own DNN architecture derived from MobileNet), see Fig. 3a.
For time series classification we used InceptionTime [14] and a version of MbedNet where we replaced all 2D convolutions with their 1D counterparts, see Fig. 3b. For image classification, we used a 128 × 128 pixel input for all experiments, while for the time series classification experiments we split the input into windows of constant length and used them without any further preprocessing. After optimization, we identified five models from the final Pareto front using Hypervolume subset selection as described in Section 3.2 and mark the selected models in Fig. 3 with red circles.

For both image and time series optimization, the results in Fig. 3 show that the four proxies rank the base architectures of their respective search spaces differently. For example, SNIP gave a significantly higher score to almost all architectural variants of SqueezeNet than any of the other three proxies in Fig. 3a. Another example of this apparent "disagreement" among the proxies can be seen in Fig. 3b, where NASWOT and MeCo rank MbedNet higher than InceptionTime, while the other two proxies do the opposite.

Fig. 3: Optimization results. The top-5 DNNs selected from the Pareto front by Hypervolume subset selection are marked with red circles. Each panel plots one proxy score (MeCo, NASWOT, SNIP, ZiCo) against Compute [FLOPs]. (a) Image classification (MobileNetV2, MbedNet, ResNet, SqueezeNet). (b) Time series classification (InceptionTime, MbedNet).

¹ https://web.arx.net
² https://www02.smt.ufrj.br/~offshore/mfs/page_01.html
³ DNNs and pre-trained weights: https://doi.org/10.5281/zenodo.18878249
Another observation that can be made is that all DNNs of a single architecture type cluster around their unmodified baseline architecture in the target space. This is not unexpected, since once a baseline architecture is selected, the other hyperparameters in PrototypeNAS's search space focus on modifying the structure of the baseline architecture within the limits of its predefined superblocks, or scaling the architecture in width, but do not fundamentally change its design. This is intentional, as the goal of PrototypeNAS is to quickly fine-tune a DNN to fit the constraints of a given MCU and not to come up with completely new DNN designs from scratch. Therefore, another way to interpret the results in Fig. 3 is that each baseline architecture represents a local optimum, with the search space being designed in a way to encourage an efficient search around it.

Table 2: Test accuracy of the trained, pruned, and quantized DNNs found by PrototypeNAS for the image datasets. Latency and energy were measured on an iMXRT1062 Cortex-M7 MCU.

| Optim. Index | 210 | 283 | 190 | 311 | 237 |
|---|---|---|---|---|---|
| Architecture | MbedNet | MbedNet | SqueezeNet | SqueezeNet | MbedNet |
| Quantized Test Accuracy [%] | | | | | |
| CIFAR10 | 91.8 | 92.5 | 90.3 | 90.0 | **93.7** |
| CIFAR100 | 67.4 | 70.5 | 66.5 | 63.7 | **72.3** |
| ArxPhotos314 | 90.8 | 95.4 | **97.4** | 79.5 | 93.6 |
| Flowers | 88.9 | 91.6 | 82.9 | 86.3 | **93.3** |
| Birds | 62.8 | 65.7 | 59.5 | 55.6 | **69.9** |
| Pets | 99.8 | 99.8 | 97.5 | 96.1 | **99.9** |
| Cars | 64.8 | 64.1 | 59.8 | 55.2 | **76.4** |
| GTSRB | 96.3 | 96.1 | 95.8 | **97.0** | 95.5 |
| Compute [MFLOPs] | 50.1 | 70.0 | 105.0 | 147.5 | 151.0 |
| ROM [kB] | 605.4 | 774.9 | 758.4 | 434.7 | 356.5 |
| RAM [kB] | 111.7 | 118.3 | 364.7 | 281.6 | 189.7 |
| Latency [ms] | 224.1 ± 0.1 | 312.9 ± 0.1 | 367.1 ± 0.1 | 447.2 ± 0.1 | 753.2 ± 0.1 |
| Energy [mJ] | 77.6 ± 8.6 | 108.6 ± 10.0 | 126.0 ± 12.7 | 156.3 ± 16.8 | 254.5 ± 13.7 |

Regarding the difference in proxy scoring observed in our results, we point to the general consensus found in related work that zero-shot proxies are often imprecise and tend to favor certain architectures [16,3]. Similar to related work such as [12], this motivates us to consider the evaluation of multiple proxies to guide our optimization, rather than relying on a single proxy. In contrast to [12], however, we do not attempt to weight and linearize the different proxies to form a new proxy score, but instead treat them as competing objectives in our MOO.

As a result, the top $k = 5$ architectures selected by the Hypervolume subset selection at the end of the optimization do not directly follow the ranking of any of the individual proxies or of FLOPs, but are instead derived from the Pareto front between FLOPs and all four proxies. In this way, PrototypeNAS avoids being biased toward particular architectures in its search and achieves a balanced evaluation.

We show the test accuracy on all evaluated datasets of the five trained, pruned, and quantized DNN prototypes selected by Hypervolume subset selection in Table 2 (image classification) and Table 3 (time series classification), sorted in ascending order by FLOPs. For each of the five DNNs shown in the two tables, we additionally report the base architecture from which the prototype was derived, the model's RAM and ROM requirements in kilobytes, reflecting the actual memory requirements on the target MCU, as well as the average inference latency and energy consumption of the DNNs when executed on an iMXRT1062 Cortex-M7 MCU. For each dataset, we highlighted the model with the highest test accuracy. For time series classification, we trained with randomly initialized weights, while for image classification, we performed 50 epochs of ImageNet pre-training.
Table 3: Test accuracy of the trained, pruned, and quantized DNNs found by PrototypeNAS for the time series datasets. Latency and energy were measured on an iMXRT1062 Cortex-M7 MCU.

| Optim. Index | 224 | 226 | 73 | 273 | 347 |
|---|---|---|---|---|---|
| Architecture | MbedNet | MbedNet | InceptionTime | MbedNet | InceptionTime |
| Quantized Test Accuracy [%] | | | | | |
| BitBrain | 83.7 | 87.2 | 81.7 | **88.0** | 78.9 |
| Mafaulda | 96.8 | 98.3 | 97.4 | 97.8 | **98.5** |
| Daliac | 95.9 | 97.1 | **97.2** | 97.0 | 96.1 |
| Compute [MFLOPs] | 36.3 | 43.2 | 101.5 | 123.5 | 215.3 |
| ROM [kB] | 149 | 231 | 571 | 337 | 978 |
| RAM [kB] | 246 | 244 | 251 | 252 | 250 |
| Latency [ms] | 162.3 ± 0.1 | 183.6 ± 0.1 | 447.2 ± 0.1 | 467.9 ± 0.3 | 635.0 ± 1.4 |
| Energy [mJ] | 55.8 ± 5.7 | 63.7 ± 6.4 | 156.0 ± 16.7 | 165.8 ± 15.9 | 227.6 ± 17.6 |

For both the image and time series classification tasks, the selection of DNNs found by PrototypeNAS achieved accuracies competitive with or better than related embedded DNN architectures; see also Section 4.4 for a direct comparison of our approach with other NAS methods. Furthermore, while a correlation between FLOPs and accuracy can be observed within an architecture type, e.g., both the MbedNet-based architectures 210, 283, and 237 and the SqueezeNet-based architectures 190 and 311 in Table 2 show a linear correlation between FLOPs and accuracy, this correlation cannot be observed when ranking across the two different architecture types. Since the PrototypeNAS search space optimizes multiple architecture types together, ranking by FLOPs is not sufficient, meaning that the structure and expressiveness of the architectures must also be considered, motivating our use of zero-shot proxies in this work.

In addition, when considering resource consumption, we noticed that the RAM requirements are very similar across all five DNNs for both the image and time series classification tasks, even though they have very different compute and ROM requirements.
The reason is that the deployment framework we utilize for our experiments reuses memory for multiple intermediate feature maps during inference. This means that the larger initial feature maps, which are often similar in size because all models share the same input size, typically dominate the overall RAM requirements. As a result, we model memory requirements only as constraints rather than objectives during optimization. Finally, our results show that latency and energy per sample scale linearly with FLOPs, making FLOPs a good proxy for optimizing these two metrics without having hardware in the loop.

4.2 Proxy Ensemble Analysis

We give a detailed analysis of the proxy "disagreement" we described in the previous section in Table 4. To quantify "disagreement", we compute the Kendall rank correlation coefficient (Kendall's $\tau$ score) to measure the ordinal association between each of the four proxy scores and the quantized post-training accuracy we presented in Tables 2 and 3. In addition, we provide the $\tau$ score between FLOPs and accuracy.

Table 4: Kendall's $\tau$ scores for the datasets and results shown in Tables 2 and 3.

| Dataset | MeCo | NASWOT | SNIP | ZiCo | FLOPs |
|---|---|---|---|---|---|
| CIFAR10 | 0.0 | 0.0 | -0.2 | 0.6 | 0.0 |
| CIFAR100 | 0.0 | 0.0 | -0.2 | 0.6 | 0.0 |
| ArxPhotos314 | 0.0 | 0.0 | 0.2 | -0.2 | 0.0 |
| Flowers | 0.2 | 0.2 | -0.4 | 0.4 | 0.2 |
| Birds | 0.0 | 0.0 | -0.2 | 0.6 | 0.0 |
| Pets | 0.1 | -0.4 | 0.2 | 0.8 | -0.3 |
| Cars | -0.2 | -0.2 | 0.0 | 0.8 | -0.2 |
| GTSRB | 0.4 | -0.4 | 0.2 | -0.2 | -0.4 |
| BitBrain | 1.0 | 0.8 | -0.6 | -0.4 | -0.2 |
| Mafaulda | -0.2 | 0.0 | 0.6 | 0.4 | 0.6 |
| Daliac | 0.1 | -0.1 | 0.3 | 0.1 | -0.1 |

The $\tau \in [-1, 1]$ score quantifies the strength and direction of the monotonic relationship between two variables based on order, where 1 describes a perfect agreement, i.e., the rankings are identical, $-1$ a perfect disagreement, i.e., the rankings are the opposite of each other, and 0 denotes no correlation between the rankings of the two variables.
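
As a worked illustration of the $\tau$ score, the sketch below computes Kendall's tau-a, i.e., the difference between concordant and discordant pairs divided by the total number of pairs. The source does not state which variant of $\tau$ was used, so tau-a is an assumption here, and rows containing tied accuracies may come out differently under another variant. The function name `kendall_tau_a` is illustrative.

```python
from itertools import combinations
from typing import Sequence


def kendall_tau_a(x: Sequence[float], y: Sequence[float]) -> float:
    """Kendall's tau-a; tied pairs count as neither concordant nor discordant."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length of at least 2")
    score = 0
    for i, j in combinations(range(len(x)), 2):
        prod = (x[i] - x[j]) * (y[i] - y[j])
        score += (prod > 0) - (prod < 0)  # +1 concordant, -1 discordant
    n_pairs = len(x) * (len(x) - 1) // 2
    return score / n_pairs


# Values taken from Table 3: Compute [MFLOPs] and Mafaulda accuracy [%].
flops = [36.3, 43.2, 101.5, 123.5, 215.3]
mafaulda_acc = [96.8, 98.3, 97.4, 97.8, 98.5]
tau = kendall_tau_a(flops, mafaulda_acc)
print(f"tau(FLOPs, accuracy) = {tau:.1f}")
```

For these five models, eight of the ten pairs are concordant and two are discordant, so the sketch yields $\tau = 0.6$, which agrees with the FLOPs entry for Mafaulda in Table 4.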
Ideally, for zero-shot NAS proxies, a $\tau$ score close to 1 is desirable, as this means that DNNs with higher proxy scores will also achieve higher accuracy, while a score below 0 is especially undesirable, as it misguides the NAS algorithm during optimization.

In Table 4, it can be seen that none of the four proxies has a consistently high positive $\tau$ score across all evaluated datasets. Even worse, the proxies sometimes have a negative relationship with accuracy for some datasets, while working well for others. This can be observed especially well with ZiCo and SNIP. On the other hand, it can be seen that for all datasets, at least one of the four proxies achieved a high positive $\tau$ score and, more importantly, outperformed the score achieved by FLOPs. As a result, we concluded that for our search space (a) using a proxy ensemble is necessary to find a Pareto front of DNNs that, when trained, achieve consistently good ranking across many datasets and to prevent biases and inaccuracies of individual proxies from misguiding the exploration, and (b) zero-shot NAS proxies are a better metric for ranking models by approximated accuracy than FLOPs.

Finally, in Fig. 4, we show the $\tau$ scores calculated among the proxies themselves for the image and time series optimization as heatmaps. Note that the lower and upper triangular matrices describe the same relationship. In Fig. 4a and 4b we observe that for both optimizations the proxies generally did not have a strong relationship with each other, and in the few cases where they did, such as SNIP and ZiCo in Fig. 4b, it was positive, i.e., the proxies were in agreement. These results confirm that the use of this specific ensemble of proxies provides PrototypeNAS with diverse and balanced evaluations, thus mitigating the biases and inaccuracies of individual proxies. Furthermore, our results are in agreement with [12], who proposed the same ensemble of proxies.
4.3 Object Detection

To further demonstrate the flexibility of PrototypeNAS, we applied it to an object detection task, specifically person detection, trained on the COCO dataset. We used the YOLOv5 framework, replacing its regular backbone network with MbedNet, which we also used for the results presented in Section 4.1, but keeping the original YOLOv5 detector instead of a normal classification head. This allowed us to use the same search space for prototype exploration as for the image classification task, while using the YOLOv5 data augmentation, loss function, anchor boxes, and training hyperparameters for dataset evaluation after optimization. We ran two different experiments: one with an input image resolution of 128 × 128 targeting the iMXRT1062, i.e. the same MCU we used for the image classification task, and a second with a larger input image resolution of 320 × 320 and without any memory or FLOPs constraints, targeting a larger Raspberry Pi 5 Cortex-A SoC.

As in the image and time series classification tasks, we used PrototypeNAS to explore 500 DNNs, from which we selected a subset of k = 5 architectures for evaluation. Fig. 5 shows the tradeoff between compute (FLOPs) and mAP50, the mean average precision of the model at an intersection-over-union threshold of 50% and a key performance indicator of object detection models, for the five architectures selected after optimization and after 100 epochs of training, for both the 128 × 128 and 320 × 320 pixel input resolutions. The results show that PrototypeNAS also works for use cases outside of the classification tasks that are usually the sole focus of zero-shot NAS research, providing a good k = 5 selection in which each selected DNN model offers a distinct, meaningful tradeoff between the two evaluation metrics.

4.4 Comparison with MCUNet and NATS-Bench

We compare PrototypeNAS to two related hardware-aware NAS methods on the CIFAR10 dataset, see Fig. 6.
First, we compare against MCUNet, a selection of five DNNs searched with TinyNAS [22], and second, against models from the two search spaces proposed in NATS-Bench [8].

Fig. 4: Kendall’s τ scores between the four zero-shot proxies for the image and time series classification tasks.

(a) Image classification

| | MeCo | NASWOT | SNIP | ZiCo |
|---|---|---|---|---|
| MeCo | 1.00 | -0.10 | 0.16 | 0.15 |
| NASWOT | -0.10 | 1.00 | -0.18 | 0.31 |
| SNIP | 0.16 | -0.18 | 1.00 | -0.08 |
| ZiCo | 0.15 | 0.31 | -0.08 | 1.00 |

(b) Time series classification

| | MeCo | NASWOT | SNIP | ZiCo |
|---|---|---|---|---|
| MeCo | 1.00 | 0.08 | -0.03 | -0.37 |
| NASWOT | 0.08 | 1.00 | -0.12 | -0.04 |
| SNIP | -0.03 | -0.12 | 1.00 | 0.39 |
| ZiCo | -0.37 | -0.04 | 0.39 | 1.00 |

Fig. 5: Tradeoff between mAP50 and FLOPs of the MbedNet YOLO architectures designed by PrototypeNAS (mAP50 plotted over compute in FLOPs for the 128 × 128 px and 320 × 320 px input resolutions).

TinyNAS is a two-step optimization method based on the Mobile search space [31] that is specifically designed for finding DNNs for MCU deployment. TinyNAS first optimizes the Mobile search space to fit the resource constraints of an MCU and then performs one-shot NAS [2] only on the optimized subset to find an efficient model. The optimized search spaces are found by changing the input resolution and the model width hyperparameters. The quality of each candidate search space is evaluated by randomly sampling a number of DNNs from it and evaluating the cumulative distribution function of the sampled DNNs’ FLOPs. In their paper, Lin et al. [22] describe a set of five DNNs (MCUNet in0-in4) which were designed using TinyEngine and for which the authors provide pre-trained weights on ImageNet.

We show a comparison between the DNNs designed by PrototypeNAS and MCUNet for CIFAR10 in Fig. 6a. For PrototypeNAS, we used the same results shown in Table 2, while for MCUNet we fine-tuned the pre-trained ImageNet weights for 100 epochs on CIFAR10.
The results show that the five DNNs proposed by PrototypeNAS consistently outperform MCUNet in terms of accuracy, sometimes by as much as 5%, at roughly the same computational cost. This shows that, compared to other zero-shot search spaces that limit their search to finding subnetworks within a single large supernetwork architecture, expanding the search space to include pruning and different architecture classes, as is done in PrototypeNAS, allows finding models with higher knowledge density within the same resource footprint.

Fig. 6: Comparison of PrototypeNAS with TinyNAS (left) and NATS-Bench (right) for CIFAR10. Both panels plot accuracy [%] over compute [FLOPs]: (a) TinyNAS (MCUNet in0-in4) compares PrototypeNAS with MCUNet, and (b) NATS-Bench compares PrototypeNAS with the size search space (SSS) and the topology search space (TSS).

NATS-Bench is a NAS benchmark for optimizing both DNN topology and size. NATS-Bench contains two search spaces, one with 15,625 trained DNN candidates focusing on architecture topology (TSS) and one with 32,768 DNN candidates focusing on architecture size (SSS). NATS-Bench uses a cell-based approach where the skeleton of each cell is constructed from remaining blocks with a fixed topology. In the size search space, the number of channels of each cell is optimized, effectively allowing the DNN candidates to scale in width. The topology search space, on the other hand, optimizes a predefined set of operations within each cell, i.e., the combination of layers.

The comparison between PrototypeNAS and the two NATS-Bench search spaces is shown in Fig. 6b. For NATS-Bench, we plot all DNNs that have an accuracy higher than 70% on CIFAR10 after the maximum number of training epochs reported by the authors.
For PrototypeNAS, we again use the results shown in Table 2 for CIFAR10, with the only difference that we re-trained the models for 100 epochs on a smaller input image resolution of 69 × 69 pixels instead of 128 × 128 to more closely match the 32 × 32 pixel resolution used by NATS-Bench. We chose 69 × 69 pixels because it was the smallest input size supported by all the DNNs. The results show that the DNN architectures designed by PrototypeNAS are again competitive with the best architectures found by NATS-Bench in both of its search spaces. However, it should be noted that for the NATS-Bench results, each of the 48,393 DNNs had to be trained separately for up to 200 epochs, while for PrototypeNAS only 5 DNNs had to be trained for 100 epochs to obtain models with similar results.

5 Conclusion

We propose PrototypeNAS, a novel three-step zero-shot NAS method for rapidly designing DNNs for MCUs. Unlike previous work, we perform MOO using an ensemble of zero-shot proxies to optimize over many baseline architectures, rather than just sampling subsets from a single one. This allows us to find DNN architectures within minutes that achieve competitive accuracies on 12 different datasets for tasks such as image classification, time series classification, and object detection. The found DNNs run on standard MCUs and outperform architectures found using other hardware-aware NAS methods such as TinyNAS and NATS-Bench on CIFAR10.

Acknowledgement

This work was funded by the European Commission as part of the MANOLO project under the Horizon Europe programme, Grant Agreement No. 101135782.

References

1. Bader, J., Zitzler, E.: HypE: An algorithm for fast hypervolume-based many-objective optimization. Evolutionary computation 19(1), 45–76 (2011)
2. Bender, G., Kindermans, P.J., Zoph, B., Vasudevan, V., Le, Q.: Understanding and simplifying one-shot architecture search.
In: International conference on machine learning (ICML). pp. 550–559 (2018)
3. Bhardwaj, K., Cheng, H.P., Priyadarshi, S., Li, Z.: Zico-bc: A bias corrected zero-shot NAS for vision tasks. In: Conference on computer vision and pattern recognition (CVPR). pp. 1353–1357 (2023)
4. Cai, H., Chen, T., Zhang, W., Yu, Y., Wang, J.: Efficient architecture search by network transformation. In: Conference on artificial intelligence (AAAI). vol. 32 (2018)
5. Cai, H., Gan, C., Wang, T., Zhang, Z., Han, S.: Once for all: Train one network and specialize it for efficient deployment. In: International conference on learning representations (ICLR) (2020)
6. Deutel, M., Kontes, G., Mutschler, C., Teich, J.: Combining multi-objective bayesian optimization with reinforcement learning for TinyML. Transactions on Evolutionary Learning 5(3), 1–21 (2025)
7. Deutel, M., Woller, P., Mutschler, C., Teich, J.: Energy-efficient deployment of deep learning applications on Cortex-M based microcontrollers using deep compression. In: MBMV 2023; 26th Workshop. pp. 1–12 (2023)
8. Dong, X., Liu, L., Musial, K., Gabrys, B.: NATS-Bench: benchmarking NAS algorithms for architecture topology and size. Transactions on pattern analysis and machine intelligence 44(7), 3634–3646 (2021)
9. Han, S., Mao, H., Dally, W.J.: Deep compression: Compressing deep neural networks with pruning, trained quantization and huffman coding. arXiv:1510.00149 (2015)
10. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Conference on computer vision and pattern recognition (CVPR). pp. 770–778 (2016)
11. Houben, S., Stallkamp, J., Salmen, J., Schlipsing, M., Igel, C.: Detection of traffic signs in real-world images: The german traffic sign detection benchmark. In: International joint conference on neural networks (IJCNN). pp. 1–8 (2013)
12. Huang, J., Xue, B., Sun, Y., Zhang, M.: Evolving comprehensive proxies for zero-shot neural architecture search. In: Genetic and evolutionary computation conference (GECCO). pp. 1246–1254 (2025)
13. Iandola, F.N., Han, S., Moskewicz, M.W., Ashraf, K., Dally, W.J., Keutzer, K.: SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5 MB model size. arXiv:1602.07360 (2016)
14. Ismail Fawaz, H., Lucas, B., Forestier, G., Pelletier, C., Schmidt, D.F., Weber, J., Webb, G.I., Idoumghar, L., Muller, P.A., Petitjean, F.: Inceptiontime: Finding Alexnet for time series classification. Data mining and knowledge discovery 34(6), 1936–1962 (2020)
15. Jiang, T., Wang, H., Bie, R.: Meco: zero-shot NAS with one data and single forward pass via minimum eigenvalue of correlation. Advances in neural information processing systems (NeurIPS) 36, 61020–61047 (2023)
16. Jing, K., Chen, L., Xu, J., Tai, J., Wang, Y., Li, S.: Zero-shot neural architecture search with weighted response correlation. Neurocomputing p. 131229 (2025)
17. Krause, J., Stark, M., Deng, J., Fei-Fei, L.: 3D object representations for fine-grained categorization. In: Conference on computer vision and pattern recognition workshops (CVPR). pp. 554–561 (2013)
18. Krizhevsky, A., et al.: Learning multiple layers of features from tiny images. Tech. rep. (2009)
19. Lee, N., Ajanthan, T., Torr, P.H.: Snip: Single-shot network pruning based on connection sensitivity. arXiv:1810.02340 (2018)
20. Leutheuser, H., Schuldhaus, D., Eskofier, B.M.: Hierarchical, multi-sensor based classification of daily life activities: comparison with state-of-the-art algorithms using a benchmark dataset. PloS one 8(10), e75196 (2013)
21. Li, G., Yang, Y., Bhardwaj, K., Marculescu, R.: Zico: zero-shot NAS via inverse coefficient of variation on gradients. arXiv:2301.11300 (2023)
22. Lin, J., Chen, W.M., Lin, Y., Gan, C., Han, S., et al.: MCUNet: Tiny deep learning on iot devices. Advances in neural information processing systems (NeurIPS) 33, 11711–11722 (2020)
23. Lin, M., Wang, P., Sun, Z., Chen, H., Sun, X., Qian, Q., Li, H., Jin, R.: Zen-NAS: A zero-shot NAS for high-performance deep image recognition. In: International conference on computer vision (ICCV). vol. 2021 (2021)
24. Lin, T.Y., Maire, M., Belongie, S., Hays, J., Perona, P., Ramanan, D., Dollár, P., Zitnick, C.L.: Microsoft COCO: Common objects in context. In: European conference on computer vision (ECCV). pp. 740–755 (2014)
25. Liu, Z., Mao, H., Wu, C.Y., Feichtenhofer, C., Darrell, T., Xie, S.: A convnet for the 2020s. In: Conference on computer vision and pattern recognition (CVPR). pp. 11976–11986 (2022)
26. López-Larraz, E., Sierra-Torralba, M., Clemente, S., Fierro, G., Oriol, D., Minguez, J., Montesano, L., Klinzing, J.G.: "Bitbrain open access sleep dataset" (2025). https://doi.org/doi:10.18112/openneuro.ds005555.v1.1.0
27. Mellor, J., Turner, J., Storkey, A., Crowley, E.J.: Neural architecture search without training. In: International conference on machine learning (ICML). pp. 7588–7598 (2021)
28. Nilsback, M.E., Zisserman, A.: Automated flower classification over a large number of classes. In: Sixth Indian conference on computer vision, graphics & image processing. pp. 722–729 (2008)
29. Parkhi, O.M., Vedaldi, A., Zisserman, A., Jawahar, C.: Cats and dogs. In: Conference on computer vision and pattern recognition (CVPR). pp. 3498–3505 (2012)
30. Sandler, M., Howard, A., Zhu, M., Zhmoginov, A., Chen, L.C.: MobilenetV2: Inverted residuals and linear bottlenecks. In: Conference on computer vision and pattern recognition (CVPR). pp. 4510–4520 (2018)
31. Tan, M., Chen, B., Pang, R., Vasudevan, V., Sandler, M., Howard, A., Le, Q.V.: Mnasnet: Platform-aware neural architecture search for mobile. In: Conference on computer vision and pattern recognition (CVPR). pp. 2820–2828 (2019)
32. Tan, M., Le, Q.: Efficientnet: Rethinking model scaling for convolutional neural networks. In: International conference on machine learning (ICML). pp. 6105–6114 (2019)
33. Tanaka, H., Kunin, D., Yamins, D.L., Ganguli, S.: Pruning neural networks without any data by iteratively conserving synaptic flow. Advances in neural information processing systems (NeurIPS) 33, 6377–6389 (2020)
34. Wah, C., Branson, S., Welinder, P., Perona, P., Belongie, S.: The Caltech-UCSD birds-200-2011 dataset. Tech. Rep. CNS-TR-2011-001, California Institute of Technology (2011)
35. Wu, B., Dai, X., Zhang, P., Wang, Y., Sun, F., Wu, Y., Tian, Y., Vajda, P., Jia, Y., Keutzer, K.: Fbnet: Hardware-aware efficient convnet design via differentiable neural architecture search. In: Conference on computer vision and pattern recognition (CVPR). pp. 10734–10742 (2019)
36. Zitzler, E., Thiele, L.: Multiobjective evolutionary algorithms: a comparative case study and the strength Pareto approach. Transactions on Evolutionary Computation 3(4), 257–271 (2002)
37. Zoph, B., Vasudevan, V., Shlens, J., Le, Q.V.: Learning transferable architectures for scalable image recognition. In: Conference on computer vision and pattern recognition (CVPR). pp. 8697–8710 (2018)
