POLCA: Stochastic Generative Optimization with LLM

Optimizing complex systems, ranging from LLM prompts to multi-turn agents, traditionally requires labor-intensive manual iteration. We formalize this challenge as a stochastic generative optimization problem in which a generative language model acts as …

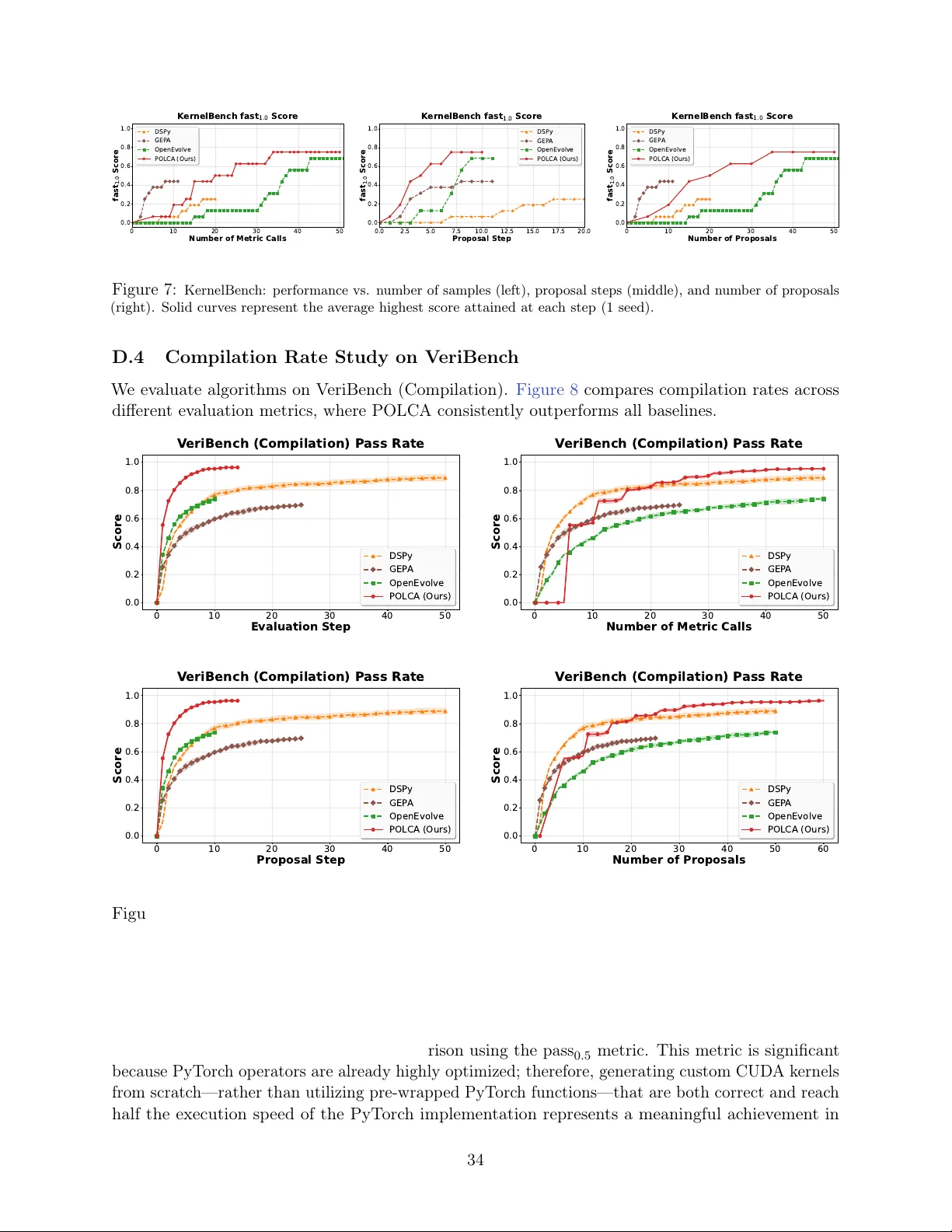

Authors: Xuanfei Ren, Allen Nie, Tengyang Xie