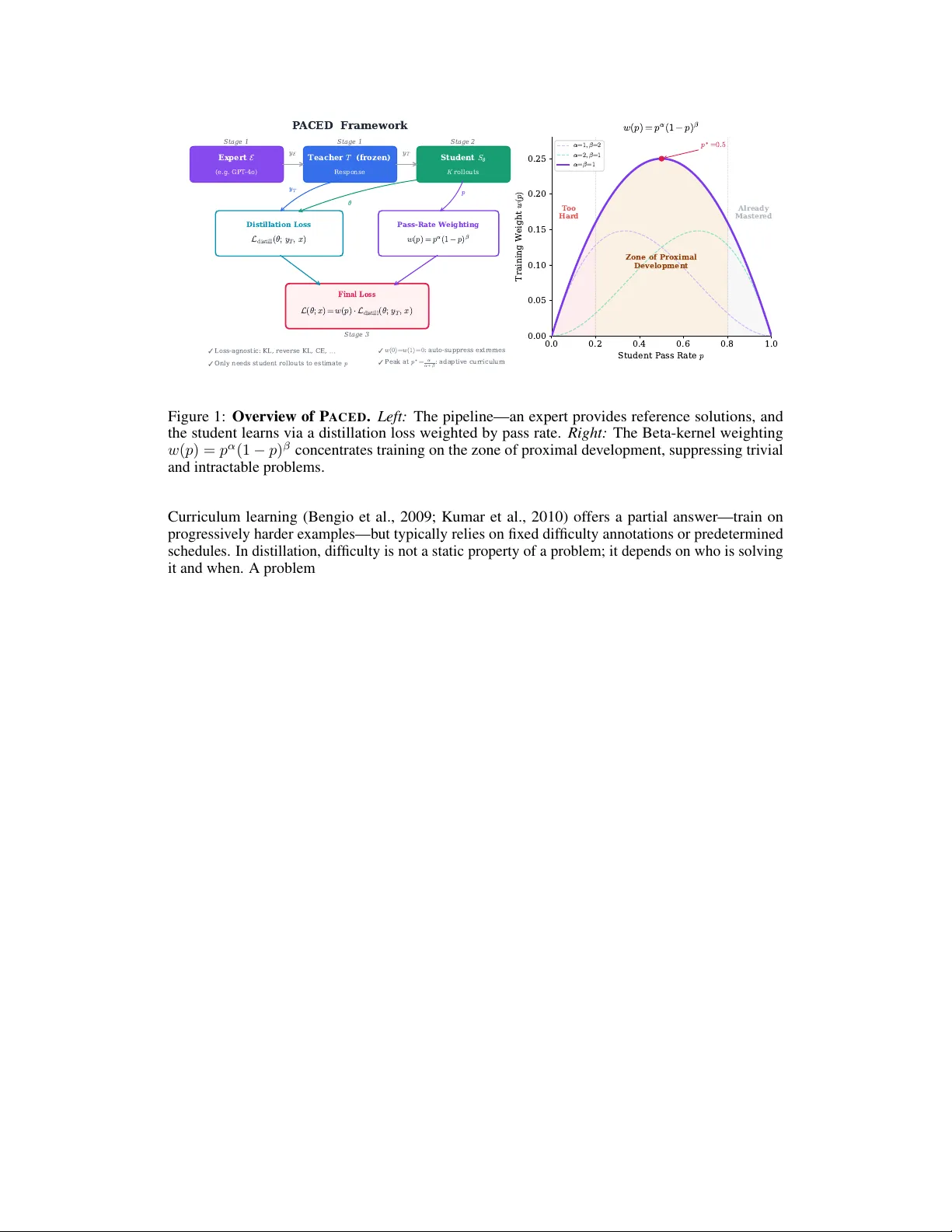

PACED: Distillation and Self-Distillation at the Frontier of Student Competence

Standard LLM distillation wastes compute on two fronts: problems the student has already mastered (near-zero gradients) and problems far beyond its reach (incoherent gradients that erode existing capabilities). We show that this waste is not merely i…

Authors: Yuanda Xu, Hejian Sang