Tokenization Tradeoffs in Structured EHR Foundation Models

Foundation models for structured electronic health records (EHRs) are pretrained on longitudinal sequences of timestamped clinical events to learn adaptable patient representations. Tokenization -- how these timelines are converted into discrete mode…

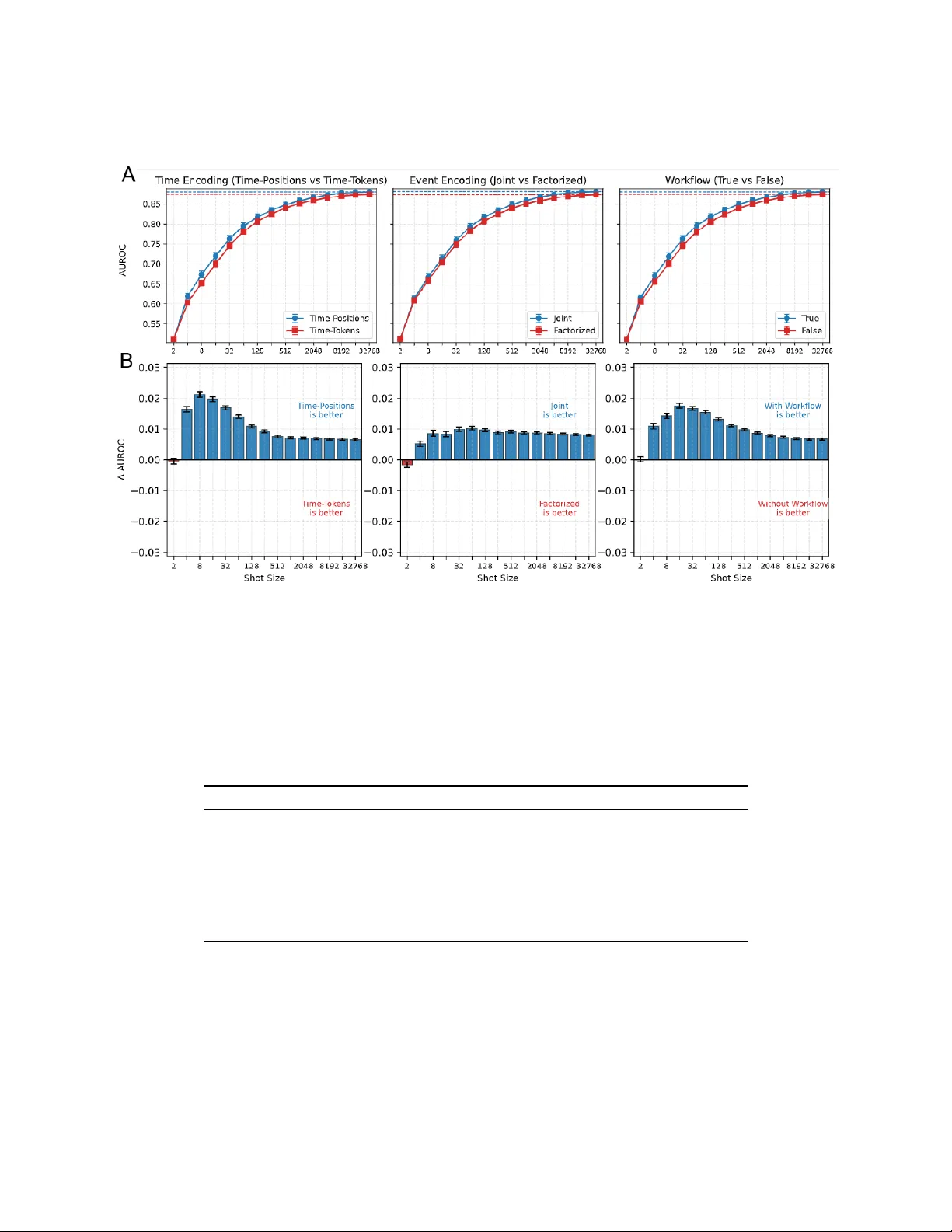

Authors: Lin Lawrence Guo, Santiago Eduardo Arciniegas, Joseph Jihyung Lee