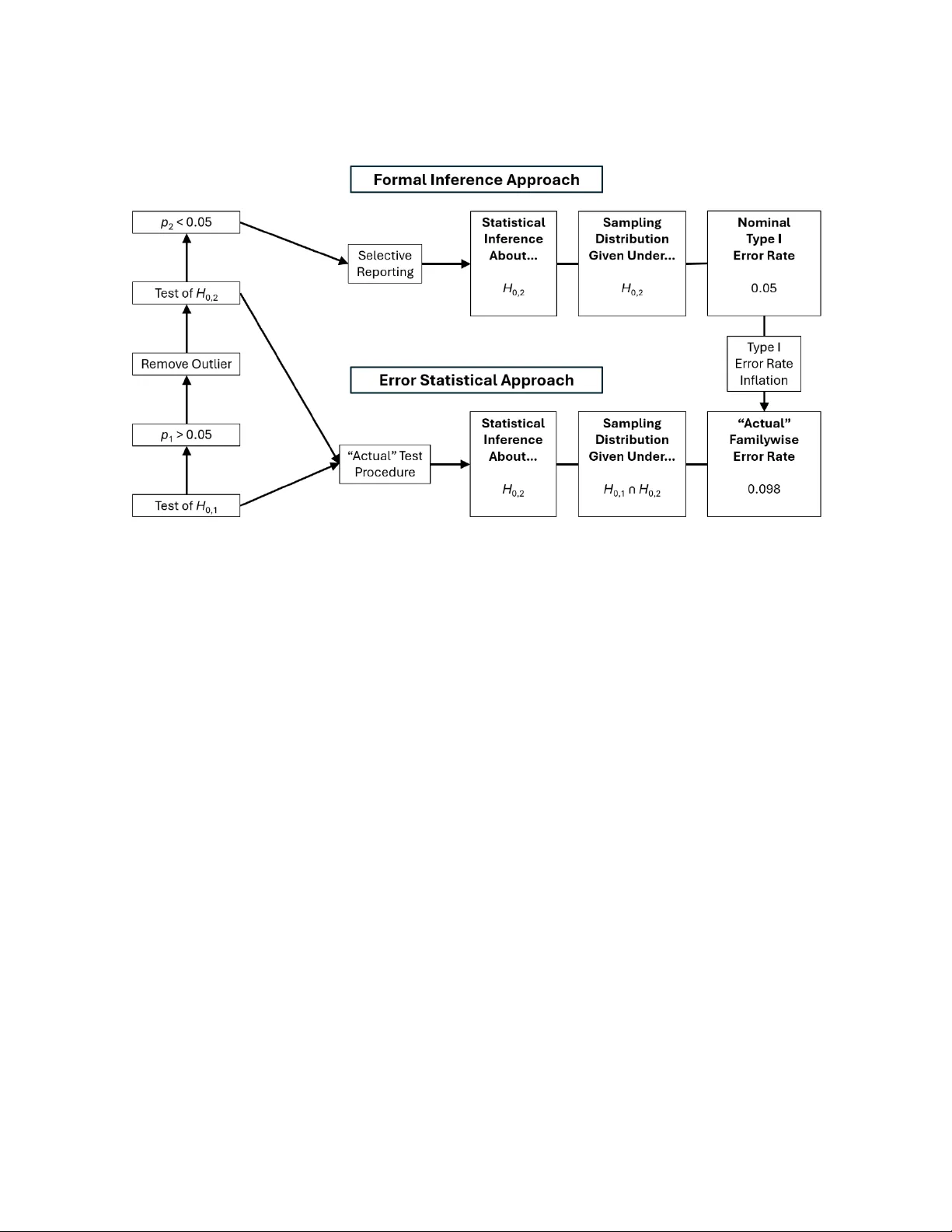

p-Hacking Inflates Type I Error Rates in the Error Statistical Approach but not in the Formal Inference Approach

p-hacking occurs when researchers conduct multiple significance tests (e.g., p1;H0,1 and p2;H0,2) and then selectively report tests that yield desirable (usually significant) results (e.g., p2 < 0.05;H0,2) without correcting for multiple testing (e.g…

Authors: Mark Rubin