Detecting Where Effects Occur by Testing Hypotheses in Order

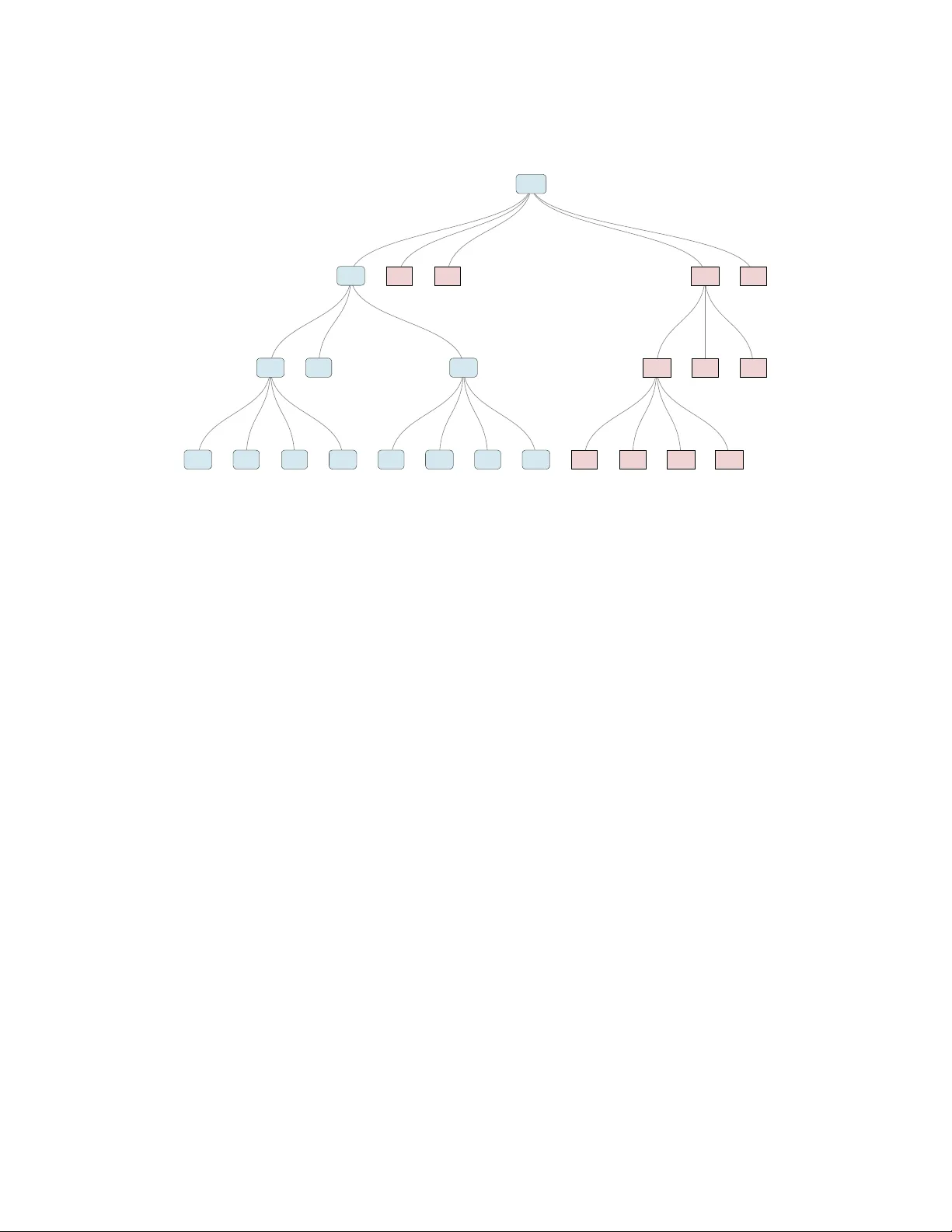

Experimental evaluations of public policies often randomize a new intervention within many sites or blocks. After a report of an overall result, statistically significant or not, the natural question from a policy maker is: *where* did any e…

Authors: Jake Bowers, David Kim, Nuole Chen