Scaling Laws for Precision in High-Dimensional Linear Regression

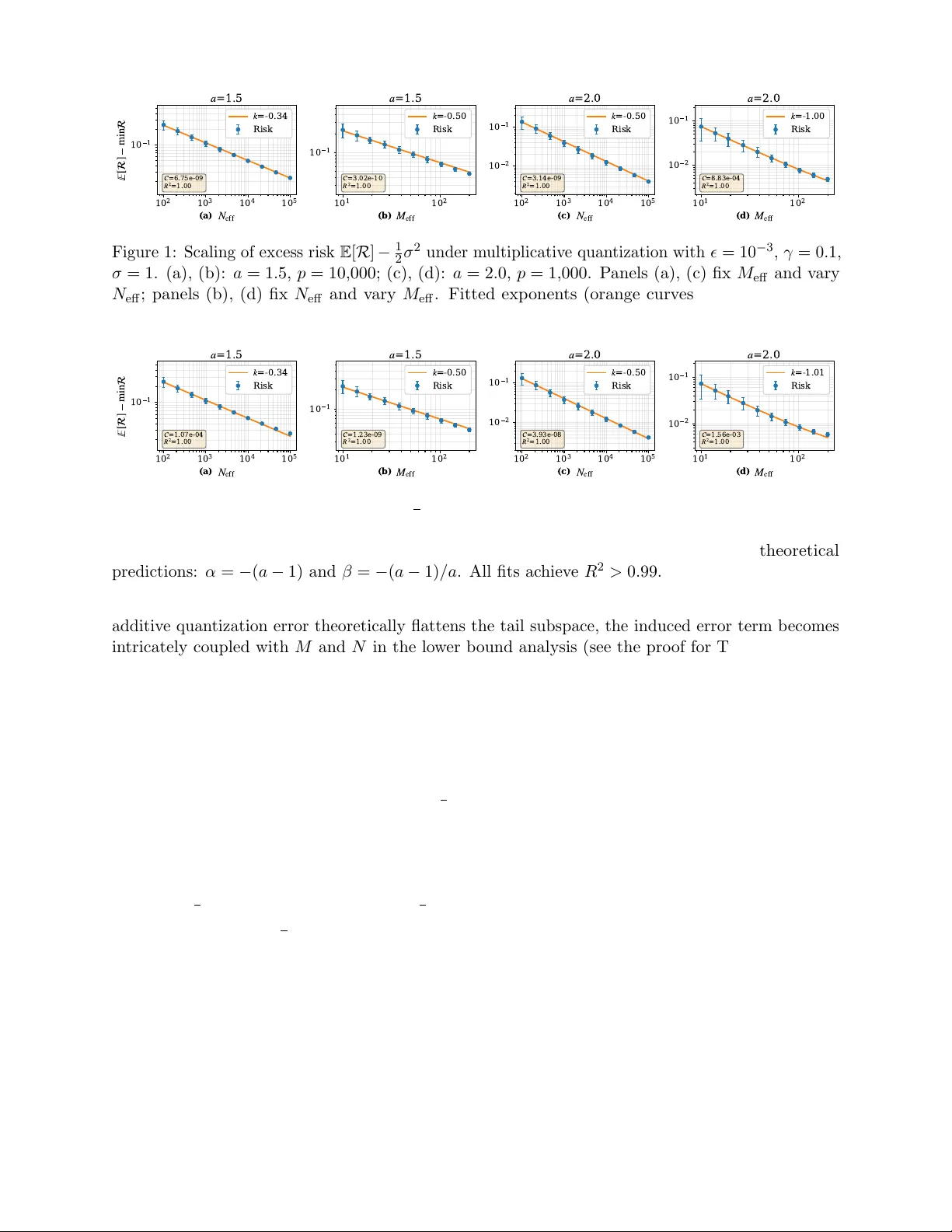

Low-precision training is critical for optimizing the trade-off between model quality and training costs, necessitating the joint allocation of model size, dataset size, and numerical precision. While empirical scaling laws suggest that quantization …

Authors: Dechen Zhang, Xuan Tang, Yingyu Liang