Comparative Evaluation of Machine Learning Models for Predicting Donor Kidney Discard

A kidney transplant can improve both the life expectancy and the quality of life of patients with end-stage renal failure. More patients could receive a transplant if fewer donor kidneys were discarded instead of being transplanted. Ma…

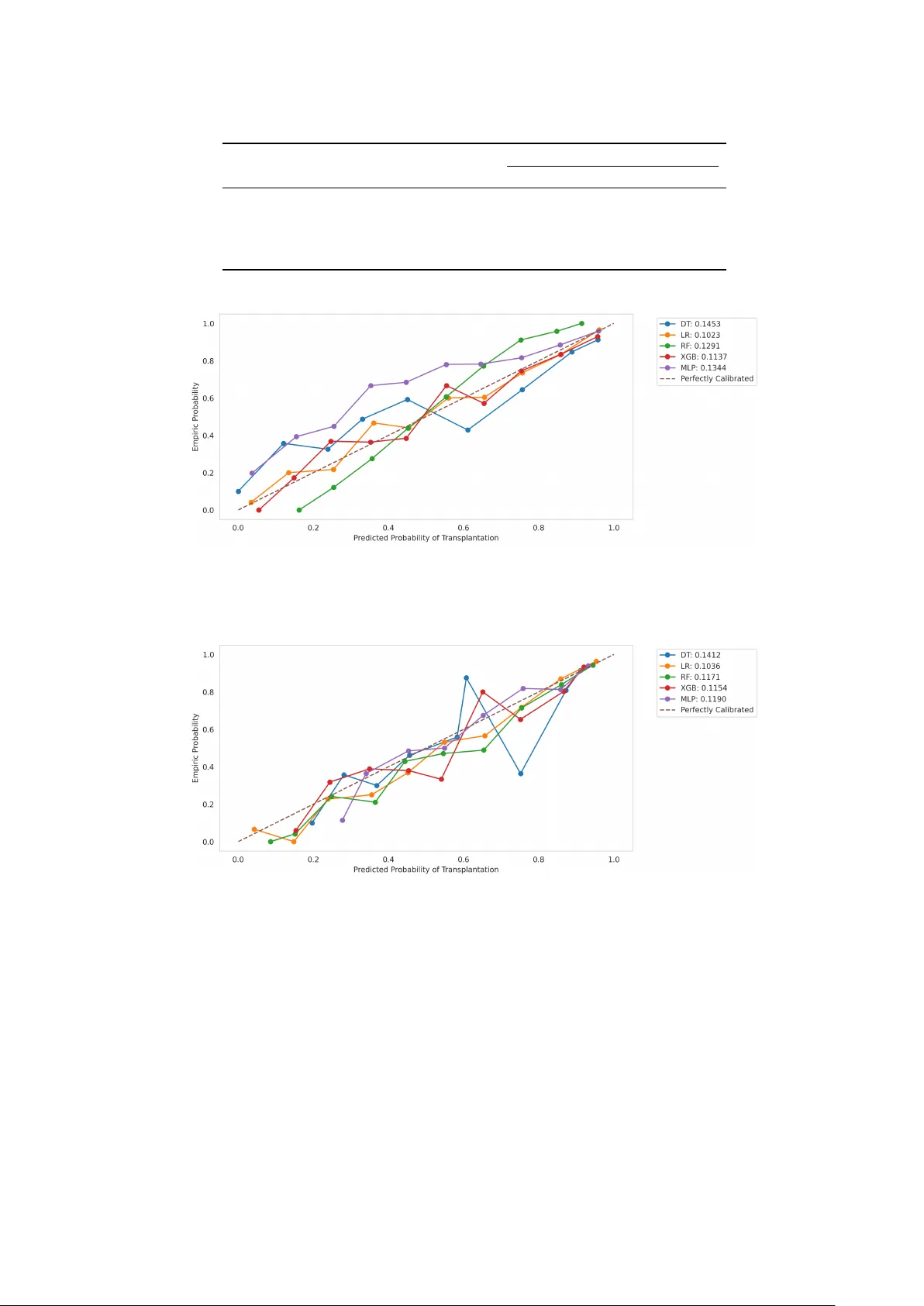

Authors: Peer Schliephacke, Hannah Schult, Leon Mizera