Hawkes Identification with a Prescribed Causal Basis: Closed-Form Estimators and Asymptotics

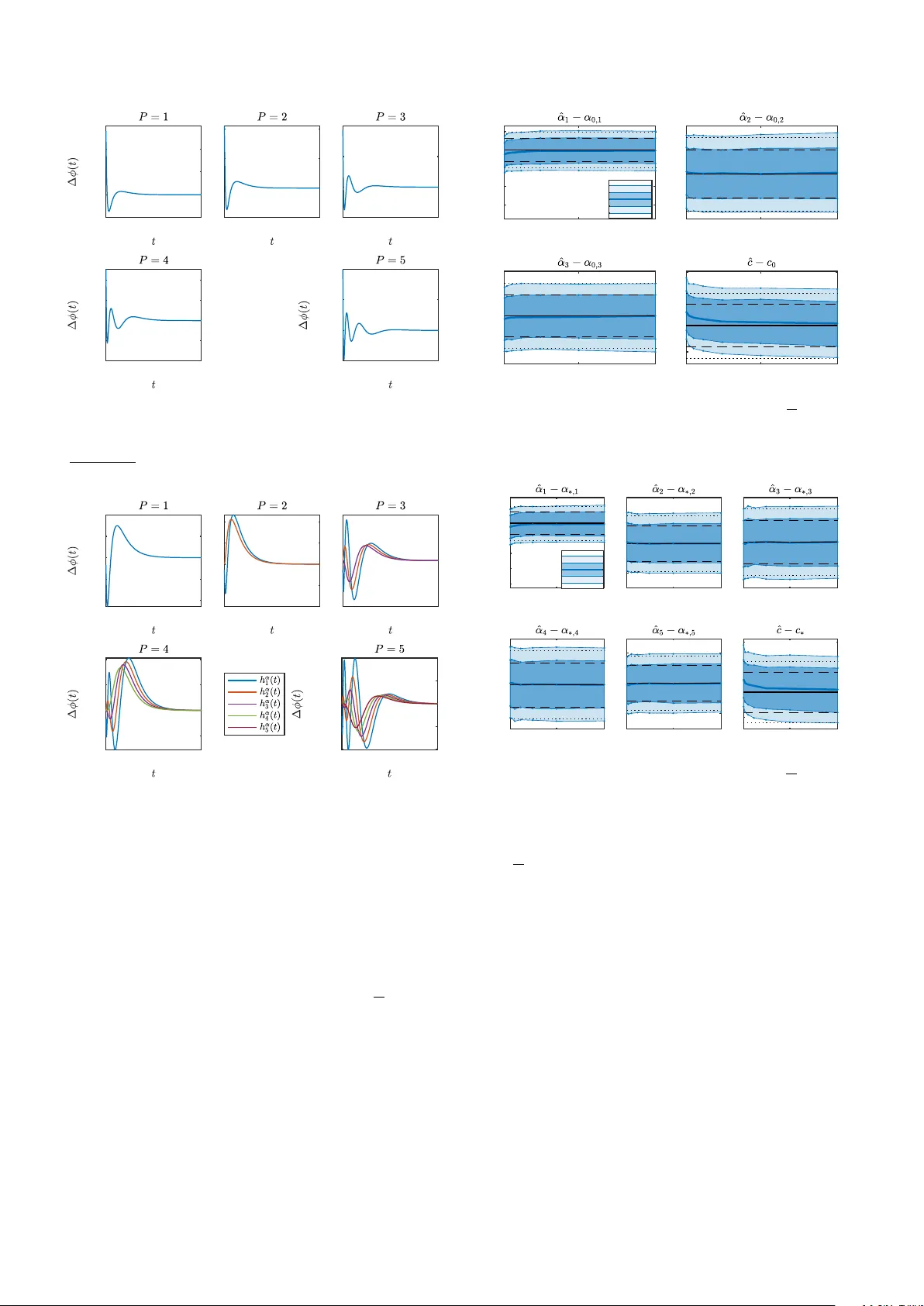

Driven by the recent surge in neural-inspired modeling, point processes have gained significant traction in systems and control. While the Hawkes process is the standard model for characterizing random event sequences with memory, identifying its unk…

Authors: Xinhui Rong, Girish N. Nair