Quantum circuit design from a retraction-based Riemannian optimization framework

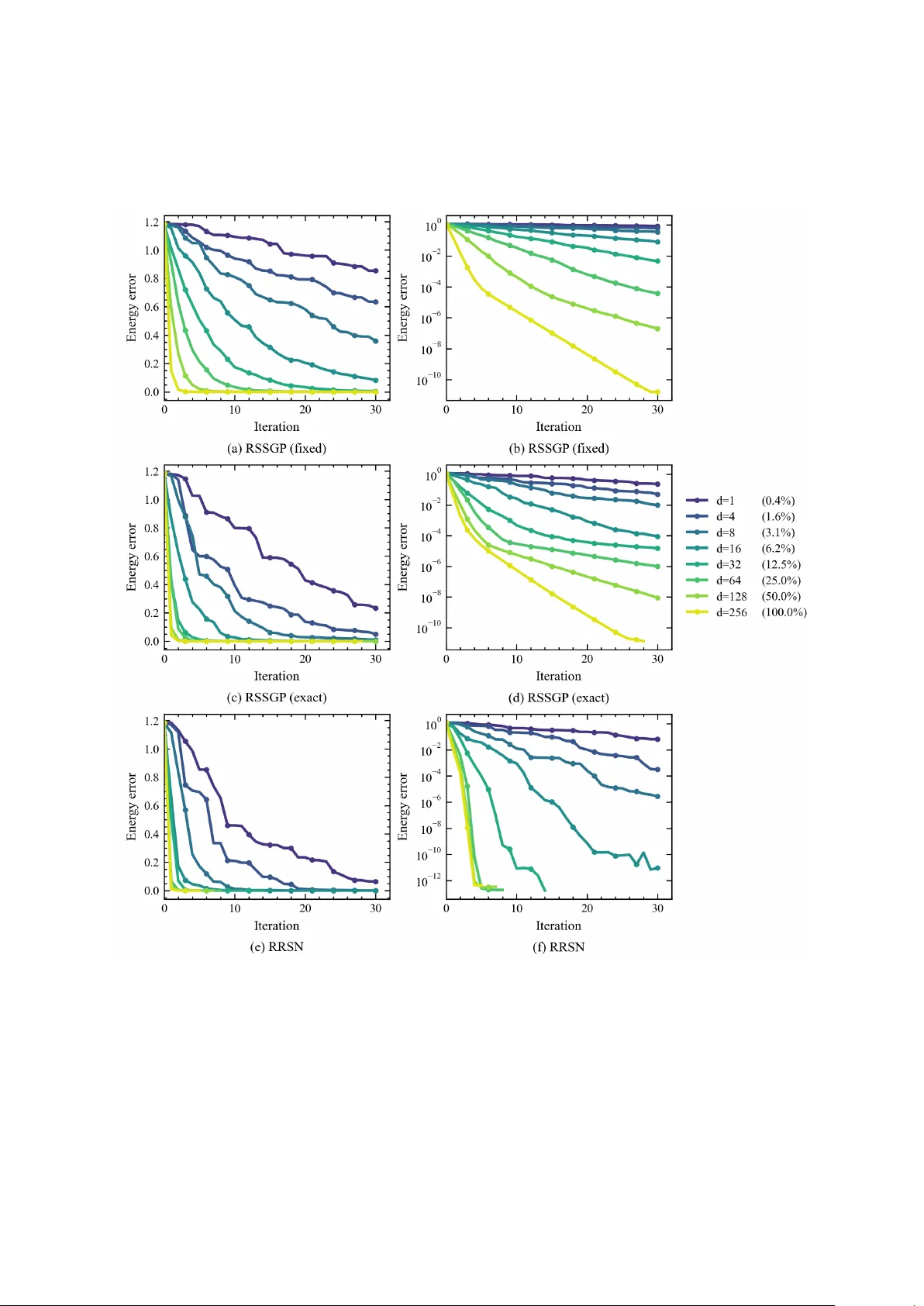

Designing quantum circuits for ground-state preparation is a fundamental task in quantum information science. However, standard Variational Quantum Algorithms (VQAs) are often constrained by limited ansatz expressivity and difficult optimization land…

Authors: Zhijian Lai, Hantao Nie, Jiayuan Wu