The eigenvalues of i.i.d. matrices are hyperuniform

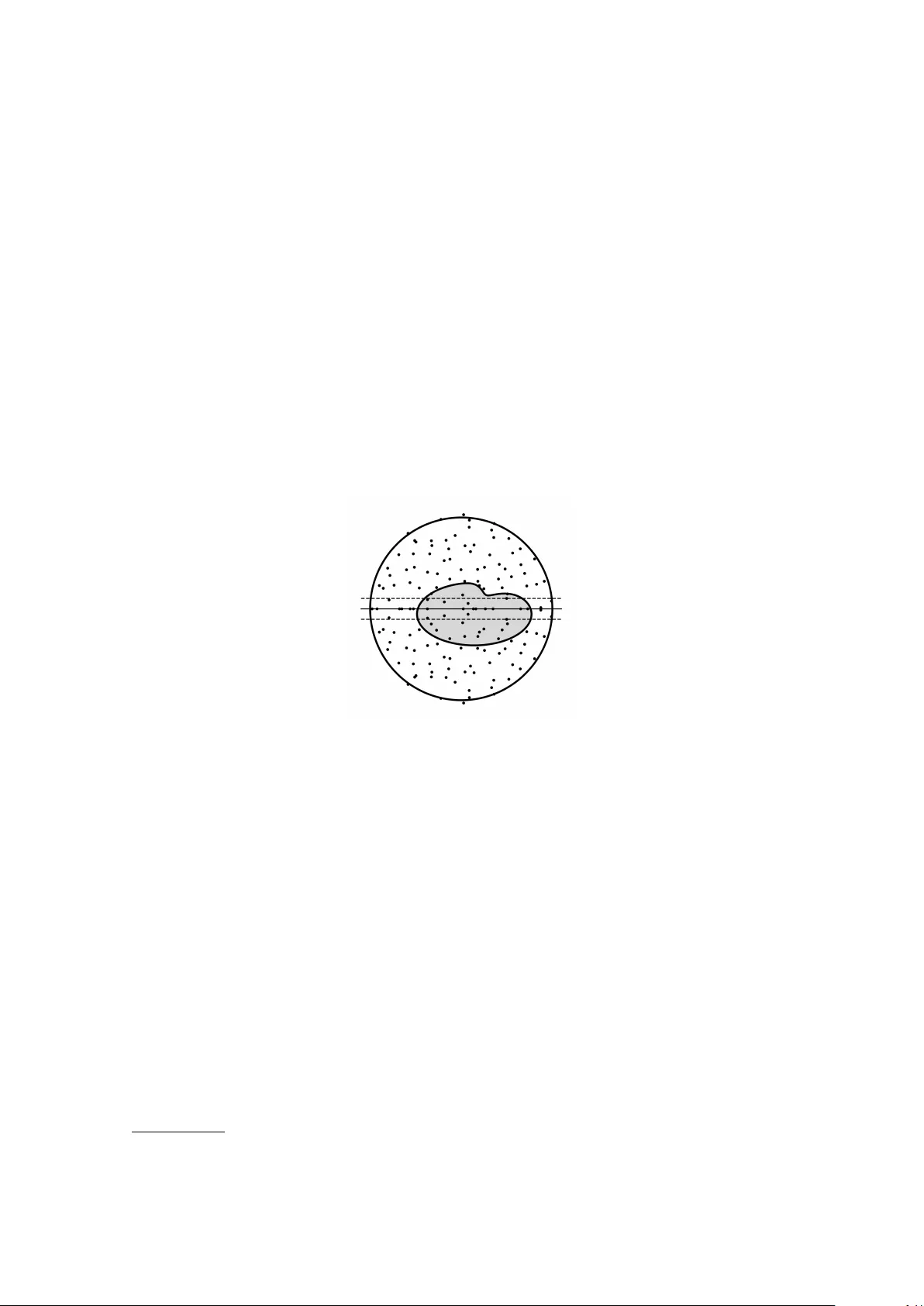

We prove that the point process of the eigenvalues of real or complex non-Hermitian matrices $X$ with independent, identically distributed entries is hyperuniform: the variance of the number of eigenvalues in a subdomain $\Omega$ of the spectrum is much s…

Authors: Giorgio Cipolloni, László Erdős, Oleksii Kolupaiev
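
The hyperuniformity statement in the abstract can be probed numerically. The following is only a minimal sketch under assumptions that are not taken from the paper: the entries are complex Gaussian with variance $1/n$ (one particular i.i.d. case), $\Omega$ is a disk of radius `radius` centred at the origin, and the names `count_in_disk` and `number_variance`, along with the values of `n`, `radius`, and `trials`, are hypothetical choices made for illustration. It estimates the mean and variance of the number of eigenvalues in $\Omega$ by Monte Carlo sampling.

```python
import numpy as np


def count_in_disk(n, radius, rng):
    """Count eigenvalues of one n x n i.i.d. matrix inside the disk |z| < radius.

    Assumption: complex Gaussian entries with E|x_ij|^2 = 1/n, so the
    spectrum approximately fills the unit disk for large n.
    """
    real = rng.standard_normal((n, n))
    imag = rng.standard_normal((n, n))
    x = (real + 1j * imag) / np.sqrt(2 * n)
    eigenvalues = np.linalg.eigvals(x)
    return int(np.sum(np.abs(eigenvalues) < radius))


def number_variance(n=100, radius=0.5, trials=100, seed=0):
    """Monte Carlo estimate of the mean and variance of the eigenvalue count."""
    rng = np.random.default_rng(seed)
    counts = np.array([count_in_disk(n, radius, rng) for _ in range(trials)])
    return counts.mean(), counts.var(ddof=1)


mean_count, var_count = number_variance()
print(f"mean count = {mean_count:.2f}, sample variance = {var_count:.2f}")
```

For a Poisson point process the variance of the count equals its mean, so a sample variance well below the sample mean is consistent with the suppressed fluctuations described above. A simulation at one matrix size is an illustration only and says nothing about the rate proved in the paper.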