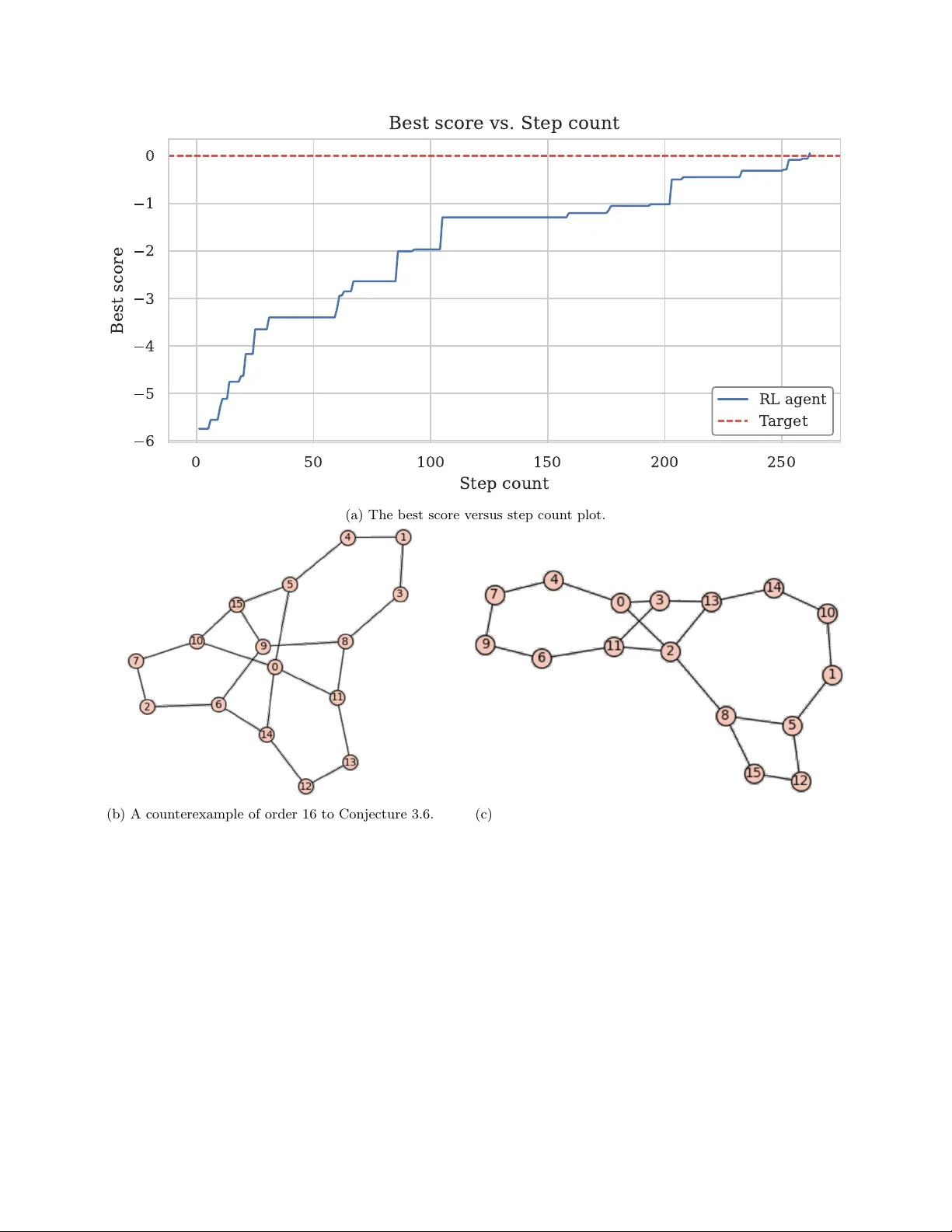

RLGT: A reinforcement learning framework for extremal graph theory

Reinforcement learning (RL) is a subfield of machine learning that focuses on developing models that can autonomously learn optimal decision-making strategies over time. In a recent pioneering paper, Wagner demonstrated how the Deep Cross-Entropy RL …

Authors: *Author information is not stated in the body of the paper and is therefore not provided.*