Semi-Supervised Learning on Graphs using Graph Neural Networks

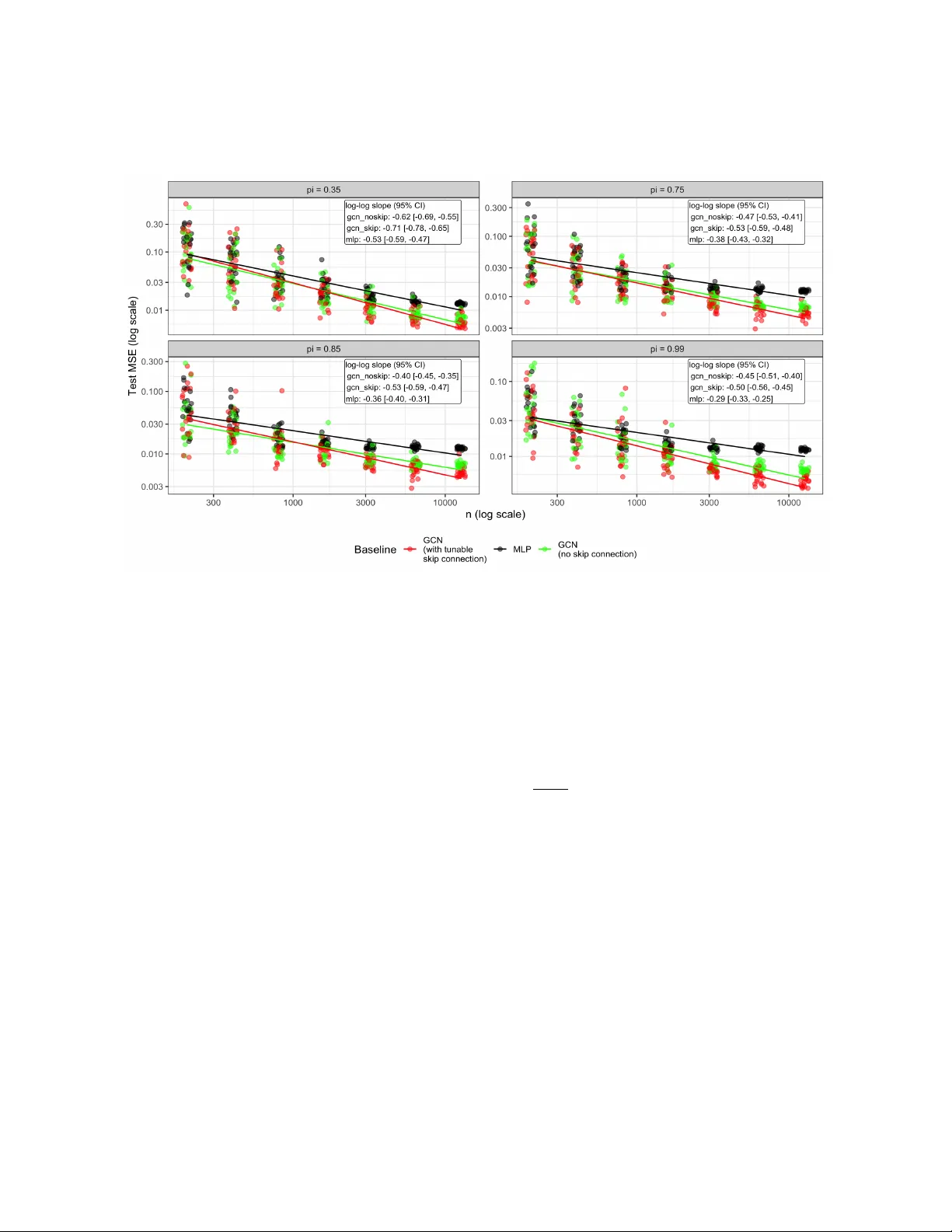

Graph neural networks (GNNs) work remarkably well in semi-supervised node regression, yet a rigorous theory explaining when and why they succeed remains lacking. To address this gap, we study an aggregate-and-readout model that encompasses several co…

Authors: Juntong Chen, Claire Donnat, Olga Klopp