M-estimation under Two-Phase Multiwave Sampling with Applications to Prediction-Powered Inference

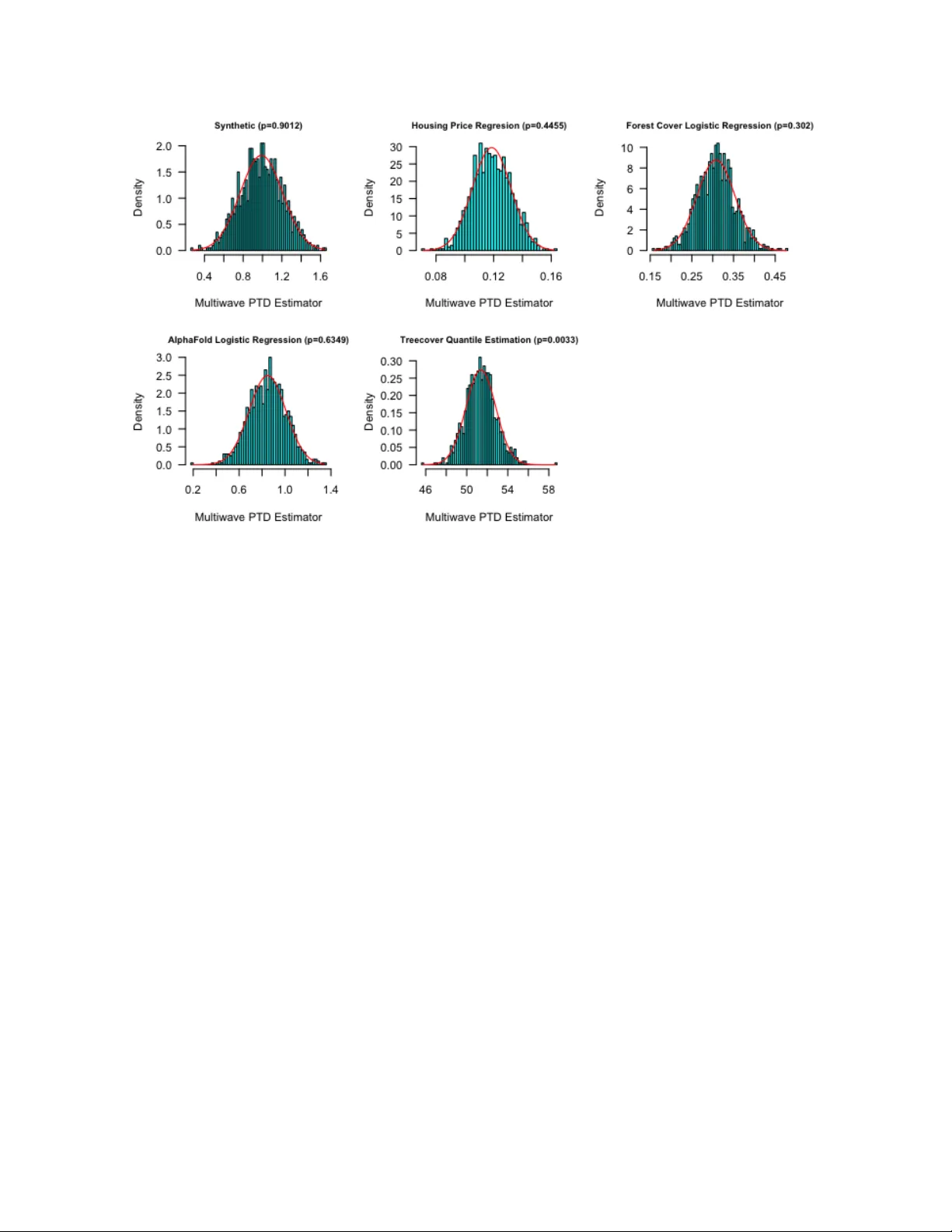

In two-phase multiwave sampling, inexpensive measurements are collected on a large sample and expensive, more informative measurements are adaptively obtained on subsets of units across multiple waves. Adaptively collecting the expensive measurements…
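The abstract describes the design only in words. The sketch below illustrates it under stated assumptions and is not the authors' M-estimation procedure. A cheap measurement `x` is known for every unit (phase 1). In each of several waves, units are selected for the expensive measurement `y` with probabilities computed only from earlier waves (phase 2). Several pieces are hypothetical choices made for illustration: the helper names `choose_probs` and `multiwave_estimate`, the two-stratum adaptive rule, the independent Bernoulli selection within each wave, the difference estimator that treats `x` as a prediction of `y`, and the synthetic data. Because each wave's probabilities depend only on the past, each per-wave estimate is conditionally unbiased for the mean of `y`, so their average is unbiased.

```python
import random
import statistics


def choose_probs(x, measured, budget, eps=0.02):
    """Hypothetical adaptive rule giving one wave's selection probabilities.

    Units are split into two strata at the median of the cheap measurement x.
    Each stratum is scored by the mean absolute residual |y - x| observed so far
    in that stratum (1.0 if none measured yet); probabilities are proportional
    to the score, scaled so the expected wave size is about `budget`, and then
    clipped to [eps, 1].
    """
    med = statistics.median(x)
    strata = [0 if xi <= med else 1 for xi in x]
    scores = []
    for s in (0, 1):
        resid = [abs(yi - x[i]) for i, yi in measured.items() if strata[i] == s]
        scores.append(max(statistics.mean(resid), 1e-3) if resid else 1.0)
    raw = [scores[s] for s in strata]
    scale = budget / sum(raw)
    return [min(1.0, max(eps, r * scale)) for r in raw]


def multiwave_estimate(x, y_oracle, waves=3, budget=50, seed=0):
    """Estimate mean(y) under a simulated two-phase multiwave design.

    Phase 1: the cheap measurement x[i] is known for every unit.
    Phase 2: in each wave, unit i is selected independently with probability
    p[i], computed only from data gathered in earlier waves; the expensive
    measurement y_oracle(i) is then collected for newly selected units.
    Returns (average of the per-wave estimates, number of units measured).
    """
    rng = random.Random(seed)
    n = len(x)
    measured = {}  # unit index -> expensive measurement y
    wave_estimates = []
    for _ in range(waves):
        p = choose_probs(x, measured, budget)  # uses past waves only
        selected = [i for i in range(n) if rng.random() < p[i]]
        for i in selected:
            if i not in measured:  # pay for each expensive measurement once
                measured[i] = y_oracle(i)
        # Difference estimator: x[i] serves as a prediction of y[i] for all
        # units, plus an inverse-probability-weighted residual correction.
        correction = sum((measured[i] - x[i]) / p[i] for i in selected)
        wave_estimates.append((sum(x) + correction) / n)
    return statistics.mean(wave_estimates), len(measured)


# Synthetic population (made-up data): y is a noisy version of the proxy x.
gen = random.Random(1)
x = [gen.uniform(0, 10) for _ in range(2000)]
y = [xi + gen.gauss(0, 0.5 + 0.3 * xi) for xi in x]
est, n_measured = multiwave_estimate(x, lambda i: y[i], waves=3, budget=60)
print(f"estimate={est:.3f} true mean={statistics.mean(y):.3f} measured={n_measured}")
```

A unit reselected in a later wave reuses its stored expensive measurement, so the design never pays for the same measurement twice. Every wave still contributes its own inverse-probability-weighted correction.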

Authors: Dan M. Kluger, Stephen Bates