Machine Learning in Epidemiology

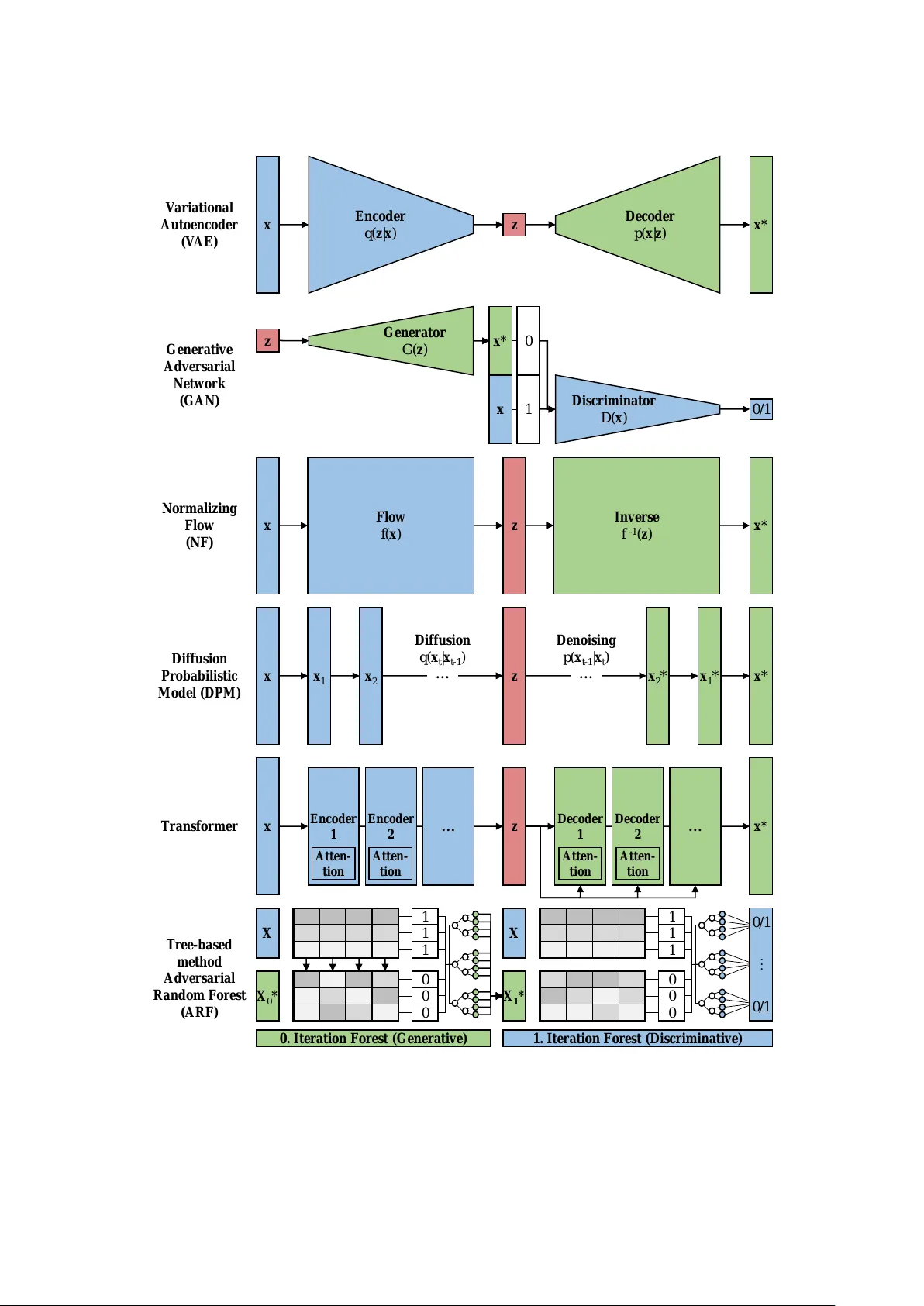

In the age of digital epidemiology, epidemiologists face a growing volume of data of increasing complexity and dimensionality. Machine learning provides a set of powerful tools for analyzing such large amounts of data. This chapter l…

Authors: *Author information is not given in the text (if possible, check the original source or the publisher's website).*