Partial Identification under Missing Data Using Weak Shadow Variables from Pretrained Models

Estimating population quantities such as mean outcomes from user feedback is fundamental to platform evaluation and social science, yet feedback is often missing not at random (MNAR): users with stronger opinions are more likely to respond, so standa…
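The selection mechanism described in the abstract can be illustrated with a small simulation. This is a minimal sketch under assumed choices: the rating distribution, the response probabilities, and the helper names (`response_prob`, `simulate`, `worst_case_bounds`) are hypothetical and do not come from the paper. Because response depends on the rating itself, the respondent mean drifts away from the population mean. The sketch also computes classical worst-case (Manski) bounds, the standard assumption-free partial-identification baseline for a bounded outcome. It is not the weak-shadow-variable method of this paper, which the truncated abstract does not describe.

```python
import random
from statistics import mean

# Hypothetical rating distribution on a 1-5 scale (assumed for illustration only).
RATINGS = [1, 2, 3, 4, 5]
WEIGHTS = [0.05, 0.10, 0.40, 0.30, 0.15]


def response_prob(y: int) -> float:
    """Assumed MNAR mechanism: the chance of responding depends on the rating
    itself and rises with its distance from the neutral rating 3."""
    return {0: 0.1, 1: 0.3, 2: 0.8}[abs(y - 3)]


def simulate(n: int, seed: int = 0):
    """Draw n users' ratings and keep only those who chose to respond."""
    rng = random.Random(seed)
    ys = rng.choices(RATINGS, weights=WEIGHTS, k=n)
    observed = [y for y in ys if rng.random() < response_prob(y)]
    return ys, observed


def worst_case_bounds(observed, n, y_min=1.0, y_max=5.0):
    """Worst-case (Manski) bounds on the population mean: every missing
    outcome is set to y_min for the lower bound and to y_max for the upper."""
    p = len(observed) / n   # response rate
    m = mean(observed)      # respondent (naive) mean
    lower = p * m + (1 - p) * y_min
    upper = p * m + (1 - p) * y_max
    return lower, upper


ys, observed = simulate(100_000)
true_mean = mean(ys)          # population mean, unknown in practice
naive_mean = mean(observed)   # mean over respondents only
lower, upper = worst_case_bounds(observed, len(ys))

print(f"population mean: {true_mean:.3f}")
print(f"respondent mean: {naive_mean:.3f}")
print(f"worst-case bounds: [{lower:.3f}, {upper:.3f}]")
```

Under these assumed parameters the population mean is 3.4, while the respondent mean converges to about 3.69, because extreme raters are overrepresented and the rating distribution is skewed upward. The worst-case interval always contains the population mean but is wide (about [1.86, 4.58] in the large-sample limit), which illustrates why additional information, such as the shadow variables in the title, is needed to tighten the identified set.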

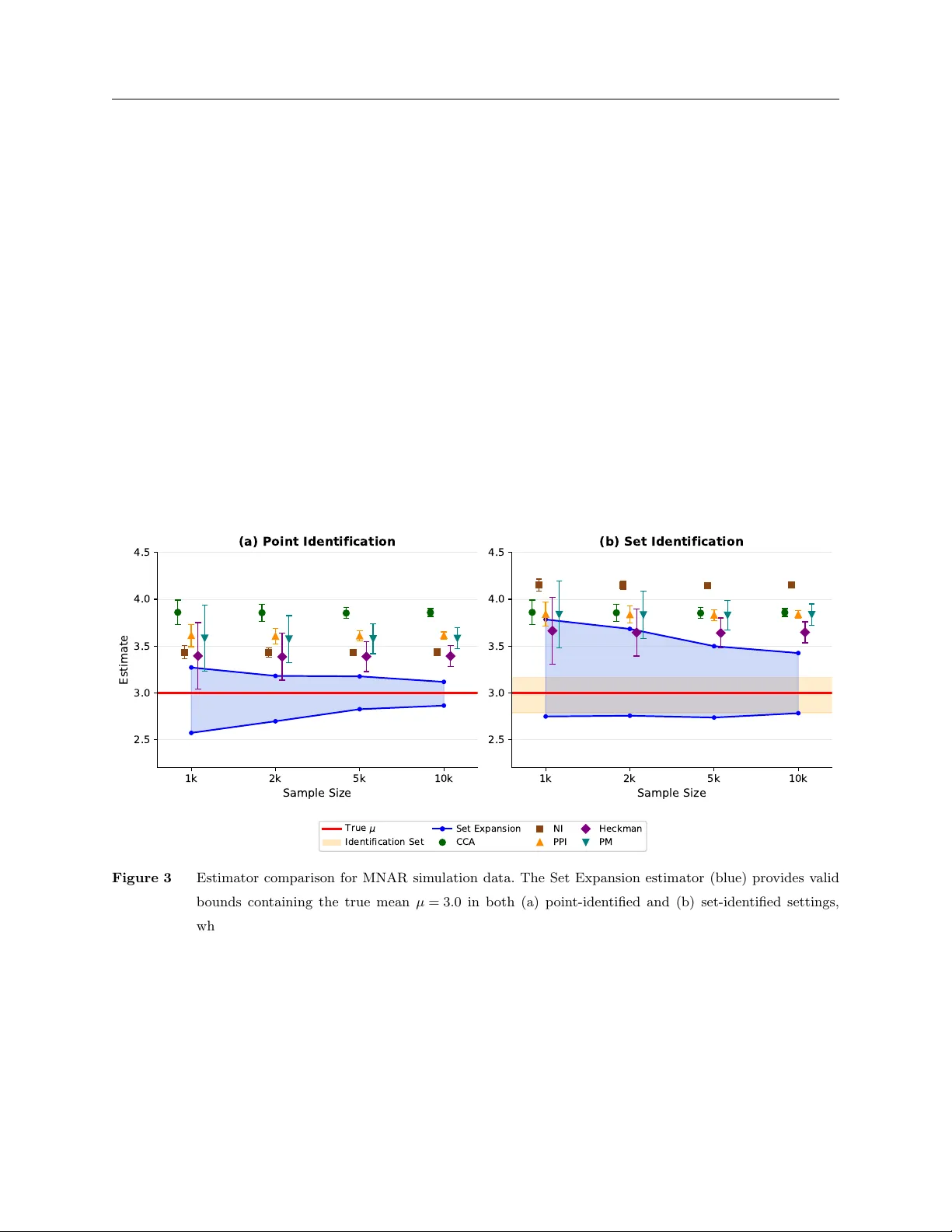

Authors: Hongyu Chen, David Simchi-Levi, Ruoxuan Xiong