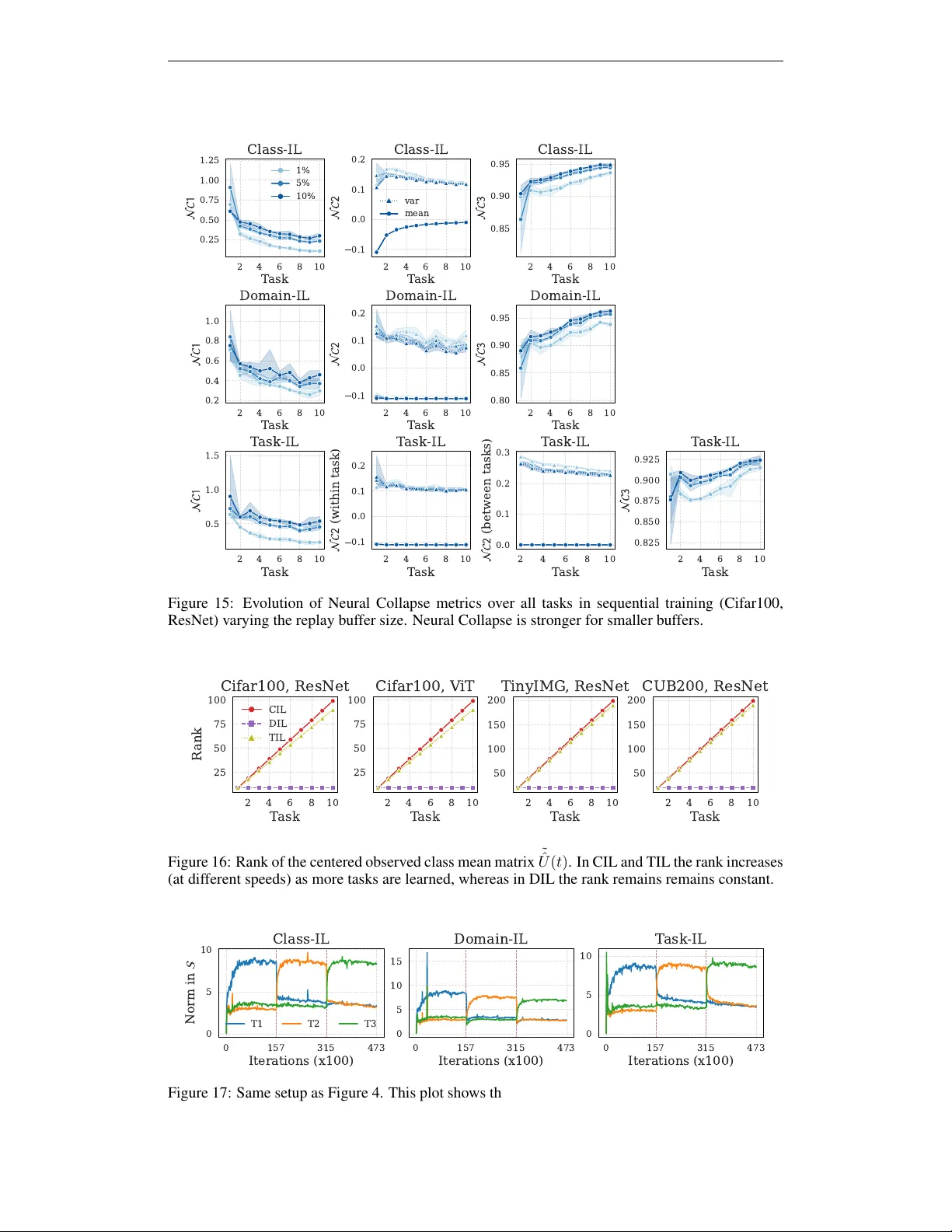

Asymmetry of Deep and Shallow Forgetting in Experience Replay: Small Buffers Preserve the Feature Space but Distort the Classification Boundary

A persistent paradox in continual learning (CL) is that neural networks often retain linearly separable representations of past tasks even when their output predictions fail. We formalize this distinction as the gap between deep (feature-space) and s…

Authors: Giulia Lanzillotta, Damiano Meier, Thomas Hofmann
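The abstract separates forgetting in the feature space ("deep") from forgetting at the output ("shallow"). Because the abstract is truncated, the following is only a minimal sketch under stated assumptions and not the authors' protocol. It assumes shallow performance is the accuracy of the old, unchanged classifier head on past-task features, and that deep performance is the accuracy of a freshly fitted linear probe (here a plain perceptron) on the same frozen features. All function names and the toy "drifted" features are hypothetical. For brevity the probe is scored on the data it was fitted on; a real measurement would use held-out samples.

```python
from typing import List, Sequence, Tuple


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    """Binary linear classifier: 1 if w.x + b > 0, else 0."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score > 0 else 0


def accuracy(w: Sequence[float], b: float,
             xs: Sequence[Sequence[float]], ys: Sequence[int]) -> float:
    """Fraction of samples the linear classifier (w, b) labels correctly."""
    correct = sum(1 for x, y in zip(xs, ys) if predict(w, b, x) == y)
    return correct / len(xs)


def fit_linear_probe(xs: Sequence[Sequence[float]], ys: Sequence[int],
                     max_epochs: int = 1000) -> Tuple[List[float], float]:
    """Hypothetical probe: a perceptron trained on frozen features.

    It converges to zero training errors whenever the features are
    linearly separable, i.e. when the representation is still intact.
    """
    w = [0.0] * len(xs[0])
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(xs, ys):
            target = 1 if y == 1 else -1
            score = sum(wi * xi for wi, xi in zip(w, x)) + b
            if target * score <= 0:  # misclassified or on the boundary
                w = [wi + target * xi for wi, xi in zip(w, x)]
                b += target
                mistakes += 1
        if mistakes == 0:
            break
    return w, b


def forgetting_gap(old_w: Sequence[float], old_b: float,
                   xs: Sequence[Sequence[float]],
                   ys: Sequence[int]) -> Tuple[float, float, float]:
    """Return (old-head accuracy, probe accuracy, probe minus old-head)."""
    shallow_acc = accuracy(old_w, old_b, xs, ys)
    probe_w, probe_b = fit_linear_probe(xs, ys)
    deep_acc = accuracy(probe_w, probe_b, xs, ys)
    return shallow_acc, deep_acc, deep_acc - shallow_acc


# Toy past-task features after drift (invented for illustration):
# both classes moved to x > 0, so the old boundary at x = 0 no longer
# separates them, yet they remain separable at x = 2.
offsets = (-1.0, -0.5, 0.0, 0.5, 1.0)
xs = [(1.0, o) for o in offsets] + [(3.0, o) for o in offsets]
ys = [0] * 5 + [1] * 5

shallow, deep, gap = forgetting_gap((1.0, 0.0), 0.0, xs, ys)
print(shallow, deep, gap)  # 0.5 1.0 0.5
```

In this toy case the old head labels every sample as class 1 and scores 0.5, while the probe recovers 1.0: the features survived, only the decision boundary was lost. A perceptron is used instead of logistic regression purely to keep the sketch dependency-free.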