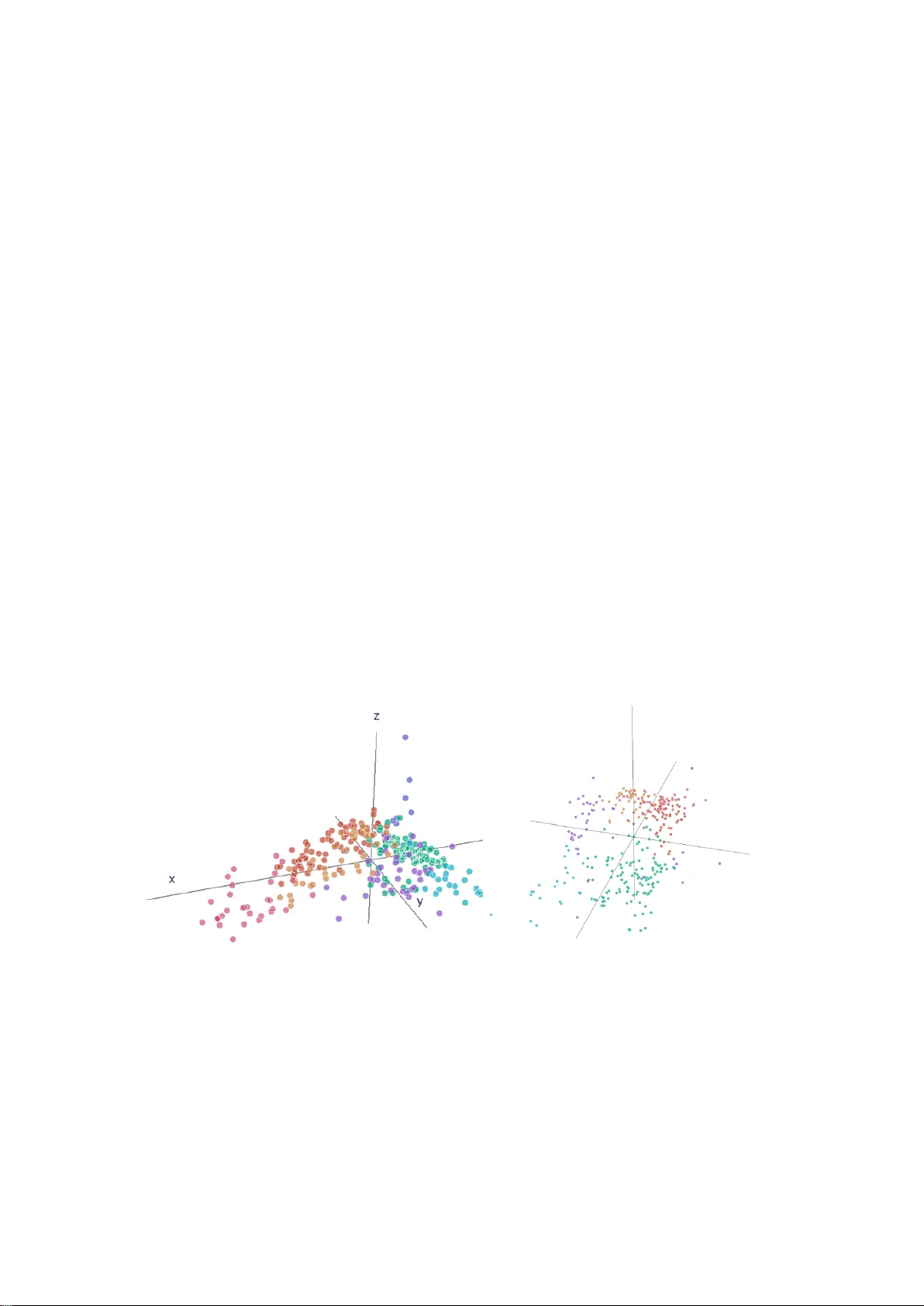

The geometry of financial institutions -- Wasserstein clustering of financial data

The increasing availability of granular and big data on various objects of interest has made it necessary to develop methods for condensing this information into a representative and intelligible map. Financial regulation is a field that exemplifies …

Authors: Lorenz Riess, Mathias Beiglböck, Johannes Temme