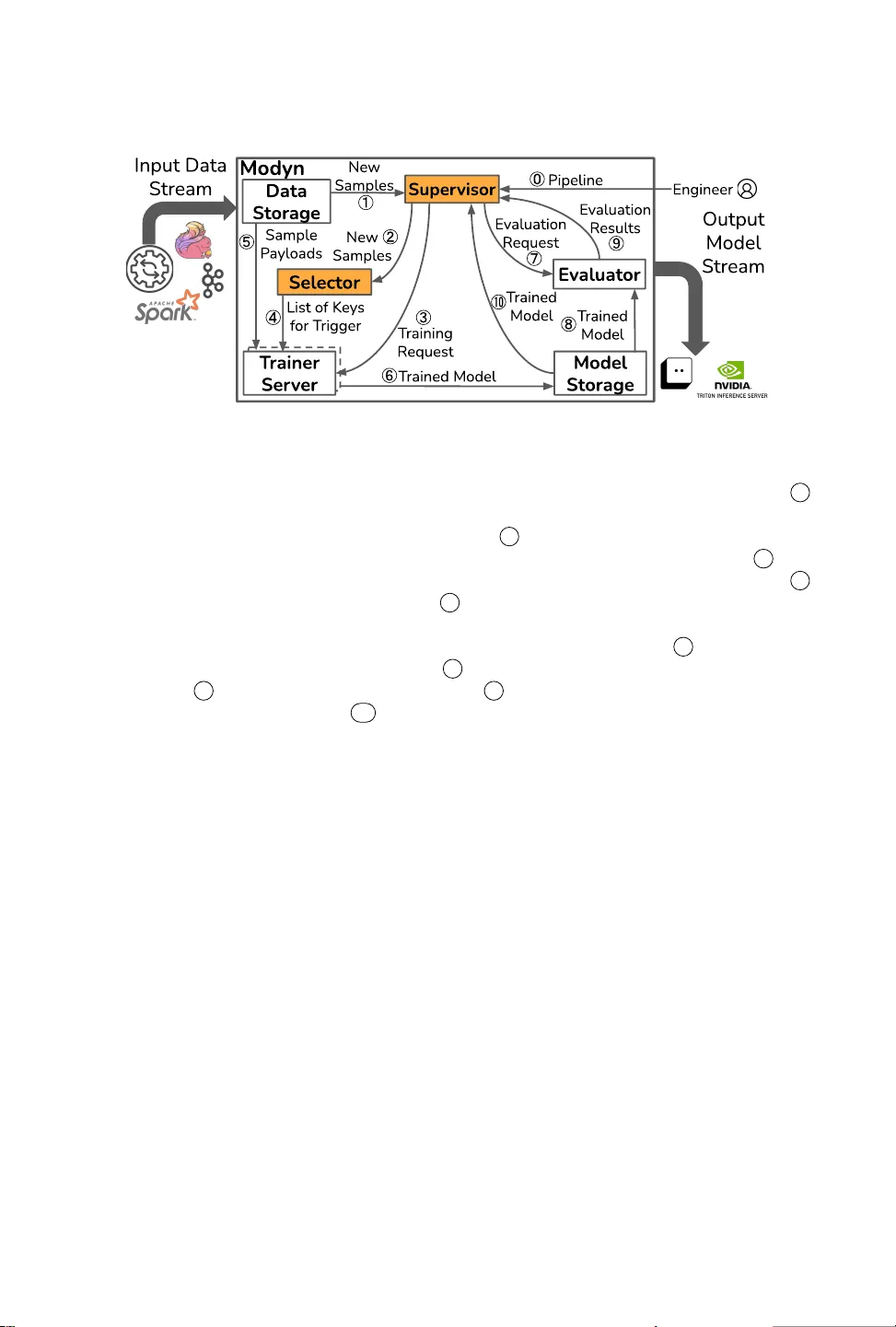

Modyn: Data-Centric Machine Learning Pipeline Orchestration

In real-world machine learning (ML) pipelines, datasets are continuously growing. Models must incorporate this new training data to improve generalization and adapt to potential distribution shifts. The cost of model retraining is proportional to how…

Authors: Maximilian Böther, Ties Robroek, Viktor Gsteiger