CLaMP 2: Multimodal Music Information Retrieval Across 101 Languages Using Large Language Models

Challenges in managing linguistic diversity and integrating various musical modalities are faced by current music information retrieval systems. These limitations reduce their effectiveness in a global, multimodal music environment. To address these …

Authors: Shangda Wu, Yashan Wang, Ruibin Yuan

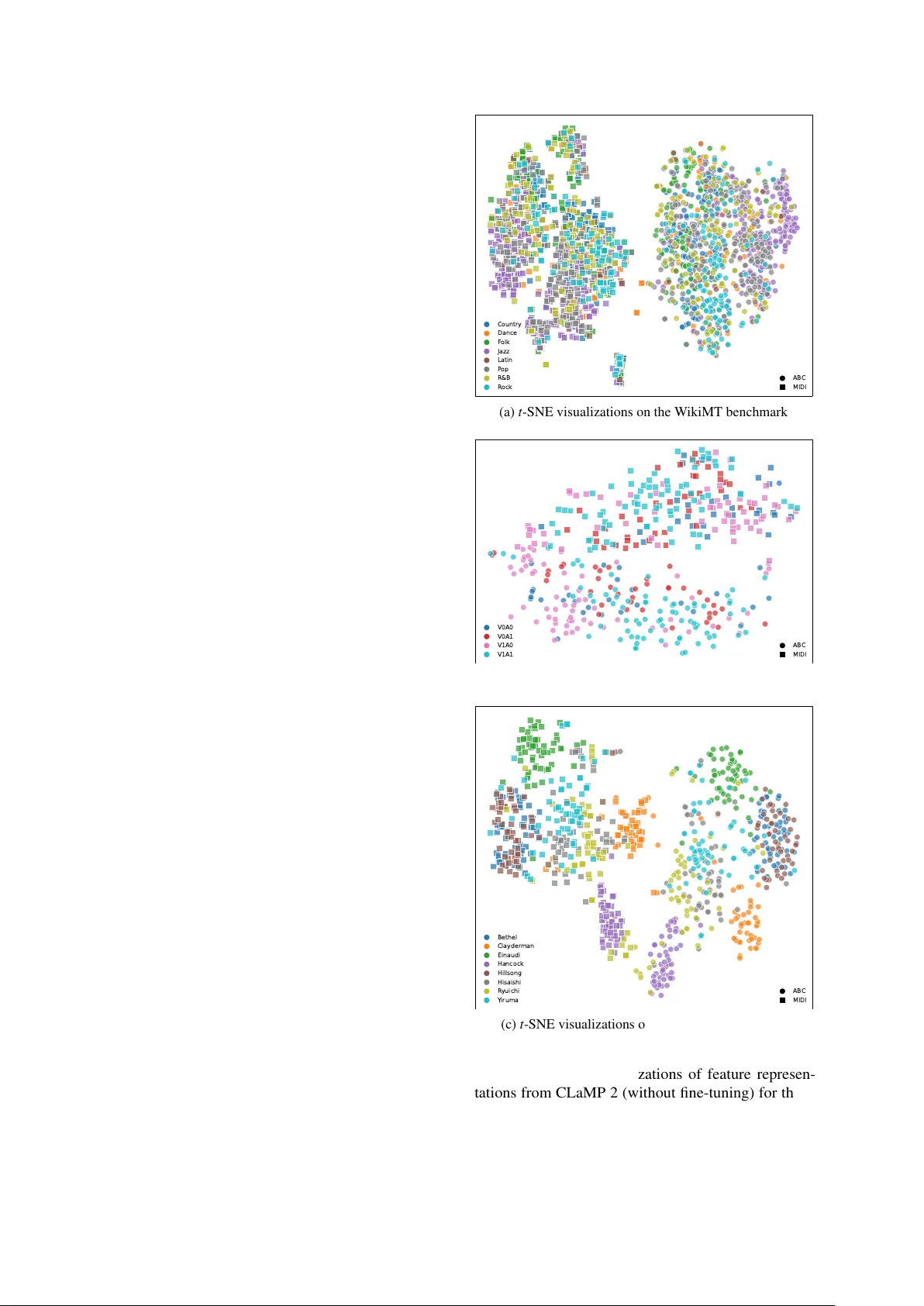

CLaMP 2: Multimodal Music Inf ormation Retriev al Acr oss 101 Languages Using Large Language Models Shangda W u Y ashan W ang Ruibin Y uan Zhancheng Guo Xu T an Ge Zhang Monan Zhou Jing Chen Xuefeng Mu Y uejie Gao Y uanliang Dong Jiafeng Liu Xiaobing Li F eng Y u Maosong Sun Details of contributors, correspondence, and af filiations are on Page 9 https://github.com/sanderwood/clamp2 Abstract Challenges in managing linguistic di versity and integrating various musical modalities are faced by current music information retriev al systems. These limitations reduce their ef fectiv eness in a global, multimodal music en vironment. T o address these issues, we introduce CLaMP 2, a system compatible with 101 languages that supports both ABC notation (a te xt-based musi- cal notation format) and MIDI (Musical Instru- ment Digital Interface) for music information retriev al. CLaMP 2, pre-trained on 1.5 mil- lion ABC-MIDI-text triplets, includes a multi- lingual text encoder and a multimodal music encoder aligned via contrastive learning. By lev eraging large language models, we obtain refined and consistent multilingual descriptions at scale, significantly reducing textual noise and balancing language distribution. Our ex- periments show that CLaMP 2 achie ves state- of-the-art results in both multilingual semantic search and music classification across modali- ties, thus establishing a new standard for inclu- siv e and global music information retrie val. 1 Introduction As a cross-cultural art form that transcends geo- graphical boundaries, music is being accessed glob- ally more than ev er , as people seek div erse content to enhance their aesthetic experience. Ho wev er , current Music Information Retrie val (MIR) sys- tems struggle to meet this demand, particularly in the area of multilingual retriev al. F or example, a Japanese user searching for "Brazilian chor o mu- sic with themes of celebration and car efr eeness" in their nati ve language may face significant chal- lenges. K eyword-based retriev al methods might return choro music, but they often fail to capture the specific themes the user is searching for . Mean- while, existing cross-modal MIR models remain heavily focused on English ( Huang et al. , 2022 ; Elizalde et al. , 2023 ; Doh et al. , 2023b ), making ef fectiv e multilingual semantic search challenging. A ke y limitation in the dev elopment of multilin- gual MIR systems is that most music-text datasets are predominantly in English ( Agostinelli et al. , 2023 ; Lanzendörfer et al. , 2023 ; Manco et al. , 2023 ). As a result, MIR models struggle to pro- cess text queries in non-English languages. Addi- tionally , te xtual noise—such as inconsistent meta- data and variations in terminology—complicates the task of matching descriptions to the appropri- ate music. Addressing these challenges requires adv anced techniques to manage multilingual data more eff ectiv ely and reduce noise, allowing MIR systems to bridge linguistic and aesthetic gaps. Recent Large Language Models (LLMs) ( Ope- nAI , 2023 ; Meta , 2024 ; Google , 2024 ) hav e demon- strated robust performance in language-related tasks. LLMs hav e been used in previous cross- modal MIR models and music-text dataset curation to generate coherent descriptions and annotations ( Doh et al. , 2023a ; W u et al. , 2023b ; Lu et al. , 2023 ; Melechovský et al. , 2024 ). This has proven ef fectiv e in improving text quality and enhancing model performance. Since LLMs are typically mul- tilingual, they hold significant potential for gener - ating high-quality music descriptions in multiple languages. This could overcome the limitations of current MIR systems and significantly enhance global music accessibility . T o leverage these advancements, we introduce CLaMP 2, a cross-modal MIR model designed to ef fectiv ely link multilingual text with di verse music data. The model includes a text encoder ( Conneau et al. , 2020 ) and a music encoder ( W u et al. , 2023a ), which are aligned by contrastiv e learning ( Sohn , 2016 ; v an den Oord et al. , 2018 ). Pre-trained on a substantial dataset of 1.5 million ABC-MIDI-te xt triplets, CLaMP 2 incorporates LLM-generated text to boost its multilingual processing capabilities. This enables the model to gain a deep understand- ing of musical concepts and their subtleties across v arious languages. Notably , CLaMP 2 supports 101 languages and unifies two symbolic music for - mats—ABC notation and MIDI—with ne w encod- ing methods into one frame work. By enhancing multilingual semantic search and inte grating di- verse music data, CLaMP 2 sets a ne w standard for global MIR, enabling users to access music from a wide range of linguistic and cultural contexts. The contributions of this paper are as follo ws: • W e utilized GPT -4 ( OpenAI , 2023 ) to refine the multilingual corpus used for contrastiv e learning. This reduced noise, balanced lan- guage distrib ution, and improv ed the o verall quality of the pre-training dataset. • W e enhanced an existing music encoder ( W u et al. , 2023a ) to support both ABC notation and MIDI data using novel encoding tech- niques for better musical representation. Em- pirical results prov e that joint training on both modalities enhances extracted feature quality . • CLaMP 2 achie ves state-of-the-art results in multiple MIR tasks, showing that LLM- generated data significantly boosts multilin- gual retrie val performance. 2 Related W ork 2.1 Multilingual Language Models Multilingual Language Models (MLMs), trained on text from v arious languages, play a crucial role in Natural Language Processing (NLP) and related fields. Early MLM research used word embed- dings to represent words of different languages in a shared representation space. F or instance, fastT ext ( Joulin et al. , 2017 ) provided pre-trained word em- beddings for multilingual NLP tasks, enabling the calculation of cross-language similarities. In recent years, more advanced MLMs based on complex neural network architectures ( V asw ani et al. , 2017 ) have been introduced. Examples in- clude mBER T 1 , mBAR T ( Liu et al. , 2020 ), and mT5 ( Xue et al. , 2021 ), all of which e volv ed from their monolingual counterparts ( De vlin et al. , 2019 ; Le wis et al. , 2020 ; Raf fel et al. , 2020 ) and are well- suited to multilingual en vironments. XLM-R ( Con- neau et al. , 2020 ) has shown strong performance in lo w-resource languages, demonstrating the effi- cacy of lar ge-scale multilingual modeling. In con- trast to English-centric models, M2M-100 ( F an 1 https://github.com/google- research/bert/blob/ master/multilingual.md et al. , 2021 ) allo ws direct translation between 100 languages, marking a major step forward in mul- tilingual translation. Additionally , SeamlessM4T ( Meta , 2023b ) overcomes the limitations of tradi- tional translation models by supporting up to 100 languages and enabling translation between speech and text, as well as within the same modality , all in a unified frame work. Lately , LLMs ( Zhipu , 2024 ; Mistral , 2024 ; Al- ibaba , 2024 ) hav e become increasingly multilin- gual to better serve a global audience. By utilizing di verse linguistic data from lar ge training corpora, LLMs ha ve impro ved both their accessibility and usefulness for users around the world. Similarly , cross-modal MIR systems must e volve to support multilingual queries, enabling more inclusiv e re- trie val and interaction across languages. 2.2 A pplications of LLMs in Music Recent adv ancements in LLMs ha ve greatly influ- enced the music field. Specifically , many models and datasets no w le verage LLM-generated te xt to improv e both music understanding and generation. MuseCoco ( Lu et al. , 2023 ) uses LLMs to trans- late musical attributes into coherent, detailed de- scriptions, enabling more precise control ov er mu- sic generation. Similarly , Noise2Music ( Huang et al. , 2023 ) le verages pre-trained LLMs to gen- erate musical descriptions paired with audio data, enriching the dataset with semantically rich cap- tions. Beyond generati ve models, TTMR++ ( Doh et al. , 2024 ) enhances text-to-music retriev al by incorporating detailed descriptions from a fine- tuned LLaMA 2 ( Meta , 2023a ) model alongside metadata, leading to more relev ant and accurate search results. For dataset curation, MidiCaps ( Melechovský et al. , 2024 ) provides ov er 168 thou- sand MIDI files, each paired with detailed musi- cal attributes lik e tempo, ke y , and instrumentation. These attrib utes are then utilized by Claude 3 Opus ( Anthropic , 2024 ) to generate fluent captions for the MIDI files. LP-MusicCaps ( Doh et al. , 2023a ) employs GPT -3.5 T urbo ( Ouyang et al. , 2022 ) to generate music descriptions and explores dif ferent instructions to create div erse captions, resulting in 2.2 million captions and 0.5 million audio clips. Ne vertheless, the aforementioned efforts mainly focus on improving te xt coherence and fluency and are English-exclusi ve. T o the best of our kno wl- edge, CLaMP 2 is the first to lev erage the multilin- gual capabilities of LLMs to improv e multilingual performance in the music field. C l a i r d e L une ( M o o nl i g h t ) S ym p h on y N o. 9 i n D m i no r , O p. 125 E l Cho c l o ( T he Co r n Co b) Am a z i n g G r a ce 十面埋伏 ( A m bus h f r o m A l l S i de s ) L a rg e L an gu ag e M od el U n a v i v a ce m e l od i a d i d a n z a a r g e n t i n a ( A l i v e l y Ar g e n t i n e d a n ce t u n e ) 역사적인 전투를 묘사한 강렬한 곡 ( A n in t e n s e p ie c e d e p ic t in g a h is t o r ic b a t t le ) Μ ι α ψ υ χ ι κ ή ύ μ ν ο ς σω τ ηρ ί α ς ( A s o ul f ul h y m n o f r e de m p t i o n) A t r a n q u i l p i a n o p i e ce e v ok i n g n os t a l g i a ( A g r a nd s y m pho n y c e l e br a t i ng uni t y ) E n g lis h - C e nt r i c No i s y T e xt D a t a R e fin e d M u lt ilin g u a l C o r p us P er f or ma n ce D a t a & Sh ee t M u s i c Sh a r ed R ep r es en t a t i on Sp a ce M ul ti l i ng ua l Te x t En c od er M ul ti m o da l Mu si c En c od er C o n t r a s t i v e L e a r n i n g Fr a m e w o r k o f C L a M P 2 Figure 1: CLaMP 2 is a cross-modal MIR model that uses contrasti ve learning to link multilingual text and multimodal music data. It employs GPT -4 to refine the multilingual corpus, reducing noise and achie ving a more balanced language distrib ution. The refined text data is then encoded by a multilingual te xt encoder . Meanwhile, music data in both ABC notation (sheet music) and MIDI (performance data) formats is processed by a multimodal music encoder . Both encoders project data into a shared representation space to connect text and music. 3 CLaMP 2 In this section, we present the CLaMP 2 frame work. W e begin with an ov erview of contrasti ve learning for modality alignment, followed by discussions of the multilingual te xt and multimodal music en- coders. Finally , we introduce the data sources used for pre-training and elaborate on ho w we le verage GPT -4 to enhance data quality . 3.1 Contrastiv e Learning Contrasti ve learning ( Sohn , 2016 ; v an den Oord et al. , 2018 ) is a powerful technique in v arious applications for aligning different modalities ( Rad- ford et al. , 2021 ; Girdhar et al. , 2023 ). It minimizes the distance between paired representations and maximizes that for unpaired ones. This ef fecti vely maps semantically related features (e.g., an image and its caption) close together in a shared represen- tation space while separating unrelated ones. As shown in Fig. 1, CLaMP 2 applies contrasti ve learning to ABC-MIDI-text triplets. The music encoder processes both ABC notation and MIDI data, while the te xt encoder handles the correspond- ing text inputs. During each training epoch, either ABC or MIDI data from each triplet is randomly selected for the music encoder, while the text en- coder processes either the original metadata or the refined multilingual descriptions generated by GPT - 4. Additionally , instrument information is removed from the music data 90% of the time, encourag- ing the model to focus on broader musical con- cepts rather than specific instrumentations. Both encoders project data into a shared representation space to learn the underlying connections between music and te xt. In this space, similar musical and textual concepts are clustered together , while dis- similar ones are kept apart. 3.2 Multilingual T ext Encoder CLaMP 2 uses XLM-R-base ( Conneau et al. , 2020 ), a multilingual text encoder based on RoBER T a ( Liu et al. , 2019 ). W ith 270 million parameters, it is pre- trained on a 2.5TB cleaned CommonCrawl corpus that spans a wide range of languages, enabling it to capture di verse linguistic nuances. During each training epoch, the input te xt for each triplet is randomly selected with the follow- ing probabilities: 50% for the raw text data, 25% for LLM-generated English descriptions, and 25% for LLM-generated non-English descriptions. This selection ensures a balanced exposure to both real- world and LLM-generated multilingual data. Ad- ditionally , we apply text dropout from the original CLaMP framew ork ( W u et al. , 2023a ) to the raw text data. It helps the model generalize better by reducing ov erfitting to specific input patterns. For computational efficienc y , we set the maxi- mum text length to 128. Longer te xts are truncated in one of three ways with equal probability: using the first, the last, or randomly selecting a segment of 128 tokens. This minimizes bias that could arise from relying on a single truncation method. 3.3 Multimodal Music Encoder CLaMP 2’ s multimodal music encoder supports multi-track music encoding in both ABC notation and MIDI. Although they can be mutually con- verted, the y are dif ferent in nature. ABC notation (sheet music), a text-based sheet music represen- tation like sta ve notation, is theory-oriented and ideal for presenting complex musical concepts to musicians for study and analysis. In contrast, MIDI (performance data) precisely encodes performance information related to timing and dynamics, thus suitable for music production and liv e performance. The music encoder of CLaMP 2 is built on M3 ( W u et al. , 2023a ), a self-supervised model designed for feature extraction from sheet music based on bar patching. This method di vides sheet music into bar-like segments, maintaining musi- cal coherence while improving efficienc y . M3 has an asymmetric encoder-decoder framework: the patch-lev el encoder extracts contextualized fea- tures from patches, while the char -lev el decoder then uses these features to autore gressi vely recon- struct each corresponding bar . During pre-training, 45% of patches are randomly selected and uni- formly processed with corresponding probabilities: 80% masked, 10% shuf fled, and 10% unchanged. M3 is optimized via cross-entropy loss to predict original patches from noisy input. Compared to the pre vious M3 model, we made se veral important improv ements to CLaMP 2’ s mul- timodal music encoder . Notably , it no w supports MIDI data. MIDI messages are first read losslessly from the original file using the mido library 2 and then con verted to the MIDI T ext Format (MTF) pro- posed in this paper . As MTF is a text-based format, each message read from it can be treated as a patch for M3. It offers two main adv antages: 1) seamless integration with the M3 framework, enabling the same training methods, and 2) lossless MIDI-to- MTF con version, which preserves all information and avoids common quantization errors found in existing MIDI representations ( Oore et al. , 2020 ; Huang and Y ang , 2020 ; Hsiao et al. , 2021 ). Another improvement is restructuring ABC nota- tion into a v oice-interleav ed form. As previous re- search has verified ( Qu et al. , 2024 ), this can signifi- cantly reduce the dif ficulty of modeling multi-track ABC notation and is conduci ve to training. Im- portantly , our implementation of interlea ved ABC notation adheres to syntax rules, ensuring compati- bility with existing ABC notation tools. The patch-le vel encoder is expanded to 12 layers to better capture comple x musical features, while the char-le vel decoder remains at 3 layers, both with a hidden size of 768. Each patch can hold up to 64 characters, and with a maximum of 512 patches per input sequence, M3 can support a total input of 32,768 characters. Longer sequences are truncated by randomly selecting 512 patches from the start, middle, or end, with equal probability . For details on interlea ved ABC notation and MTF , please see Appendix A and B, respectiv ely . 2 https://github.com/mido/mido Figure 2: The distribution of counts for dif ferent text types within the LLM-processed pre-training dataset. 3.4 Data Sour ces The pre-training dataset for both M3 and CLaMP 2 comes from tw o sources: the Million MIDI Dataset (MMD) ( Zeng et al. , 2021 ) and the W ebMusicT ext (W ebMT) dataset ( W u et al. , 2023a ). The y cover v arious music genres, such as popular and classical, from single- to multi-track compositions. The MMD consists of over 1.5 million MIDI files, compiled by crawling a vast collection of mu- sic files and filtering out any malformed or blank entries. On the other hand, the W ebMT dataset, comprising 1.4 million music-text pairs, includes formats like MusicXML, LilyPond, and ABC nota- tion. These were standardized into ABC notation follo wing an initial con version to MusicXML. T o pre vent information leakage, natural language ele- ments were remov ed from the ABC files. T o unify the datasets, we con vert MMD to ABC, W ebMT to MIDI, and merge them to get 3 million ABC-MIDI-text triplets. Admittedly , conv erting MMD to ABC may lead to the loss of performance details, and con verting W ebMT to MIDI may re- sult in the loss of certain score-related information. Ne vertheless, the k ey benefit is that it enriches data di versity , thus enhancing the model’ s ability to gen- eralize across dif ferent musical modalities. Ho wev er , variations in text quality pose signif- icant challenges. A substantial amount of non- musical content in the te xt data diminishes the ef- fecti veness of pre-training by introducing noise that detracts from rele vant musical information. Fur- thermore, as Fig. 3 shows, the dataset has an imbal- anced language distribution (detected by the langid library 3 ): English accounts for two-thirds of the data, while most languages contrib ute less than 1MB. This imbalance restricts the model’ s ability to ef fectiv ely link music with v arious languages. 3 https://github.com/saffsd/langid.py Figure 3: The amount of data for 97 languages found in the original metadata, displayed in order of magnitude. Figure 4: Count of text entries for 100 non-English languages generated by GPT -4. 3.5 LLM-Based Metadata Pr ocessing T o improve text quality and mitigate imbalanced language distribution, we employed GPT -4 ( Ope- nAI , 2023 ) to filter and enrich the text data. The prompt giv en to GPT -4 consisted of a system in- struction along with two e xamples illustrating the desired outputs, which enhanced its understanding of our requirements. GPT -4 was task ed with identi- fying rele vant music-related elements in each entry , and subsequently generating concise summaries in multiple languages based on these elements. Entries were excluded for lacking specific musi- cal details, containing vague comments like "this is a good song," or having no significant relation to the music. For valid entries, GPT -4 generated con- cise summaries in English and a randomly selected non-English language from the 100 languages to- kenizable by XLM-R. Howe ver , some responses in lo w-resource languages did not conform to the expected format, resulting in fewer entries for these languages. Nev ertheless, as shown in Fig. 4, GPT -4 significantly enhanced language balance, resulting in a total of 1.6 million ABC-MIDI-text triplets. As our dataset is deri ved from two sources, dupli- cate entries may occur . T o resolve this, we merged triplets with identical components, resulting in 1.5 million unique triplets—approximately 1.3 million from W ebMT and 0.2 million from MMD. GPT -4 cleaned the pre-training dataset and en- riched it with multilingual descriptions in 101 languages. This significantly enhanced CLaMP 2’ s multilingual MIR capabilities. Details on the prompt and text e xamples are in Appendix C. 4 Experiments 4.1 Settings W e e v aluated the proposed models, M3 and CLaMP 2, on music classification and semantic search tasks. Training both models together on 8 NVIDIA H800 GPUs took approximately 800 hours. W e split the data, allocating 99% for train- ing and 1% for v alidation. The models were trained for up to 100 epochs. W e adopted mixed- precision acceleration ( Micike vicius et al. , 2018 ) to enhance training ef ficiency . The AdamW optimizer ( Loshchilov and Hutter , 2019 ) was utilized, along with a 1,000-step warm-up ( Goyal et al. , 2017 ). For M3, the batch size was set to 128, and the learning rate was 1e-4. F or CLaMP 2, initialized from M3’ s patch-lev el encoder and XLM-R, the batch size was set to 1024, the learning rate was 5e-5, and the logit scale was set to 1. The ablation study for M3 and CLaMP 2 in- cluded sev eral variants. F or M3, three configura- tions were examined to assess the impact of mix- ing musical modalities on performance: M3-ABC, trained only on ABC data; M3-MIDI, trained only on MIDI data; and full M3, trained on both. For CLaMP 2, fiv e ablations were carried out to under- stand the contrib ution of dif ferent text data sources: CLaMP 2 (w/o en), excluding LLM-generated En- glish data; CLaMP 2 (w/o nen), excluding LLM- generated non-English data; CLaMP 2 (w/o meta), excluding the original ra w text data; CLaMP 2 (w/o LLM), excluding all LLM-generated data; and the full CLaMP 2 setup, using all av ailable text data. T able 1: Classification performance for ABC notation and MIDI was assessed across three datasets: WikiMT (1,010 pieces, 8 genres), V GMIDI (204 pieces, 4 emotions), and Pianist8 (411 pieces, 8 composers). Underlined v alues indicate the top M3 model, while bold values denote the o verall best performance among all models. Model Modality W ikiMT VGMIDI Pianist8 F1-macro Accuracy F1-macro Accuracy F1-macro Accuracy M3-MIDI MIDI 0.2586 0.4158 0.4700 0.5854 0.8683 0.8674 M3-ABC ABC 0.2416 0.4010 0.4955 0.6098 0.7339 0.7470 M3 MIDI 0.2621 0.4257 0.5399 0.6098 0.9199 0.9157 M3 ABC 0.2349 0.4010 0.6016 0.6341 0.7395 0.7590 MusicBERT MIDI 0.1746 0.3219 0.5127 0.5850 0.8379 0.8413 CLaMP ABC 0.3452 0.4267 0.6453 0.6866 0.7067 0.7152 CLaMP 2 MIDI 0.2898 0.4455 0.5246 0.6585 0.8927 0.8916 CLaMP 2 ABC 0.3990 0.4653 0.7449 0.8049 0.8025 0.8072 4.2 Music Classification Acr oss Modalities This e valuation assesses the classification capabili- ties of various models across three datasets, each highlighting a specific aspect of music. • WikiMT ( W u et al. , 2023a ): It contains 1,010 lead sheets in ABC notation from W ikifonia 4 , labeled with 8 genre classes according to the rele vant W ikipedia entries. • V GMIDI ( Ferreira and Whitehead , 2019 ): It contains 204 MIDI scores from video game soundtracks, annotated with 4 emotion classes based on v alence and arousal lev els. • Pianist8 ( Chou et al. , 2021 ): It includes 411 piano performances automatically transcribed from audio to performance MIDI ( K ong et al. , 2021 ) and labeled with 8 composer styles. W e ev aluated our model against state-of-the-art baselines in symbolic music understanding. • CLaMP ( W u et al. , 2023a ): A cross-modal MIR model designed to connect text and sheet music. It is pre-trained on W ebMT using bar patching and masked music modeling. • MusicBER T ( Zeng et al. , 2021 ): A self- supervised MIR model for representation learning, pre-trained on MMD through Oc- tupleMIDI encoding and bar-le vel masking. In this ev aluation, we utilized only the represen- tations from the music encoder . Gi ven that the te xt encoder was not in volved, the multilingual capabil- ities were not in vestigated. As a result, CLaMP 2 under e valuation included all a vailable text data. 4 http://www.synthzone.com/files/Wikifonia/ Wikifonia.zip Notably , we employed a linear classifier on the top layer of each model to assess the quality of musical representations. W e ev aluated each bench- mark in both MIDI and ABC formats to analyze ho w the models utilize information from different musical modalities. The results in T able 1 indicate that mixing musi- cal modalities significantly benefits M3. When trained with both ABC and MIDI, M3 outper- formed its single-modality counterparts on all benchmarks. This implies that training with ABC and MIDI together improv es its feature extraction capability for both modalities. Despite being pre-trained on only 0.2 million nati ve MIDI pieces, M3 consistently outperformed MusicBER T in MIDI classification tasks. This per- formance advantage is attributed to our proposed MTF , which preserves all MIDI information during text con version. In contrast, MusicBER T’ s Octu- pleMIDI encoding suf fers from information loss, which weakens its performance. Once aligned with text data, CLaMP 2 gener- ally outperforms M3 across benchmarks, though performance varies by modalities. In ABC nota- tion, CLaMP 2 achie ves top accuracies of 0.4653 and 0.8049 in W ikiMT and VGMIDI, respecti vely , both of which emphasize score information. How- e ver , in Pianist8, which focuses on performance details, CLaMP 2 excels in MIDI with an accuracy of 0.8916, a significant improvement o ver the orig- inal CLaMP . Still, this falls slightly below M3’ s 0.9157, likely due to limited performance MIDI data in the pre-training dataset. This shortage may hav e caused a slight decline after contrastive learn- ing. Despite this, CLaMP 2 remains highly effec- ti ve across musical modalities, showing its strong potential for music classification. T able 2: The semantic search performance of CLaMP 2 across the W ikiMT and MidiCaps benchmarks under div erse experimental settings. Both datasets contain texts e xclusiv ely in English. Setting W ikiMT (1,010 ABC-text pairs) MidiCaps (1,010 MIDI-text pair s) MRR HR@1 HR@10 HR@100 MRR HR@1 HR@10 HR@100 CLaMP 2 0.3438 0.2705 0.4870 0.7956 0.2695 0.1653 0.4782 0.8634 CLaMP 2 (w/o en) 0.3234 0.2455 0.4800 0.7846 0.2708 0.1723 0.4752 0.8436 CLaMP 2 (w/o nen) 0.3359 0.2615 0.4880 0.7735 0.2490 0.1574 0.4158 0.8297 CLaMP 2 (w/o meta) 0.2856 0.2104 0.4218 0.7585 0.1940 0.1050 0.3713 0.7901 CLaMP 2 (w/o LLM) 0.2797 0.2094 0.4068 0.7375 0.2772 0.1762 0.4822 0.8614 CLaMP 0.2561 0.1931 0.3693 0.7020 0.1236 0.0666 0.2416 0.6412 4.3 Semantic Sear ch on Native English Data Benchmarks in symbolic MIR are relativ ely scarce. T o the best of our knowledge, W ikiMT ( W u et al. , 2023a ) and MidiCaps ( Melechovský et al. , 2024 ) are the only two publicly av ailable music-text datasets for symbolic music. W ikiMT pairs 1,010 ABC notation pieces with W ikipedia text, focus- ing on cultural and historical context. MidiCaps, built on the Lakh MIDI dataset ( Raf fel , 2016 ), in- cludes 168,407 pairs with descriptions of musical features like tempo and chord progression. These datasets hav e different focuses: WikiMT empha- sizes cultural-context understanding, while Midi- Caps targets musical feature analysis. As the pre-training data includes the Lakh MIDI dataset (a subset of MMD), we took precautions to pre vent data leakage. T o this end, we randomly selected 1,010 pieces from the MidiCaps v alidation set to match the size of W ikiMT , which contains only non-training data. CLaMP 2 uses the original formats for testing on these benchmarks. Because the original CLaMP does not support MIDI, we con verted the Midi Caps data into ABC notation for its e valuation. T able 2 shows semantic search results on the W ikiMT and MidiCaps benchmarks, using Mean Reciprocal Rank (MRR) and Hit Rate at T op K (HR@K) to assess model performance in retrieving and ranking rele vant music-te xt pairs. In the W ikiMT benchmark, a clear trend is observed: an y CLaMP 2 v ariant using LLM- generated text, whether in English or non-English, outperforms CLaMP 2 (w/o LLM). For example, CLaMP 2 achiev es an MRR of 0.3438. Howe ver , when excluding LLM-generated te xt in CLaMP 2 (w/o LLM), the MRR drops significantly to 0.2797. This indicates that LLM-generated text greatly en- hances the CLaMP 2’ s ability to capture and con ve y cultural information. Spanish 70.55 F r ench 68.80 R ussian 56.13 Chinese 43.81 Arabic 55.43 Amharic 44.12 0.00 0.05 0.10 0.15 0.20 0.25 0.30 0.35 MRR CLaMP 2 w/o en w/o nen w/o meta w/o LLM (a) MRR scores on the W ikiMT benchmark. Spanish 58.07 F r ench 56.99 R ussian 40.38 Chinese 28.84 Arabic 47.34 Amharic 36.14 0.00 0.05 0.10 0.15 0.20 0.25 MRR CLaMP 2 w/o en w/o nen w/o meta w/o LLM (b) MRR scores on the MidiCaps benchmark. Figure 5: MRR scores across six non-English languages for (a) W ikiMT and (b) MidiCaps benchmarks. BLEU scores belo w each language provide additional conte xt on translation quality . In the MidiCaps benchmark, CLaMP 2 achiev es an MRR of 0.2695, demonstrating strong perfor - mance. Notably , all CLaMP 2 variants significantly outperform CLaMP . This improv ement arises from their nati ve support for MIDI data, enabling a bet- ter capture of performance details. In contrast to the W ikiMT results, excluding LLM-generated te xt does not harm performance, as CLaMP 2 (w/o LLM) achie ves the highest MRR of 0.2772. This suggests that LLM-generated text may not enhance the understanding of musical features. 4.4 Semantic Search Across Multilingual Data T o address the lack of multilingual music-text benchmarks, we translated English texts from W ikiMT and MidiCaps into six languages: Spanish, French, Russian, Chinese, Arabic, and Amharic. Among these languages, Amharic is an e xtremely lo w-resource language with limited pre-training data—less than 1GB in XLM-R and only 17KB in CLaMP 2. W e used SeamlessM4T ( Meta , 2023b ) for its broad translation support, allowing ev alu- ation without native multilingual datasets. Gi ven that translation quality directly af fects retrie val ef- fecti veness, we used BLEU scores 5 to assess the similarity between back-translations and original texts, serving as an indicator of translation quality . This e valuation lacks baselines, as no comparable models support multilingual symbolic MIR. CLaMP 2’ s multilingual retriev al results are presented in Fig. 5. Generally , removing LLM- generated English texts (w/o en) slightly impacts performance. Although in English, they improv e ov erall te xt quality by reducing inconsistencies and irrele vancies, thereby enhancing multilingual re- trie val performance. In contrast, excluding LLM- generated non-English texts (w/o nen) notably hin- ders retriev al for all languages in both benchmarks, especially for low-resource languages like Amharic. Comparing CLaMP 2 (w/o en) with CLaMP 2 (w/o LLM) further confirms the important role of LLM- generated non-English texts in enhancing multilin- gual retrie val performance. Notably , CLaMP 2 (w/o LLM) records the lo w- est MRR across all languages in both benchmarks, indicating poor multilingual performance when re- lying solely on the original text data. Ho wever , ex- cluding the original text data (w/o meta) results in a significant drop in performance. This indicates that CLaMP 2 can effecti vely extract authentic musical concepts from English-centric te xt data, enabling it to transcend language barriers and improve re- trie val across dif ferent languages and cultures. In conclusion, the e valuation of CLaMP 2 on W ikiMT and MidiCaps re veals that LLM-generated texts, particularly non-English texts, significantly enhance multilingual semantic search. Ho wev er, relying solely on them is insufficient, as the original data pro vides authentic details that LLM-generated data may lack. T ogether , they enable CLaMP 2 to perform better across languages by learning a more comprehensi ve representation of music semantics. 5 https://github.com/mjpost/sacrebleu 5 Conclusions CLaMP 2 makes substantial progress in cross- modal MIR by integrating multilingual text and multimodal music data via contrasti ve learning. Le veraging GPT -4 to refine the multilingual cor - pus, it ov ercomes the limitations of e xisting models that are e xclusiv ely trained on English music-te xt datasets. This facilitates more precise alignment between music and text across 101 languages. Experimental results demonstrate that CLaMP 2 achie ves state-of-the-art performance across a vari- ety of MIR tasks. In music classification tasks, the M3 model, trained on both ABC and MIDI data, demonstrates improv ed performance and consis- tently outperforms counterparts trained on a single modality . Building on M3, CLaMP 2 achie ves su- perior performance across div erse benchmarks and modalities. Notably , the incorporation of LLM- generated text data significantly enhances mul- tilingual semantic search. This enhancement is achie ved by reducing te xtual noise and balancing language distribution, which is particularly benefi- cial for lo w-resource languages. CLaMP 2 establishes a new multilingual MIR standard, enabling users worldwide to access a di verse array of musical content across 101 lan- guages. Future dev elopments may b uild on CLaMP 2 to connect with audio and visual modalities, fa- cilitating a more comprehensiv e and culturally rich experience at a global scale. 6 Excluded A pproaches In CLaMP 2, se veral e xperimental strategies were tested, yet failed to achie ve expected improv ements and were thus excluded from the final model. It should be noted that these failed attempts are de- ri ved from our practice and may not be generalized. The integration of discretized audio tok ens ( Dé- fossez et al. , 2023 ) failed to match pre vious au- dio models’ performance and was remov ed. In- spired by MidiCaps ( Melechovský et al. , 2024 ) and MuseCoco ( Lu et al. , 2023 ), we attempted to include musical attrib utes in the text data. How- e ver , this inclusion negati vely impacted perfor- mance. Additionally , extending the patch mask- ing pre-training strategy to contrasti ve learning did not enhance the robustness of CLaMP 2. L2 nor- malization caused con vergence problems and was also excluded. Lastly , a learnable logit scale led to ov er-scaling and degraded representations, so a fixed logit scale of 1 w as used for better stability . 7 Limitations Although CLaMP 2 has made progress, it still has certain limitations. In CLaMP 2, the contrasti ve learning frame work primarily extracts global semantic features, result- ing in a loss of fine-grained temporal information. Consequently , tasks that rely on sequential or time- related details cannot be ef fectiv ely ex ecuted. In addition, the absence of multilingual music- text benchmarks complicates the ev aluation of CLaMP 2’ s performance in non-English languages. T o address this, an existing machine translation model ( Meta , 2023b ) was used to translate English benchmarks into other languages. Ho we ver , ma- chine translation presents its o wn challenges. F or instance, the BLEU score for MidiCaps translations in Chinese is only 28.84, indicating poor transla- tion quality and significantly hindering retriev al performance. Notably , Arabic—despite having far less training data than Chinese in both XLM-R and CLaMP 2—achieves a higher MRR, with a BLEU score of 47.34. This suggests that transla- tion quality has a significant impact on retrie val performance, outweighing the influence of training data size. W ithout nativ e, high-quality benchmarks for non-English languages, it remains unclear how well CLaMP 2 will perform in real-world multilin- gual retrie val tasks. Core Contrib utors Shangda W u 1 , shangda@mail.ccom.edu.cn Y ashan W ang 1 , alexis_wang@mail.ccom.edu.cn Ruibin Y uan 2 , ryuanab@connect.ust.hk Contributors Zhancheng Guo 1 Xu T an 3 Ge Zhang 2 Monan Zhou 1 Jing Chen 4 Xuefeng Mu 4 Y uejie Gao 4 Y uanliang Dong 1 Jiafeng Liu 1 Xiaobing Li 1 Feng Y u 1 Correspondence Maosong Sun 1 , sms@tsinghua.edu.cn Affiliations 1 Central Conserv atory of Music, China 2 Multimodal Art Projection Research Community 3 Microsoft Research Asia 4 NetEase Cloud Music, China Acknowlegdements This work was supported by the follo wing funding sources: Special Program of National Natural Sci- ence Foundation of China (Grant No. T2341003), Adv anced Discipline Construction Project of Bei- jing Universities, Major Program of National So- cial Science Fund of China (Grant No. 21ZD19), and the National Culture and T ourism T echnologi- cal Innov ation Engineering Project (Research and Application of 3D Music). In addition, we would like to express our grat- itude for the use of icons from flaticon 6 in Fig. 1 and Fig. 8. References Andrea Agostinelli, T imo I. Denk, Zalán Borsos, Jesse H. Engel, Mauro V erzetti, Antoine Caillon, Qingqing Huang, Aren Jansen, Adam Roberts, Marco T agliasacchi, Matthew Sharifi, Neil Ze ghidour , and Christian Havnø Frank. 2023. Musiclm: Generating music from text . CoRR , abs/2301.11325. Alibaba. 2024. Qwen2 technical report . CoRR , abs/2407.10671. Anthropic. 2024. The claude 3 model family: Opus, sonnet, haiku . Preprint. Y i-Hui Chou, I-Chun Chen, Chin-Jui Chang, Joann Ching, and Y i-Hsuan Y ang. 2021. Midibert-piano: Large-scale pre-training for symbolic music under - standing . CoRR , abs/2107.05223. Alexis Conneau, Kartikay Khandel wal, Naman Goyal, V ishrav Chaudhary , Guillaume W enzek, Francisco Guzmán, Edouard Grav e, Myle Ott, Luke Zettle- moyer , and V eselin Stoyanov . 2020. Unsupervised cross-lingual representation learning at scale . In Pr o- ceedings of the 58th Annual Meeting of the Associa- tion for Computational Linguistics, ACL 2020, On- line, J uly 5-10, 2020 , pages 8440–8451. Association for Computational Linguistics. Alexandre Défossez, Jade Copet, Gabriel Synnaeve, and Y ossi Adi. 2023. High fidelity neural audio compres- sion . T rans. Mac h. Learn. Res. , 2023. Jacob Devlin, Ming-W ei Chang, K enton Lee, and Kristina T outanov a. 2019. BER T : pre-training of 6 https://www.flaticon.com/ deep bidirectional transformers for language under - standing . In Pr oceedings of the 2019 Confer ence of the North American Chapter of the Association for Computational Linguistics: Human Language T ech- nologies, N AA CL-HLT 2019, Minneapolis, MN, USA, J une 2-7, 2019, V olume 1 (Long and Short P apers) , pages 4171–4186. Association for Computational Linguistics. Seungheon Doh, K eunwoo Choi, Jongpil Lee, and Juhan Nam. 2023a. Lp-musiccaps: Llm-based pseudo mu- sic captioning . In Pr oceedings of the 24th Interna- tional Society for Music Information Retrieval Con- fer ence, ISMIR 2023, Milan, Italy , November 5-9, 2023 , pages 409–416. Seungheon Doh, Minhee Lee, Dasaem Jeong, and Juhan Nam. 2024. Enriching music descriptions with A finetuned-llm and metadata for text-to-music re- triev al . In IEEE International Conference on Acous- tics, Speech and Signal Processing , ICASSP 2024, Seoul, Republic of K or ea, April 14-19, 2024 , pages 826–830. IEEE. Seungheon Doh, Minz W on, K eunwoo Choi, and Juhan Nam. 2023b. T oward uni versal text-to-music re- triev al . In IEEE International Conference on Acous- tics, Speech and Signal Pr ocessing ICASSP 2023, Rhodes Island, Gr eece, June 4-10, 2023 , pages 1–5. IEEE. Benjamin Elizalde, Soham Deshmukh, Mahmoud Al Ismail, and Huaming W ang. 2023. CLAP learning audio concepts from natural language supervision . In IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing ICASSP 2023, Rhodes Island, Gr eece, J une 4-10, 2023 , pages 1–5. IEEE. Angela Fan, Shruti Bhosale, Holger Schwenk, Zhiyi Ma, Ahmed El-Kishky , Siddharth Go yal, Mandeep Baines, Onur Celebi, Guillaume W enzek, V ishrav Chaudhary , Naman Goyal, T om Birch, V italiy Liptchinsky , Sergey Eduno v , Michael Auli, and Ar - mand Joulin. 2021. Be yond english-centric multi- lingual machine translation . J. Mach. Learn. Res. , 22:107:1–107:48. Lucas Ferreira and Jim Whitehead. 2019. Learning to generate music with sentiment . In Pr oceedings of the 20th International Society for Music Informa- tion Retrieval Conference, ISMIR 2019, Delft, The Netherlands, November 4-8, 2019 , pages 384–390. Rohit Girdhar , Alaaeldin El-Nouby , Zhuang Liu, Man- nat Singh, Kalyan V asudev Alw ala, Armand Joulin, and Ishan Misra. 2023. Imagebind one embedding space to bind them all . In IEEE/CVF Conference on Computer V ision and P attern Recognition, CVPR 2023, V ancouver , BC, Canada, J une 17-24, 2023 , pages 15180–15190. IEEE. Google. 2024. Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context . CoRR , abs/2403.05530. Priya Goyal, Piotr Dollár , Ross B. Girshick, Pieter No- ordhuis, Lukasz W esolowski, Aapo Kyrola, Andre w T ulloch, Y angqing Jia, and Kaiming He. 2017. Ac- curate, large minibatch SGD: training imagenet in 1 hour . CoRR , abs/1706.02677. W en-Y i Hsiao, Jen-Y u Liu, Y in-Cheng Y eh, and Y i- Hsuan Y ang. 2021. Compound word transformer: Learning to compose full-song music ov er dynamic directed hypergraphs . In Thirty-F ifth AAAI Confer- ence on Artificial Intelligence , AAAI 2021, Thirty- Thir d Confer ence on Innovative Applications of Arti- ficial Intelligence, IAAI 2021, The Ele venth Sympo- sium on Educational Advances in Artificial Intelli- gence, EAAI 2021, V irtual Event, F ebruary 2-9, 2021 , pages 178–186. AAAI Press. Qingqing Huang, Aren Jansen, Joonseok Lee, Ravi Ganti, Judith Y ue Li, and Daniel P . W . Ellis. 2022. Mulan: A joint embedding of music audio and natural language . In Pr oceedings of the 23r d International Society for Music Information Retrieval Confer ence, ISMIR 2022, Bengaluru, India, December 4-8, 2022 , pages 559–566. Qingqing Huang, Daniel S. Park, T ao W ang, Timo I. Denk, Andy L y , Nanxin Chen, Zhengdong Zhang, Zhishuai Zhang, Jiahui Y u, Christian Havnø Frank, Jesse H. Engel, Quoc V . Le, William Chan, and W ei Han. 2023. Noise2music: T ext-conditioned music generation with dif fusion models . CoRR , abs/2302.03917. Y u-Siang Huang and Y i-Hsuan Y ang. 2020. Pop music transformer: Beat-based modeling and generation of expressi ve pop piano compositions . In MM ’20: The 28th A CM International Confer ence on Multimedia, V irtual Event / Seattle, W A, USA, October 12-16, 2020 , pages 1180–1188. A CM. Armand Joulin, Edouard Grav e, Piotr Bojanowski, and T omás Mikolov . 2017. Bag of tricks for efficient text classification . In Pr oceedings of the 15th Confer ence of the Eur opean Chapter of the Association for Com- putational Linguistics, EA CL 2017, V alencia, Spain, April 3-7, 2017, V olume 2: Short P apers , pages 427– 431. Association for Computational Linguistics. Qiuqiang K ong, Bochen Li, Xuchen Song, Y uan W an, and Y uxuan W ang. 2021. High-resolution piano tran- scription with pedals by re gressing onset and of fset times . IEEE A CM T rans. Audio Speech Lang . Pr o- cess. , 29:3707–3717. Luca A. Lanzendörfer , Florian Grötschla, Emil Funke, and Roger W attenhofer . 2023. DISCO-10M: A large- scale music dataset . In Advances in Neural Infor- mation Pr ocessing Systems 36: Annual Conference on Neural Information Pr ocessing Systems 2023, NeurIPS 2023, New Orleans, LA, USA, December 10 - 16, 2023 . Mike Lewis, Y inhan Liu, Naman Goyal, Marjan Ghazvininejad, Abdelrahman Mohamed, Omer Levy , V eselin Stoyanov , and Luke Zettlemoyer . 2020. B AR T : denoising sequence-to-sequence pre-training for natural language generation, translat ion, and com- prehension . In Pr oceedings of the 58th Annual Meet- ing of the Association for Computational Linguistics, A CL 2020, Online, July 5-10, 2020 , pages 7871–7880. Association for Computational Linguistics. Y inhan Liu, Jiatao Gu, Naman Goyal, Xian Li, Serge y Edunov , Marjan Ghazvininejad, Mike Lewis, and Luke Zettlemoyer . 2020. Multilingual denoising pre- training for neural machine translation . T rans. Assoc. Comput. Linguistics , 8:726–742. Y inhan Liu, Myle Ott, Naman Goyal, Jingfei Du, Man- dar Joshi, Danqi Chen, Omer Levy , Mike Lewis, Luke Zettlemoyer, and V eselin Stoyanov . 2019. Roberta: A robustly optimized BER T pretraining approach . CoRR , abs/1907.11692. Ilya Loshchilov and Frank Hutter . 2019. Decoupled weight decay regularization . In 7th International Confer ence on Learning Repr esentations, ICLR 2019, New Orleans, LA, USA, May 6-9, 2019 . OpenRe- view .net. Peiling Lu, Xin Xu, Chenfei Kang, Botao Y u, Chengyi Xing, Xu T an, and Jiang Bian. 2023. Musec- oco: Generating symbolic music from text . CoRR , abs/2306.00110. Ilaria Manco, Benno W eck, Seungheon Doh, Minz W on, Y ixiao Zhang, Dmitry Bogdanov , Y usong W u, Ke Chen, Philip T ovstogan, Emmanouil Benetos, Elio Quinton, György Fazekas, and Juhan Nam. 2023. The song describer dataset: a corpus of audio cap- tions for music-and-language e valuation. In Mac hine Learning for Audio W orkshop at NeurIPS 2023 . Jan Melechovský, Abhinaba Roy , and Dorien Herre- mans. 2024. Midicaps - A large-scale MIDI dataset with text captions . CoRR , abs/2406.02255. Meta. 2023a. Llama 2: Open foundation and fine-tuned chat models . CoRR , abs/2307.09288. Meta. 2023b. Seamlessm4t-massiv ely multilin- gual & multimodal machine translation . CoRR , abs/2308.11596. Meta. 2024. The llama 3 herd of models . Preprint , Paulius Micikevicius, Sharan Narang, Jonah Alben, Gregory F . Diamos, Erich Elsen, Da vid García, Boris Ginsbur g, Michael Houston, Oleksii Kuchaiev , Ganesh V enkatesh, and Hao W u. 2018. Mixed pre- cision training . In 6th International Confer ence on Learning Repr esentations, ICLR 2018, V ancouver , BC, Canada, April 30 - May 3, 2018, Confer ence T rack Pr oceedings . OpenRe view .net. Mistral. 2024. Mixtral of experts . CoRR , abs/2401.04088. Sageev Oore, Ian Simon, Sander Dieleman, Douglas Eck, and Karen Simon yan. 2020. This time with feeling: learning expressi ve musical performance . Neural Comput. Appl. , 32(4):955–967. OpenAI. 2023. GPT -4 technical report . CoRR , abs/2303.08774. Long Ouyang, Jeffre y W u, Xu Jiang, Diogo Almeida, Carroll L. W ainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Ale x Ray , John Schulman, Jacob Hilton, Fraser K elton, Luke Miller , Maddie Simens, Amanda Askell, Peter W elin- der , Paul F . Christiano, Jan Leike, and Ryan Lowe. 2022. Training language models to follo w instruc- tions with human feedback . In Advances in Neur al Information Pr ocessing Systems 35: Annual Confer- ence on Neur al Information Pr ocessing Systems 2022, NeurIPS 2022, New Orleans, LA, USA, No vember 28 - December 9, 2022 . Xingwei Qu, Y uelin Bai, Y inghao Ma, Ziya Zhou, Ka Man Lo, Jiaheng Liu, Ruibin Y uan, Lejun Min, Xueling Liu, T ianyu Zhang, Xinrun Du, Shuyue Guo, Y iming Liang, Y izhi Li, Shangda W u, Junt- ing Zhou, T ianyu Zheng, Ziyang Ma, Fengze Han, W ei Xue, Gus Xia, Emmanouil Benetos, Xiang Y ue, Chenghua Lin, Xu T an, Stephen W . Huang, W enhu Chen, Jie Fu, and Ge Zhang. 2024. Mupt: A genera- tiv e symbolic music pretrained transformer . CoRR , abs/2404.06393. Alec Radford, Jong W ook Kim, Chris Hallacy , Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sas- try , Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger , and Ilya Sutske ver . 2021. Learn- ing transferable visual models from natural language supervision . In Pr oceedings of the 38th International Confer ence on Machine Learning, ICML 2021, 18-24 J uly 2021, V irtual Event , volume 139 of Pr oceedings of Machine Learning Resear ch , pages 8748–8763. PMLR. Colin Raffel. 2016. Learning-Based Methods for Comparing Sequences, with Applications to A udio- to-MIDI Alignment and Matching . Ph.D. thesis, Columbia Univ ersity , USA. Colin Raffel, Noam Shazeer , Adam Roberts, Katherine Lee, Sharan Narang, Michael Matena, Y anqi Zhou, W ei Li, and Peter J. Liu. 2020. Exploring the limits of transfer learning with a unified text-to-te xt trans- former . J . Mach. Learn. Res. , 21:140:1–140:67. Kihyuk Sohn. 2016. Improv ed deep metric learning with multi-class n-pair loss objecti ve . In Advances in Neural Information Pr ocessing Systems 29: Annual Confer ence on Neural Information Pr ocessing Sys- tems 2016, December 5-10, 2016, Bar celona, Spain , pages 1849–1857. Aäron v an den Oord, Y azhe Li, and Oriol V inyals. 2018. Representation learning with contrasti ve predicti ve coding . CoRR , abs/1807.03748. Ashish V aswani, Noam Shazeer, Niki P armar , Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser , and Illia Polosukhin. 2017. Attention is all you need . In Advances in Neural Information Pr o- cessing Systems 30: Annual Confer ence on Neural Information Pr ocessing Systems 2017, December 4-9, 2017, Long Beach, CA, USA , pages 5998–6008. Shangda W u, Dingyao Y u, Xu T an, and Maosong Sun. 2023a. Clamp: Contrasti ve language-music pre- training for cross-modal symbolic music information retriev al . In Pr oceedings of the 24th International Society for Music Information Retrieval Confer ence, ISMIR 2023, Milan, Italy , No vember 5-9, 2023 , pages 157–165. Y usong W u, Ke Chen, T ianyu Zhang, Y uchen Hui, T ay- lor Berg-Kirkpatrick, and Shlomo Dubnov . 2023b. Large-scale contrastive language-audio pretraining with feature fusion and keyw ord-to-caption augmen- tation . In IEEE International Confer ence on Acous- tics, Speech and Signal Pr ocessing ICASSP 2023, Rhodes Island, Gr eece, June 4-10, 2023 , pages 1–5. IEEE. Linting Xue, Noah Constant, Adam Roberts, Mihir Kale, Rami Al-Rfou, Aditya Siddhant, Aditya Barua, and Colin Raffel. 2021. mt5: A massi vely multilingual pre-trained text-to-te xt transformer . In Pr oceedings of the 2021 Confer ence of the North American Chap- ter of the Association for Computational Linguistics: Human Language T echnologies, N AACL-HL T 2021, Online, J une 6-11, 2021 , pages 483–498. Association for Computational Linguistics. Mingliang Zeng, Xu T an, Rui W ang, Zeqian Ju, T ao Qin, and Tie-Y an Liu. 2021. Musicbert: Symbolic music understanding with large-scale pre-training . In F indings of the Association for Computational Lin- guistics: ACL/IJCNLP 2021, Online Event, August 1-6, 2021 , volume A CL/IJCNLP 2021 of F indings of A CL , pages 791–800. Association for Computational Linguistics. Zhipu. 2024. Chatglm: A family of lar ge language models from GLM-130B to GLM-4 all tools . CoRR , abs/2406.12793. % % scor e { 1 | 2 } L : 1 / 8 Q : 1/ 4= 120 M : 3 / 4 K : G V : 1 t r ebl e nm = " P i ano" snm = " P no. " V : 2 bass V: 1 ! m f ! " ^ A lle g ro " d 2 (G A B c | d 2 ) . G 2 . G 2 | ] V: 2 [ G , B , D ]4 A , 2 | B , 6 | ] % % scor e { 1 | 2 } L : 1 / 8 Q : 1/ 4= 120 M : 3 / 4 K : G V : 1 t r ebl e nm = " P i ano" snm = " P no. " V : 2 bass [ V :1 ] ! m f ! " ^ A lle g ro " d 2 (G A B c | [ V :2 ] [ G , B , D ] 4 A , 2 | [ V :1 ] d 2 ) . G 2 . G 2 | ] [ V :2 ] B , 6 | ] S h eet Mu si c Sta n d a rd ABC N o ta ti o n I n t er l eaved A B C N o ta ti o n Figure 6: Comparison between standard and interleav ed ABC notation in multi-track piano sheet music. In- terleav ed ABC notation merges v oices and tags them in-line for a compact and synchronized representation. Colors mark patch boundaries for M3 model encoding. A Interlea ved ABC Notation Standard ABC notation encodes each voice sepa- rately , which often results in corresponding bars being spaced f ar apart. This separation makes it dif ficult for models to accurately understand the interactions between voices in sheet music that are meant to align musically . In contrast, interlea ved ABC notation ef fectiv ely aligns multi-track music by integrating multiple voices of the same bar into a single line, ensuring that all parts remain synchronized. As illustrated in Fig. 6, this format combines voices in-line and tags each bar with its corresponding voice (e.g., [V:1] for treble and [V:2] for bass). By directly aligning related bars, interleav ed ABC notation enhances the model’ s understanding of how dif ferent voices interact within the same bar . T o facilitate this reformatting process, we de- veloped a script for rev ersible and lossless con ver - sion between standard and interleav ed notations, ensuring accuracy without any loss of information. This simplification of multi-track music modeling maintains compatibility with standard ABC syntax, allo wing for effecti ve processing in existing tools. t i c k s _per _beat 480 t i m e_s i gnat ur e 3 4 24 8 0 k ey _s i gnat ur e G 0 s et _t em po 500000 0 c ont r ol _c hange 0 0 121 0 pr ogr am _c hange 0 0 0 c ont r ol _c hange 0 0 7 100 \ t 0 0 10 64 \ t 0 0 91 0 \ t 0 0 93 0 m i di _por t 0 0 not e_on 0 0 74 80 k ey _s i gnat ur e G 0 m i di _por t 0 0 not e_on 0 0 55 80 \ t 0 0 59 80 \ t 0 0 62 80 \ t 455 0 74 0 \ t 25 0 67 80 not e_on 239 0 67 0 \ t 1 0 69 80 \ t 191 0 55 0 \ t 0 0 59 0 \ t 0 0 62 0 not e_on 48 0 69 0 \ t 1 0 71 80 \ t 0 0 57 80 \ t 239 0 71 0 \ t 1 0 72 80 not e_on 215 0 57 0 \ t 24 0 72 0 \ t 1 0 74 80 \ t 0 0 59 80 \ t 455 0 74 0 not e_on 25 0 67 80 \ t 239 0 67 0 \ t 0 67 80 \ t 239 0 67 0 \ t 168 0 59 0 end_of _t r ac k 1 M ID I P ia no - r oll V is ua liz a t ion M3 - e nc ode d M I DI T ext F o rmat Figure 7: Illustration of MIDI T ext F ormat (MTF) en- coded by M3. In this format, MIDI messages are treated as patches for processing. Consecutive messages of the same type are mer ged within a patch, with colors indi- cating the boundaries between patches. B MIDI T ext F ormat The MIDI T ext Format (MTF) provides a struc- tured, textual representation of MIDI data that pre- serves all original information without loss. Each MIDI message is accurately represented, allowing full reconstruction from MTF to ensure no musical nuances are ov erlooked during con version. T o generate MTF , the mido library reads raw MIDI messages from MIDI files. As sho wn in T able 3, the output retains all necessary informa- tion but can be lengthy and redundant. T o simplify this, we streamline the representation by directly reading parameter values in a fix ed order and sepa- rating them with spaces. For instance, the raw time signature message, which includes multiple param- eters—numerator , denominator , clocks per click, notated 32nd notes per beat, and time—is repre- sented in MTF as time_signature 3 4 24 8 0 , as illustrated in T able 4. Other messages, includ- ing control changes and note e vents, are similarly compacted while preserving ke y musical details. This approach improv es computational perfor- mance and maintains precise control of timing and dynamics. Furthermore, when processed by M3, consecuti ve messages of the same type that fit within a single patch (under 64 characters) are combined into one line, with only the first mes- sage containing the type information. This further simplifies representation and improv es processing ef ficiency , as shown in Fig. 7. T able 3: Raw MIDI messages extracted from a MIDI file using the mido library . M e t a M e s s a g e ( ’ t i m e _ s i g n a t u r e ’ , n u m e r a t o r = 3 , d e n o m i n a t o r = 4 , c l o c k s _ p e r _ c l i c k = 2 4 , n o t a t e d _ 3 2 n d _ n o t e s _ p e r _ b e a t = 8 , t i m e = 0 ) M e t a M e s s a g e ( ’ k e y _ s i g n a t u r e ’ , k e y = ’ G ’ , t i m e = 0 ) M e t a M e s s a g e ( ’ s e t _ t e m p o ’ , t e m p o = 5 0 0 0 0 0 , t i m e = 0 ) c o n t r o l _ c h a n g e c h a n n e l =0 c o n t r o l = 1 2 1 v a l u e = 0 t i m e = 0 p r o g r a m _ c h a n g e c h a n n e l =0 p r o g r a m = 0 t i m e = 0 c o n t r o l _ c h a n g e c h a n n e l =0 c o n t r o l = 7 v a l u e = 1 0 0 t i m e = 0 c o n t r o l _ c h a n g e c h a n n e l =0 c o n t r o l = 1 0 v a l u e = 6 4 t i m e = 0 c o n t r o l _ c h a n g e c h a n n e l =0 c o n t r o l = 9 1 v a l u e = 0 t i m e = 0 c o n t r o l _ c h a n g e c h a n n e l =0 c o n t r o l = 9 3 v a l u e = 0 t i m e = 0 M e t a M e s s a g e ( ’ m i d i _ p o r t ’ , p o r t =0 , t i m e = 0 ) n o t e _ o n c h a n n e l = 0 n o t e = 7 4 v e l o c i t y = 8 0 t i m e = 0 M e t a M e s s a g e ( ’ k e y _ s i g n a t u r e ’ , k e y = ’ G ’ , t i m e = 0 ) M e t a M e s s a g e ( ’ m i d i _ p o r t ’ , p o r t =0 , t i m e = 0 ) n o t e _ o n c h a n n e l = 0 n o t e = 5 5 v e l o c i t y = 8 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 5 9 v e l o c i t y = 8 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 6 2 v e l o c i t y = 8 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 7 4 v e l o c i t y = 0 t i m e = 4 5 5 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 8 0 t i m e = 2 5 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 0 t i m e = 2 3 9 n o t e _ o n c h a n n e l = 0 n o t e = 6 9 v e l o c i t y = 8 0 t i m e = 1 n o t e _ o n c h a n n e l = 0 n o t e = 5 5 v e l o c i t y = 0 t i m e = 1 9 1 n o t e _ o n c h a n n e l = 0 n o t e = 5 9 v e l o c i t y = 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 6 2 v e l o c i t y = 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 6 9 v e l o c i t y = 0 t i m e = 4 8 n o t e _ o n c h a n n e l = 0 n o t e = 7 1 v e l o c i t y = 8 0 t i m e = 1 n o t e _ o n c h a n n e l = 0 n o t e = 5 7 v e l o c i t y = 8 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 7 1 v e l o c i t y = 0 t i m e = 2 3 9 n o t e _ o n c h a n n e l = 0 n o t e = 7 2 v e l o c i t y = 8 0 t i m e = 1 n o t e _ o n c h a n n e l = 0 n o t e = 5 7 v e l o c i t y = 0 t i m e = 2 1 5 n o t e _ o n c h a n n e l = 0 n o t e = 7 2 v e l o c i t y = 0 t i m e = 2 4 n o t e _ o n c h a n n e l = 0 n o t e = 7 4 v e l o c i t y = 8 0 t i m e = 1 n o t e _ o n c h a n n e l = 0 n o t e = 5 9 v e l o c i t y = 8 0 t i m e = 0 n o t e _ o n c h a n n e l = 0 n o t e = 7 4 v e l o c i t y = 0 t i m e = 4 5 5 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 8 0 t i m e = 2 5 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 0 t i m e = 2 3 9 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 8 0 t i m e = 2 4 1 n o t e _ o n c h a n n e l = 0 n o t e = 6 7 v e l o c i t y = 0 t i m e = 2 3 9 n o t e _ o n c h a n n e l = 0 n o t e = 5 9 v e l o c i t y = 0 t i m e = 1 6 8 M e t a M e s s a g e ( ’ e n d _ o f _ t r a c k ’ , t i m e = 1 ) T able 4: MTF offers a streamlined textual representa- tion of MIDI messages extracted using the mido library . For simplicity , ticks_per_beat , though originally an attribute of MIDI objects in mido, is included as the first message at the beginning of the MTF representation. t i c k s _ p e r _ b e a t 4 8 0 t i m e _ s i g n a t u r e 3 4 2 4 8 0 k e y _ s i g n a t u r e G 0 s e t _ t e m p o 5 0 0 0 0 0 0 c o n t r o l _ c h a n g e 0 0 1 2 1 0 p r o g r a m _ c h a n g e 0 0 0 c o n t r o l _ c h a n g e 0 0 7 1 0 0 c o n t r o l _ c h a n g e 0 0 1 0 6 4 c o n t r o l _ c h a n g e 0 0 9 1 0 c o n t r o l _ c h a n g e 0 0 9 3 0 m i d i _ p o r t 0 0 n o t e _ o n 0 0 7 4 8 0 k e y _ s i g n a t u r e G 0 m i d i _ p o r t 0 0 n o t e _ o n 0 0 5 5 8 0 n o t e _ o n 0 0 5 9 8 0 n o t e _ o n 0 0 6 2 8 0 n o t e _ o n 4 5 5 0 7 4 0 n o t e _ o n 2 5 0 6 7 8 0 n o t e _ o n 2 3 9 0 6 7 0 n o t e _ o n 1 0 6 9 8 0 n o t e _ o n 1 9 1 0 5 5 0 n o t e _ o n 0 0 5 9 0 n o t e _ o n 0 0 6 2 0 n o t e _ o n 4 8 0 6 9 0 n o t e _ o n 1 0 7 1 8 0 n o t e _ o n 0 0 5 7 8 0 n o t e _ o n 2 3 9 0 7 1 0 n o t e _ o n 1 0 7 2 8 0 n o t e _ o n 2 1 5 0 5 7 0 n o t e _ o n 2 4 0 7 2 0 n o t e _ o n 1 0 7 4 8 0 n o t e _ o n 0 0 5 9 8 0 n o t e _ o n 4 5 5 0 7 4 0 n o t e _ o n 2 5 0 6 7 8 0 n o t e _ o n 2 3 9 0 6 7 0 n o t e _ o n 2 4 1 0 6 7 8 0 n o t e _ o n 2 3 9 0 6 7 0 n o t e _ o n 1 6 8 0 5 9 0 e n d _ o f _ t r a c k 1 C Prompt and T ext Examples T o reduce textual noise and balance language distri- bution in pre-training data, we carefully designed a structured prompt to le verage the capabilities of GPT -4. As illustrated in Fig. 8, the prompt comprises a system instruction and tw o con versa- tional examples between a user and the assistant. These examples act as in-context learning refer- ences, helping GPT -4 understand the desired out- put format and the types of information it should extract from the pro vided metadata. After formulating the prompt, we organized the metadata entries in our pre-training dataset into a structured JSON format. For each entry , GPT -4 generated corresponding summaries in both En- glish and a randomly selected non-English lan- guage from the 99 non-English languages sup- ported by XLM-R ( Conneau et al. , 2020 ), in addi- tion to Cantonese. Including Cantonese, which is well-represented in the dataset and sharing vocab u- lary with Mandarin, enables CLaMP 2 to support 101 languages without increasing vocab ulary size. T o ensure high-quality outputs, both the English and non-English summaries must strictly adhere to the specified JSON format. W e implemented filtering criteria to exclude entries that do not meet these requirements, including those returning None , lacking proper JSON structure, or containing non- English summaries in the wrong language. Incon- sistencies and structural errors are more prev alent in lo w-resource languages, as shown in Fig. 4. T o illustrate the effecti veness of this approach, Fig. 9 provides examples that demonstrate GPT - 4’ s ability to generate summaries for different mu- sical compositions. Each example adheres to a structured format, including key metadata—such as the title, composer , genres, description, lyrics, and ensemble information—followed by generated summaries in English and a specified non-English language. In conclusion, our approach effecti vely uses GPT -4 to generate structured summaries from noisy , English-centric metadata, reducing textual noise and achieving a more balanced distribution of various languages. By applying filtering criteria, we first remo ve entries that lack musical informa- tion, followed by those that are poorly structured or mismatched with the specified non-English lan- guage. This method enhances the quality of our pre-training dataset and promotes a multilingual en vironment to better serve div erse languages. Y o u r t as k i s t o p r o vi d e a c o n c i s e , c o mp r e h e n s i v e , an d c o h e r e n t s u mmar y o f t h e mu s i c p i e c e u s i n g t h e p r o vi d e d me t ad a t a. P l e as e w r i t e t h e s u mmar y i n E n g l i s h f i r s t , an d t h e n w r i t e an e q u i v al e n t s u mmar y i n t h e spec i fi ed no n - E n g l i s h l an g u ag e f r o m t h e " n e n _ l an g u ag e " f i e l d . U s e t h i s JSO N f o r ma t : { " s u mmar y _ e n " : " Y o u r E n g l i s h s u mmar y h e r e ." , " s u mmar y _ n e n ": { “ l an g u ag e ” : “ Sp e c i f i e d n o n - E n g l i s h l an g u ag e ." , " s u mmar y " : " Y o u r n o n - E n g l i s h s u mmar y h e r e ." } } If t h e r e i s n o t e n o u g h mu s i c - r e l a t e d i n f o r ma t i o n , r e t u r n ` No n e ` i n s t e ad . S y st em { "t i t l e ": " B r e j e i r o ", "c o m p o s e r ": "Er n e s t o N a z a r e t h ", "g e n r e s ": [ "C h o r o ", "C l a s s i c a l ", "I n s t r u m e n t a l "] , "d e s c r i p t i o n ": " \ " B r e j e i r o \ " i s i n A majo r an d 2 /4 t i me . A jo y f u l me l o d y b e g i n s a t b ar s i x , an d a l i v e l y t an g o r h y t h m s t ar t s a t b ar f o u r t e e n . It h as a D . C . al Fi n e a t b ar f i f t y - t h r e e an d e n d s o n t w o q u ar t e r n o t e s i n b ar t h i r t y - s e v e n . T h e p i e c e , w i t h i t s vi b r an t me l o d i e s an d r h y t h ms , r e f l e c t s c e l e b r a t i o n an d c ar e f r e e n e s s , e mb o d y i n g t h e s p i r i t o f B r az i l i an mu s i c ." , "t a g s ": [ "B r a z i l i a n ", "C h o r o ", "P i a n o "] , " e n s e mb l e s " : [ " So l o P i an o " , " Smal l E n s e mb l e " ] , "i n s t r u m e n t s ": [ "P i a n o "] , " n e n _ l an g u ag e " : " Jap an e s e " } U ser { " title " : " Un title d " , "c o m p o s e r ": "U n k n o w n ", "d e s c r i p t i o n ": "T h i s i s a g o o d s o n g . ", " n e n _ l an g u ag e ": "R u s s i a n " } U ser { " s u mmar y _ e n ": " B r e j e i r o , c o mp o s e d b y E r n e s t o Naz ar e t h , i s a l i v e l y c h o r o p i e c e i n A majo r an d 2 /4 t i me . It f e a t u r e s a jo y f u l me l o d y t h a t b e g i n s a t b ar s i x an d a vi b r an t t an g o r h y t h m i n t r o d u c e d a t b ar f o u r t e e n . T h e p i e c e i n c l u d e s a D . C . al Fi n e a t b ar f i f t y - t hr ee, c o n c l u d i n g o n t w o q u ar t e r n o t e s i n b ar t h i r t y - s e v e n . W i t h i t s t hem es o f c el ebr a t i o n a nd c a r e fr eeness, B r e j e i r o b e au t i f u l l y c ap t u r e s t h e e s s e n c e o f B r az i l i an mu s i c an d i s w e l l - s u i t e d f o r s o l o p i an o an d s mal l ensem bl es. " , " s u mmar y _ n e n ": { " l an g u ag e " : " Jap an e s e " , " s u mmar y " : " 「 ブレジェイロ」は、エルネスト・ ナザレが 作曲 した 活気 あふれるショーロの 作品 で、 イ 長調 の 2/ 4 拍子 で 書 かれています。 第 6 小節 から 始 まる 喜 びに 満 ちたメロディーと、 第 14 小節 で 導 入 される 活気 あるタンゴのリズムが 特徴 です。こ の 曲 には、 第 53 小節 での D . C . al Fi n e が 含 まれ、また 第 37 小節 で 二 つの 四分音符 で 締 めくくられていま す。「ブレジェイロ」は、お 祝 いと 無邪気 さの テーマを 持 ち、ブラジル 音楽 の 本質 を 美 しく 捉 え ており、ソロピアノや 小編成 のアンサンブルに ぴったりの 作品 です。 " } } A ssi st an t No n e A ssi st an t Figure 8: GPT -4 is tasked with generating summaries in English and a selected non-English language. The prompt includes a system instruction and two exam- ples: one shows how to process music metadata—like title, composer , and genre—into clear multilingual sum- maries, while the other identifies entries lacking suf fi- cient musical information. { "title": "Hard Times Come A gain No More", "composer": "Stephen Foster", "genres": ["Children's Music", "Folk"], "description": " \ "Hard Times Come Ag ain No Mor e \ " (sometimes re ferr ed to as \ "Har d Times \ ") is an American parlor song writ ten by Stephen F oster , refl ecting themes of sorro w and hope.", "lyrics": "Let us pause in lif e's pleasures and count its man y tear s, \ nWhile we all sup sorro w with the poor; \ nThere's a song that will linger forever in our e ars; \ nOh ! Hard times c ome again no mor e. \n\ nChorus :\ n'Tis the song , the sigh of the weary , \ nHar d Times, hard times, co me again no mor e. \ nMany da ys you hav e lingered around m y cabin door; \ nOh ! Hard times come again no more. \n\ nWhile we seek mirth and beauty and music light and g ay , \ nThere ar e frail f orms fainting at the door; \ nThough their voi ces are silent, their plea ding looks will say \ nOh ! Hard times come ag ain no mo re. \ nChorus \n\ nThere's a pale weeping maiden who toils her l ife aw ay , \ nWith a worn heart w hose better da ys are o'er: \ nThough her voice would be merry , 'tis sighi ng all the day , \ nOh ! Hard times come ag ain no more. \ nChorus \n\ n'Tis a sigh that is w afted across the tr oubled wave, \ n'Tis a wail that i s heard upon the shore \ n'Tis a dir ge that is murmured around the lowly grave \ nOh ! Har d times come ag ain no more. \ nChor us ", "tags": ["folk", "traditional", " bluegrass", "nostalgic", "heartfelt", "acoustic", "melanch olic", "storytelling", "A merican roots", "resilience"], "ensembles": ["Fo lk Ensemble"], "instruments": ["V ocal", "Violin", "Tin whistle", "Guitar", "Banjo", "T ambourine"], " summary_en ": " \ "Hard Times Come Ag ain No More, \ " composed by Stephen Fost er , is a poignant Americ an parlor song that e xplores themes of sorrow and hope. The lyrics refl ect on the contr ast between lif e's pleasures and i ts hardships, i nviting list eners t o acknowledge both jo y and suffer ing. With a heartf elt chorus tha t repeats t he line \ "Hard times come ag ain no more, \ " the song resonat es with nostalgia and r esilience. It is often perf ormed by folk ensembles and featur es a varie ty of instruments, incl uding vocals, viol in, guitar , and banjo, encap sulating the spirit of American roots music.", " summary_nen ": { "language": "Chinese (Simplified)", "summary": " 《艰难时光再无来临》是斯蒂芬 · 福斯特创作的一首感人至深的美国小歌厅歌曲,探讨了悲伤与希 望的主题。歌词展现了生活的乐趣与艰辛之间的对比,邀请听众去感受快乐与痛苦的交织。歌曲中那句反复吟唱 的 “ 艰难时光再无来临 ” 深情地表达了怀旧与坚韧。它常常由民谣乐队演奏,伴随着人声、小提琴、吉他和班卓琴 等多种乐器,生动地展现了美国根源音乐的独特魅力。 " } } { "title": " ﻖﺻﯾدة كﺎﺗﺑﯾ ", "genres": [" ﺄﻏﺎﻧﯾ شﻌﺑﯾة ", " ترﺎﺛﯾة "], "description": " ﻖﺻﯾدة كﺎﺗﺑﯾ ، أو ﺄﺛﻧاء ﻻذھﺎﺑ ﻺﯩ ُ ﺄﺳ ُ ﻛدار ، ﮫﯾ موﻻ ﻊﺛﻣﺎﻧﯾ شﮭﯾر فﯾ ﺈﺳطﻧﺑوﻟ يروﯾ ﻖﺻة تﻧزھ ك ﺎﺗﺑ وﺎﻣرأة فﯾ ﺄﺳﻛدار فﯾ ﻼﻗرﻧ ﻼﺗﺎﺳﻋ ﻊﺷر .", "lyrics": " ﮫطﻟ ﻼﻣطر ﺄﺛﻧاء ﻻذھﺎﺑ ﻺﯩ ُ ﺄﺳ ُ ﻛدار .\n وﺗﻠ طﺧﺗ ﺄﻛﻣﺎﻣ سﺗرة كﺎﺗﺑﯾ ﻼطوﯾﻟة بﻼطﯾﻧ .\n ﺎﺳﺗﯾﻘظ ﻼﻛﺎﺗﺑ مﻧ ﻼﻧوﻣ وﻌﯾﻧھ مﺧﻣورھ .\n ﻼﻛﺎﺗﺑ لﯾ وﺄﻧ ا لھ فﻣا شﺄﻧ ﻻﺂﺧرﯾﻧ؟ \n كﻣ هو لﺎﺋﻗ ﻼﻘﻣﯾﺻ ﻼﻣﻧﺷﯩ ﻊﻠﯩ كﺎﺗﺑﯾ !\n وﺟدﺗ مﻧدﯾﻟا ﺄﺛﻧاء ﻻذھﺎ ﺑ لﺄﺳﻛدار .\n مﻠﺋﺗ مﻧدﯾﻠﯾ بﻼﻣﻠﺑﻧ .\n وﺟدﺗ كﺎﺗﺑﯾ ب ﺟوارﯾ وﺄﻧا ﺄﺑﺣﺛ ﻊﻧھ .\n ﻼﻛﺎﺗﺑ لﯾ وﺄﻧا لھ فﻣ ا شﺄﻧ ﻻﺂﺧرﯾﻧ؟ \n كﻣ هو لﺎﺋﻗ ﻼﻘﻣﯾﺻ ﻼﻣﻧﺷﯩ ﻊﻠﯩ كﺎﺗﺑﯾ !", "tags": [" ترﺎﺛ ", " شﻌﺑﯾ ", " ث ﻗﺎﻓة ", " ﻖﺻﺻ ", " ﺢﻧﯾﻧ "], "ensembles": [" فرﻗة شﻌﺑﯾة "], "instruments": [" صوﺗ ", " عود " , " بﯾﺎﻧو ", " طﺑﻟة "], "summary_en": " \" ﻖﺻﯾدة كﺎﺗﺑﯾ ,\ " or \ "While Going to Üsküdar , \ " is a popular Ott oman song from Istanbul t hat tells the story of a writer and a woman str olling in Üsküdar duri ng the 19 th century . The lyrics describe a rain y day when the writer' s long coat gets muddy , his sleepy st ate, and his feeli ngs for the w oman beside him. With themes of nos talgia and cultur al heritage, this piece is typically perf ormed by fol k ensembles and featur es vocals, oud, piano , and drums.", "summary_nen": { "language": "Amharic", "summary": " ቃሊዳ ካታቢ , ወይም \" ወደ ኡስክዳር በመሄድ ,\ " በኢስታንቡል የታወቀ ወታዊ ዘፈን ነው፣ ይህ ዘፈን በአስክዳር በወር የነበረ ወንጌላዊ ሰውና እንቁላል ወንድ ያንቀሳቅስ ወቅት ይወዳል። የቃልዎቹ የዝንጉ ቀን የተወዳዳል ነገር እና ዝንባል ባይዛው በእምነት እና በልብስ ይወዳል። እንደ ምናልባት ይከ በሩ፣ በሕይወት የተመለሰ ዝርዝር ይውውዝ። " } } Figure 9: T wo examples of LLM-processed te xt data presented in JSON format, representing the original metadata and LLM-generated summaries in multiple languages for different music pieces. D t -SNE V isualizations of CLaMP 2 Representations This section presents the t -SNE visualizations of feature representations e xtracted from CLaMP 2 across three benchmarks: W ikiMT , V GMIDI, and Pianist8. These visualizations illustrate the clus- tering patterns of musical representations and re- veal an intriguing alignment between the tw o data modalities—ABC notation and MIDI—without any fine-tuning of the model. As demonstrated in Fig. 10, the clarity of cluster- ing correlates with the classification performance from T able 1. Pianist8, which achie ves the highest accuracy , displays well-defined and tight clusters, signifying that the model adeptly apprehends the minute stylistic subtleties at the composer le vel. A particularly notable finding is the mirrored spa- tial alignment between ABC and MIDI across all datasets. This implies that, despite their dissimilar musical encodings, CLaMP 2 capture comparable latent structures within the feature space. The align- ment indicates that CLaMP 2 extracts modality- in variant features—resilient patterns that remain consistent across ABC and MIDI formats. These shared representations are likely to reflect profound musical semantics such as harmonic progressions, rhythmic architectures, or stylistic themes. This symmetry has practical consequences. It suggests that CLaMP 2 could enable cross-modal tasks, for example, retrie ving MIDI files based on ABC queries, without requiring specialized adap- tation. It also points to the potential for trans- fer learning between modalities, where a model trained on one format (e.g., ABC) could operate ef fectiv ely on another (e.g., MIDI). Future work could explore whether introducing explicit align- ment techniques, like contrastiv e learning, could further enhance cross-modal performance between these two modalities. These results spotlight both the strengths and limitations of CLaMP 2: the model demonstrates strong generalization across datasets, capturing meaningful musical patterns across di verse do- mains. Howe ver , it struggles with tasks in volving ov erlapping or ambiguous genre boundaries, sim- ilar to human perception, such as distinguishing between entities like Bethel and Hillsong, which it finds v ery similar . This suggests that while CLaMP 2 excels at identifying clear stylistic dif ferences, it may have dif ficulty differentiating between more closely related or subtle v ariations. Country Dance F olk Jazz Latin P op R&B R ock AB C MIDI (a) t -SNE visualizations on the WikiMT benchmark V0A0 V0A1 V1A0 V1A1 AB C MIDI (b) t -SNE visualizations on the VGMIDI benchmark Bethel Clayder man Einaudi Hancock Hillsong Hisaishi R yuichi Y iruma AB C MIDI (c) t -SNE visualizations on the Pianist8 benchmark Figure 10: t -SNE visualizations of feature represen- tations from CLaMP 2 (without fine-tuning) for three datasets: (a) W ikiMT , (b) VGMIDI, and (c) Pianist8. A notew orthy observ ation is the mirrored spatial align- ment of the ABC and MIDI representations, suggesting that CLaMP 2 effecti vely extracts modality-in variant musical semantics from both formats.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment