Event-Triggered Algorithms for Leader-Follower Consensus of Networked Euler-Lagrange Agents

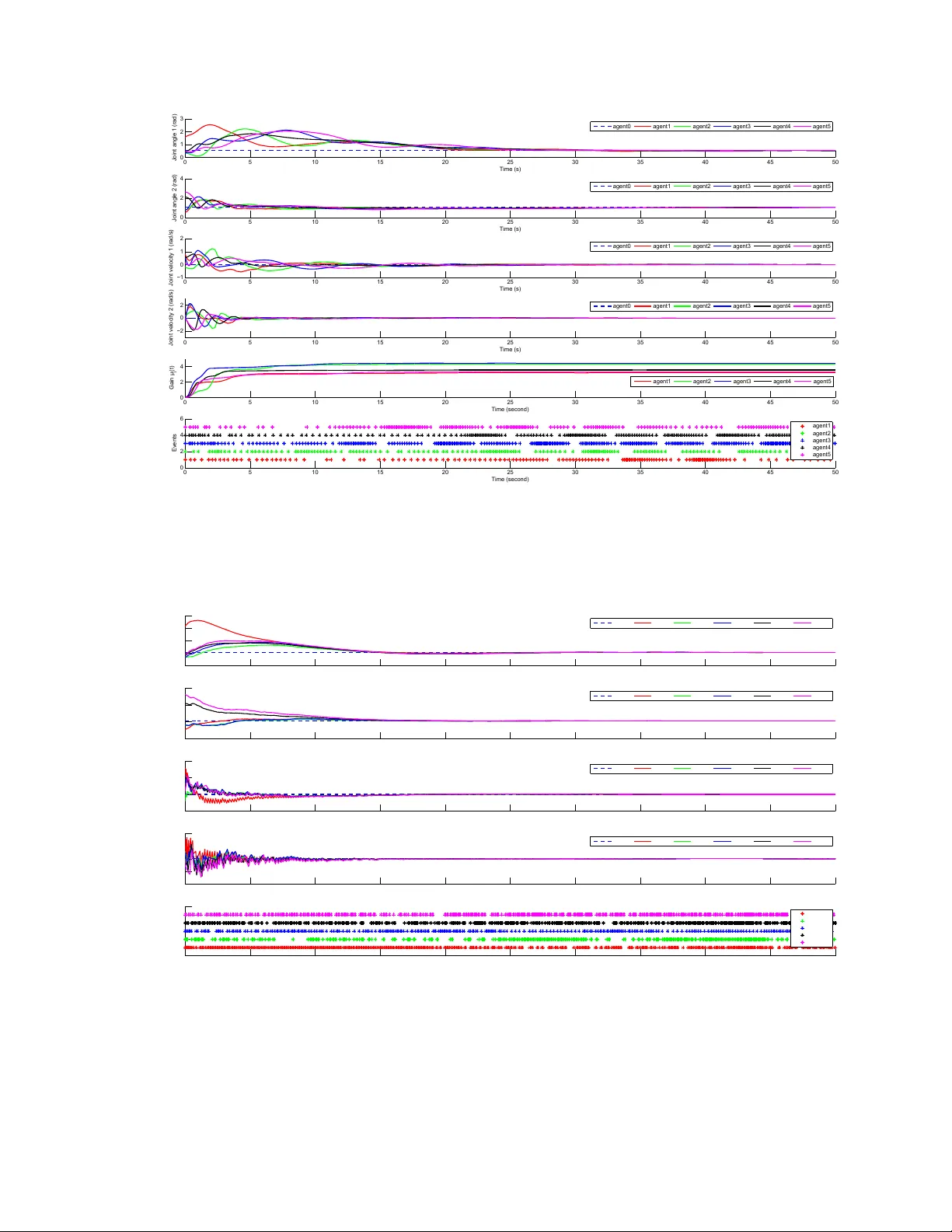

This paper proposes three distributed event-triggered control algorithms to achieve leader-follower consensus for a network of Euler-Lagrange agents. We first propose two model-independent algorithms for a subclass of Euler-Lagrange agent…

Authors: Qingchen Liu, Mengbin Ye, Jiahu Qin
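To illustrate the general idea behind event-triggered leader-follower consensus described in the abstract, here is a minimal sketch. It is not any of the three algorithms in the paper. It assumes several simplifications that do not appear in the source: the followers are double integrators (the trivial Euler-Lagrange system with M(q) = I, C = 0, g = 0), the leader is static, the followers form a path graph in which only follower 0 hears the leader, and the trigger threshold has the form eps0 + eps1·exp(-decay·t). The function `simulate` and all of its parameters are hypothetical names chosen for illustration. Each follower damps itself using its own velocity and rebroadcasts its position only when that position drifts from its last broadcast value by more than the threshold. A positive constant offset `eps0` is a device commonly used to rule out Zeno behaviour in continuous time.

```python
import math


def simulate(q_init, q_leader=1.0, kp=1.0, kd=2.0, eps0=1e-3, eps1=0.05,
             decay=0.5, dt=0.01, t_final=100.0):
    """Hypothetical event-triggered leader-follower consensus sketch.

    Followers are double integrators q_i'' = tau_i (an Euler-Lagrange system
    with M = I, C = 0, g = 0) on the path graph leader -> 0 - 1 - 2 - ...
    """
    n = len(q_init)
    # Undirected path among followers; only follower 0 is pinned to the leader.
    neighbors = [[j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)]
    pins = [1.0 if i == 0 else 0.0 for i in range(n)]
    q = list(q_init)
    v = [0.0] * n
    q_hat = list(q)  # last broadcast position of each follower
    events = [0] * n
    steps = int(round(t_final / dt))
    for k in range(steps):
        t = k * dt
        threshold = eps0 + eps1 * math.exp(-decay * t)
        # Trigger rule: broadcast when the local measurement error is too large.
        for i in range(n):
            if abs(q[i] - q_hat[i]) > threshold:
                q_hat[i] = q[i]
                events[i] += 1
        # Control law: broadcast positions for coupling, local velocity for damping.
        tau = []
        for i in range(n):
            coupling = pins[i] * (q_hat[i] - q_leader)
            coupling += sum(q_hat[i] - q_hat[j] for j in neighbors[i])
            tau.append(-kd * v[i] - kp * coupling)
        # Semi-implicit Euler step: update velocity first, then position.
        for i in range(n):
            v[i] += dt * tau[i]
            q[i] += dt * v[i]
    return q, events, steps


final_q, events, steps = simulate([3.0, -2.0, 5.0])
print([round(x, 3) for x in final_q], events, steps)
```

Comparing `events` against `steps` gives a rough sense of how much communication the trigger saves relative to broadcasting at every step. Because `eps0` is nonzero, the followers settle near the leader only up to a small residual band rather than exactly.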