Resource-constrained stereo singing voice cancellation

We study the problem of stereo singing voice cancellation, a subtask of music source separation, whose goal is to estimate an instrumental background from a stereo mix. We explore how to achieve performance similar to large state-of-the-art source se…

Authors: Clara Borrelli, James Rae, Dogac Basaran, Matt McVicar, Mehrez Souden, Matthias Mauch

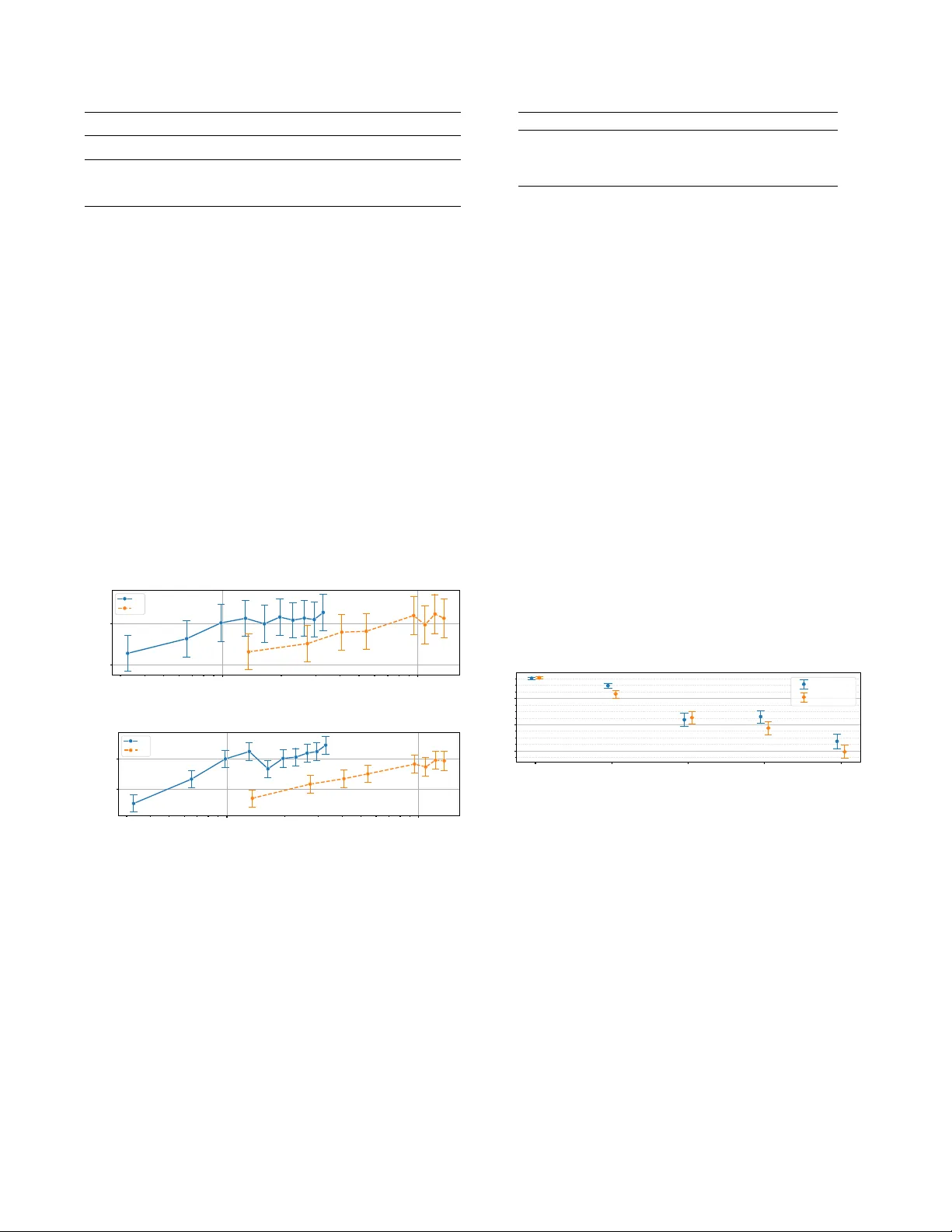

Clara Borrelli, James Rae, Dogac Basaran, Matt McVicar, Mehrez Souden, Matthias Mauch

Apple

ABSTRACT

We study the problem of stereo singing voice cancellation, a subtask of music source separation, whose goal is to estimate an instrumental background from a stereo mix. We explore how to achieve performance similar to large state-of-the-art source separation networks starting from a small, efficient model for real-time speech separation. Such a model is useful when memory and compute are limited and singing voice processing has to run with limited look-ahead. In practice, this is realised by adapting an existing mono model to handle stereo input. Improvements in quality are obtained by tuning model parameters and expanding the training set. Moreover, we highlight the benefits a stereo model brings by introducing a new metric which detects attenuation inconsistencies between channels. Our approach is evaluated using objective offline metrics and a large-scale MUSHRA trial, confirming the effectiveness of our techniques in stringent listening tests.

Index Terms: singing voice cancellation, music source separation

1. INTRODUCTION

In this work we present a study of optimisation and evaluation of a Singing Voice Cancellation (SVC) system. We analyse the performance of such a system in limited computational resource scenarios and identify which factors most affect output quality. SVC consists of removing the singing voice from the instrumental background in a fully mixed song, and can be interpreted as a special case of Music Source Separation (MSS). The main difference lies in the fact that MSS aims to retrieve one separate stem for each source present in the mix, while SVC considers only the instrumental accompaniment as the desired source.

Recent advances in deep learning have led to significant performance improvements in both SVC and MSS, with much improved objective metrics.
MSS algorithms typically use a spectral [1] or waveform [2] representation. In the first case, both input and output are expressed in the time-frequency domain and popular solutions adopt a U-Net architecture [1, 3], combined with band-split RNN [4], conditioning mechanisms [5] or multi-dilated convolutions [6]. Inspired by Wave-Net [7], waveform-based MSS methods tackle the problem end-to-end. An example is Demucs [2], which uses a 1-D convolutional encoder and decoder with a bidirectional LSTM. Later versions of Demucs include a spectral branch [8] and transformer layers [9]. Similar approaches have been adopted for Singing Voice Separation [10, 11].

All of these methods produce high quality results but are often designed to be run offline and in a non-causal setup, i.e., having access to the complete audio input, with no constraints on memory or run-time. These methods are not suitable for challenging yet realistic scenarios, where separation happens on low-memory edge devices, under streaming conditions and with hard constraints on processing time. Efficient solutions have been proposed to tackle speech source separation under these circumstances. Conv-TasNet [12] is an end-to-end masking-based architecture which employs a 1-D convolutional encoder and decoder, a Temporal Convolutional Network (TCN)-based separator and depth-wise convolutions to reduce network size. This architecture has also been adapted and expanded to address MSS [13, 14].

In this work Conv-TasNet is adapted and optimised for SVC such that the output is of comparable quality to more resource-hungry networks. Such an architecture guarantees a low memory footprint thanks to a limited number of parameters, and is able to operate in real-time. We train the model on a large dataset to achieve high quality output, and we show how training dataset size and quality affect the model's output quality.
The main contributions in this work are:

• To match a real-world setup, we modify Conv-TasNet to take advantage of stereo input and to produce stereo output. This design improves vocal attenuation consistency across stereo channels, and we propose a new stereo metric to verify this improvement.
• We propose a stereo separation asymmetry metric able to measure stereo artifacts and show that the proposed stereo architecture helps to prevent them.
• To validate the proposed approach, we conduct experiments to evaluate it both objectively and subjectively, the latter using a MUSHRA-style test.

We show that a relatively small and specialised model with appropriate training can reach quality comparable to much larger models.

©2024 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.

[Figure 1. (a) Network architecture. Encoder: 2D Conv with 384 kernels (N), kernel size (1, 64) (L), stride (1, 32) (L/2). Separator: cLN normalisation, 1x1 Conv with 448 kernels (B), stacks of S-Conv blocks with dilation D = 1, 2, ..., 2^(X-1) (X = 9, R = 4), then a 2D Conv with 2 kernels of size (1, 384) and a sigmoid producing the mask. Decoder: transposed 2D Conv with 1 kernel, kernel size (1, 64) (L), stride (1, 32) (L/2). (b) S-Conv block: 1x1 Conv with 448 kernels (H = B) and depthwise Conv (D Conv) with 448 kernels, kernel size 3 (P), dilation D and 448 groups (B), with PReLU activations and normalisation.]

Fig. 1: Vox-TasNet architecture and S-Conv block in detail. Letters in parentheses follow the original notation used in [12].
In square brackets we report input dimensionality as [Ch, W, H] throughout the network.

2. METHOD

A sampled stereo audio signal $x(t) \in \mathbb{R}^2$ with $t \in [0, T)$, which corresponds to a fully mixed track, can be modelled as a linear sum of an instrumental or accompaniment stem $y(t)$ and a vocal stem $v(t)$, i.e.,

$$x(t) = y(t) + v(t).$$

The goal of our system is to estimate $y(t)$ in real-time and with low memory requirements. A real-time scenario implies that the network output should be produced with low latency. This forces the model to be almost causal, i.e., the output at a specific time instant depends largely on past input samples and possibly on a small portion of future samples (the look-ahead), which can be buffered. To satisfy low memory footprint requirements, it is necessary to limit the number of network parameters.

2.1. Network Architecture

We propose Vox-TasNet, an adaptation of Conv-TasNet [12] to the task of SVC in a stereo setup, hence able to jointly estimate both left and right channels. Similar to Conv-TasNet, the network follows a masking-based approach and is composed of three modules, as shown in Fig. 1. The Encoder block reduces the time resolution of the raw audio waveform using a two-dimensional convolutional (2D Conv) layer. This creates separate embeddings for the left and right channels of the mix whilst benefiting from the information in both. These embeddings are stacked and fed into the Separator block. The Separator block consists of multiple stacks of separable-depthwise convolutional (S-Conv) layers with increasing dilation and estimates masks for the left and right channel representations. Only the S-Conv layers in the first group are non-causal, making the Separator block almost entirely causal. This allows the network to look ahead for a small time interval.
Masking is applied via element-wise multiplication and the resulting masked representations are fed into the Decoder (Transp 2D Conv), which outputs the stereo audio accompaniment. Aside from the stereo setup, there are two main differences between Vox-TasNet and Conv-TasNet. First, the Separator's skip connections are removed to reduce memory footprint, as originally proposed in [15]. Second, we increase the number of S-Conv layers in each group, but dimensionality is fixed inside each S-Conv layer rather than expanded and squeezed. This choice allows the separator to learn longer time dependencies without increasing the number of parameters.

2.2. Stereo separation asymmetry metric

During informal listening experiments, we noticed that a single-channel model produced SVC results which were audibly inconsistent across channels in terms of loudness and attenuation. On the other hand, a stereo-native architecture is able to prevent these artifacts and to exploit cross-channel information as well. This led us to devise a stereo separation asymmetry metric which attempts to measure this effect.

Let us consider a source separation metric, e.g., SI-SDR. It can be formulated in a frame-wise setup, i.e., comparing short frames of the prediction and ground-truth signals. Let us define $(\text{SI-SDR}_L(n), \text{SI-SDR}_R(n))$ as the frame-wise metric computed for $N$ windows of length $W$ and hop size $H$ on the left and right channel respectively, with $n$ corresponding to the window index. We define

$$\Delta_{\text{SI-SDR}}(n) = \left| \text{SI-SDR}_L(n) - \text{SI-SDR}_R(n) \right|$$

as the absolute value of the difference between the left and right frame-wise metric values. As stereo metric we use the average over time of $\Delta_{\text{SI-SDR}}(n)$,

$$\mathrm{SSA}_{\text{SI-SDR}} = \frac{1}{N} \sum_{n} \Delta_{\text{SI-SDR}}(n).$$

Larger values of $\mathrm{SSA}_{M}$ (for a given metric $M$, here SI-SDR) correspond to a larger difference between the left and right channels' separation metric over time, hence to quality inconsistency.
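
The frame-wise definition of the stereo separation asymmetry metric can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`si_sdr`, `framewise_si_sdr`, `ssa_si_sdr`), the mean removal before projection, the small `eps` that guards silent frames, and the decision to drop a trailing partial frame are all choices made here for illustration. The default window and hop (1.5 s and 0.75 s) and the 44,100 Hz sampling rate are the values the paper reports for its evaluation.

```python
import numpy as np


def si_sdr(estimate, reference, eps=1e-8):
    """Scale-invariant SDR in dB between two 1-D signals of equal length."""
    # Assumption: remove the mean so both signals are zero-mean.
    reference = reference - reference.mean()
    estimate = estimate - estimate.mean()
    # Optimal scaling of the reference that best explains the estimate.
    alpha = np.dot(estimate, reference) / (np.dot(reference, reference) + eps)
    target = alpha * reference
    residual = estimate - target
    return 10.0 * np.log10((np.sum(target ** 2) + eps) / (np.sum(residual ** 2) + eps))


def framewise_si_sdr(estimate, reference, win, hop):
    """SI-SDR per frame; win and hop are given in samples."""
    if len(reference) < win:
        raise ValueError("signal is shorter than one analysis window")
    # Assumption: a trailing partial frame is dropped.
    n_frames = 1 + (len(reference) - win) // hop
    return np.array([
        si_sdr(estimate[n * hop:n * hop + win], reference[n * hop:n * hop + win])
        for n in range(n_frames)
    ])


def ssa_si_sdr(estimate, reference, sr=44100, win_s=1.5, hop_s=0.75):
    """Stereo separation asymmetry for arrays of shape (2, T): left, right."""
    win, hop = int(win_s * sr), int(hop_s * sr)
    left = framewise_si_sdr(estimate[0], reference[0], win, hop)
    right = framewise_si_sdr(estimate[1], reference[1], win, hop)
    # Mean over frames of the absolute left/right difference, in dB.
    return float(np.mean(np.abs(left - right)))
```

Calling `ssa_si_sdr(estimated_accompaniment, true_accompaniment)` on two `(2, T)` arrays returns a single value in dB. An estimate whose residual is equally strong in both channels scores close to 0, while one that attenuates vocals well in one channel and poorly in the other scores higher.
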
Note that $\Delta_{\text{SI-SDR}}(n)$ and $\mathrm{SSA}_{\text{SI-SDR}}$ can be defined for any other dB-based source-separation metric (e.g., SIR or other BSS-Eval metrics).

| Dataset | Num Tracks (Train/Test) | Curated | Use |
|---|---|---|---|
| A | 3,290 | Yes | Train |
| B | 3,797 (3,375/422) | Yes | Train/Test |
| C | 13,625 | No | Train |
| MUSDB | 150 (100/50) | Yes | Train/Test |

Table 1: Dataset specifications.

3. EXPERIMENTAL SETUP

To train and validate our system, we consider four different datasets. Three datasets are internal and we refer to them as A, B and C. For benchmarking against state-of-the-art models, we use the publicly available MUSDB [16] dataset. To be compatible with the task of SVC, the instrumental accompaniment track is obtained by linearly summing the bass, drums and other stems. Dataset specifications are reported in Table 1. For all internal datasets, we apply a pre-processing step to filter out tracks for which the vocal stem is silent for more than 50% of the song. Note from Table 1 that C is larger than A and B, but it is not musically curated by experts and hence potentially contains noisier samples.

To keep experiments comparable, we use a fixed set of training parameters in all of them. The sampling rate is fixed at 44,100 Hz, and each training sample is composed of 4 s of stereo audio, randomly sampled from each song at training time. The batch size is equal to 6 and each model is trained for 500 epochs. We use the Adam optimizer [17] with an initial learning rate of 0.0001, scheduled with a decay parameter equal to 0.99. The loss function is a weighted average of the L1 distance in the time domain and a multi-resolution spectral L1 distance using window lengths equal to 0.01 s, 0.02 s and 0.09 s and hop sizes equal to half of the window lengths. Time and spectral losses are weighted with weights 0.875 and 0.125 respectively.

As a strong baseline, we use HybridDemucs [8], a large music source separation model without real-time or memory constraints.
We aim to match the quality obtained with HybridDemucs, while keeping as reference a resource-constrained scenario. HybridDemucs is trained on the augmented MUSDB dataset and extracts four separate stems from the original mix: drums, bass, vocal and others. We obtain the accompaniment mix as the sum of the drums, bass and others stems. Additionally, we consider a mono version of Vox-TasNet, namely MonoVox-TasNet. It has a similar architecture to Vox-TasNet, as shown in Fig. 1, but 2-D convolutions are replaced with 1-D convolutions. A stereo output is obtained by separately processing the left and right channels and concatenating the results.

To evaluate the proposed system, we use both objective and subjective evaluation. For objective evaluation, we use the Scale-Invariant Source-to-Distortion Ratio (SI-SDR) [18] computed between the ground-truth accompaniment and the estimated accompaniment. Moreover, we use the stereo separation asymmetry metric presented in Section 2.2, computed using SI-SDR with W = 1.5 s and H = 0.75 s, to compare our stereo and mono models.

As objective metrics do not always correlate strongly with human perception of audio quality [19], we report subjective evaluation results obtained via a Multiple Stimuli with Hidden Reference and Anchor (MUSHRA)-style test [20]. For each MUSHRA trial, participants hear a 10-second clip of a mix and a reference (i.e., all stems except the vocals). Using the reference as a benchmark, participants use a 0-100 quality scale to rate the output obtained with HybridDemucs, Vox-TasNet, and MonoVox-TasNet trained on A and B for 1500 epochs. Also embedded in the test set were the hidden reference (expected to be rated as excellent) and a lower anchor (expected to be rated as poor). As a lower anchor we use the original Conv-TasNet architecture without skip connections in the Separator, trained on A only. Each participant completed MUSHRA trials for four songs.
We exclude judgments from participants if they (a) rated the hidden reference lower than 90 on more than one trial or (b) rated the lower anchor higher than 90 on more than one trial. We test the robustness of our results by comparing subjective model performance on 50 tracks from the MUSDB test set and on 53 tracks from the internal datasets, a set we denote I. Participation was completed (a) online, (b) while using headphones, and (c) after receiving training and completing practice trials. As MUSHRA scores are bounded (between 0 and 100), we model the 2,960 judgements obtained from 99 participants as proportions (dividing each score by 100) using beta regression with a logit link function [21], applying a transformation to prevent 0's or 1's [22]. Results are transformed back to the original 0-100 scale for ease of interpretation. Dependencies in the data (e.g., each participant made multiple judgements) led us to use a Bayesian multilevel model with weakly informative priors. All parameters met the $\hat{R} < 1.1$ acceptance criterion [23], indicating model convergence.

4. RESULTS

4.1. Objective Evaluation

We first focus on the analysis of objective metrics on the two evaluation datasets, MUSDB and B. In Table 2 we show the results for two versions of Vox-TasNet compared to the HybridDemucs baseline, together with the corresponding latency and memory footprint. Both Vox-TasNet versions and the baseline perform differently on the two test datasets. Cross-dataset testing affects the baseline evaluated on B more strongly than it affects Vox-TasNet trained on A. Considering SI-SDR values together with the required resources, it is evident that Vox-TasNet trained on A reaches quality comparable to the baseline with less than 10% of the network parameters and a small look-ahead. This analysis supports the importance of the training dataset in achieving high-quality results while meeting limited resource constraints.
| Model | Training dataset | Test dataset | SI-SDR (dB) ↑ | Receptive field (s) | Look-ahead (s) | Num param (M) |
|---|---|---|---|---|---|---|
| HybridDemucs | MUSDB | MUSDB | 16.34 ± 3.03 | 5.00 | 2.5 | 80 |
| HybridDemucs | MUSDB | B | 14.02 ± 3.44 | 5.00 | 2.5 | 80 |
| Vox-TasNet | MUSDB | MUSDB | 9.90 ± 3.22 | 1.86 | 0.37 | 7.5 |
| Vox-TasNet | MUSDB | B | 8.59 ± 3.09 | 1.86 | 0.37 | 7.5 |
| Vox-TasNet | A | MUSDB | 13.27 ± 3.13 | 1.86 | 0.37 | 7.5 |
| Vox-TasNet | A | B | 12.23 ± 3.28 | 1.86 | 0.37 | 7.5 |

Table 2: Mean and standard deviation of SI-SDR obtained by training Vox-TasNet on A and MUSDB compared to the baseline, together with the corresponding latency and memory impact.

The second experiment aims at understanding how training dataset size and quality impact model performance. In this case, both A and C are partitioned into subsets of increasing size. In Fig. 2 we report SI-SDR statistics as a function of the number of tracks in the training partitions. As expected, in both cases a larger training dataset leads to more effective voice cancelling. Comparing the two training datasets at similar dataset size, training on A always leads to higher SI-SDR and the best result is obtained with the largest A partition, despite C being much larger. This result highlights the importance of training data quality, since C is not musically curated, unlike A. We also trained the model combining the A and C datasets in different proportions, but no significant improvements were achieved.

[Figure 2: two panels, (a) and (b), each plotting SI-SDR [dB] against training dataset size (logarithmic axis, 10^3 to 10^4 tracks) for training datasets A and C.]

Fig. 2: SI-SDR mean and standard error for Vox-TasNet obtained by training on different partitions of the two training datasets, tested on MUSDB (a) and on B (b).

The final experiment aims at analysing the differences between the proposed Vox-TasNet stereo model and its mono version, MonoVox-TasNet. Both models have been trained on A. In the first column of Table 3 we report SI-SDR computed separately on the left and right channels, indicated as $\text{SI-SDR}_{\text{mono}}$.
We verify that the differences between the SI-SDR distributions of the mono and stereo models on each evaluation dataset are not statistically significant, hence overall quality is not affected by the stereo architecture. In the second column we report values for the stereo metric $\mathrm{SSA}_{\text{SI-SDR}}$ proposed in Sec. 2.2. A lower value indicates fewer stereo artifacts and higher symmetry between left and right channel vocal attenuation. Results highlight that the stereo architecture greatly improves output quality in terms of multichannel artifacts on both test datasets.

| Model | Test dataset | $\text{SI-SDR}_{\text{mono}}$ (dB) ↑ | $\mathrm{SSA}_{\text{SI-SDR}}$ (dB) ↓ |
|---|---|---|---|
| Vox-TasNet | MUSDB | 12.79 ± 3.19 | 1.10 ± 0.45 |
| Vox-TasNet | B | 11.64 ± 3.19 | 1.08 ± 0.58 |
| MonoVox-TasNet | MUSDB | 13.13 ± 2.94 | 1.81 ± 0.73 |
| MonoVox-TasNet | B | 11.85 ± 3.21 | 2.30 ± 1.34 |

Table 3: Mean and standard deviation of $\text{SI-SDR}_{\text{mono}}$ and of $\mathrm{SSA}_{\text{SI-SDR}}$ for Vox-TasNet compared with the mono version, both trained on A and tested on B and MUSDB. For $\text{SI-SDR}_{\text{mono}}$, higher is better. For $\mathrm{SSA}_{\text{SI-SDR}}$, lower is better.

4.2. Subjective Evaluation

In Fig. 3 we report MUSHRA scores for all conditions tested on I and MUSDB. As expected, all models had lower scores than the reference audio on each evaluation dataset. Moreover, Vox-TasNet and MonoVox-TasNet had quality scores lower than HybridDemucs, but higher than the original Conv-TasNet architecture. Generally, models were perceived to have higher quality when tested on the MUSDB (vs. I) dataset. Finally, while there was no meaningful difference between the mono and stereo models for the MUSDB dataset, Vox-TasNet had significantly better quality than MonoVox-TasNet on the I dataset.

[Figure 3: estimated rating (axis ticks at 40, 60 and 80) per model (Reference, HybridDemucs, Vox-TasNet, MonoVox-TasNet, Conv-TasNet) for the MUSDB and I test sets.]

Fig. 3: Estimated MUSHRA scores for all conditions tested on I and MUSDB. Intervals are 95% credible intervals.

5. CONCLUSIONS

In this work we presented an efficient stereo SVC architecture, able to operate in real-time and with low memory requirements. By training the model on a large dataset, we reached performance comparable to larger, non-real-time models. Moreover, we showed the benefits of using a stereo architecture through a new stereo separation asymmetry metric which can be formulated for any source separation metric. The results from objective evaluation are validated through a large-scale MUSHRA test. We believe this study may help in highlighting the key factors that enable the use of deep learning in real-time music processing.

6. REFERENCES

[1] M. Vardhana, N. Arunkumar, S. Lasrado, E. Abdulhay, and G. Ramirez-Gonzalez, “Convolutional neural network for bio-medical image segmentation with hardware acceleration,” Cognitive Systems Research, vol. 50, pp. 10–14, 2018.
[2] A. Défossez, N. Usunier, L. Bottou, and F. Bach, “Music source separation in the waveform domain,” arXiv preprint arXiv:1911.13254, 2019.
[3] R. Hennequin, A. Khlif, F. Voituret, and M. Moussallam, “Spleeter: a fast and efficient music source separation tool with pre-trained models,” Journal of Open Source Software, vol. 5, no. 50, pp. 2154, 2020.
[4] Y. Luo and J. Yu, “Music source separation with band-split RNN,” IEEE/ACM Transactions on Audio, Speech, and Language Processing (TASLP), vol. 31, pp. 1893–1901, 2023.
[5] W. Choi, M. Kim, J. Chung, and S. Jung, “LaSAFT: Latent source attentive frequency transformation for conditioned source separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2021.
[6] N. Takahashi and Y. Mitsufuji, “D3net: Densely connected multidilated densenet for music source separation,” arXiv preprint arXiv:2010.01733, 2020.
[7] D. Stoller, S. Ewert, and S. Dixon, “Wave-U-Net: A multi-scale neural network for end-to-end audio source separation,” in International Society for Music Information Retrieval Conference (ISMIR), 2018.
[8] A. Défossez, “Hybrid spectrogram and waveform source separation,” in Proceedings of the MDX Workshop, 2021.
[9] S. Rouard, F. Massa, and A. Défossez, “Hybrid transformers for music source separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2023.
[10] W. Choi, M. Kim, J. Chung, D. Lee, and S. Jung, “Investigating U-nets with various intermediate blocks for spectrogram-based singing voice separation,” in International Society for Music Information Retrieval Conference (ISMIR), 2020.
[11] X. Ni and J. Ren, “FC-U²-Net: A novel deep neural network for singing voice separation,” IEEE/ACM Transactions on Audio, Speech, and Language Processing (TASLP), vol. 30, pp. 489–494, 2022.
[12] Y. Luo and N. Mesgarani, “Conv-TasNet: Surpassing ideal time–frequency magnitude masking for speech separation,” IEEE/ACM Transactions on Audio, Speech, and Language Processing (TASLP), vol. 27, no. 8, pp. 1256–1266, 2019.
[13] Y. Hu, Y. Chen, W. Yang, L. He, and H. Huang, “Hierarchic temporal convolutional network with cross-domain encoder for music source separation,” IEEE Signal Processing Letters, vol. 29, pp. 1517–1521, 2022.
[14] D. Samuel, A. Ganeshan, and J. Naradowsky, “Meta-learning extractors for music source separation,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2020.
[15] A. Pandey and D. Wang, “TCNN: Temporal convolutional neural network for real-time speech enhancement in the time domain,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019.
[16] Z. Rafii, A. Liutkus, F.-R. Stöter, S. I. Mimilakis, and R. Bittner, “MUSDB18-HQ - an uncompressed version of MUSDB18,” https://doi.org/10.5281/zenodo.3338373.
[17] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” arXiv preprint arXiv:1412.6980, 2014.
[18] J. Le Roux, S. Wisdom, H. Erdogan, and J. R. Hershey, “SDR–half-baked or well done?,” in IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2019.
[19] E. Cano, D. FitzGerald, and K. Brandenburg, “Evaluation of quality of sound source separation algorithms: human perception vs quantitative metrics,” in European Signal Processing Conference (EUSIPCO), 2016.
[20] B. Series, “Method for the subjective assessment of intermediate quality level of audio systems,” International Telecommunication Union Radiocommunication Assembly, 2014.
[21] S. Ferrari and F. Cribari-Neto, “Beta regression for modelling rates and proportions,” Journal of Applied Statistics, vol. 31, no. 7, pp. 799–815, 2004.
[22] M. Smithson and J. Verkuilen, “A better lemon squeezer? Maximum-likelihood regression with beta-distributed dependent variables,” Psychological Methods, vol. 11, no. 1, pp. 54, 2006.
[23] A. Gelman, J. B. Carlin, H. S. Stern, D. B. Dunson, A. Vehtari, and D. B. Rubin, Bayesian Data Analysis, Chapman and Hall/CRC, 2015.
