Haptic-Assisted Collaborative Robot Framework for Improved Situational Awareness in Skull Base Surgery

Skull base surgery is a demanding field in which surgeons operate in and around the skull while avoiding critical anatomical structures including nerves and vasculature. While image-guided surgical navigation is the prevailing standard, limitation st…

Authors: Hisashi Ishida, Manish Sahu, Adnan Munawar

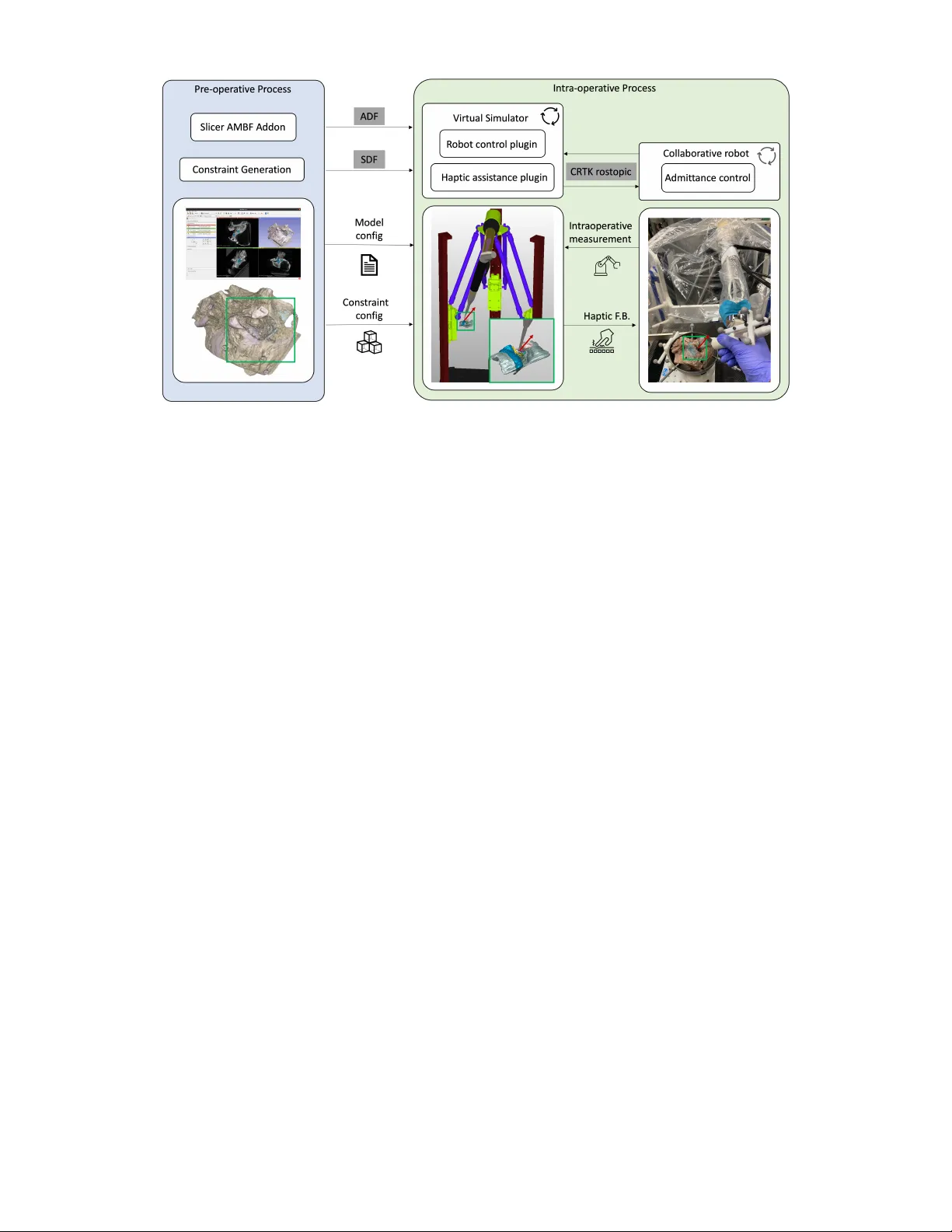

Hisashi Ishida (1)*, Manish Sahu (1)*, Adnan Munawar (1), Nimesh Nagururu (2), Deepa Galaiya (1, 2), Peter Kazanzides (1), Francis X. Creighton (1, 2), and Russell H. Taylor (1, 2)

(1) Department of Computer Science, Johns Hopkins University, Baltimore, MD 21218, USA.
(2) Department of Otolaryngology-Head and Neck Surgery, Johns Hopkins University School of Medicine, Baltimore, MD 21287, USA.
* These authors contributed equally. Email: hishida3@jhu.edu

Abstract: Skull base surgery is a demanding field in which surgeons operate in and around the skull while avoiding critical anatomical structures including nerves and vasculature. While image-guided surgical navigation is the prevailing standard, limitations still exist, requiring personalized planning and recognizing the irreplaceable role of a skilled surgeon. This paper presents a collaboratively controlled robotic system tailored for assisted drilling in skull base surgery. Our central hypothesis posits that this collaborative system, enriched with haptic assistive modes to enforce virtual fixtures, holds the potential to significantly enhance surgical safety, streamline efficiency, and alleviate the physical demands on the surgeon. The paper describes the intricate system development work required to enable these virtual fixtures through haptic assistive modes. To validate our system's performance and effectiveness, we conducted initial feasibility experiments involving a medical student and two experienced surgeons. The experiment focused on drilling around critical structures following cortical mastoidectomy, utilizing dental stone phantom and cadaveric models. Our experimental results demonstrate that our proposed haptic feedback mechanism enhances the safety of drilling around critical structures compared to systems lacking haptic assistance. With the aid of our system, surgeons were able to safely skeletonize the critical structures without breaching any critical structure, even under an obstructed view of the surgical site.

I. INTRODUCTION

Skull base surgery poses a profound challenge, demanding the utmost precision as surgeons dissect around critical structures, including nerves and vessels, which are often concealed by operable tissue at sub-millimeter distances [1]. Achieving this precision necessitates an intricate understanding of precise anatomy and sub-millimetric control during drilling, a skill acquired through extensive training and practice [2]. Furthermore, surgeons must adapt their approach to accommodate the substantial anatomical variation observed among patients. In light of this complexity, the integration of computer-aided and robotic assistance holds significant promise [3]. Robotic assistance, in particular, offers the advantage of providing precise, tremor-free manipulation of surgical instruments. One pivotal component of many lateral skull base surgeries is mastoidectomy, or removal of mastoid temporal bone [4], and robotic assistance has found a valuable role in this procedure [5]. However, mastoidectomy often represents only the initial step in these lateral skull base surgeries, and further drilling in the vicinity of critical structures is imperative to access the surgical targets, including neoplasms and the organs of hearing. Consequently, robotic systems must evolve to encompass assistance throughout the entirety of skull base surgery [6]. Safe deployment of such robotic systems into the skull base requires the robot to develop situational awareness and safety-driven virtual fixtures (also known as active constraints) [7] to control its interaction with the surgical environment [8], [9].

The primary objective of this work is to enhance surgical situational awareness by augmenting human perception through the provision of safety-driven haptic assistance during the removal of soft tissues surrounding critical structures in the post-mastoidectomy phase of robotic-assisted surgery (Fig. 1). A major challenge in enabling such haptic assistance lies in generating appropriate feedback proportional to the hand force applied by the surgeon to guide the robot while computationally compensating for the gravitational forces exerted by the drill mounted on the robot.

This work outlines the system development process and pipeline required to facilitate haptic feedback during skull base drilling using a cooperative robot. This encompasses the integration of the cooperative robot with a real-time simulation-driven navigation system, as well as the computational elimination of gravitational forces attributed to the drill's weight. Moreover, the guidance virtual fixtures are derived directly from patient volumetric imaging data to provide patient-specific assistance.

To demonstrate the performance and efficacy of our system, we conducted initial feasibility experiments involving a medical student and two experienced surgeons. Surgeons drilled around critical structures following cortical mastoidectomy in phantom and cadaveric models. Our experimental results demonstrate that the proposed feedback mechanism enhances the safety of drilling around critical structures when compared to a system lacking haptic assistance.

The key contributions of the work are:
1) A comprehensive pipeline that spans from creation of complex anatomical constraints to intraoperative collaborative robotic assistance.
2) The development and integration of safety-driven haptic assistance to facilitate skull base drilling.
3) Initial feasibility experiments to showcase the system's accuracy and performance, particularly in scenarios involving intricate anatomical features such as the facial nerve.

Fig. 1. Conceptual overview of the safety-driven haptic assistance: Compliance force, defining the preferred direction of the drill based on the robot's position relative to the anatomical surface, is computed in the dynamic simulation model. This force is fed back to the collaborative robot controller to generate haptic feedback.

It is important to note that although our system is specifically designed for collaborative robotic assistance in skull base surgery, it is constructed using open-source libraries and adheres to industry-standard data formats. Consequently, our approach has the potential to be applicable across a wide range of robotic systems, surgical procedures, and anatomical regions.

II. RELATED WORK

Multiple works have introduced robotic systems designed to assist humans in various manipulation tasks [7]. These systems can be broadly categorized based on the degree of automation they offer, ranging from fully automated to semi-autonomous control. For the sake of brevity, we specifically consider robotic systems applicable to head and neck procedures. While autonomous bone drilling systems have shown promise [10], they necessitate precise planning for every possible scenario and surgical approach, and they lack the invaluable input of a surgeon's procedural knowledge, technical skill, and real-time perceptual feedback. In contrast, cooperative systems involve both the surgeon and the robot simultaneously holding the drill. This arrangement allows the drill to move in proportion to the surgeon's applied force while adhering to active constraints set by the controller. Safety constraints can be activated when the drill nears a critical structure and remain inactive otherwise.
In our work, we employ a cooperatively controlled robot for robotic-assisted drilling.

Within the context of safety-driven robotic assistance, Ding et al. [11] and Xia et al. [12] implemented planar virtual constraints in their control systems and demonstrated their utility in phantom drilling. However, planar constraints, while useful for defining the robot's workspace, cannot model anatomical structures, which are inherently non-planar. Other studies have used segmented CT scans to derive complex anatomical constraints directly, enforcing a safety margin of 3 mm between critical structures and the drill [6], [13]. While these works showcased the utility of enforcing safety margins with a cooperative robot during mastoidectomy procedures, these safety margins were frequently breached, resulting in additional time spent reactivating the robot and suboptimal user experiences.

Our paper focuses on providing haptic feedback to guide the drill motion away from critical structures, instead of enforcing hard safety barriers. Unlike forbidden-region-based virtual fixtures, which aim to keep the manipulator's tool away from restricted areas, our haptic-based virtual fixtures assist the user in moving the robot manipulator along desired paths or surfaces [7], [14]. To achieve this, we derive distance fields [15] from patient volumetric imaging data. These distance fields define the preferred direction of the drill based on the robot's position relative to the desired surface. By restraining user-commanded motions in non-desired directions, we establish passive guidance, steering the drill away from critical structures.

III. METHODOLOGY

At the heart of our system, we have a cooperatively controlled robot and an interactive dynamic simulation environment, Asynchronous Multi-Body Framework (AMBF) [16].
This simulation environment comprises three primary components: a patient anatomical model, a collaborative robot, and a surgical drill. The anatomical model is crafted from segmented preoperative CT images and then registered with the actual patient anatomy and the robot's workspace. This ensures that the virtual representation mirrors the real-world anatomical structures and their safe operational boundaries. During operation, the simulation model continuously gathers data through the robot's state and optical tracking. Simultaneously, it offers real-time situational awareness by establishing spatial relationships between surgical instruments and the surrounding tissues. This dynamic model forms the basis for implementing virtual fixtures that envelop critical anatomical structures, guiding the surgeon's actions while ensuring precision and safety during the procedure.

In Section III-A, the overview of the system (Fig. 2) and the interaction between its components are described. The constraints are modeled using the Signed Distance Field (SDF) for the anatomical volumes (Section III-B). The "Haptic assistance plugin" monitors the distance between the virtual instruments and different anatomies in real time and generates the compliance force based on the SDF (Section III-C). The compliance force is fed into the collaboratively controlled robot to produce a sensation of haptic feedback (Section III-D.2). Lastly, we discuss the calibration and registration pipeline that we used (Section III-E).

A. System overview

From the preoperative CT scans, our system generates SDFs for critical anatomies and provides real-time haptic feedback. This incorporates intricate anatomical structures, mitigating collision risks with anatomies and enhancing the safety of the operator. The process involves the use of 3D Slicer [17], a widely adopted imaging software, for loading and annotating CT scans. The AMBF Slicer plugin is employed to convert the "seg.nrrd" format into AMBF Description Files (ADF) compatible with the virtual simulator. Once the SDF for all segmented anatomies is generated, the "Haptic assistance plugin" calculates the proposed haptic feedback and sends it back as a compliance force to the robot. Communication between the real environment and the simulator follows the Collaborative Robotics Toolkit (CRTK) [18] convention, promoting modularity and integration with other robotic systems. We use the robot's built-in admittance controller while only incorporating the SDF-based force feedback from the simulator for better interoperability with our system.

Fig. 2. System overview. (Left) Preoperative creation of patient model and constraints configuration. (Middle) Intra-operative dynamic simulation of the robot and anatomy as well as generation of compliance force. (Right) Collaborative robot receiving the compliance force and augmenting the safety of the procedure.

B. SDF calculation and volume representation

An SDF volume for a specific anatomical structure, $\mathcal{A}$, is represented as a 3D voxel grid, $S_{\mathcal{A}}$, where each voxel stores the distance between the voxel and the closest voxel containing this specific anatomic structure. In this grid, positive values indicate voxels outside the anatomy, while negative values indicate voxels on the inside. In this paper, we adopt the method proposed in our previous work [15], which internally uses Saito and Toriwaki's method [19] to calculate the SDF in a parallelized manner.

From the SDF volume, $S_{\mathcal{A}}$, the closest distance between the drill tip, $x \in \mathbb{R}^3$, and the nearest anatomy, $d_{\mathcal{A}}(x) \in \mathbb{R}$, is calculated, and using the finite difference, the direction from the drill tip to the closest point of the nearest anatomy, $\hat{d}_{\mathcal{A}}$ ($|\hat{d}_{\mathcal{A}}| = 1$), is derived. Please refer to [15] for further details.

C. SDF-based haptic feedback

Given $n \in \mathbb{Z}$ critical anatomies in the surgical scene, the SDF-based force feedback, $F_{SDF} \in \mathbb{R}^3$, can be written as:

$$
F^{(n)}_{SDF} =
\begin{cases}
F_{max}\,\hat{d}_{\mathcal{A}} & \text{if } d_{\mathcal{A}} < \tau^{(n)}_0 \\
F_{max}\exp\!\left(\lambda\left(\tau^{(n)}_0 - d_{\mathcal{A}}\right)\right)\hat{d}_{\mathcal{A}} & \text{if } \tau^{(n)}_0 < d_{\mathcal{A}} < \tau^{(n)}_f \\
0 & \text{if } \tau^{(n)}_f < d_{\mathcal{A}}
\end{cases}
\tag{1}
$$

$$
F_{SDF} = \sum_{n} F^{(n)}_{SDF} \tag{2}
$$

where $F^{(n)}_{SDF} \in \mathbb{R}^3$ represents the force from the $n$th anatomy in the scene. The closest distance to the $n$th anatomy, $d_{\mathcal{A}}$, and the direction toward the nearest point in the $n$th anatomy, $\hat{d}_{\mathcal{A}}$, are derived as described in the previous section. $\tau^{(n)}_0 \in \mathbb{R}$ and $\tau^{(n)}_f \in \mathbb{R}$ are the thresholds to activate the hard constraint and the haptic feedback, respectively. $F_{max}$ is the maximum force in newtons [N], and $\lambda \in \mathbb{R}$ is a decay constant that determines how steeply the force increases when the virtual drill is close to the anatomy.

To maintain the usability of the cooperative robot, we adjust the SDF-based force feedback, $F_{SDF}$, according to the user-applied force, $F_H \in \mathbb{R}^3$, by setting $F_{max} = |F_H|$. Additionally, the compliance force, $F_C \in \mathbb{R}^3$, that will be sent to the collaborative robot is adjusted using the following rule:

$$
F_C =
\begin{cases}
F_{SDF} & \text{if } (F_H + F_{SDF}) \cdot F_{H,\parallel} < 0 \\
-F_{H,\parallel} & \text{otherwise}
\end{cases}
\tag{3}
$$

where $F_{H,\parallel} \in \mathbb{R}^3$ is the component of the hand force that is parallel to $F_{SDF}$. Equation (3) regulates the SDF-based force so that the total force ($F_H + F_C$) that controls the robot remains aligned with the direction of the user's applied force (Fig. 3).

Fig. 3. Here, $F_H$ denotes the user-applied force and $F_C$ is the compliance force generated using the SDF. The compliance force is proportional to the user-applied force and guides the motion in the preferred direction.

D. Virtual simulator and robotic system

1) Virtual simulation: AMBF has previously been used to demonstrate virtual reality simulation for skull base surgery [20], [21].
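
As a rough illustration of Section III-B and Equations (1) and (2), the sketch below derives a unit direction from an SDF voxel grid by central finite differences and evaluates the piecewise force. It is a minimal sketch under stated assumptions, not the plugin's implementation: the function names are hypothetical, the lookup uses integer voxel indices without interpolation, and the feedback direction is assumed to be the normalized SDF gradient (which points away from the anatomy); the sign convention in the actual system may differ. Equation (3) is not covered here.

```python
import numpy as np


def sdf_direction(sdf, idx, spacing=1.0):
    """Unit SDF gradient at an interior voxel via central finite differences.

    Assumption: the gradient (pointing away from the anatomy) is used as the
    feedback direction; the real system's sign convention may differ.
    """
    i, j, k = idx
    grad = np.array([
        sdf[i + 1, j, k] - sdf[i - 1, j, k],
        sdf[i, j + 1, k] - sdf[i, j - 1, k],
        sdf[i, j, k + 1] - sdf[i, j, k - 1],
    ]) / (2.0 * spacing)
    norm = np.linalg.norm(grad)
    return grad / norm if norm > 0.0 else np.zeros(3)


def sdf_force(d, d_hat, f_max, tau_0, tau_f, lam):
    """Force from one anatomy, following the three cases of Eq. (1)."""
    if d < tau_0:
        magnitude = f_max                                # fully constrained zone
    elif d < tau_f:
        magnitude = f_max * np.exp(lam * (tau_0 - d))    # exponential decay zone
    else:
        magnitude = 0.0                                  # outside the haptic zone
    return magnitude * np.asarray(d_hat, dtype=float)


def total_sdf_force(queries, hand_force, tau_0, tau_f, lam):
    """Eq. (2): sum over anatomies, with F_max = |F_H| as stated in the text.

    `queries` is a list of (distance, unit_direction) pairs, one per anatomy.
    """
    f_max = np.linalg.norm(hand_force)
    total = np.zeros(3)
    for d, d_hat in queries:
        total += sdf_force(d, d_hat, f_max, tau_0, tau_f, lam)
    return total


# Toy data (hypothetical): a planar "anatomy" at k = 5 on a 1 mm grid.
sdf = np.fromfunction(lambda i, j, k: k - 5.0, (10, 10, 10))
idx = (3, 3, 7)
d = sdf[idx]                       # distance of this voxel from the surface
d_hat = sdf_direction(sdf, idx)    # unit gradient at this voxel
force = total_sdf_force([(d, d_hat)], hand_force=np.array([0.0, 0.0, -2.0]),
                        tau_0=1.0, tau_f=4.0, lam=1.0)
```

The thresholds in the toy call mirror the dental stone settings listed in Table I; a single threshold pair is shared across anatomies here for brevity, whereas Equation (1) allows per-anatomy values.
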
We further develop our system to generate a compliance force that is later utilized in the admittance control for the collaborative robot to enable haptic feedback. First, we created a virtual robot that accurately represents the real robotic system. Next, we import the virtual robot, the anatomy from the CT, and the SDF for all critical anatomies into this simulator. The SDF-based active constraint explained in the previous section is enforced using the plugin functionality (SDF assistance plugin), and the virtual robot motion is synchronized by the robot control plugin.

2) Collaborative robotic system: The Robotic ENT (Ear, Nose, and Throat) Microsurgery System (REMS) is a cooperatively controlled robot created specifically for use within otolaryngology–head and neck surgery [22], [23]. For this study, we use a pre-clinical version developed by Galen Robotics (Galen Robotics, Baltimore, MD). REMS offers a significant benefit in instrument stability in head and neck surgery. The cooperative mode of the robot uses the admittance control law:

$$
\arg\min_{\Delta q} \left( \left| G F_H - J \Delta q \right| \right) \tag{4}
$$

where $G \in \mathbb{R}^{6 \times 6}$ is a diagonal matrix that represents the admittance gains, $J \in \mathbb{R}^{6 \times m}$ is a Jacobian, and $\Delta q \in \mathbb{R}^m$ is a joint velocity vector. Thus, the incremental motion of the robot end-effector, $\Delta x \in \mathbb{R}^6$, is expressed as $\Delta x = J \Delta q$. We introduce a compliance force term, denoted as $F_C$, to the admittance control for the robot's motion. As a result, the updated optimization problem can be expressed as:

$$
\arg\min_{\Delta q} \left( \left| G (F_H + F_C) - J \Delta q \right| \right) \tag{5}
$$

E. Calibration and registration

Our system requires accurate spatial relationships between the physical system components, for which we employ state-of-the-art registration and calibration algorithms.

For better transparency of the collaborative robot, it is critical to isolate the force applied by the user. This necessitates the exclusion of external forces, a primary example being the gravitational impact on the drill.
Addressing this concern is a challenging problem due to the flexible cable of the drill, which exhibits non-linearity. We employed a model for this external force based on a Bernstein polynomial and incorporated gravity compensation as described in [24].

The registration between the actual environment and the simulation is fundamental in this work, especially in locating the drill tip with respect to the robot. To achieve sub-millimeter precision, we utilize an optical tracker system, the FusionTrack 500 (Atracsys, Switzerland) (Fig. 4). It offers a mean tracking error of 0.02 (±0.02) mm [21].

Fig. 4. Frame transform diagram. $F_{OT}$ represents the frame for the optical tracker. $F_{drill}$ and $F_{ref}$ are the frames attached to the drill marker and reference marker, respectively. $F_{EE}$ is the frame for the robot end-effector.

We perform a hand-eye calibration to calculate the transformation between $F_{EE}$ and $F_{drill}$. This hand-eye calibration is followed by a pivot calibration to get the position of the drill tip, $F_{tip}$, with respect to the marker attached to the drill ($F_{drill}$). To render haptic feedback that aligns with real-time anatomical positioning, the anatomy is registered with respect to the robot using a point-set registration technique.

IV. EVALUATION AND RESULTS

To demonstrate the applicability of our system and assess its efficacy, two experiments were performed: one utilizing a phantom made of dental stone and the other involving a cadaver temporal bone. The dental stone experiment primarily served to validate our system in a controlled setting and also provided an acclimation period before the temporal bone experiment.
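
The pivot calibration step mentioned in Section III-E can be illustrated with the standard least-squares formulation: while the tool pivots about a fixed point, every tracked marker pose $(R_i, p_i)$ satisfies $R_i t_{tip} + p_i = p_{pivot}$, which stacks into a linear system in the unknown tip offset and pivot point. The sketch below is a generic textbook version, not necessarily the implementation used in this work; the function name and the pose inputs are hypothetical.

```python
import numpy as np


def pivot_calibration(rotations, translations):
    """Least-squares pivot calibration.

    Solves R_i @ t_tip + p_i = p_pivot for all poses i by stacking
    [R_i, -I] @ [t_tip; p_pivot] = -p_i.

    Returns the tip offset in the marker frame, the pivot point in the
    tracker frame, and the RMSE of the per-pose residuals.
    """
    n = len(rotations)
    A = np.zeros((3 * n, 6))
    b = np.zeros(3 * n)
    for i, (R, p) in enumerate(zip(rotations, translations)):
        A[3 * i:3 * i + 3, :3] = R
        A[3 * i:3 * i + 3, 3:] = -np.eye(3)
        b[3 * i:3 * i + 3] = -np.asarray(p, dtype=float)
    x, *_ = np.linalg.lstsq(A, b, rcond=None)
    t_tip, p_pivot = x[:3], x[3:]
    residuals = (A @ x - b).reshape(n, 3)
    rmse = float(np.sqrt(np.mean(np.sum(residuals ** 2, axis=1))))
    return t_tip, p_pivot, rmse
```

The poses need to span rotations about more than one axis; otherwise the stacked system is rank-deficient and the tip offset is not uniquely determined.
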

TABLE I. HAPTIC-RELATED CONTROL PARAMETERS

| Experiment | $\tau_0$ [mm] | $\tau_f$ [mm] | $\lambda$ |
|---|---|---|---|
| Dental stone experiment | 1.0 | 4.0 | 1.0 |
| Temporal bone experiment | 0.5 | 4.0 | 2.0 |

$\tau_0$: distance where the unpreferred direction is fully constrained. $\tau_f$: distance where the proposed haptic feedback activates. $\lambda$: decay constant for the proposed haptic feedback.

The relevant parameters for the proposed haptic feedback are listed in Table I. During the dental stone experiment, we opted for $\tau_0 = 1$ mm, which was subsequently reduced to $\tau_0 = 0.5$ mm in the temporal bone experiments. Furthermore, $\lambda$ was also tuned for the cadaveric experiment to ensure that the surgeons could approach closer to the critical anatomy.

A. Experiment setup

To prepare for the experiment, we first affixed registration pins to the cadaveric temporal bones. High-resolution CBCT scans (Brainlab LoopX, 0.26 mm³ voxel size) were then taken for each temporal bone. Following this, we used 3D Slicer to annotate critical structures and the locations of the registration pins. These annotated anatomical structures were later used to create the patient anatomical model and constraints for our system.

Prior to the experiment, the temporal bone was securely positioned within a temporal bone holder. The holder was then firmly anchored to a surgical table to prevent any inadvertent movement. Tracking markers were affixed to both the surgical drill and the temporal bone holder to monitor any movements or registration discrepancies. Next, we initiated the calibration and registration process. The hand-eye calibration process resulted in an RMSE of approximately 0.2 mm for translation and 0.3 degrees for rotation. For pivot calibration, we used a 2 mm drill tip, achieving an RMSE value of 0.03 mm. Finally, the temporal bone was registered to the virtual simulation using a point-set registration method.
These points were carefully sampled using the surgical drill, leading to an RMSE of less than 0.5 mm. During the experiment, surgeons conducted drilling procedures around critical structures under a surgical microscope (Haag-Streit, Köniz, Switzerland) equipped with stereo vision. Following the experiment, CT scans were taken for postoperative evaluation (Fig. 5).

Fig. 5. Experimental setup. The surgeon uses the surgical drill attached to the robot under microscopic view. The optical tracker was located next to the robot to monitor both the drill and the anatomy.

B. Dental stone experiment

1) Experiment design: To validate the safety-enhancing capabilities of our proposed method, we designed a phantom experiment using dental stone powder. A 3D printed bony labyrinth, an inner ear structure, was embedded within the dental stone phantom (as depicted in Fig. 6). To ensure clear differentiation, the labyrinthine structure was painted in green, with the paint mixed with CT opaque material to facilitate high-quality segmentation.

A medical student and two attending surgeons were tasked with delicately skeletonizing the superior part of the labyrinth, aiming to avoid any damage. They were given a time limit of 5 minutes to complete this task, performing it both with and without the assistance of our proposed method. We randomized the order to mitigate the learning effect. CT scans were taken before and after the experiment, and any damage incurred to the labyrinth was assessed.

Fig. 6. Example visualization of the dental stone experiment with a medical student. (Left) 3D printed labyrinth. (Middle) Result without assistance; part of the labyrinth was inadvertently drilled. (Right) Result with assistance; no injury to the labyrinth was observed.
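
One way to quantify drilled volume or anatomical damage from pre- and post-experiment CT scans is to compare binary segmentation masks voxel by voxel. The sketch below assumes both scans have already been registered and resampled onto the same voxel grid; the function name and mask inputs are hypothetical, and the paper does not state that its reported volumes were computed this way.

```python
import numpy as np


def removed_volume_mm3(pre_mask, post_mask, voxel_size_mm):
    """Volume of material present before drilling but absent afterwards.

    pre_mask, post_mask: boolean arrays on the same voxel grid (assumed
    already registered). voxel_size_mm: (dx, dy, dz) voxel edge lengths in mm.
    """
    pre = np.asarray(pre_mask, dtype=bool)
    post = np.asarray(post_mask, dtype=bool)
    if pre.shape != post.shape:
        raise ValueError("masks must share the same voxel grid")
    removed = pre & ~post                      # voxels lost between the scans
    voxel_volume = float(np.prod(voxel_size_mm))
    return int(removed.sum()) * voxel_volume
```

Passing the masks of a critical structure would give a damage estimate, while passing the masks of the surrounding material would give a drilled-volume estimate.
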

TABLE II. RESULTS FROM DENTAL STONE EXPERIMENT

| Subject | Damage on anatomy [mm³], w/o VF | Damage on anatomy [mm³], w/ VF | Drilled volume [mm³], w/o VF | Drilled volume [mm³], w/ VF |
|---|---|---|---|---|
| S1 | 0.58 | 0.0 | 878.4 | 1406.4 |
| A1 | 0.0 | 0.0 | 694.7 | 726.6 |
| A2 | 0.0 | 0.0 | 511.3 | 536.8 |

2) Result and discussion: Table II shows the quantitative results for the dental stone experiment. The results show that the medical student inadvertently breached a critical structure when operating without haptic feedback, whereas no breaches occurred with haptic assistance (Fig. 6). In contrast, experienced surgeons were able to avoid the critical structure regardless of the presence of the haptic feedback. Further analysis of the drilled volume suggests that experienced surgeons approach the critical structure more carefully than medical trainees. The drilled volume remained in a similar range for both surgeons with and without haptic assistance. Nevertheless, the medical student greatly benefited from the proposed haptic assistance, presumably becoming more confident in safe drilling. This is evident as the volume drilled increased by approximately 1.6 times compared to when no haptic assistance was enforced.

C. Temporal bone experiment

1) Experimental design: To further assess the efficacy of haptic assistance, we conducted experiments on cadaveric temporal bones with the two experienced surgeons. This cadaveric study was designed to closely replicate real-life surgical scenarios, thereby facilitating a more accurate evaluation of the proposed method. In this experiment, the surgeons were tasked with the challenging process of skeletonizing critical structures under conditions that severely limited their visual cues. This was achieved by introducing water into the surgical site during the drilling procedure (as depicted in Fig. 7). This deliberate reduction in visibility within the complex temporal bone anatomy presented challenges akin to the irrigation process encountered in actual surgical scenarios.
It is worth noting that the temporal bone had previously undergone mastoidectomy, emphasizing that the drilling primarily occurred within the proximity of critical structures.

Fig. 7. Example microscope view. (a) Normal view, (b) with occlusion.

2) Results and discussion: As demonstrated in our earlier dental stone experiments, experienced surgeons exhibited a high level of precision and were adept at avoiding damage to critical structures under normal conditions in the cadaveric experiment. However, when confronted with such limited visibility, characteristic of the intricate temporal bone anatomy, their primary reliance shifted to haptic feedback while navigating around critical structures. Despite those challenging conditions, both surgeons successfully navigated and drilled around the intricate anatomical structures without causing any damage, thereby highlighting the practicality and effectiveness of our haptic assistance system. As depicted in Fig. 8, the results indicate that the surgeons successfully skeletonized the facial nerve and the sigmoid sinus without any damage. This result shows that there is significant potential for this system to be effectively implemented in actual surgical procedures. Such potential not only highlights the system's technical capabilities but also underscores its relevance in advancing surgical precision and safety using the collaborative robot.

Fig. 8. Comparison between pre- and post-operative CT scans for closest anatomies. First surgeon (a-d). Second surgeon (e-f). The second surgeon only approached the facial nerve. Both surgeons were able to skeletonize the facial nerve and sigmoid sinus without damaging them. The red lines in the figure represent the closest distance between the critical structure and the exposed surface.

V. SUMMARY AND FUTURE WORK

In this work, we have developed a collaborative robot system to enhance situational awareness in skull base surgery by enhancing human perception through safety-driven haptic assistance. Our system ensures safe drill manipulation by providing progressively increasing haptic feedback as the drill approaches critical structures. Initial experiments using dental stone phantoms and cadaveric temporal bones demonstrate the system's feasibility and effectiveness for both novice and experienced surgeons.

While our work represents a significant advancement, certain limitations warrant attention. First, our current pipeline relied on manual segmentation for generating patient anatomical models and anatomical constraints. Future work involves integrating an automated segmentation pipeline [25] to streamline this process. Furthermore, our system relies on high-resolution radiological scans to create precise anatomical models and constraints. In cases where high-resolution patient scans are unavailable, recent methods like those presented in [26] can be considered as an alternative for the SDF. Second, our experiments employed invasive fiducial markers for registration, ensuring a high level of accuracy. Ongoing efforts concentrate on integrating vision-based tracking and registration algorithms to minimize invasiveness and enhance precision. Lastly, despite our promising initial experimental results, the system's performance necessitates validation through extensive user studies conducted on a larger scale. This remains a focus of our future research endeavors.

In summary, our work contributes to the advancement of robotic integration in skull base procedures, paving the way for enhancing surgical precision while preserving the indispensable role and expertise of the surgeon.

ACKNOWLEDGMENTS AND DISCLOSURES

Hisashi Ishida was supported in part by the ITO Foundation for International Education Exchange, Japan. Nimesh Nagururu is supported in part by NCATS TL1 Grant TR003100. This work was also supported in part by a research contract from Galen Robotics, by NIDCD K08 Grant DC019708, by a research agreement with the Hong Kong Multi-Scale Medical Robotics Centre, and by Johns Hopkins University internal funds. Under a license agreement between Galen Robotics, Inc and the Johns Hopkins University, Russell H. Taylor and Johns Hopkins University are entitled to royalty distributions on technology that may possibly be related to that discussed in this publication. Dr. Taylor also is a paid consultant to and owns equity in Galen Robotics, Inc. This arrangement has been reviewed and approved by Johns Hopkins University in accordance with its conflict-of-interest policies.

SUPPLEMENTARY INFORMATION

A supplementary video is provided with the submission. For more information, visit the project repository.

REFERENCES

[1] A. T. Meybodi, G. Mignucci-Jiménez, M. T. Lawton, J. K. Liu, M. C. Preul, and H. Sun, "Comprehensive microsurgical anatomy of the middle cranial fossa: Part I - Osseous and meningeal anatomy," Frontiers in Surgery, vol. 10, 2023.
[2] B. J. Gantz, "Evolution of otology and neurotology education in the United States," Otology & Neurotology, vol. 39, no. 4S, pp. S64–S68, 2018.
[3] N. Zagzoog and V. X. Yang, "State of robotic mastoidectomy: literature review," World Neurosurgery, vol. 116, pp. 347–351, 2018.
[4] M. Bennett, F. Warren, and D. Haynes, "Indications and technique in mastoidectomy," Otolaryngologic Clinics of North America, vol. 39, no. 6, pp. 1095–1113, 2006.
[5] A. Danilchenko, R. Balachandran, J. L. Toennies, S. Baron, B. Munske, J. M. Fitzpatrick, T. J. Withrow, R. J. Webster III, and R. F. Labadie, "Robotic mastoidectomy," Otology & Neurotology: official publication of the American Otological Society, American Neurotology Society [and] European Academy of Otology and Neurotology, vol. 32, no. 1, p. 11, 2011.
[6] H. Lim, N. Matsumoto, B. Cho, J. Hong, M. Yamashita, M. Hashizume, and B.-J. Yi, "Semi-manual mastoidectomy assisted by human–robot collaborative control–a temporal bone replica study," Auris Nasus Larynx, vol. 43, no. 2, pp. 161–165, 2016.
[7] S. A. Bowyer, B. L. Davies, and F. R. y Baena, "Active constraints/virtual fixtures: A survey," IEEE Transactions on Robotics, vol. 30, no. 1, pp. 138–157, 2013.
[8] N. Simaan, R. H. Taylor, and H. Choset, "Intelligent surgical robots with situational awareness," Mechanical Engineering, vol. 137, no. 09, pp. S3–S6, 2015.
[9] A. Attanasio, B. Scaglioni, E. De Momi, P. Fiorini, and P. Valdastri, "Autonomy in surgical robotics," Annual Review of Control, Robotics, and Autonomous Systems, vol. 4, pp. 651–679, 2021.
[10] N. P. Dillon, L. Fichera, P. S. Wellborn, R. F. Labadie, and R. J. Webster, "Making robots mill bone more like human surgeons: using bone density and anatomic information to mill safely and efficiently," in 2016 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2016, pp. 1837–1843.
[11] A. S. Ding, S. Capostagno, C. R. Razavi, Z. Li, R. H. Taylor, J. P. Carey, and F. X. Creighton, "Volumetric accuracy analysis of virtual safety barriers for cooperative-control robotic mastoidectomy," Otology & Neurotology: official publication of the American Otological Society, American Neurotology Society [and] European Academy of Otology and Neurotology, vol. 42, no. 10, p. e1513, 2021.
[12] T. Xia, C. Baird, G. Jallo, K. Hayes, N. Nakajima, N. Hata, and P. Kazanzides, "An integrated system for planning, navigation and robotic assistance for skull base surgery," Intl J Medical Robotics and Computer Assisted Surgery, vol. 4, no. 4, pp. 321–330, Dec 2008.
[13] M. H. Yoo, H. S. Lee, C. J. Yang, S. H. Lee, H. Lim, S. Lee, B.-J. Yi, and J. W. Chung, "A cadaver study of mastoidectomy using an image-guided human–robot collaborative control system," Laryngoscope Investigative Otolaryngology, vol. 2, no. 5, pp. 208–214, 2017.
[14] J. J. Abbott, P. Marayong, and A. M. Okamura, "Haptic virtual fixtures for robot-assisted manipulation," in Robotics Research: Results of the 12th International Symposium ISRR. Springer, 2007, pp. 49–64.
[15] H. Ishida, J. A. Barragan, A. Munawar, Z. Li, P. Kazanzides, M. Kazhdan, D. Trakimas, F. X. Creighton, and R. H. Taylor, "Improving surgical situational awareness with signed distance field: a pilot study in virtual reality," arXiv preprint, 2023.
[16] A. Munawar, Y. Wang, R. Gondokaryono, and G. S. Fischer, "A real-time dynamic simulator and an associated front-end representation format for simulating complex robots and environments," in 2019 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Nov 2019, pp. 1875–1882.
[17] A. Fedorov, R. Beichel, J. Kalpathy-Cramer, J. Finet, J.-C. Fillion-Robin, S. Pujol, C. Bauer, D. Jennings, F. Fennessy, M. Sonka, J. Buatti, S. Aylward, J. V. Miller, S. Pieper, and R. Kikinis, "3D Slicer as an image computing platform for the quantitative imaging network," Magnetic Resonance Imaging, vol. 30, no. 9, pp. 1323–1341, 2012, Quantitative Imaging in Cancer. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0730725X12001816
[18] Y.-H. Su, A. Munawar, A. Deguet, A. Lewis, K. Lindgren, Y. Li, R. H. Taylor, G. S. Fischer, B. Hannaford, and P. Kazanzides, "Collaborative Robotics Toolkit (CRTK): Open software framework for surgical robotics research," in 2020 Fourth IEEE International Conference on Robotic Computing (IRC), 2020, pp. 48–55.
[19] T. Saito and J.-I. Toriwaki, "New algorithms for euclidean distance transformation of an n-dimensional digitized picture with applications," Pattern Recognition, vol. 27, no. 11, pp. 1551–1565, Nov. 1994.
[20] A. Munawar, Z. Li, N. Nagururu, D. Trakimas, P. Kazanzides, R. H. Taylor, and F. X. Creighton, "Fully immersive virtual reality for skull-base surgery: Surgical training and beyond," International Journal of Computer Assisted Radiology and Surgery (IJCARS), 2023.
[21] H. Shu, R. Liang, Z. Li, A. Goodridge, X. Zhang, H. Ding, N. Nagururu, M. Sahu, F. X. Creighton, R. H. Taylor, et al., "Twin-S: a digital twin for skull base surgery," International Journal of Computer Assisted Radiology and Surgery, pp. 1–8, 2023.
[22] K. C. Olds, P. Chalasani, P. Pacheco-Lopez, I. Iordachita, L. M. Akst, and R. H. Taylor, "Preliminary evaluation of a new microsurgical robotic system for head and neck surgery," in 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE, 2014, pp. 1276–1281.
[23] K. Olds, "Robotic assistant systems for otolaryngology-head and neck surgery," Ph.D. dissertation, Johns Hopkins University, 2015.
[24] Y. Chen, A. Goodridge, M. Sahu, A. Kishore, S. Vafaee, H. Mohan, K. Sapozhnikov, F. X. Creighton, R. H. Taylor, and D. Galaiya, "A force-sensing surgical drill for real-time force feedback in robotic mastoidectomy," International Journal of Computer Assisted Radiology and Surgery, pp. 1–8, 2023.
[25] A. S. Ding, A. Lu, Z. Li, M. Sahu, D. Galaiya, J. H. Siewerdsen, M. Unberath, R. H. Taylor, and F. X. Creighton, "A self-configuring deep learning network for segmentation of temporal bone anatomy in cone-beam CT imaging," Otolaryngology–Head and Neck Surgery, 2023.
[26] H. Zhang, L. Zhu, J. Shen, and A. Song, “Implicit neural field guidance for teleoperated robot-assisted surgery,” in 2023 IEEE International Conference on Robotics and Automation (ICRA), 2023, pp. 6866–6872.
