Joint Hierarchical Priors and Adaptive Spatial Resolution for Efficient Neural Image Compression

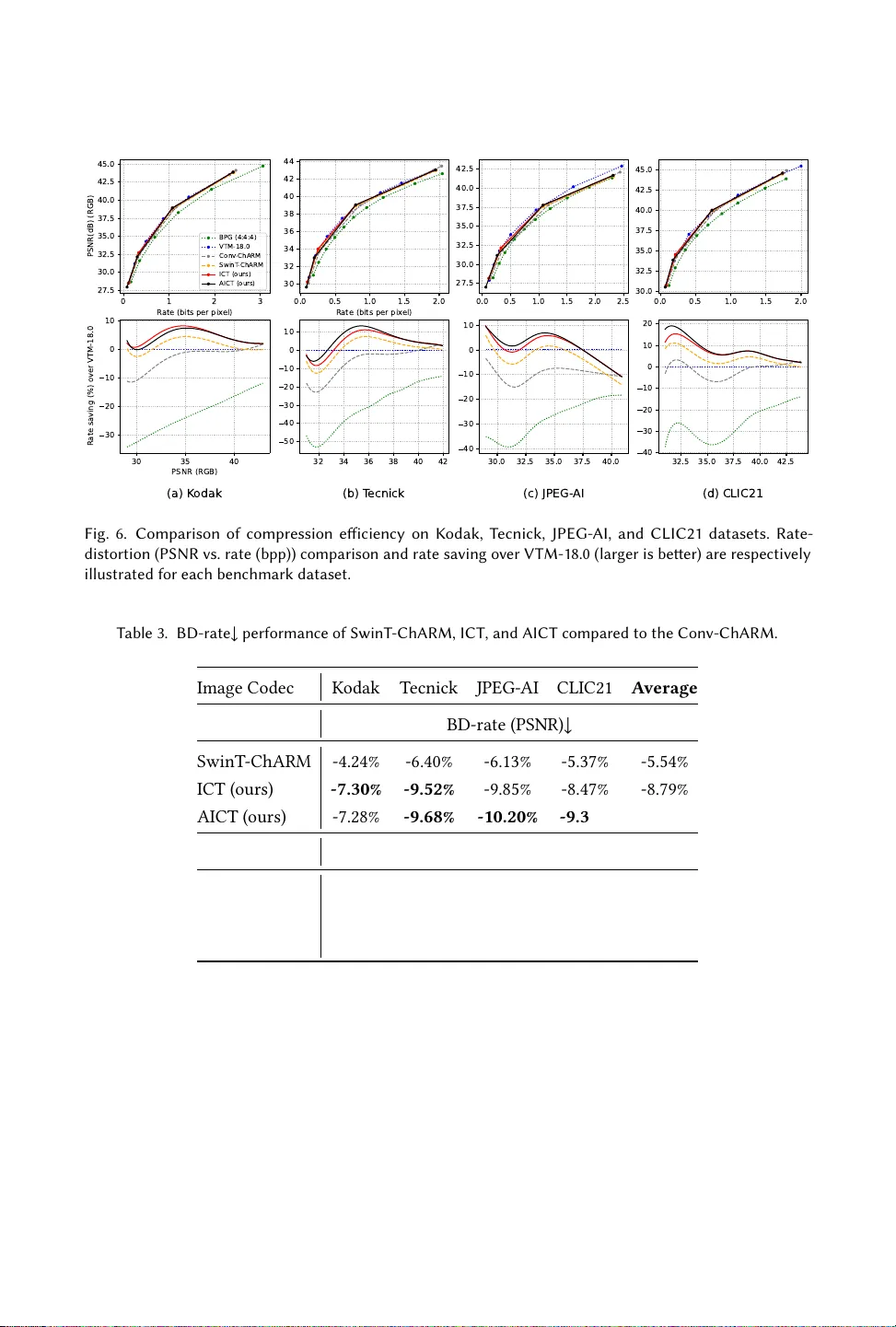

The performance of neural image compression (NIC) has steadily improved thanks to recent lines of research, now matching or outperforming state-of-the-art conventional codecs. Despite significant progress, current NIC methods still rely on ConvNe…

Authors: Ahmed Ghorbel, Wassim Hamidouche, Luce Morin