A Discrete Adapted Hierarchical Basis Solver For Radial Basis Function Interpolation

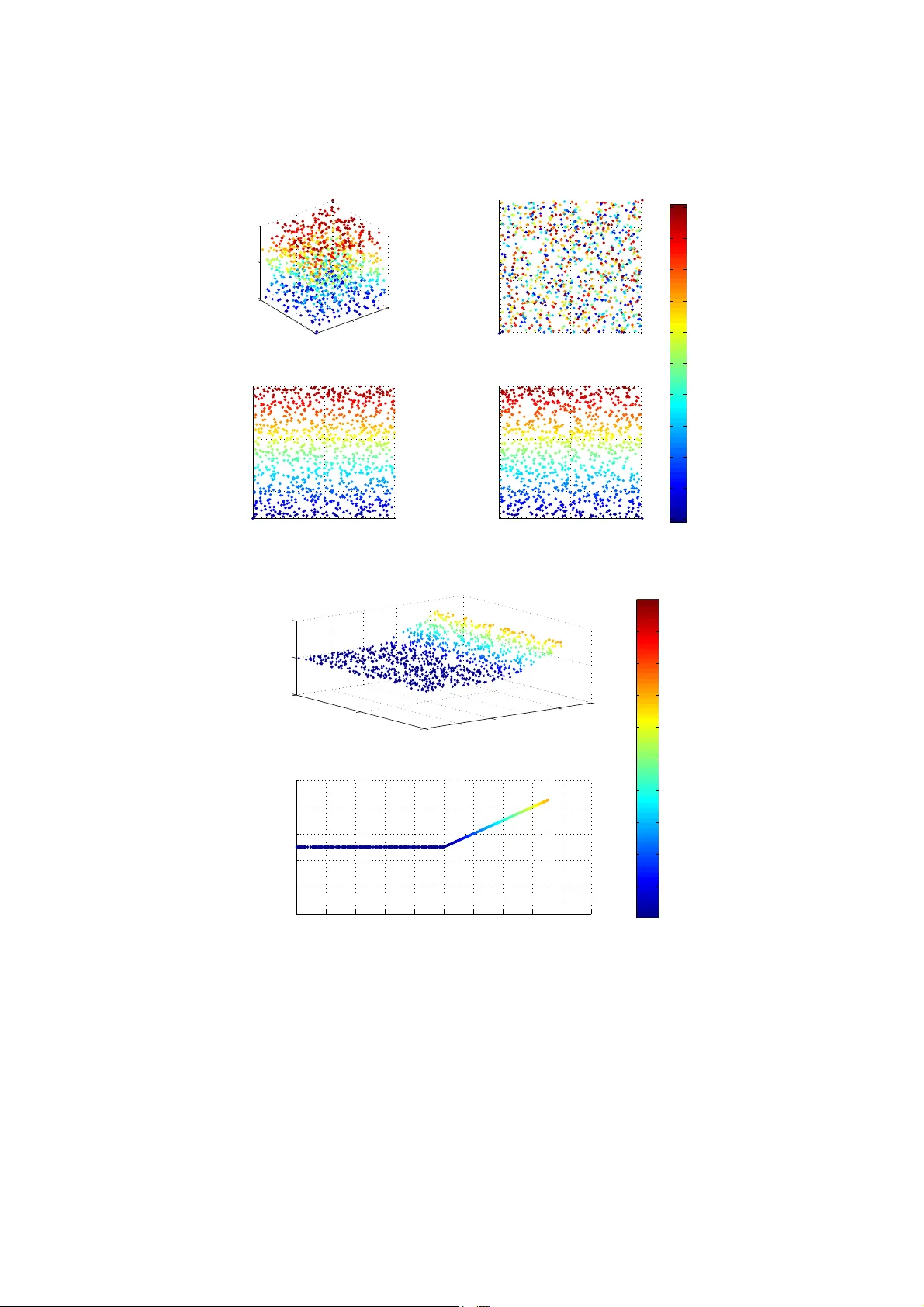

In this paper we develop a discrete Hierarchical Basis (HB) to efficiently solve the Radial Basis Function (RBF) interpolation problem with variable polynomial order. The HB forms an orthogonal set and is adapted to the kernel seed function and the p…
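For orientation, the RBF interpolation problem with a polynomial term is commonly posed as a dense saddle-point system. The sketch below is a minimal illustration of that baseline problem only, not the hierarchical basis solver developed in the paper. The 1D setting, the cubic kernel `phi(r) = r^3`, the direct dense solve, and the function names `rbf_interpolate` and `rbf_evaluate` are all assumptions made for this example.

```python
import numpy as np


def rbf_interpolate(x, f, degree=1):
    """Solve the dense 1D RBF interpolation system with a polynomial tail.

    Assumptions (not from the paper): cubic kernel phi(r) = r^3, monomial
    basis of the given degree (degree >= 1 for this kernel), direct solve.
    Returns (u, c): RBF weights and polynomial coefficients.
    """
    x = np.asarray(x, dtype=float)
    f = np.asarray(f, dtype=float)
    n = x.size
    K = np.abs(x[:, None] - x[None, :]) ** 3        # kernel matrix K_ij = phi(|x_i - x_j|)
    P = np.vander(x, degree + 1, increasing=True)   # columns 1, x, ..., x^degree
    m = P.shape[1]
    # Saddle-point system: [K P; P^T 0] [u; c] = [f; 0]
    A = np.block([[K, P], [P.T, np.zeros((m, m))]])
    rhs = np.concatenate([f, np.zeros(m)])
    sol = np.linalg.solve(A, rhs)
    return sol[:n], sol[n:]


def rbf_evaluate(x_nodes, u, c, x_eval):
    """Evaluate s(x) = sum_j u_j phi(|x - x_j|) + sum_k c_k x^k."""
    x_nodes = np.asarray(x_nodes, dtype=float)
    x_eval = np.asarray(x_eval, dtype=float)
    K = np.abs(x_eval[:, None] - x_nodes[None, :]) ** 3
    P = np.vander(x_eval, c.size, increasing=True)
    return K @ u + P @ c


# Usage: interpolate samples of sin(2*pi*x) at 8 nodes.
nodes = np.linspace(0.0, 1.0, 8)
values = np.sin(2.0 * np.pi * nodes)
u, c = rbf_interpolate(nodes, values, degree=1)
```

The constraint block `P.T @ u = 0` makes the RBF weights orthogonal to the polynomial space, and the polynomial degree is the parameter that varies in the "variable polynomial order" setting. A direct solve like this costs O(N^3) operations, which is the motivation for faster solvers on large node sets.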

Authors: Julio Enrique Castrillón-Candás, Jun Li