Simplified decision making in the belief space using belief sparsification

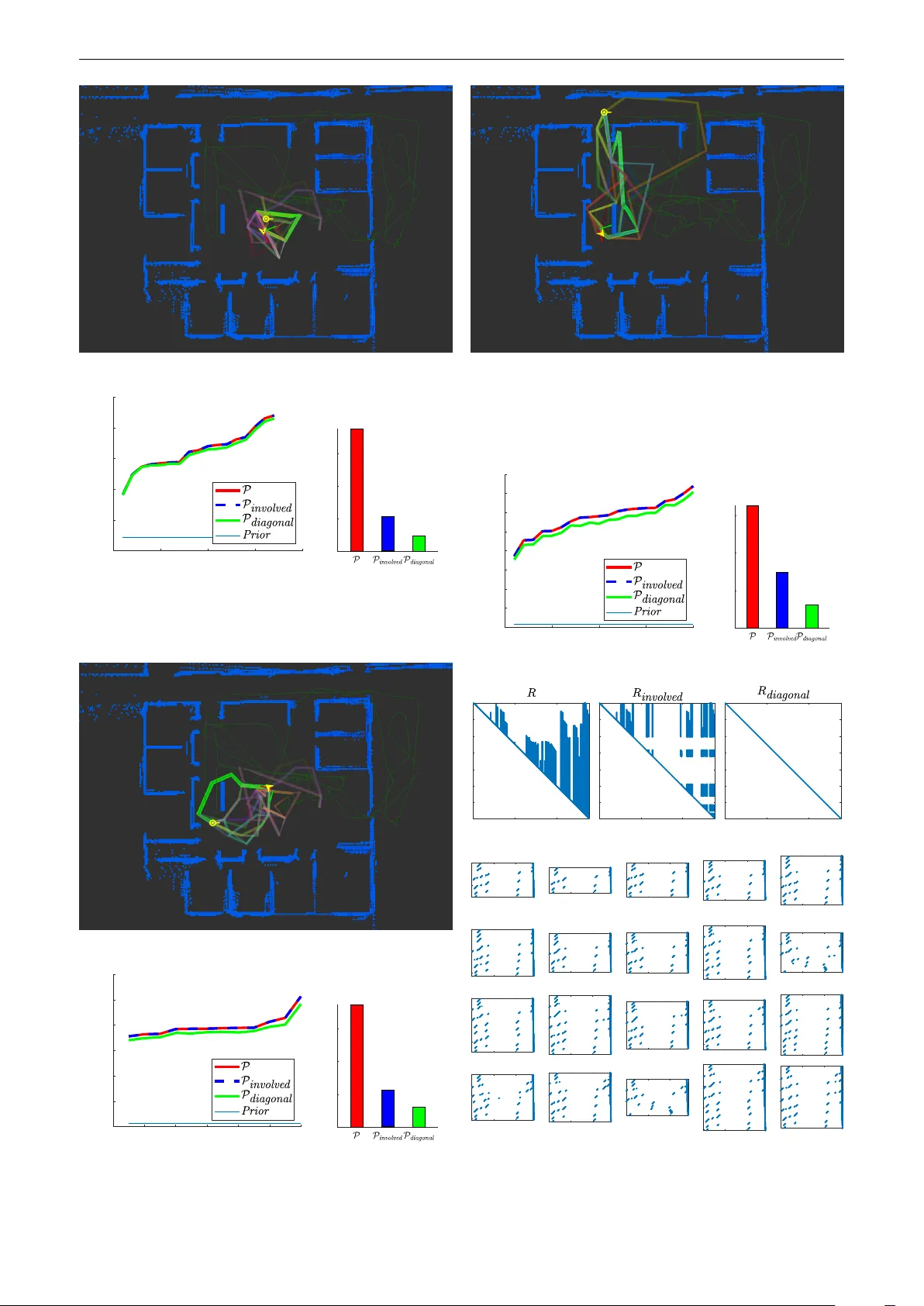

In this work, we introduce a new and efficient approach to decision making under uncertainty, which can be formulated as decision making in a belief space over a possibly high-dimensional state space. Typically, to solve a d…

Authors: Khen Elimelech, Vadim Indelman