Persistence B-Spline Grids: Stable Vector Representation of Persistence Diagrams Based on Data Fitting

Many attempts have been made in recent decades to integrate machine learning (ML) and topological data analysis. A prominent problem in applying persistent homology to ML tasks is finding a vector representation of a persistence diagram (PD), which i…

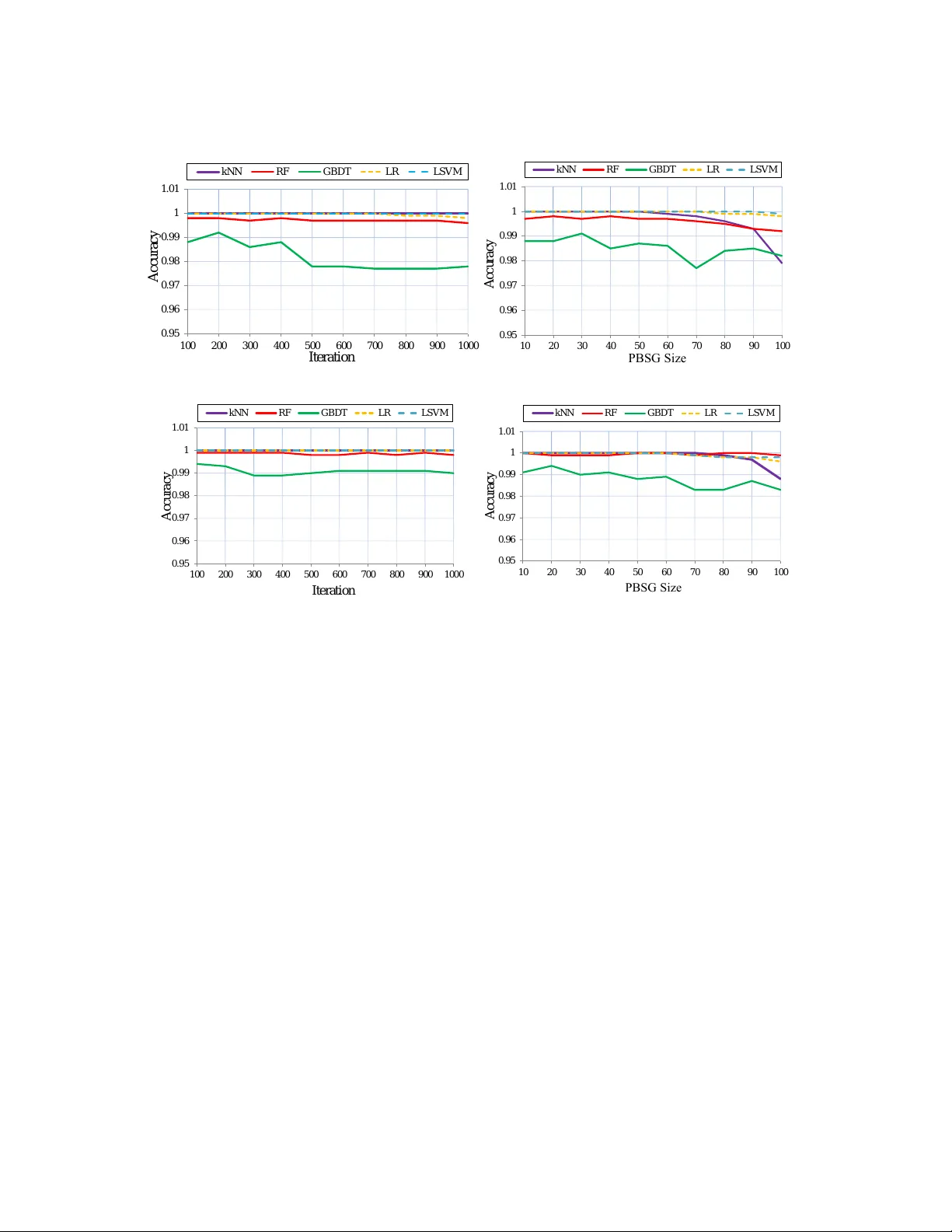

Authors: Zhetong Dong, Hongwei Lin, Chi Zhou