Stochastic-Sign SGD for Federated Learning with Theoretical Guarantees

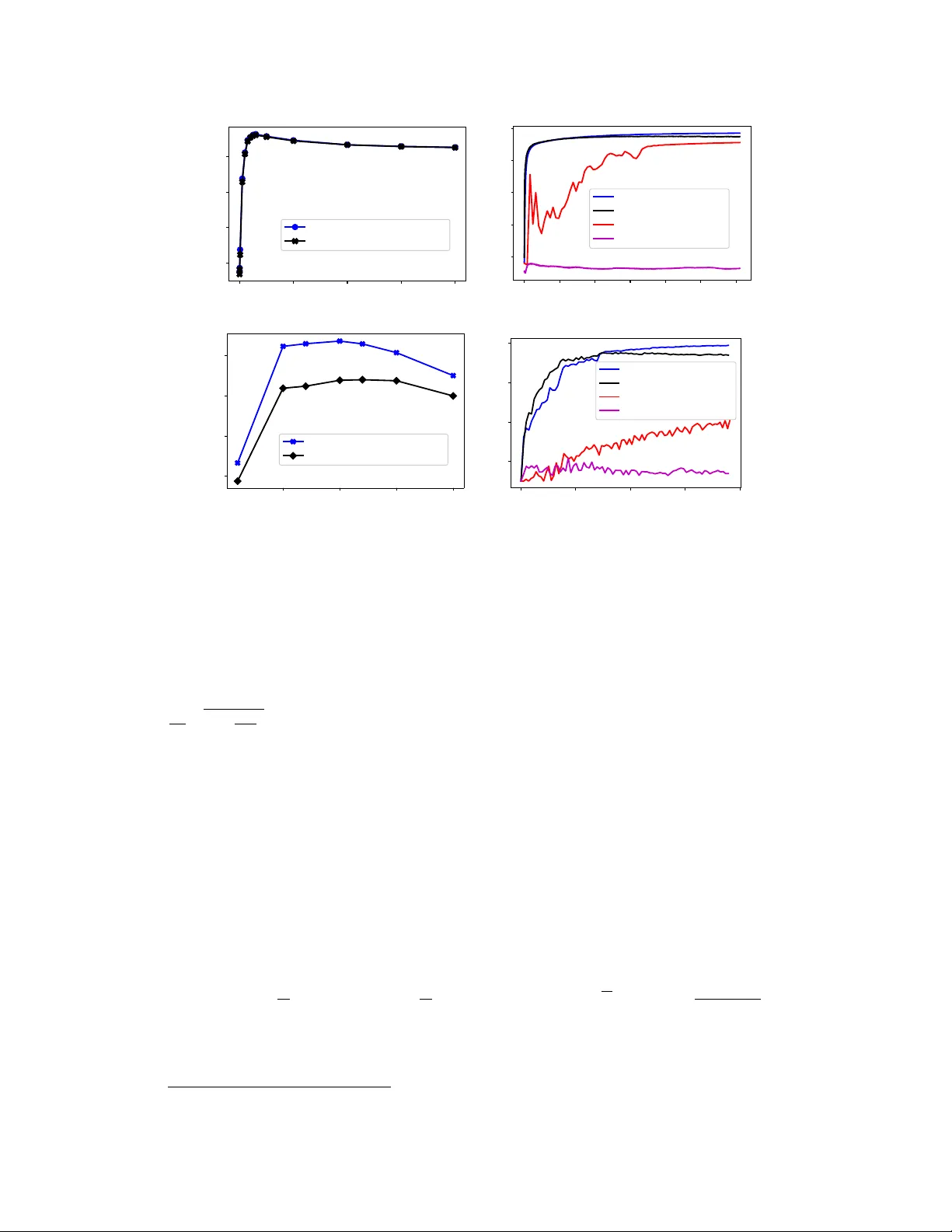

Federated learning (FL) has emerged as a prominent distributed learning paradigm. FL creates a pressing need for novel parameter estimation approaches that come with theoretical convergence guarantees and are also communication efficient, …

Authors: Richeng Jin, Yufan Huang, Xiaofan He